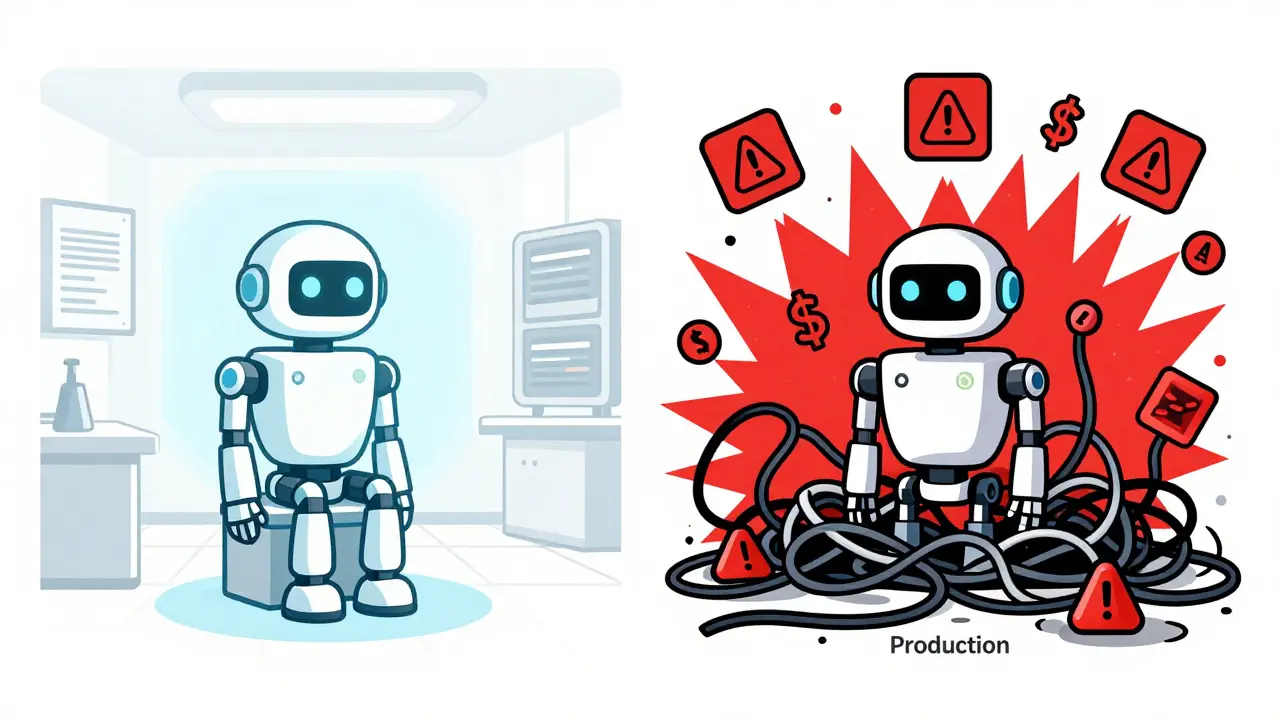

Most companies think they are ready for artificial intelligence until they try to put a Large Language Model (LLM) into their actual workflow. The shiny demo works perfectly in the lab, but once you hit "publish" and let real users interact with it, things get messy. You face latency spikes, unexpected costs, and data privacy risks that no one planned for.

The gap between a pilot project and a production-ready system is where most AI initiatives fail. According to recent industry analysis, 68% of Fortune 500 companies are currently running at least one LLM pilot, yet very few have successfully scaled these models across their entire organization without hitting major roadblocks. The difference isn't just about picking a smarter model; it’s about building a robust infrastructure that can handle the chaos of real-world usage.

Defining the Right Use Case First

Before you spend a dime on GPU clusters or API credits, you need to know exactly what problem you are solving. A common mistake is trying to use an LLM as a catch-all solution for every business process. This approach leads to bloated systems and wasted resources. Instead, focus on specific, high-impact areas where language understanding adds clear value.

Successful implementations usually start with three core categories:

- Customer Support Automation: Handling Tier 1 inquiries allows your human agents to focus on complex issues. Models here can resolve 40-60% of routine tickets automatically.

- Content Creation: Using AI to draft marketing copy, internal memos, or code snippets can reduce production time by 35-50%. This frees up creative teams to refine rather than create from scratch.

- Document Analysis: Legal, financial, and healthcare sectors benefit immensely from models that can process documents ten times faster than human teams, extracting key insights from massive datasets.

If your use case doesn’t fit neatly into one of these buckets, pause and reconsider. LLMs are powerful, but they are not universal. Deploying them for problems better solved by traditional software or simple rules engines will only add unnecessary complexity and cost.
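One practical way to follow this advice is to put a cheap, deterministic layer in front of the model and call the LLM only when no rule applies. The sketch below assumes a hypothetical `RULES` table of regex patterns and a caller-supplied `call_llm` function. Neither comes from any particular product.

```python
import re
from typing import Callable, Optional

# Hypothetical rules table: pattern -> canned answer for deterministic requests.
RULES = [
    (re.compile(r"\b(reset|forgot)\b.*\bpassword\b", re.IGNORECASE),
     "You can reset your password from the account settings page."),
    (re.compile(r"\bopening hours\b", re.IGNORECASE),
     "We are open 9:00 to 17:00, Monday to Friday."),
]


def answer_with_rules(query: str) -> Optional[str]:
    """Return the canned answer of the first matching rule, or None."""
    for pattern, reply in RULES:
        if pattern.search(query):
            return reply
    return None


def handle(query: str, call_llm: Callable[[str], str]) -> tuple[str, str]:
    """Try the rules engine first and call the LLM only when no rule applies.

    Returns (source, answer) so you can track how often the LLM is actually needed.
    """
    reply = answer_with_rules(query)
    if reply is not None:
        return "rules", reply
    return "llm", call_llm(query)


def fake_llm(query: str) -> str:
    # Stand-in for a real model call.
    return f"[LLM draft for: {query}]"


print(handle("I forgot my password", fake_llm)[0])              # rules
print(handle("Summarize this supplier contract", fake_llm)[0])  # llm
```

If most traffic ends up on the `"rules"` path, that is a strong hint the problem did not need an LLM in the first place.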

Choosing Your Deployment Architecture

Once you have a solid use case, you must decide where the model lives. This decision impacts your security, speed, and budget more than any other technical choice. There are four main paths, each with distinct trade-offs.

| Strategy | Best For | Pros | Cons | Estimated Cost |
|---|---|---|---|---|
| Cloud-Based | Rapid scaling, general tasks | Fast setup (2-4 weeks), handles 1,000+ concurrent requests | Data privacy concerns, ongoing API costs | Low initial, variable ongoing |
| On-Premises | Healthcare, finance, regulated industries | Total data sovereignty, compliance control | High hardware cost ($250k-$2M), maintenance burden | High upfront investment |
| Edge Deployment | Ultra-low latency apps (<100 ms) | Instant response, offline capability | Requires heavy model compression, lower accuracy | Medium hardware, low bandwidth |
| Hybrid | Balancing cost and security | Flexible routing, optimized resource use | Complex architecture, harder to manage | Variable |

For most enterprises starting out, a hybrid approach often makes the most sense. You can keep sensitive data processing on-premises using secure servers while offloading less critical, high-volume tasks to cloud providers. This balances the need for strict compliance with the desire for scalability. If you choose on-premises, make sure you have the right hardware, such as NVIDIA A100 GPUs with at least 80GB of VRAM, to run modern models efficiently.
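A hybrid setup hinges on a routing decision made before any model is called. The following is a minimal sketch that assumes two hypothetical endpoints (`ON_PREM_ENDPOINT`, `CLOUD_ENDPOINT`) and a deliberately naive regex check for sensitive content. A production system would use a dedicated PII or data-loss-prevention classifier instead.

```python
import re
from dataclasses import dataclass

# Hypothetical endpoints: replace with your own on-prem and cloud services.
ON_PREM_ENDPOINT = "http://llm.internal.example:8000"
CLOUD_ENDPOINT = "https://api.cloud-llm.example"

# Deliberately naive detectors, for illustration only.
SENSITIVE_PATTERNS = [
    re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),  # US SSN-style numbers
    re.compile(r"\b(patient|diagnosis|account number)\b", re.IGNORECASE),
]


@dataclass
class Request:
    text: str
    tenant_regulated: bool = False  # e.g. a healthcare or finance business unit


def is_sensitive(req: Request) -> bool:
    """A request is sensitive if its tenant is regulated or any pattern matches."""
    return req.tenant_regulated or any(p.search(req.text) for p in SENSITIVE_PATTERNS)


def route(req: Request) -> str:
    """Keep sensitive traffic on-premises; send everything else to the cloud."""
    return ON_PREM_ENDPOINT if is_sensitive(req) else CLOUD_ENDPOINT


print(route(Request("Draft a product launch email")))      # cloud endpoint
print(route(Request("Summarize this patient history")))    # on-prem endpoint
```

Routing on a per-tenant flag as well as on content means a regulated business unit stays on-premises even when a content pattern misses.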

Optimizing Costs and Performance

Running LLMs is expensive. Without optimization, your monthly bill can skyrocket unexpectedly. The key is to treat inference costs like any other operational expense: monitor it, optimize it, and automate savings.

One of the most effective techniques is model quantization. Reducing the precision of the model’s weights from standard 32-bit floating point to INT8 cuts memory and compute requirements by up to 75%, because each weight takes one byte instead of four; INT4 halves the footprint again. Remarkably, this often retains about 95% of the original accuracy. For edge deployments, quantization is essential, allowing models to shrink to under 2GB.
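The 75% figure follows from storage arithmetic: an FP32 weight takes four bytes and an INT8 weight takes one. The sketch below shows that arithmetic plus a simple symmetric, per-tensor INT8 quantizer in plain Python. It is illustrative only. Production toolchains typically use per-channel or per-group scales and calibration data to preserve accuracy, and the 7B and 3B model sizes used here are hypothetical.

```python
# Bytes needed to store one weight at each precision.
BYTES_PER_WEIGHT = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Approximate weight storage in GB (1 GB = 1e9 bytes).

    Ignores activations, the KV cache, and runtime overhead.
    """
    return num_params * BYTES_PER_WEIGHT[precision] / 1e9


def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor INT8: map [-max|w|, +max|w|] onto [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    codes = [max(-127, min(127, round(w / scale))) for w in weights]
    return codes, scale


def dequantize_int8(codes: list[int], scale: float) -> list[float]:
    """Recover approximate float weights; each error is roughly at most scale / 2."""
    return [c * scale for c in codes]


# Hypothetical model sizes.
print(weight_memory_gb(7e9, "fp32"))  # 28.0
print(weight_memory_gb(7e9, "int8"))  # 7.0 (a 75% reduction)
print(weight_memory_gb(3e9, "int4"))  # 1.5, under a 2GB edge budget

codes, scale = quantize_int8([0.5, -1.27, 0.0, 1.0])
restored = dequantize_int8(codes, scale)
```

The single `scale` per tensor is what makes this "per-tensor"; one outlier weight inflates the scale for every other weight, which is why finer-grained scales are common in practice.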

Another critical tactic is dynamic batching. Instead of processing user requests one by one, the system groups multiple incoming requests together and processes them simultaneously. This increases GPU utilization by 30-40%, significantly lowering the cost per token. Combining batching with strategic cloud pricing, such as spot instances for non-critical workloads, can reduce overall computing costs by 25-50% compared to standard on-demand rates.
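The core of dynamic batching is a rule for when to close a batch: either it is full, or its oldest request has waited long enough. The sketch below simulates that rule offline over arrival timestamps; `max_batch_size` and `max_wait_ms` are illustrative defaults, not tuned values. A live server would dispatch on a timer rather than waiting for the next arrival, and serving engines such as vLLM go further with continuous batching at the level of individual decoding steps.

```python
from dataclasses import dataclass


@dataclass
class Req:
    req_id: int
    arrival_ms: float


def form_batches(requests: list[Req], max_batch_size: int = 8,
                 max_wait_ms: float = 10.0) -> list[list[Req]]:
    """Group requests into batches.

    A batch opens with its first request and closes once it holds
    max_batch_size requests, or once the next request arrives more than
    max_wait_ms after the batch opened, whichever comes first.
    """
    batches: list[list[Req]] = []
    current: list[Req] = []
    for req in sorted(requests, key=lambda r: r.arrival_ms):
        if current and (len(current) >= max_batch_size
                        or req.arrival_ms - current[0].arrival_ms > max_wait_ms):
            batches.append(current)
            current = []
        current.append(req)
    if current:
        batches.append(current)
    return batches


arrivals = [0, 1, 2, 3, 20, 21]
batches = form_batches([Req(i, t) for i, t in enumerate(arrivals)], max_batch_size=3)
print([[r.req_id for r in b] for b in batches])  # [[0, 1, 2], [3], [4, 5]]
```

Six requests become three forward passes here. A larger `max_wait_ms` packs batches more fully at the cost of added latency, which is the main tuning trade-off.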

Keep an eye on the cost per thousand tokens. Hosted open-source models might cost around $0.50 per thousand tokens, while premium enterprise APIs can exceed $20. Knowing this baseline helps you set realistic budgets and configure alerts for when spending deviates from the norm.
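Turning a price per thousand tokens into a monthly budget is simple arithmetic, and the same baseline can drive an alert. In this sketch the 50-million-token monthly volume and the 20% tolerance are hypothetical; plug in your own measured usage.

```python
def monthly_cost(tokens_per_month: float, price_per_1k: float) -> float:
    """Dollar cost for a month of usage at a given price per thousand tokens."""
    return tokens_per_month / 1000 * price_per_1k


def spend_alert(actual: float, baseline: float, tolerance: float = 0.2) -> bool:
    """True when spend deviates from the baseline by more than `tolerance` (a fraction)."""
    return abs(actual - baseline) > tolerance * baseline


TOKENS_PER_MONTH = 50_000_000  # hypothetical volume

baseline_open = monthly_cost(TOKENS_PER_MONTH, 0.50)     # 25000.0
baseline_premium = monthly_cost(TOKENS_PER_MONTH, 20.0)  # 1000000.0
print(spend_alert(actual=31_000, baseline=baseline_open))  # True: 24% over
```

The 40x gap between the two baselines is why model choice deserves the same scrutiny as infrastructure choice.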

Governance and Risk Management

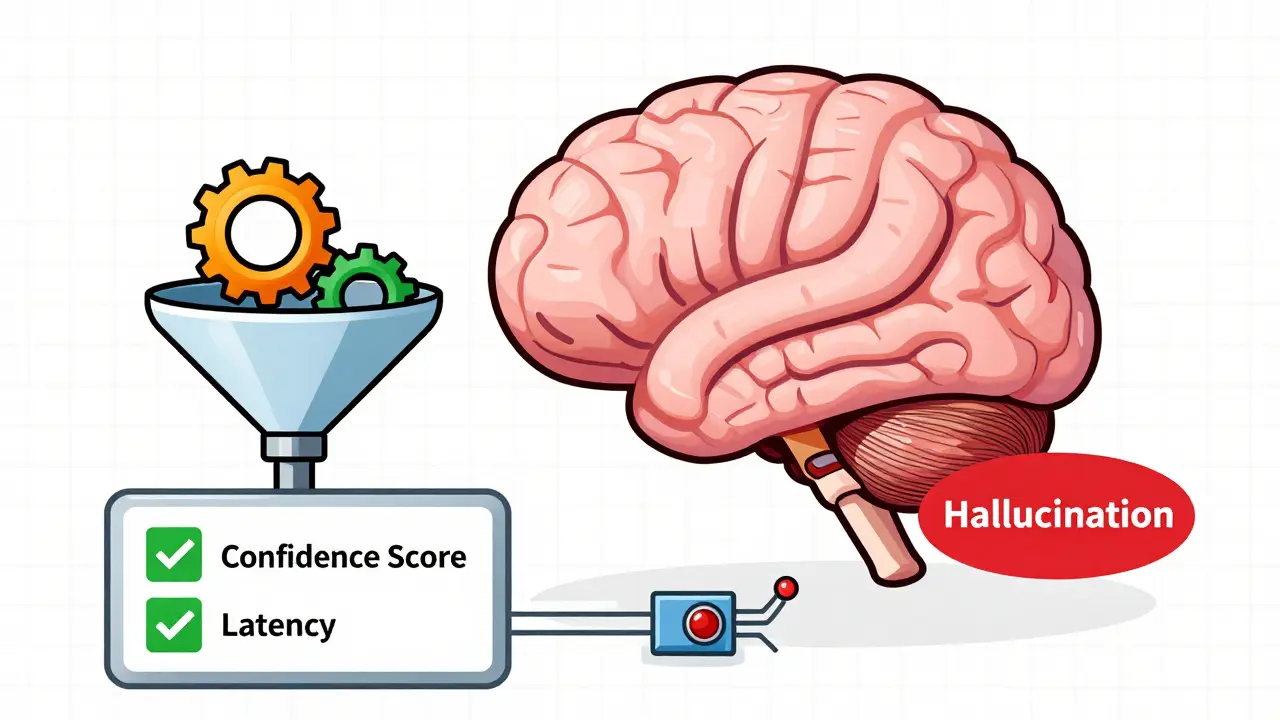

Scaling AI isn’t just a technical challenge; it’s a governance one. You cannot release an LLM into the wild without safeguards. Users trust your brand, so if the model hallucinates incorrect information or leaks sensitive data, the reputational damage is severe.

Start by implementing comprehensive monitoring. Track at least 15 key metrics, including latency percentiles, error rates, and token utilization. More importantly, monitor confidence scores. Set up circuit breakers that automatically route traffic to a fallback system, or to a human reviewer, when the model’s confidence drops below 0.85. This prevents bad outputs from reaching end-users.
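The circuit-breaker idea can be sketched in a few lines. Each output below 0.85 confidence is routed to the fallback individually; if low-confidence outputs make up too large a share of a rolling window, the breaker trips and sends all traffic to the fallback until the rate recovers. The `window` and `trip_ratio` values are assumptions for illustration, and the sketch assumes the model keeps scoring requests in shadow while the breaker is open so the window can recover.

```python
from collections import deque

CONFIDENCE_THRESHOLD = 0.85  # threshold from the text


class ConfidenceBreaker:
    """Routes low-confidence outputs to a fallback and trips when they become frequent."""

    def __init__(self, window: int = 50, trip_ratio: float = 0.3) -> None:
        self.window = window          # illustrative, not a recommended value
        self.trip_ratio = trip_ratio  # illustrative, not a recommended value
        self.recent = deque(maxlen=window)  # True marks a low-confidence output
        self.tripped = False

    def decide(self, confidence: float) -> str:
        low = confidence < CONFIDENCE_THRESHOLD
        self.recent.append(low)
        # Re-evaluate the breaker only once the rolling window is full.
        if len(self.recent) == self.window:
            low_rate = sum(self.recent) / self.window
            self.tripped = low_rate >= self.trip_ratio
        return "fallback" if (self.tripped or low) else "model"


breaker = ConfidenceBreaker(window=4, trip_ratio=0.5)
print([breaker.decide(c) for c in [0.95, 0.60, 0.90, 0.70, 0.99, 0.99]])
# ['model', 'fallback', 'model', 'fallback', 'fallback', 'model']
```

In the example, the fifth output is confident but still goes to the fallback because half of the last four outputs were low-confidence; the breaker re-closes once that share drops.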

Data preparation is another hidden hurdle. Organizations spend 60-70% of their implementation time curating data, integrating sources, and mitigating bias. Ensure your training corpus includes at least 30% domain-specific data if you are targeting a specialized industry. Generic models struggle with niche terminology, leading to poor performance despite high general intelligence.
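Checking the 30% guideline is a simple ratio, and solving it for the number of extra domain examples tells you how much more data to curate: (d + x) / (d + x + g) ≥ t rearranges to d + x ≥ t·g / (1 − t). This sketch counts examples, which is an assumption; counting tokens would often be more representative. The 10,000/70,000 split is hypothetical.

```python
import math
from fractions import Fraction


def domain_share(n_domain: int, n_general: int) -> Fraction:
    """Fraction of the corpus that is domain-specific."""
    return Fraction(n_domain, n_domain + n_general)


def extra_domain_needed(n_domain: int, n_general: int,
                        target: Fraction = Fraction(3, 10)) -> int:
    """Smallest number of additional domain examples so the share reaches `target`.

    Solves (d + x) / (d + x + g) >= t  <=>  d + x >= t * g / (1 - t).
    """
    required = math.ceil(target * n_general / (1 - target))
    return max(0, required - n_domain)


print(domain_share(10_000, 70_000))         # 1/8, i.e. 12.5%
print(extra_domain_needed(10_000, 70_000))  # 20000
```

Exact `Fraction` arithmetic avoids floating-point rounding pushing the result one example too high or too low.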

Establish a dedicated AI governance team. They should review model performance every two weeks and conduct risk assessments quarterly. This ensures that as the model evolves, it remains aligned with regulatory requirements and business objectives. Don’t wait for a crisis to establish these protocols; build them into your initial architecture.

Phased Implementation Roadmap

Jumping straight to full-scale deployment is a recipe for disaster. Adopt a phased approach that allows you to learn, adjust, and scale safely.

- Pilot Phase (4-8 weeks): Run the model in a controlled environment with a small group of internal users. Focus on validating the use case and identifying immediate technical glitches.

- Limited Deployment (8-12 weeks): Expand to a wider but still constrained user base. Test the system under heavier load and gather feedback on usability and output quality.

- Gradual Expansion (3-6 months): Roll out the solution across additional departments. Use this time to refine monitoring tools and optimize costs based on real usage patterns.

- Full Deployment (roughly months 6-12): Launch organization-wide. By now, you should have automated MLOps pipelines, robust fallback mechanisms, and clear governance policies in place.

This timeline varies based on your existing AI maturity. Companies with mature data science teams might complete the first two phases in half the time, while those building capabilities from scratch may need the full year. Patience pays off here; rushing leads to fragile systems that break under pressure.
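Adding up the phase durations shows where organization-wide rollout lands on the calendar. The sketch converts months to weeks at 52/12 weeks per month, which is an approximation.

```python
WEEKS_PER_MONTH = 52 / 12  # approximation

# (phase, shortest duration in weeks, longest duration in weeks), from the roadmap
PHASES = [
    ("Pilot", 4, 8),
    ("Limited deployment", 8, 12),
    ("Gradual expansion", 3 * WEEKS_PER_MONTH, 6 * WEEKS_PER_MONTH),
]


def cumulative_months(phases: list[tuple[str, float, float]]) -> list[tuple[str, float, float]]:
    """Return (phase, earliest end month, latest end month) for each phase."""
    earliest = latest = 0.0
    result = []
    for name, shortest, longest in phases:
        earliest += shortest
        latest += longest
        result.append((name, earliest / WEEKS_PER_MONTH, latest / WEEKS_PER_MONTH))
    return result


for name, lo, hi in cumulative_months(PHASES):
    print(f"{name}: ends around month {lo:.1f} to {hi:.1f}")
# Pilot: ends around month 0.9 to 1.8
# Limited deployment: ends around month 2.8 to 4.6
# Gradual expansion: ends around month 5.8 to 10.6
```

Full deployment therefore starts at roughly month 6 to 11, consistent with the 6-12 month estimate for an organization-wide launch.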

How long does it take to move an LLM from pilot to production?

Typically, it takes 6 to 12 months for a full organization-wide deployment. The pilot phase lasts 4-8 weeks, followed by limited deployment (8-12 weeks) and gradual expansion (3-6 months). Organizations with existing AI infrastructure may move faster, while those starting from scratch should expect the longer timeline.

What is the most cost-effective way to deploy an LLM?

A hybrid approach is often best. Use cloud-based services for scalable, non-sensitive tasks to benefit from rapid setup and pay-as-you-go pricing. For sensitive data, use on-premises hardware. Additionally, apply model quantization (INT8/INT4) to shrink memory and compute requirements, and combine dynamic batching with spot instances for non-critical workloads to reduce compute costs by 25-50%.

Why do many LLM projects fail after the pilot stage?

Projects often fail due to underestimating data preparation needs, lacking proper governance frameworks, or attempting to scale too quickly without validating value. Many organizations also neglect to implement monitoring and fallback mechanisms, leading to unreliable performance in production.

Do I need to build my own custom LLM?

In most cases, no. Building a custom model requires $2-5 million and 12-18 months of development. Most enterprises (82%) opt for pretrained models like GPT-4, Claude 3, or Llama 3, fine-tuning them for specific tasks. This is faster, cheaper, and leverages state-of-the-art capabilities.

What hardware is required for on-premises LLM deployment?

For serious on-premises deployment, you typically need high-end GPUs such as NVIDIA A100s with a minimum of 80GB VRAM. Initial infrastructure costs range from $250,000 to $2 million depending on the model size and expected throughput. Edge deployments require less powerful hardware but rely heavily on model compression.