Category: AI Technology

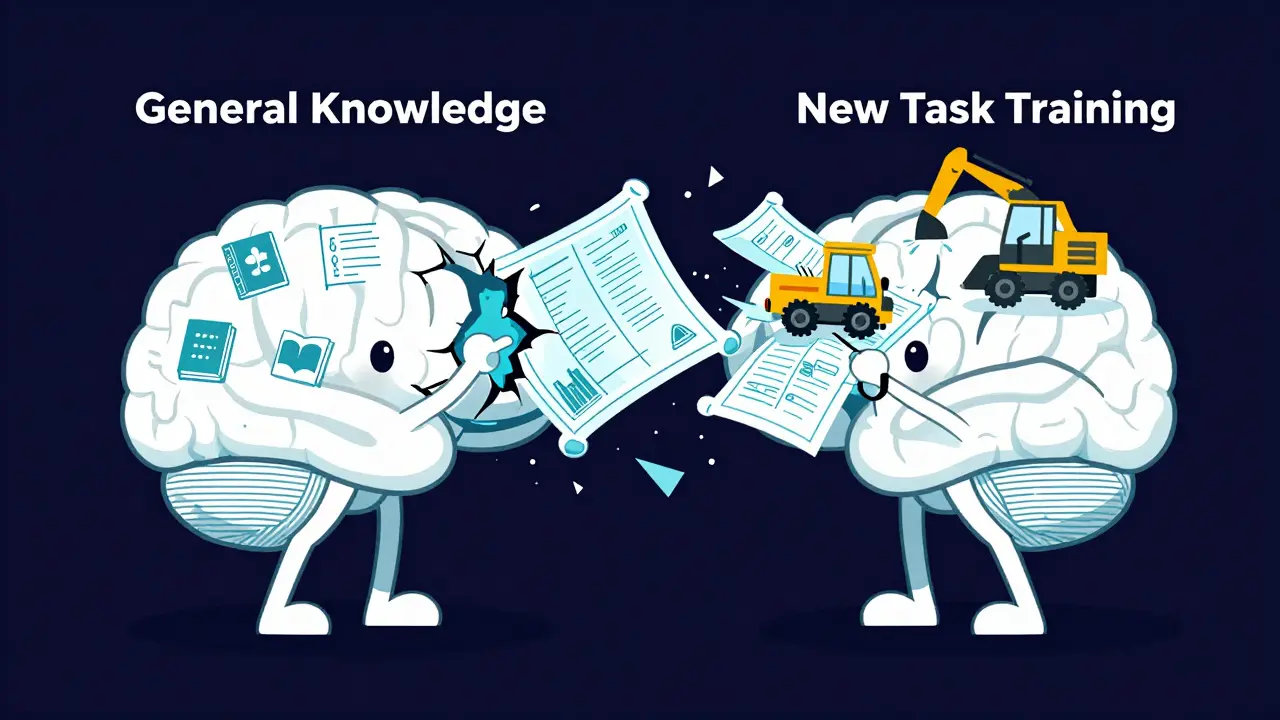

Preventing Catastrophic Forgetting During LLM Fine-Tuning: Techniques That Work

Discover why LLMs forget general knowledge after fine-tuning and how techniques like FIP, STM, and EWC can stop catastrophic forgetting in 2026.

Vibe Coding for Global Teams: Real-World Use Cases and Speed Gains

Discover how vibe coding helps global teams ship software faster. Learn real use cases, productivity gains, and tips for distributed organizations.

AI Watermarking and Detection: Methods, Limitations, and the Reality of Synthetic Content

Explore the reality of AI watermarking and detection in 2026. Learn how methods like SynthID and C2PA work, their limitations against attacks, and why they are not silver bullets for verifying synthetic content.

Firebase Studio and Vibe Coding: Auto-Provisioned Backends in Minutes

Explore how Firebase Studio and vibe coding transform app development by auto-provisioning backends in minutes. Learn about Gemini AI integration, MCP servers, and practical steps to build full-stack apps faster.

The Role of Datasets in NLP: From Wikipedia to Web-Scale LLM Corpora

Explore how NLP datasets evolved from structured Wikipedia entries to massive web-scale corpora. Learn about key resources like Hugging Face, specialized benchmarks, and the ethical challenges of training modern Large Language Models.

How Tokenizer Design Choices Impact LLM Quality: A Practical Guide

Discover how tokenizer design choices like BPE, Unigram, and vocabulary size directly impact LLM accuracy, memory usage, and speed. Learn practical strategies to optimize your training pipeline.

API LLMs vs On-Prem Deployment: Latency, Control, and Cost Tradeoffs

Explore the critical tradeoffs between API LLMs and on-prem deployment. We analyze latency, data control, hidden costs, and scalability to help you decide the best AI infrastructure strategy for 2026.

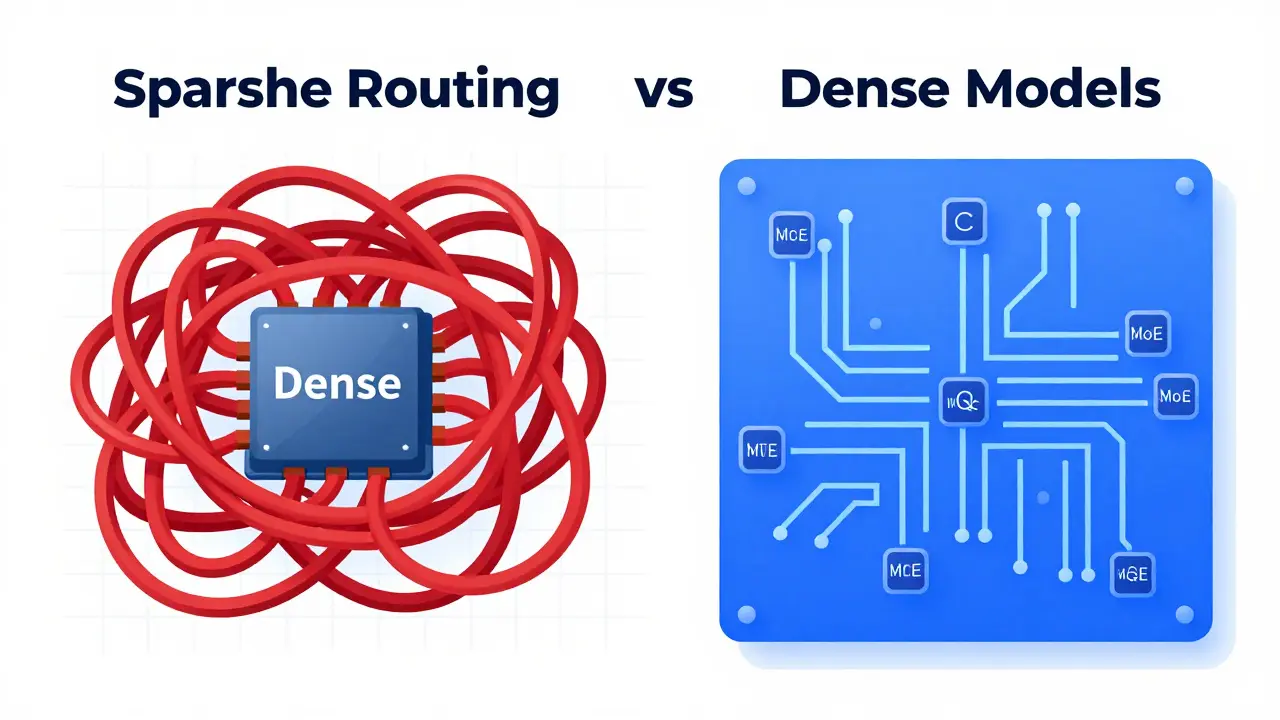

Sparse and Dynamic Routing in LLMs: The MoE Revolution Explained

Explore how sparse and dynamic routing via Mixture of Experts (MoE) transforms LLMs. Learn about efficiency gains, RouteSAE, and implementation challenges in 2026.

Product Design with Multimodal Generative AI: Rapid Prototypes and Iterations

Discover how multimodal generative AI transforms product design by integrating text, images, and data to create rapid prototypes. Learn about the six-stage workflow, industry applications, and practical implementation strategies.

Unit Test First Prompting: How to Generate Tests Before Code

Learn Unit Test First Prompting: a method to generate AI unit tests before code. Improve security, reduce bugs, and master TDD with LLMs like ChatGPT and GitHub Copilot.

Accessibility-Inclusive Vibe Coding: Patterns That Meet WCAG by Default

Learn how Accessibility-Inclusive Vibe Coding integrates AI speed with WCAG compliance. Discover patterns, tools like axe MCP Server, and workflows to build inclusive apps by default.

Self-Supervised Learning for Generative AI: From Pretraining to Fine-Tuning

Self-supervised learning transforms generative AI by leveraging 98% of unlabeled data. Learn how pretraining on puzzles enables powerful models like GPT-4, with real-world enterprise applications and future trends.
