Training a large language model (LLM) isn’t just about throwing compute at the problem. It’s about navigating a fragile, high-stakes process where small instabilities can cost millions and weeks of work. One of the most effective - and surprisingly simple - ways to stabilize this process is through checkpoint averaging and its more refined cousin, exponential moving average (EMA). These aren’t just buzzwords. They’re now standard practice for teams training models above 1 billion parameters. And if you’re not using them, you’re leaving performance, cost, and reliability on the table.

What Checkpoint Averaging Actually Does

Think of training an LLM like hiking up a mountain. You don’t just stop at the peak. You take a few steps back, look around, and maybe even descend a little to find a better path. Checkpoint averaging works the same way. Instead of picking the final model state as your best output, you take snapshots - called checkpoints - at regular intervals during training. These are full copies of the model’s weights, saved every few hours or after a set number of training steps. Then, you average them together.

This isn’t magic. It’s statistics. Training isn’t smooth. Loss curves bounce around. Learning rates jump. Gradients wiggle. The final model you get might be stuck in a local minimum, or worse - a noisy, unstable one. By averaging multiple checkpoints, you smooth out those spikes. You’re essentially taking the consensus of the model’s behavior over time, not just its last guess.

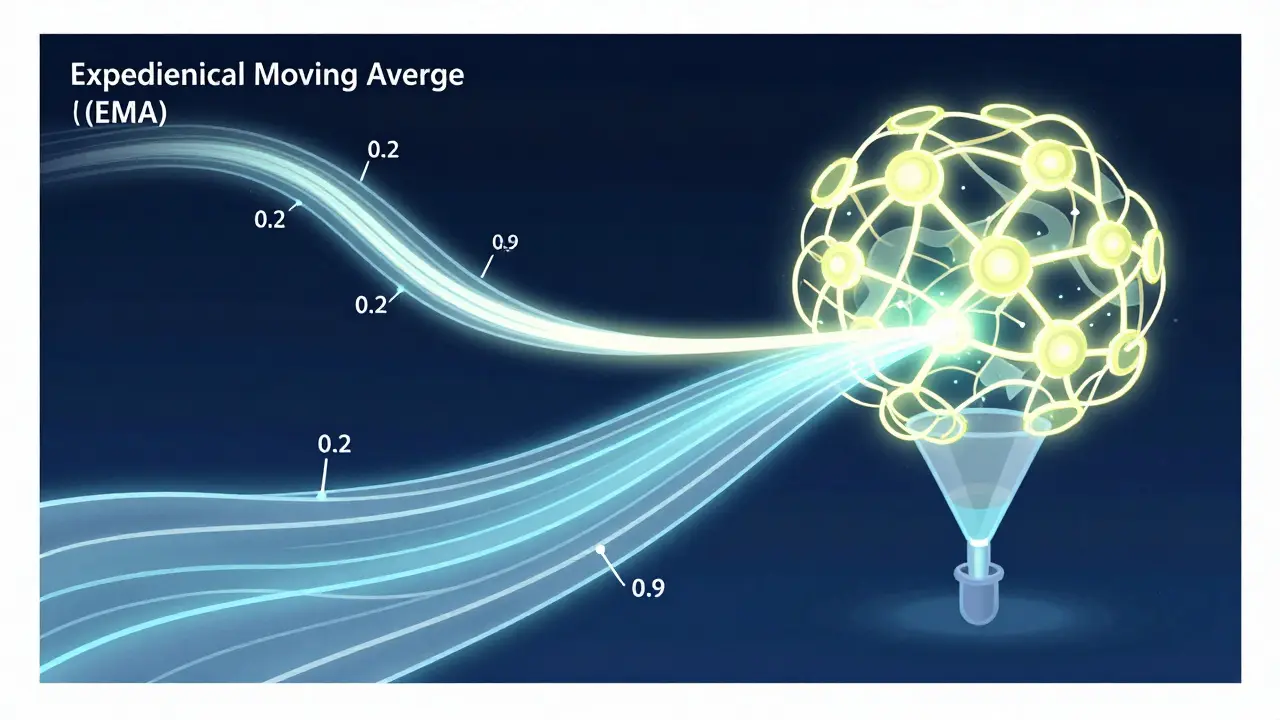

Simple moving average (SMA) treats all checkpoints equally. EMA instead gives more weight to recent ones. That’s crucial. Early training learns basic patterns. Later training refines them. EMA reflects that progression. The decay rate controls how quickly older checkpoints fade from the average. With the update rule ema_weight = decay * ema_weight + (1 - decay) * current_weight, a decay that is too high (like 0.9999 over only a handful of checkpoints) barely moves the average, so it lags far behind recent improvements. Too low (like 0.1), and you barely average anything - you’re mostly copying the last checkpoint. Most teams find 0.2-0.9 works best for large models.
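To make the effect of the decay rate concrete, the sketch below computes the effective weight each checkpoint ends up with. It assumes the update rule ema_weight = decay * ema_weight + (1 - decay) * current_weight and an EMA initialised to the first checkpoint; `ema_checkpoint_weights` is a hypothetical helper name, not part of any library.

```python
def ema_checkpoint_weights(num_checkpoints: int, decay: float) -> list[float]:
    """Effective weight each checkpoint receives, oldest first.

    Assumes ema = decay * ema + (1 - decay) * current, with the EMA
    initialised to a copy of the first checkpoint.
    """
    weights = [1.0]  # the EMA starts as the first checkpoint
    for _ in range(num_checkpoints - 1):
        weights = [w * decay for w in weights]  # older checkpoints shrink by `decay`
        weights.append(1.0 - decay)             # the newest enters with weight 1 - decay
    return weights


print(ema_checkpoint_weights(3, 0.5))  # [0.25, 0.25, 0.5]
```

Under this convention the weights always sum to 1. A decay near 1 leaves most of the weight on the oldest checkpoints when only a few are averaged, while a decay near 0 puts almost all of it on the newest one.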

Why It Works So Well

The real win? Performance gains without extra training. A 2025 arXiv paper showed that checkpoint averaging improved average benchmark scores by +1.64 points across 12 standard NLP tasks. That’s a 3.3% lift - and it cost nothing in compute time. You’re not retraining. You’re just combining existing saves.

Teams using this technique report tangible results. One user on Reddit averaged the last 8 checkpoints of a 70B model and saw their MMLU score jump from 68.2 to 69.7. Zero extra training. Just better generalization. Another user on GitHub saved their 13B model from collapse by merging three pre-failure checkpoints - recovering three weeks of work. That’s not hypothetical. That’s real-world resilience.

Why does this happen? Because the loss landscape between checkpoints is often flatter than expected. As Carnegie Mellon’s Ruslan Salakhutdinov put it, the model doesn’t just drift randomly - it explores stable regions. Averaging pulls you into those regions. And Meta AI’s Anna Rohrbach nailed it: checkpoint averaging gives the highest ROI for LLM pre-training. For less than 0.1% extra computational overhead, you get 1-2% performance gains.

How It Compares to Other Methods

Some teams rely on learning rate scheduling - warmup, stable, decay. Others use stochastic weight averaging (SWA). But checkpoint averaging outperforms both. A March 2023 OpenReview study showed it beat conventional training and SWA by 0.8-1.2 perplexity points on language modeling tasks. Even better, combining it with moderate learning rate decay (called CDMA) pushed gains to +1.64 points.

Here’s the catch: it only works if your training is stable. If your loss spikes violently - say, from a bad batch, a corrupted data sample, or a misconfigured optimizer - averaging across those spikes makes things worse. The same arXiv paper found performance dropped by up to 2.3 points when checkpoints included post-spike states. That’s why smart implementations don’t average everything. They filter. They skip checkpoints after sudden loss jumps. They use adaptive selection. NVIDIA’s upcoming NeMo 2.0 will do this automatically, using gradient similarity to pick the best candidates.
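One simple way to filter is to drop any checkpoint whose training loss jumps relative to the last checkpoint that was kept. The sketch below is only an illustration of that idea: the function name, the `(name, loss)` record format, and the `spike_ratio` threshold are all assumptions, and it is not the gradient-similarity method mentioned above.

```python
def filter_stable_checkpoints(records, spike_ratio=1.5):
    """Keep checkpoints whose loss did not jump relative to the last kept one.

    records: list of (checkpoint_name, training_loss) in training order.
    spike_ratio: hypothetical threshold; a loss above
                 spike_ratio * (last kept loss) is treated as a spike.
    """
    kept = []
    reference_loss = None
    for name, loss in records:
        if reference_loss is not None and loss > spike_ratio * reference_loss:
            continue  # post-spike state: leave it out of the average
        kept.append(name)
        reference_loss = loss
    return kept


history = [("step_1000", 2.0), ("step_2000", 1.9), ("step_3000", 4.0), ("step_4000", 1.8)]
print(filter_stable_checkpoints(history))  # ['step_1000', 'step_2000', 'step_4000']
```

Because the reference loss only advances on kept checkpoints, a run that never recovers from a spike contributes nothing after the spike.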

And it doesn’t help during fine-tuning. On small datasets, averaging increases overfitting risk by 18-22%. That’s because fine-tuning is about precision, not exploration. You’re not hunting for a better landscape - you’re fine-tuning a single peak. Checkpoint averaging belongs in pre-training, not adaptation.

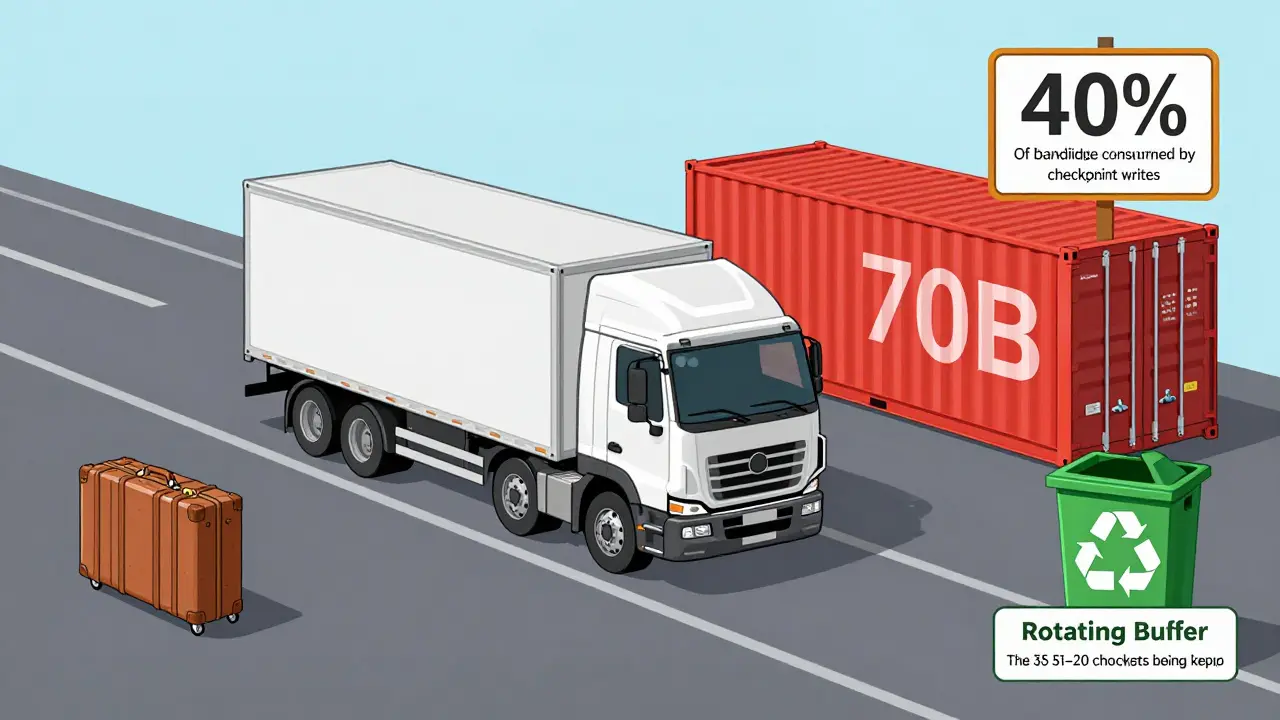

Storage Costs - The Hidden Price

There’s no free lunch. Storing checkpoints eats storage. A 7B model checkpoint takes about 56GB. A 70B model? Around 560GB. For trillion-parameter models? Each checkpoint is roughly 2 terabytes. That’s not just disk space - it’s I/O bandwidth. One DDN whitepaper found checkpointing consumed 40% of peak write bandwidth for trillion-parameter models. That’s a bottleneck. And for teams running dozens of experiments, storage can eat 38-42% of the total training budget.
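The sizes quoted above follow from simple arithmetic: parameter count times bytes stored per parameter. A minimal sketch follows. The bytes-per-parameter values are inferred by dividing the quoted sizes by the parameter counts, not stated in the source, and `checkpoint_size_gb` is a hypothetical helper.

```python
def checkpoint_size_gb(num_params: float, bytes_per_param: float) -> float:
    """Approximate checkpoint size in decimal gigabytes."""
    return num_params * bytes_per_param / 1e9


print(checkpoint_size_gb(7e9, 8))    # 56.0   -> matches the 7B figure
print(checkpoint_size_gb(70e9, 8))   # 560.0  -> matches the 70B figure
print(checkpoint_size_gb(1e12, 2))   # 2000.0 -> about 2 TB

# A rotating buffer of the last 20 checkpoints of a 70B model at 8 bytes/param:
print(20 * checkpoint_size_gb(70e9, 8) / 1000, "TB")  # 11.2 TB
```

By this arithmetic the 7B and 70B figures correspond to about 8 bytes per parameter, while the 2 TB trillion-parameter figure corresponds to about 2 bytes per parameter (for example, 16-bit weights stored without optimizer state).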

So how do you manage it? Most teams save checkpoints every 10 minutes or every 2,000-5,000 training steps. For base models, averaging the last 5 is enough. For GPT-3 scale models, use the last 20. And don’t keep every single one. Use a rotating buffer that holds only the last N. In Hugging Face Transformers, the save_total_limit training argument does this. Some training scripts also expose EMA as a single flag, such as use_ema=True.
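For a custom setup, a rotating buffer can be as small as a pruning step that runs after each save. The sketch below assumes a hypothetical `step_<number>.pt` naming scheme and a hypothetical `prune_checkpoints` helper; it uses only the standard library and is not how any particular framework implements this.

```python
from pathlib import Path


def prune_checkpoints(checkpoint_dir: Path, keep_last: int) -> list[Path]:
    """Delete all but the newest `keep_last` checkpoints; return the survivors.

    Assumes files are named step_<number>.pt (a hypothetical convention).
    """
    def step_of(path: Path) -> int:
        return int(path.stem.split("_")[1])

    # Sort numerically by step so step_10000 comes after step_1500.
    checkpoints = sorted(checkpoint_dir.glob("step_*.pt"), key=step_of)
    cutoff = max(len(checkpoints) - keep_last, 0)
    for stale in checkpoints[:cutoff]:
        stale.unlink()  # remove checkpoints that fell out of the buffer
    return checkpoints[cutoff:]
```

Calling `prune_checkpoints(Path("checkpoints"), keep_last=5)` after every save keeps disk usage bounded at five checkpoints, which is also the set you would average at the end.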

Implementation: What You Actually Need to Do

You don’t need to build this from scratch. If you’re using Hugging Face Transformers, PyTorch Lightning, or NVIDIA NeMo, it’s built in. For custom setups:

- Modify your training loop to save the model state every X steps or hours.

- Store weights in a dedicated folder - don’t overwrite them.

- After training ends, load the last N checkpoints.

- Average their weights: final_weights = sum(checkpoints) / N (for SMA), or apply exponential decay (for EMA).

- Save the averaged model as your final output.
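The SMA step in the list above can be sketched as follows. To stay dependency-free, this version represents each checkpoint as a dict mapping parameter names to plain lists of floats; in a real training setup these would be tensors loaded from saved state, and `average_checkpoints` is a hypothetical helper name.

```python
def average_checkpoints(checkpoints):
    """Uniformly average parameters across checkpoints (SMA).

    checkpoints: list of dicts mapping parameter name -> list of floats.
    Plain lists stand in for tensors to keep the sketch dependency-free.
    """
    if not checkpoints:
        raise ValueError("need at least one checkpoint")
    n = len(checkpoints)
    averaged = {}
    for name in checkpoints[0]:
        # Group the i-th value of this parameter from every checkpoint.
        columns = zip(*(ckpt[name] for ckpt in checkpoints))
        averaged[name] = [sum(values) / n for values in columns]
    return averaged


last_two = [
    {"w": [1.0, 2.0], "b": [0.0]},
    {"w": [3.0, 4.0], "b": [1.0]},
]
print(average_checkpoints(last_two))  # {'w': [2.0, 3.0], 'b': [0.5]}
```

The sketch assumes every checkpoint has the same parameter names and shapes, which holds when they all come from the same training run.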

For EMA, you can do it online - update weights during training, not just at the end. The formula is simple:

ema_weight = decay * ema_weight + (1 - decay) * current_weight

Start with decay = 0.9. Test it. If the average is too slow to adapt, lower it to 0.5. If it’s still too noisy, raise it to 0.95. The sweet spot is usually between 0.2 and 0.9. And always validate on a held-out set. One user on GitHub crashed their 13B model with decay=0.9999 - perplexity spiked 12.4%. That’s a red flag.
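A minimal sketch of the online update, applying the formula above once per training step. `EmaTracker` is a hypothetical class, and parameters are represented as a dict of plain floats standing in for tensors; treat it as an illustration under those assumptions rather than any framework's implementation.

```python
class EmaTracker:
    """Keeps an exponential moving average of model parameters."""

    def __init__(self, params, decay=0.9):
        if not 0.0 <= decay < 1.0:
            raise ValueError("decay must be in [0, 1)")
        self.decay = decay
        self.shadow = dict(params)  # start from a copy of the current weights

    def update(self, params):
        # ema_weight = decay * ema_weight + (1 - decay) * current_weight
        for name, value in params.items():
            self.shadow[name] = self.decay * self.shadow[name] + (1.0 - self.decay) * value


tracker = EmaTracker({"w": 0.0}, decay=0.5)
tracker.update({"w": 1.0})
tracker.update({"w": 1.0})
print(tracker.shadow)  # {'w': 0.75}
```

The training weights are never overwritten: the optimizer keeps updating the live model, and `tracker.shadow` is what you would evaluate on the held-out set and save as the final output.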

Who’s Using This - And Who Isn’t

By 2025, 87% of organizations training models above 1B parameters use checkpoint averaging. That’s not a trend. That’s an industry standard. NVIDIA, Microsoft, Meta, and Google all bake it into their frameworks. Even startups training 10B+ models can’t afford to skip it. The math is brutal: training a 70B model costs $1.8 million. A 1-2% performance gain isn’t just better accuracy - it’s fewer iterations, less compute, and faster time-to-market.

But adoption drops sharply below 1B parameters. Only 48% of teams training smaller models use it. Why? Because the gains are smaller. The storage cost isn’t worth it. For models under 1B, learning rate scheduling and dropout are often enough.

Here’s the reality: if you’re training a model with more than 7B parameters, you’re already in the high-stakes zone. You need stability. You need efficiency. You need to avoid wasting $1 million on a model that underperforms. Checkpoint averaging isn’t optional anymore. It’s baseline.

What’s Next

The next evolution? Intelligent checkpoint selection. Instead of averaging the last 10, systems will pick the 5 best - based on gradient similarity, loss stability, or validation performance. NVIDIA’s NeMo 2.0, launching in 2025, does this. Yann LeCun predicts by 2027, 95% of LLM training will use adaptive merging. That’s not speculation - it’s inevitable.
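Selection by validation performance, the simplest of the three criteria, can be approximated today with a few lines. This is only a sketch of the general idea, assuming you already record a validation loss per checkpoint; `select_best_checkpoints` is a hypothetical helper and does not describe how NeMo or any other framework chooses candidates.

```python
def select_best_checkpoints(val_losses, k=5):
    """Return the names of the k checkpoints with the lowest validation loss.

    val_losses: dict mapping checkpoint name -> validation loss.
    """
    ranked = sorted(val_losses, key=val_losses.get)  # lowest loss first
    return ranked[:k]


losses = {"step_1000": 2.1, "step_2000": 1.9, "step_3000": 2.5, "step_4000": 1.8}
print(select_best_checkpoints(losses, k=2))  # ['step_4000', 'step_2000']
```

The selected checkpoints would then be averaged in place of the last N.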

But there’s a warning. Stanford’s Percy Liang cautions that over-reliance on checkpoint averaging can mask deeper training issues. If your model keeps crashing, don’t just average the crashes. Fix the root cause. Checkpoint averaging isn’t a band-aid. It’s a stabilizer. Use it to refine, not to hide.

Final Takeaway

Checkpoint averaging and EMA are low-effort, high-reward tools. They cost almost nothing to implement. They require no new hardware. They don’t change your data. And they deliver measurable gains in performance, stability, and cost efficiency. If you’re training large language models, you’re already spending millions. Why not make sure the model you end up with is the best one possible? Start saving checkpoints. Try EMA with decay=0.8. Test it. And don’t wait until your next training run crashes to realize you should’ve done this sooner.

Ben De Keersmaecker

February 26, 2026 AT 12:35

Just don't use it during fine-tuning. Learned that the hard way - overfitting went nuts.

Aaron Elliott

February 28, 2026 AT 08:00

Chris Heffron

March 1, 2026 AT 17:54

Adrienne Temple

March 2, 2026 AT 12:40

Also, if you’re worried about storage, just delete old ones after 24 hours. You don’t need them all. Keep it clean.

Sandy Dog

March 3, 2026 AT 22:40

It was a 70B model. Loss spiked on day 17. I thought ‘nah, it’ll recover.’ IT DIDN’T. I had no checkpoints. NONE. I just sat there staring at the screen like a zombie. Then I remembered this post. I started crying harder. I’ve never felt so stupid in my life. Now I’m redoing it with EMA on. I saved checkpoints every 15 mins. I even set up a Slack alert. I’m not gonna risk it again. EVER. I’m a changed woman. 🙏🙏🙏

Nick Rios

March 4, 2026 AT 15:35

Also, big thanks to the author for mentioning NVIDIA’s NeMo 2.0. That’s gonna be huge. I’ve been waiting for automated checkpoint filtering. Finally, someone’s building tools that respect how messy real training is.