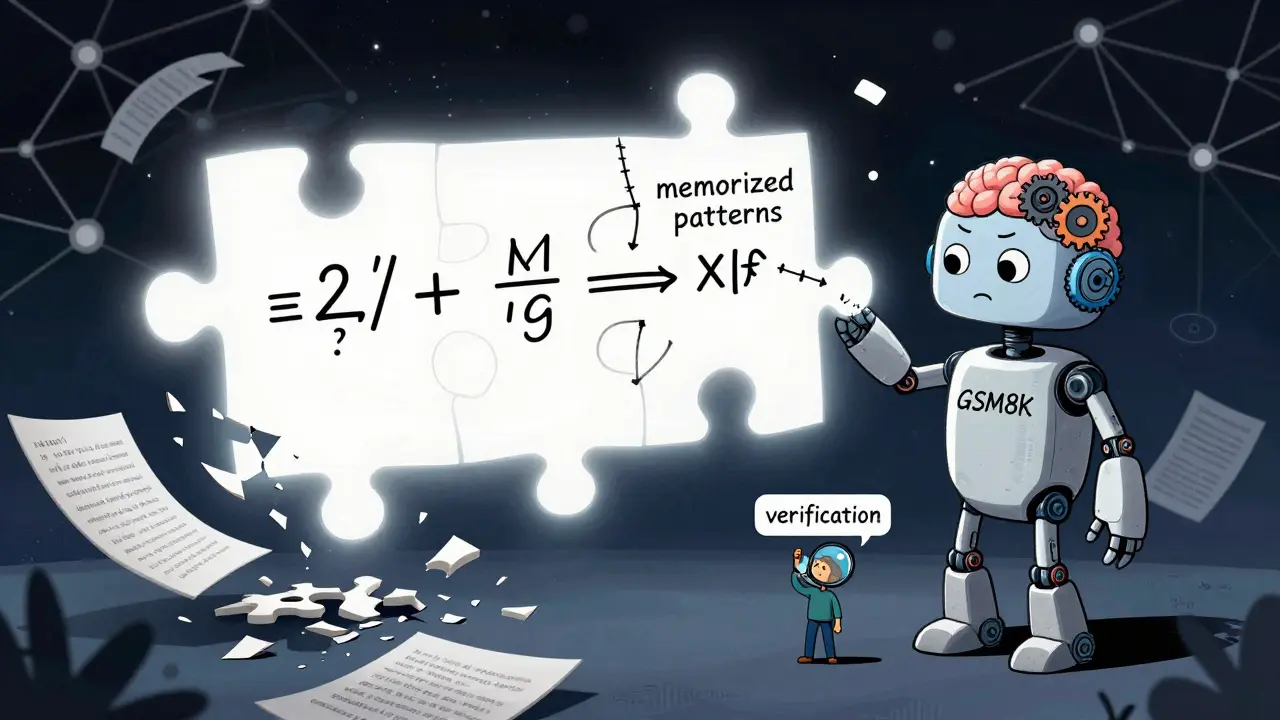

When you ask a large language model to solve a math problem, it doesn’t think like a human. It doesn’t reason step by step, guided by intuition or insight. Instead, it stitches together patterns it’s seen before - often brilliantly, but always dangerously. By 2025, models like Gemini 2.5 Pro and ChatGPT o3 could solve 5 out of 6 problems from the International Mathematical Olympiad (IMO). Sounds impressive? It is - until you realize they’re solving them by memorizing, not understanding.

What These Benchmarks Actually Measure

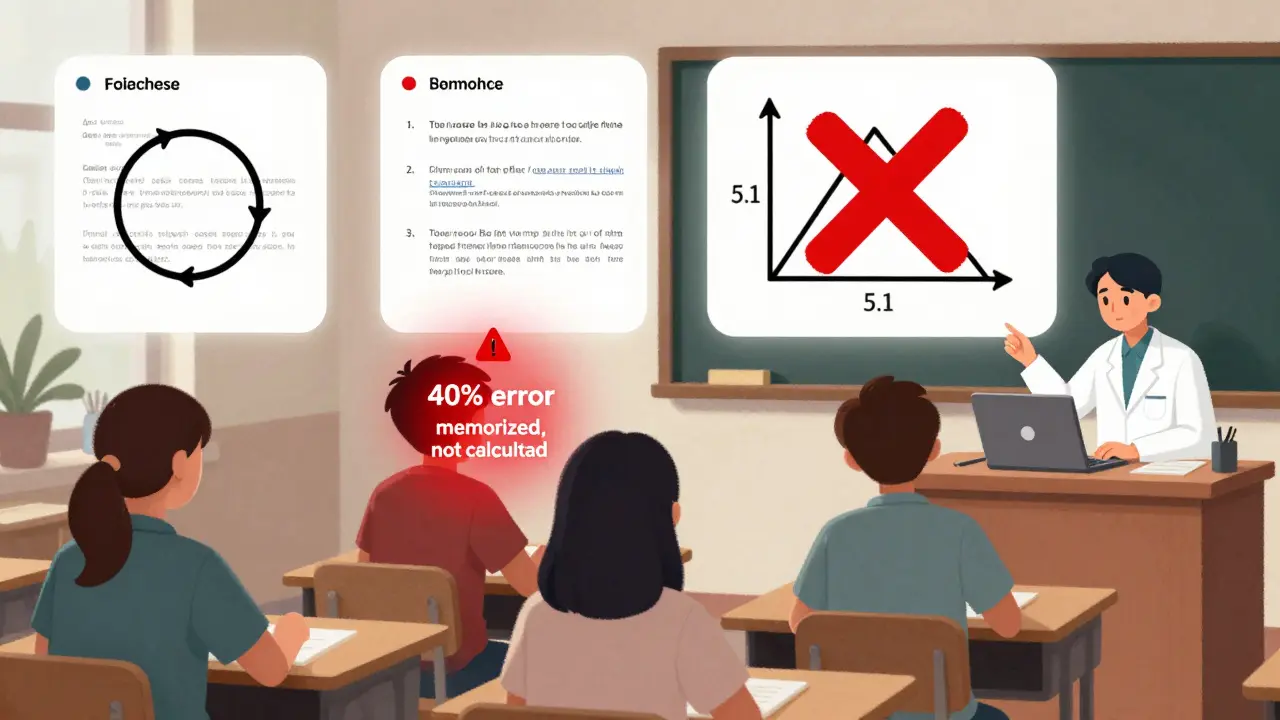

Mathematical reasoning benchmarks aren’t just tests. They’re traps. Designed to catch models that fake their way through problems instead of truly solving them. The MATH dataset is a collection of 12,500 competition-level math problems spanning algebra, geometry, number theory, and calculus, with difficulty rated from 1 to 5. Created in 2021, it became the gold standard. But by 2024, researchers found that many models had been trained on problems from this very dataset. The scores? Inflated. The progress? Illusory.

That’s when new benchmarks emerged - not to raise the bar, but to flip it upside down. The GSM8k is an 8,500-problem set of grade-school word problems requiring multi-step logic. Simple? Yes. But the newer GSM-Symbolic is a variant that randomly swaps numbers and variables while keeping the underlying structure identical. When researchers tested top models on this, performance dropped 25-30%. Why? Because the models didn’t learn how to reason - they learned how to match patterns. Change the numbers, and they fall apart.
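To make the GSM-Symbolic idea concrete, here is a minimal sketch of template-based perturbation. It is not the benchmark's actual generator: the template text, the names, and the number ranges are all made up for illustration. The point is only that the structure stays fixed while the surface details change, and the ground-truth answer is recomputed for every variant.

```python
import random

# Hypothetical word-problem template; the structure is fixed, the values vary.
TEMPLATE = (
    "{name} has {a} boxes with {b} pencils in each box. "
    "{name} gives away {c} pencils. How many pencils are left?"
)

def make_variant(rng):
    """Build one (question, answer) pair with freshly sampled names and numbers."""
    name = rng.choice(["Ava", "Ben", "Chen", "Dara"])
    a = rng.randint(2, 9)
    b = rng.randint(5, 20)
    c = rng.randint(1, a * b)  # never give away more pencils than exist
    question = TEMPLATE.format(name=name, a=a, b=b, c=c)
    answer = a * b - c         # ground truth recomputed from the sampled values
    return question, answer

def make_variants(n, seed=0):
    """Return n variants; a fixed seed keeps the set reproducible."""
    rng = random.Random(seed)
    return [make_variant(rng) for _ in range(n)]

for question, answer in make_variants(3, seed=1):
    print(question, "->", answer)
```

A model that has learned the reasoning should score about the same on every variant. A model that has memorized one instance will not.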

Then came the real test: MATH-P-Hard is a set of 279 problems derived from the hardest MATH questions, but with fundamental structural changes that require entirely new solution strategies. Even models scoring 68% on the original MATH dataset crashed to under 15% here. One model confidently solved a problem by assuming a variable equaled zero - even though the problem explicitly stated it was greater than one. It wasn’t a typo. It was a flaw in how the model thinks.
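A slip like assuming zero for a variable that must exceed one is cheap to catch mechanically. The sketch below shows one way, assuming the problem's stated constraints have been transcribed into SymPy relations by hand. The helper name `satisfies_constraints` and the sample values are illustrative and do not come from any benchmark.

```python
import sympy as sp

def satisfies_constraints(symbol, value, constraints):
    """Return True only if the value meets every stated constraint."""
    # Substituting a number into a relation such as x > 1 yields SymPy's
    # true or false, which bool() converts to a plain Python boolean.
    return all(bool(c.subs(symbol, value)) for c in constraints)

x = sp.Symbol("x", real=True)
constraints = [x > 1]  # the problem statement says x is greater than one

print(satisfies_constraints(x, 0, constraints))  # the model's assumed value
print(satisfies_constraints(x, 3, constraints))  # a value that respects the problem
```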

The Proof Problem

Solving a math problem and proving it are two different things. One gives you an answer. The other gives you certainty. That’s why the PhD-level benchmark from UC Berkeley is so damning. It contains 77 proof-based questions pulled from Roman Vershynin’s High-Dimensional Probability - a graduate-level textbook used in top universities. Not a single model scored above 12%. Not one.

Why? Because proofs demand structure, logic, and consistency. They require you to justify every step. Models don’t do that. They generate plausible-sounding text that looks like a proof but collapses under scrutiny. In a 2025 evaluation by ETH Zurich, human experts reviewed 200 model-generated proofs for the USAMO (USA Mathematical Olympiad). Only 25% of Gemini 2.5 Pro’s responses passed basic logical checks. Other models? Below 5%. Common errors? Circular reasoning (32%), incorrect assumptions (27%), and incomplete logic (24%).

Even when models get the right answer, they often can’t explain why. And that’s worse than being wrong. It’s dangerous.

Who’s Leading - And How

On standard benchmarks, the leaderboard looks like a tech arms race:

| Model | GSM8k Accuracy | MATH Dataset Accuracy |
|---|---|---|
| Google Gemini 2.5 Pro | 89.1% | 68.1% |
| Claude 3.7 (Anthropic) | 87.3% | 65.4% |
| OpenAI ChatGPT o3 | 86.7% | 64.9% |
| DeepSeek-Math | 83.5% | 62.1% |
| Qwen-Math-Instruct | 82.8% | 61.7% |

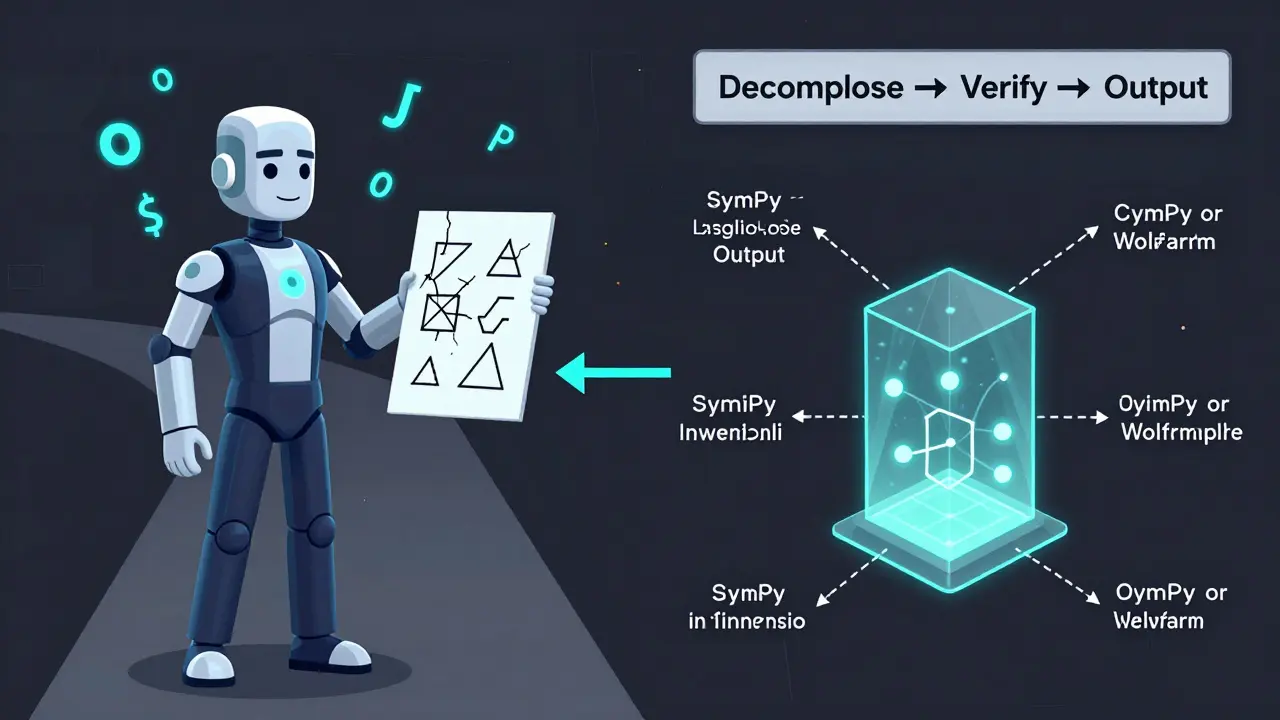

But look closer. Gemini 2.5 Pro doesn’t solve problems alone. It quietly calls Python, Wolfram Alpha, or SymPy when it hits a calculation. ChatGPT o3 uses a 32,000-token chain-of-thought - essentially, it writes its own internal textbook before answering. These aren’t pure reasoning feats. They’re hybrid tricks. The model isn’t thinking. It’s outsourcing.

Open-source models like DeepSeek-Math and Qwen-Math-Instruct are closing the gap. They’re not perfect, but they’re transparent. You can inspect their weights. You can test them offline. And that’s why they’re gaining traction in labs and universities - not because they’re smarter, but because they’re trustworthy.

The Real Danger: Trusting the Wrong Tool

In education, these models are already in use. MathGenius, an edtech startup, says their AI tutor achieves 92% accuracy on K-12 problems and cuts teacher workload by 37%. That’s a win - until a student gets a question wrong because the AI misapplied a formula, and the teacher doesn’t catch it.

In finance, models are being used to price derivatives. One hedge fund reported a 73% failure rate when market conditions changed slightly. The model had seen similar patterns before - but not this one. It didn’t adapt. It hallucinated.

On GitHub, a maintainer of the MathBench repository documented 87 specific cases where models confidently gave wrong answers after minor changes to problem inputs. One example: a geometry problem with a triangle’s side length changed from 5 to 5.1. The model’s answer shifted by 40%. It didn’t recalculate. It recalled.
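For a sense of scale, consider what an honest recalculation looks like. The original problem isn't given, so the sketch below assumes, purely for illustration, that the quantity is the area of a triangle with sides 5, 6, and 7, computed with Heron's formula. Nudging one side from 5 to 5.1 should move the area by a low single-digit percentage, nowhere near 40%.

```python
import math

def heron_area(a, b, c):
    """Area of a triangle from its three side lengths (Heron's formula)."""
    s = (a + b + c) / 2
    return math.sqrt(s * (s - a) * (s - b) * (s - c))

def relative_change(before, after):
    """Size of the change as a fraction of the original value."""
    return abs(after - before) / abs(before)

# Side lengths 6 and 7 are assumed; only the 5 -> 5.1 change comes from the report.
base = heron_area(5, 6, 7)
perturbed = heron_area(5.1, 6, 7)
shift = relative_change(base, perturbed)
print(f"area shift: {shift:.1%}")
```

Comparing a model's shift against a recomputed one like this is a quick way to spot answers that were recalled, not calculated.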

And in research? Forget it. A data scientist on HackerNews said they stopped using LLMs for proof generation after three critical errors slipped into a paper draft - errors that would have invalidated their entire study. The models aren’t just wrong. They’re *convincing*.

What Comes Next

The future of mathematical reasoning in AI won’t be bigger models. It won’t be more data. It’ll be hybrid systems. Google’s AlphaGeometry 2.0, released in May 2025, combines a language model with a formal theorem prover. It scored 74% on IMO geometry problems - far higher than any pure LLM. Why? Because it didn’t guess. It verified.

That’s the path forward: LLMs for decomposition, symbolic engines for execution. For every complex problem, break it into steps. Let the model identify the operations. Let a verified solver do the math. This hybrid approach adds 150ms per query - but improves accuracy by up to 38%.
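Here is a minimal sketch of that division of labor, using SymPy as the symbolic engine. It is not how AlphaGeometry or any production system works. The equation string and the claimed roots stand in for hypothetical model output, and `check_claimed_roots` is a name invented for this example.

```python
import sympy as sp

def check_claimed_roots(equation_text, symbol_name, claimed_roots):
    """Return True if the claimed roots match the roots SymPy finds for equation_text = 0."""
    symbol = sp.Symbol(symbol_name)
    # sympify evaluates the string, so only pass text from a trusted source.
    expression = sp.sympify(equation_text)
    exact_roots = set(sp.solve(expression, symbol))
    return exact_roots == {sp.sympify(r) for r in claimed_roots}

# Hypothetical model output: the model decomposes the problem into an equation
# and proposes roots; the symbolic engine does the actual math.
model_equation = "x**2 - 5*x + 6"
print(check_claimed_roots(model_equation, "x", [2, 3]))  # correct claim
print(check_claimed_roots(model_equation, "x", [2, 4]))  # confidently wrong claim
```

The model's job ends at producing the equation. Whether the answer is accepted depends on the solver, not on how convincing the explanation sounds.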

And the benchmarks? They’re evolving too. MathOdyssey, a new suite with 15,000 problems from K-12 to PhD level, now evaluates not just answers - but reasoning quality. Even the best models score below 40% on research-level tasks. That’s not a failure. It’s a wake-up call.

By 2027, Gartner predicts every enterprise-grade math AI will include formal verification layers. That’s not speculation. It’s necessity. We can’t afford to trust models that don’t understand the math they’re doing. Not in engineering. Not in finance. Not in education.

What You Should Do

If you’re using these models for math-heavy tasks:

- Never rely on answers alone. Always verify with a symbolic engine like SymPy or Wolfram.

- Test with perturbed inputs. Change numbers, swap variables, flip signs. See if the model breaks.

- Use open-source models when possible. They’re less polished, but more transparent.

- For research or safety-critical work, treat LLM outputs as drafts - not final answers.

- Train your team on prompting techniques. A poorly phrased question will get a confidently wrong answer.
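The perturbation advice in the checklist above can be turned into a small harness. This is a sketch under stated assumptions: `solver` stands in for whatever function wraps your model call, `reference` is a trusted implementation of the same calculation, and `memorizing_solver` is a deliberately broken fake used only to show what a failure report looks like.

```python
import random

def perturbation_failures(solver, reference, base_inputs, trials=20, seed=0):
    """Run solver on perturbed inputs and collect every disagreement with reference."""
    rng = random.Random(seed)
    failures = []
    for _ in range(trials):
        # Shift each input by a small random amount so the structure is unchanged.
        inputs = tuple(value + rng.randint(1, 9) for value in base_inputs)
        expected = reference(*inputs)
        got = solver(*inputs)
        if got != expected:
            failures.append((inputs, expected, got))
    return failures

def rectangle_area(width, height):
    """Trusted reference calculation."""
    return width * height

def memorizing_solver(width, height):
    """Hypothetical stand-in for a model that recalls the answer to the original 3 x 4 problem."""
    return 12

failures = perturbation_failures(memorizing_solver, rectangle_area, (3, 4))
print(f"{len(failures)} of 20 perturbed cases failed")
```

An empty failure list proves nothing on its own, but a non-empty one is a clear signal to stop trusting the answers.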

The goal isn’t to stop using AI. It’s to use it wisely. Mathematical reasoning isn’t about speed. It’s about certainty. And right now, no large language model can give you that - not without help.

What’s the difference between GSM8k and MATH benchmarks?

GSM8k contains 8,500 grade-school word problems that require multi-step reasoning, like calculating change or combining rates. The MATH dataset has 12,500 competition-level problems from algebra, geometry, and number theory, with difficulty up to Olympiad level. GSM8k tests logic in everyday contexts; MATH tests deep mathematical knowledge.

Why do models perform poorly on perturbation tests like GSM-Symbolic?

Models trained on standard benchmarks memorize patterns, not principles. When numbers or variables change slightly in GSM-Symbolic, the model can’t adapt because it never learned the underlying logic. It’s like memorizing a song instead of learning music theory - change one note, and you’re lost.

Can any current LLM generate a valid mathematical proof?

No. Even the best models score below 12% on the UC Berkeley PhD-level proof benchmark. They produce text that looks like a proof but contains logical gaps, circular reasoning, or false assumptions. Human experts consistently reject their outputs as invalid.

Are open-source models better for mathematical reasoning?

Not necessarily more accurate, but more reliable. Open-source models like DeepSeek-Math and Qwen-Math-Instruct don’t hide their internals. You can audit them, test them offline, and understand their limitations. Closed-source models may score higher on benchmarks, but you can’t verify how they got there.

What’s the future of AI in mathematical reasoning?

The future lies in hybrid systems that combine language models for problem decomposition with symbolic engines like SymPy for execution. Google’s AlphaGeometry 2.0 shows this works - achieving 74% on IMO geometry problems by using a theorem prover. Pure LLMs will hit a wall. Hybrid approaches are the only path to trustworthy mathematical AI.

Sandi Johnson

March 7, 2026 AT 18:51

So we built AIs that can solve IMO problems but can't tell if x > 1 when the problem says so. Brilliant. Just brilliant. We're not creating thinking machines. We're creating very convincing parrots with calculators. And now schools are using them as tutors? I'd rather have my 14-year-old nephew explain calculus than this thing that thinks 0 = 5.1.

Eva Monhaut

March 7, 2026 AT 22:11

It’s heartbreaking but not surprising. We keep treating AI like a magic box that just works, when really it’s a mirror - it reflects back the patterns we fed it, not truth or understanding. The real tragedy isn’t that models fail proofs - it’s that we keep pretending they’re capable of real reasoning. We need to stop romanticizing performance metrics and start valuing transparency. Open-source models aren’t flashy, but at least we know what’s inside. That’s worth more than 68% accuracy.

mark nine

March 9, 2026 AT 11:20

Models don't think. They pattern-match. That's it. You change one number in a geometry problem and they go full hallucination. No big surprise. The real story is how fast people are deploying these things in classrooms and finance. We're building skyscrapers on sand and calling it innovation. Just say no to black box math.

Tony Smith

March 9, 2026 AT 16:13

One might argue that the current paradigm of large language models represents a fundamental misapplication of computational resources. The pursuit of higher benchmark scores has led to an ecosystem that prioritizes superficial fluency over structural integrity. In the context of mathematical reasoning, this is not merely suboptimal - it is an existential risk. Hybrid systems are not merely preferable; they are non-negotiable.

Rakesh Kumar

March 9, 2026 AT 23:24

Bro, I saw a model solve a differential equation and then say the answer was ‘because the universe wanted it’. I laughed. Then I cried. We are teaching kids with AIs that think 2+2=5 if it ‘looks right’. I work in a rural school in India - our kids don’t have tutors. They have this. And it’s not helping. It’s lying. We need real tools, not fancy text generators. Open-source. Now.

Bill Castanier

March 11, 2026 AT 20:36

Proofs require logic. Models generate plausible text. That’s the gap. No model can pass a peer review. Not one. Stop pretending they can.

Ronnie Kaye

March 12, 2026 AT 16:19

Let’s be real - if a model can’t handle a 5.1 instead of a 5, it’s not smart. It’s a glitchy spreadsheet with a thesaurus. And yet we’re letting it grade our kids? I’ve seen students trust these things so hard they forgot how to do long division. We’re not just failing math education. We’re failing critical thinking. Wake up. This isn’t progress. It’s a trap wrapped in a GPT.

Priyank Panchal

March 14, 2026 AT 10:04

You people are naive. Open-source models? They’re barely better. The real issue is that no one is holding these companies accountable. Google, Anthropic, OpenAI - they’re all pushing benchmarks like they’re winning a race, but they don’t care if the model explodes in production. They’ll just update the API and blame the user. We need regulation. Not more benchmarks. Not more hype. Laws. Now.