When you use an LLM like GPT-4 or Claude 3, you’re not just asking a question; you’re paying for it. But how exactly are you being charged? Most companies assume it’s simple: pay per word, or per request. The real story is more complicated. Right now, nearly every LLM provider charges based on cost per token. But a new model is quietly rising: cost per action. The difference between them could save you thousands, or cost you more than you expected.

What Even Is a Token?

Before you compare pricing models, you need to understand what a token is. It’s not a word. It’s not even always a full word. A token is a chunk of text that the model processes. In English, 1,000 tokens usually equals about 750 words, but the ratio isn’t consistent. "Hello" might be one token. "Unbelievable" could be two or three. "Dr. Smith"? Two or three tokens, depending on the tokenizer. "The cat sat on the mat."? Seven tokens in many tokenizers. It depends on the model, the language, and even punctuation.

When you send a prompt to an LLM, it breaks the prompt into tokens. When it generates a response, it builds that response one token at a time. Both the input and the output cost money, and they aren’t priced the same. Take Claude Sonnet 4.5: input tokens cost $3 per million, output tokens $15 per million. That’s a five-to-one ratio. Why? Because generating new text is computationally heavier than reading what you typed. The model has to predict each next token, one after another, while input processing can happen in parallel. This isn’t a bug; it’s how the math works. But it means that if your task generates long answers, your bill spikes fast.

Then there are reasoning tokens. Some models, like Anthropic’s, can spend extra tokens on internal thinking. If the model reasons through a legal contract step by step, those "thinking" tokens are billed too, at the output-token rate, even though they aren’t part of the final answer. Users on Reddit have reported surprise bills 3x higher than expected because they didn’t realize the model was using extra tokens just to think.

Why Cost per Token Dominates (For Now)

Right now, 92% of LLM providers charge by token. That’s not random; it’s practical. Token-based pricing directly reflects the computational cost: more tokens mean more GPU time, more memory, and more electricity. Providers like OpenAI, Google, and Anthropic built their APIs around this model because it’s transparent, scalable, and fair, if you’re technically savvy.

For developers, it makes sense. You can track exactly how much each prompt costs. You can test different phrasings. You can cache responses. You can optimize prompts to cut token usage by 50% or more. A SaaS startup in Austin cut its monthly LLM bill from $1,200 to $380 just by rewriting prompts to be more concise. That’s real savings.

But here’s the catch: you need to be a developer to do this. You need to understand tokenization, context windows, caching, and model behavior. Most business teams don’t. Marketing teams don’t care how many tokens a blog post generates. Legal teams don’t want to count the tokens in a contract summary. They just want the job done.
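
Tracking per-prompt cost is simple arithmetic once you know the token counts. Here's a minimal sketch, assuming the Claude Sonnet 4.5 rates quoted earlier ($3 input, $15 output per million tokens). The function names and the token counts in the example are hypothetical, and the words-to-tokens helper is only the 750-words-per-1,000-tokens rule of thumb, not a real tokenizer.

```python
# Per-request cost under per-token pricing.
# Assumption: the Claude Sonnet 4.5 list rates quoted above (USD per million tokens);
# check your provider's current price sheet before relying on them.
INPUT_PRICE_PER_M = 3.00
OUTPUT_PRICE_PER_M = 15.00


def request_cost(input_tokens: int, output_tokens: int,
                 input_price: float = INPUT_PRICE_PER_M,
                 output_price: float = OUTPUT_PRICE_PER_M) -> float:
    """USD cost of one request, with input and output tokens billed at different rates."""
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


def estimate_tokens_from_words(words: int) -> int:
    """Rough English-only estimate (~750 words per 1,000 tokens); not a real tokenizer."""
    return round(words * 1000 / 750)


# A verbose prompt vs. a trimmed prompt that yields the same 500-token answer
# (token counts are hypothetical).
verbose = request_cost(input_tokens=4_000, output_tokens=500)
concise = request_cost(input_tokens=1_500, output_tokens=500)
print(f"verbose: ${verbose:.4f}  concise: ${concise:.4f}")  # verbose: $0.0195  concise: $0.0120
print(estimate_tokens_from_words(1_500))  # 2000
```

In this example, trimming 2,500 input tokens cuts the request cost by about 38% while the output cost stays the same. For answer-heavy tasks, shortening the requested output saves more per token, because output is priced five times higher.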

Enter Cost per Action

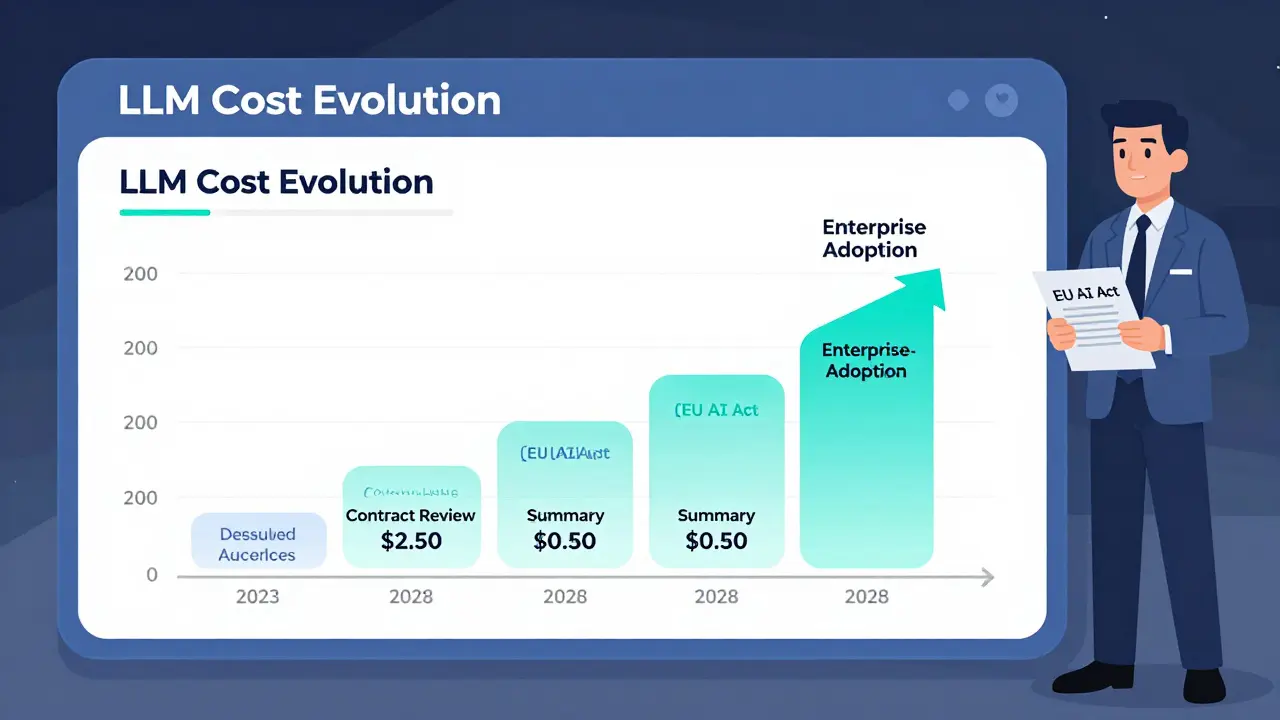

Cost per action flips the script. Instead of charging for tokens, providers charge for tasks. One contract review = $1. One summary = $0.50. One data extraction = $0.25. No counting. No surprises. Just a fixed price for a defined outcome. This isn’t theoretical anymore. In April 2025, Jasper.ai launched "Content Generation Packs": fixed pricing for one blog post, one social media campaign, or one product description. In May 2025, Harvey AI introduced "Legal Task Units": $2.50 per contract review, $1.75 per clause extraction. These aren’t gimmicks. They’re responses to real pain.

The advantages are obvious:

- Budgets become predictable. Finance teams can plan.

- Non-technical users don’t need training. Just click "summarize" and pay the fee.

- Costs align with business value. You’re not paying for how long the model thinks; you’re paying for the result.
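
To see why per-action budgets are predictable, here's a minimal sketch of a per-action bill. It assumes the Harvey AI "Legal Task Units" prices mentioned above; the task keys, the `monthly_bill` helper, and the monthly volumes are hypothetical. The point is that the total depends only on how many tasks ran, not on how many tokens each one consumed.

```python
# Per-action billing sketch: the bill depends only on task counts.
# Prices are the Harvey AI "Legal Task Units" figures quoted above;
# the task keys and monthly volumes are hypothetical.
PRICE_PER_ACTION = {
    "contract_review": 2.50,
    "clause_extraction": 1.75,
}


def monthly_bill(task_counts: dict[str, int]) -> float:
    """Sum fixed per-task prices; raises KeyError for a task type with no agreed price."""
    return sum(PRICE_PER_ACTION[task] * count for task, count in task_counts.items())


# 120 reviews and 300 clause extractions cost the same every month,
# however many tokens the model spent on each one.
print(monthly_bill({"contract_review": 120, "clause_extraction": 300}))  # 825.0
```

Raising a `KeyError` for an unpriced task is a deliberate choice in this sketch: under per-action pricing, a task without an agreed price is a contract question, not something to silently bill at zero.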

Which Model Fits Your Use Case?

It’s not about which is better. It’s about which fits your workflow. If you’re doing:

- Exploratory work (brainstorming, open-ended Q&A, research): Stick with cost per token. You need flexibility.

- High-volume, repetitive tasks (summarizing support tickets, extracting data from forms, classifying emails): Look for cost per action. You’ll save time and money.

- Long-form content generation (reports, whitepapers, legal briefs): Be careful. Per-token pricing can balloon if outputs are unpredictable. Per-action models with capped output length are safer.

- Enterprise workflows (HR onboarding, compliance checks, contract analysis): The future is hybrid. Providers are starting to offer "outcome-based pricing": you pick the quality level (fast, balanced, thorough), and the system picks the model and pricing automatically.
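
Before accepting a per-action quote, it helps to check what the same task would cost per token. This is a minimal sketch, assuming the Sonnet 4.5 rates quoted earlier and the illustrative $0.50-per-summary price; the input size and the spread of output lengths are hypothetical. It reports the cheapest and most expensive observed task and the output length at which paying per token would cost as much as the fixed price.

```python
# Sketch: per-token vs. per-action pricing for one repetitive task.
# Rates are the Claude Sonnet 4.5 list prices quoted earlier; token counts and the
# $0.50 action price are illustrative assumptions, not vendor quotes.
INPUT_PRICE_PER_M = 3.00
OUTPUT_PRICE_PER_M = 15.00


def per_token_task_cost(input_tokens: int, output_tokens: int) -> float:
    """Raw model cost of one task under per-token pricing (USD)."""
    return (input_tokens * INPUT_PRICE_PER_M + output_tokens * OUTPUT_PRICE_PER_M) / 1_000_000


def break_even_output_tokens(input_tokens: int, action_price: float) -> float:
    """Output length at which per-token cost equals the fixed per-action price."""
    remaining_budget = action_price - input_tokens * INPUT_PRICE_PER_M / 1_000_000
    return max(remaining_budget, 0.0) * 1_000_000 / OUTPUT_PRICE_PER_M


def spread(input_tokens: int, output_samples: list[int]) -> tuple[float, float]:
    """Cheapest and most expensive observed task under per-token pricing."""
    costs = [per_token_task_cost(input_tokens, out) for out in output_samples]
    return min(costs), max(costs)


# Summaries with a 2,000-token input and outputs ranging from 300 to 6,000 tokens.
low, high = spread(2_000, [300, 800, 1_500, 6_000])
print(f"per-token range: ${low:.4f} to ${high:.4f}")  # per-token range: $0.0105 to $0.0960
print(f"break-even output at $0.50/action: {break_even_output_tokens(2_000, 0.50):,.0f} tokens")
```

With these numbers, the raw token cost stays well below the fixed price, but it varies about ninefold between tasks. That spread, plus any bundled work like formatting or review, is what a per-action price is really buying; the sketch ignores engineering time, retries, and human correction.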

What’s Coming Next?

Per-token pricing isn’t going away, at least not yet. But it’s becoming less relevant for non-technical users. By 2028, some industry forecasts expect 35% of enterprise LLM workflows to use per-action or outcome-based pricing. Why? Part of the push is regulatory. The EU AI Act imposes transparency and documentation obligations on AI providers. It doesn’t regulate pricing directly, but the broader push for transparency makes "it depends on tokens" a harder answer to give. Providers will need to show clear value per task.

LLM inference costs are also dropping fast. From Q3 2023 to Q1 2025, per-million-token costs fell by 68%. That’s good for buyers, but it also means providers need new ways to make money. Charging by task lets them bundle value, like editing, formatting, and compliance checks, into a single price.

The trend is clear: pricing is shifting from technical units to business outcomes. The best companies aren’t just choosing models; they’re choosing pricing models.

What Should You Do Today?

Start by asking three questions:

- Do your teams understand token usage? If not, per-token pricing will confuse them.

- Are your LLM tasks predictable? If yes, demand per-action pricing from your vendor.

- Are you paying more than $100/month for LLMs? If so, you may be overpaying. Track usage and test alternatives.
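
One rough way to answer the "are your tasks predictable?" question is to look at how much per-task cost varies in your own logs. This is a minimal sketch using the coefficient of variation (standard deviation divided by the mean). The 0.25 threshold and both cost lists are hypothetical assumptions; pick a threshold that matches your budget tolerance.

```python
# Sketch: a rough predictability check on per-task costs you already log.
# The 0.25 threshold and the sample costs are hypothetical assumptions.
from statistics import mean, pstdev


def coefficient_of_variation(costs: list[float]) -> float:
    """Standard deviation relative to the mean; 0 means every task cost the same."""
    avg = mean(costs)
    return pstdev(costs) / avg if avg else 0.0


def looks_predictable(costs: list[float], threshold: float = 0.25) -> bool:
    """True if per-task cost varies little enough to be a candidate for per-action pricing."""
    return coefficient_of_variation(costs) <= threshold


ticket_summaries = [0.011, 0.012, 0.010, 0.013, 0.012]  # hypothetical logged costs (USD)
research_queries = [0.02, 0.31, 0.05, 0.90, 0.12]       # hypothetical logged costs (USD)
print(looks_predictable(ticket_summaries), looks_predictable(research_queries))  # True False
```

Low variation suggests a task you could price per action; high variation, like open-ended research, is where per-token flexibility tends to fit better.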

Is cost per token going away?

No, not anytime soon. Cost per token is still the standard for developer-facing APIs and flexible use cases. But it’s becoming less relevant for business users. Expect it to remain dominant through 2027, especially for startups and experimental projects. However, as enterprises demand predictable budgets, per-action pricing will grow rapidly in regulated industries like legal, healthcare, and finance.

Why do input and output tokens cost different amounts?

Because processing input is faster than generating output. Input tokens are read in parallel: your whole prompt is analyzed at once. Output tokens are generated one by one, and each new token depends on the ones before it, so the calculations must run sequentially. That takes more compute time, and more energy, per token. That’s why output tokens typically cost 3x to 5x as much as input tokens.
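
A quick way to see the effect of that price gap is to compute an effective "blended" rate for a workload. This sketch assumes the 5:1 Sonnet 4.5 rates quoted earlier; `blended_price_per_m` is a hypothetical helper, and `output_share` is the fraction of all billed tokens that are output.

```python
# Sketch: how the output share of a workload drives the effective price per million tokens.
# Assumption: $3 input / $15 output per million tokens (the Sonnet 4.5 rates quoted earlier).
def blended_price_per_m(output_share: float, input_price: float = 3.00,
                        output_price: float = 15.00) -> float:
    """Effective USD per million tokens when `output_share` of all tokens are output."""
    if not 0.0 <= output_share <= 1.0:
        raise ValueError("output_share must be between 0 and 1")
    return (1 - output_share) * input_price + output_share * output_price


for share in (0.1, 0.25, 0.5):
    print(f"{share:.0%} output -> ${blended_price_per_m(share):.2f} per million tokens")
# 10% output -> $4.20, 25% output -> $6.00, 50% output -> $9.00
```

Going from 10% to 50% output more than doubles the effective rate, even though the total token count hasn’t changed.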

Can I save money by using smaller LLMs?

Often, but not always. Smaller models like Claude Haiku or GPT-3.5-turbo are cheaper per token, but they’re generally less capable. If you need high-quality summaries or legal analysis, a cheaper model might mean more retries, longer prompts, or manual corrections, all of which add hidden costs. The goal isn’t to pick the cheapest model; it’s to pick the one that delivers the right result at the lowest total cost. Sometimes a more expensive model is actually cheaper overall.
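
"Total cost" can be made concrete as expected cost per usable result. This is a minimal sketch under loudly hypothetical numbers: the per-attempt costs, first-pass success rates, retry limit, and the $5 cost of a human fixing a bad output are all made up for illustration, and `ModelOption` and `cost_per_usable_result` are hypothetical names.

```python
# Sketch: expected cost per *usable* result, not per call.
# Every number below is a hypothetical assumption: prices per attempt,
# first-pass success rates, and the cost of a human fixing a failed output.
from dataclasses import dataclass


@dataclass
class ModelOption:
    name: str
    cost_per_attempt: float  # USD of tokens for one attempt
    success_rate: float      # share of attempts usable as-is (0 < p <= 1)


def cost_per_usable_result(option: ModelOption, max_attempts: int, fix_cost: float) -> float:
    """Expected cost when retrying up to `max_attempts` times, then paying a human to fix it."""
    p = option.success_rate
    expected, fail_all = 0.0, 1.0
    for _ in range(max_attempts):
        # An attempt is only paid for if every earlier attempt failed.
        expected += fail_all * option.cost_per_attempt
        fail_all *= 1 - p
    # If all attempts failed, a person has to correct the output.
    return expected + fail_all * fix_cost


small = ModelOption("small model", cost_per_attempt=0.004, success_rate=0.60)
large = ModelOption("large model", cost_per_attempt=0.02, success_rate=0.95)
for option in (small, large):
    print(f"{option.name}: ${cost_per_usable_result(option, max_attempts=2, fix_cost=5.00):.4f}")
```

With these made-up numbers, the cheaper model comes to about $0.81 per usable result and the pricier one to about $0.03, because the occasional failure that reaches a human dominates the token bill.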

What are "reasoning tokens," and why do they cost more?

Reasoning tokens are the internal steps a model takes to think through a problem, like breaking down a contract clause or solving a math problem step by step. They aren’t part of the final output, but they still consume compute. Providers like Anthropic bill them as output tokens, the most expensive token type, so they add up quickly. If you’re processing complex documents, reasoning tokens can double your bill. Always check whether your provider reports them separately.
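
Here is a minimal sketch of how hidden reasoning tokens change the cost of a single request. It assumes thinking tokens are billed at the output-token rate (how Anthropic documents extended-thinking billing; confirm with your own provider) and uses the Sonnet 4.5 rates quoted earlier. The token counts are hypothetical.

```python
# Sketch: how hidden reasoning ("thinking") tokens change the cost of one request.
# Assumption: thinking tokens are billed at the output-token rate;
# confirm how your own provider bills them.
INPUT_PRICE_PER_M = 3.00
OUTPUT_PRICE_PER_M = 15.00


def cost_with_reasoning(input_tokens: int, output_tokens: int, reasoning_tokens: int = 0) -> float:
    """USD cost of one request, counting reasoning tokens as billed output."""
    billed_output = output_tokens + reasoning_tokens
    return (input_tokens * INPUT_PRICE_PER_M + billed_output * OUTPUT_PRICE_PER_M) / 1_000_000


plain = cost_with_reasoning(2_000, 1_000)
thinking = cost_with_reasoning(2_000, 1_000, reasoning_tokens=4_000)
print(f"without reasoning: ${plain:.3f}, with reasoning: ${thinking:.3f} ({thinking / plain:.1f}x)")
# without reasoning: $0.021, with reasoning: $0.081 (3.9x)
```

In this hypothetical request, 4,000 thinking tokens that never appear in the answer nearly quadruple the cost, which is how "surprise" bills happen.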

How do I know if I’m being overcharged?

Track your cost per task, not just per token. If you’re processing 100 contracts a month and paying $500 ($5 per contract), but a competitor offers the same service for $1.50 per contract ($150 a month) using fixed pricing, you’re overpaying. Use tools like Traceloop to map token usage to business outcomes. If you can’t explain why your cost went up last month, your pricing model isn’t working for you. Ask your vendor for per-action pricing. If they refuse, look elsewhere.
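
Rolling token usage up into cost per task is the number to compare against a per-action quote. This is a minimal sketch under stated assumptions: the log format, task IDs, token counts, and the $1.50 quote are hypothetical, prices are the Sonnet 4.5 rates quoted earlier, and the code doesn’t use any observability tool’s API.

```python
# Sketch: roll per-call token usage up into cost per task.
# The log format, task IDs, token counts, and the $1.50 quote are hypothetical;
# this does not use any observability tool's real API.
from collections import defaultdict

INPUT_PRICE_PER_M = 3.00
OUTPUT_PRICE_PER_M = 15.00

usage_log = [  # one row per API call; a task may need several calls
    {"task_id": "contract-001", "input_tokens": 12_000, "output_tokens": 1_500},
    {"task_id": "contract-001", "input_tokens": 3_000, "output_tokens": 800},
    {"task_id": "contract-002", "input_tokens": 9_000, "output_tokens": 1_200},
]


def cost_per_task(log: list[dict]) -> dict[str, float]:
    """Sum the USD cost of every call belonging to the same task."""
    totals: dict[str, float] = defaultdict(float)
    for row in log:
        totals[row["task_id"]] += (row["input_tokens"] * INPUT_PRICE_PER_M
                                   + row["output_tokens"] * OUTPUT_PRICE_PER_M) / 1_000_000
    return dict(totals)


per_task = cost_per_task(usage_log)
average = sum(per_task.values()) / len(per_task)
quote = 1.50  # hypothetical per-contract price from a per-action vendor
print(f"average per contract: ${average:.3f} vs. quote ${quote:.2f}")
```

Grouping by task rather than by call matters: a contract that needs a follow-up call costs more than its first request suggests. Add your engineering and correction time before deciding which side of the comparison really wins.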
