Generative AI is everywhere now. It writes emails, designs logos, codes apps, and even helps doctors interpret scans. But who’s responsible when it gets things wrong? When a medical AI misdiagnoses a patient? When a marketing tool generates racist content? When a job screening system filters out qualified candidates because it learned bias from old data? These aren’t hypotheticals anymore. They’re happening daily. And without clear governance, the chaos will only grow.

Why Governance Isn’t Optional Anymore

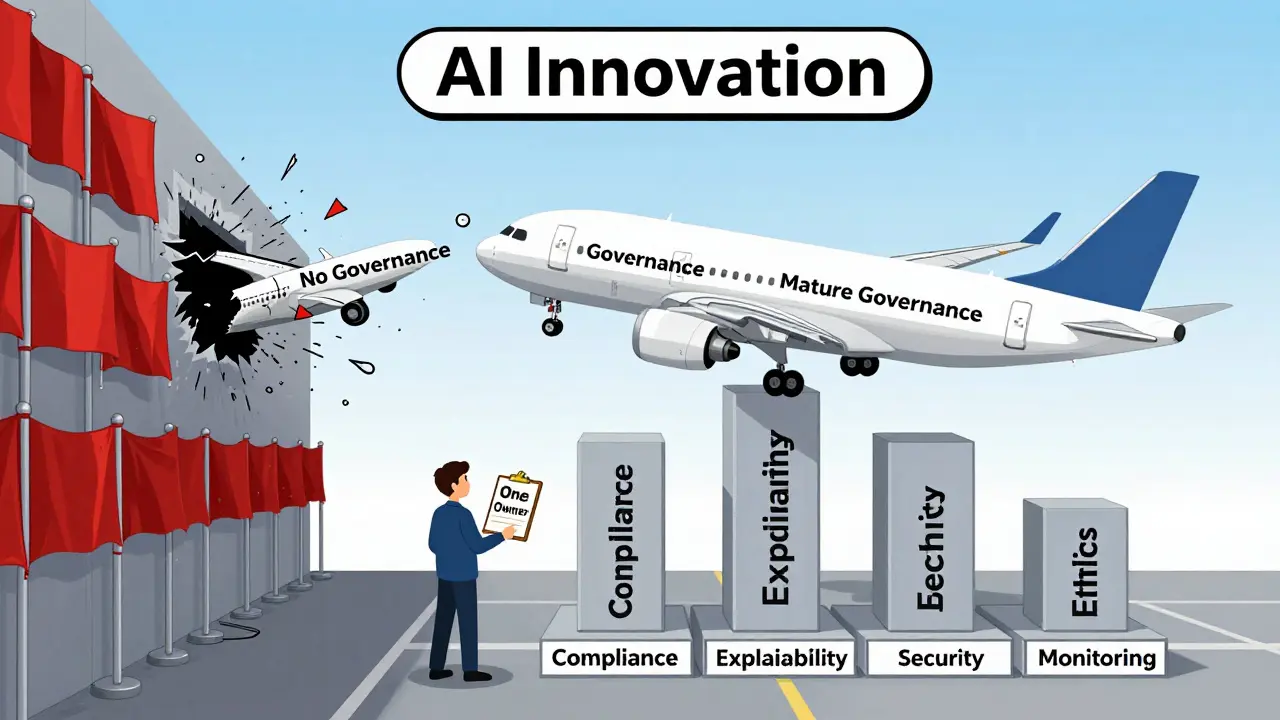

In 2023, companies treated generative AI like a wild experiment. Try it out. See what happens. If it breaks, fix it later. Today, that mindset is dead. According to PwC’s 2025 Responsible AI survey, organizations with mature governance frameworks see 23% higher ROI on AI projects. Why? Because governance isn’t about stopping innovation; it’s about making it sustainable. Without structure, AI becomes a liability. With it, AI becomes a strategic asset. The ModelOp 2025 AI Governance Benchmark Report, which drew on 100 senior AI leaders, found a growing gap between how much organizations invested in AI and how much value they actually delivered. The culprit? Poor governance. Disconnected systems. Unclear ownership. Too many approvals. Too little speed. And worst of all: governance theater. That’s when companies set up committees, write policies, and call it done, but never actually enforce them. Dr. Marcus Wong from the TechPolicy Institute calls it the biggest trap: creating the appearance of control without real risk management.

The Three Main Governance Models in 2025

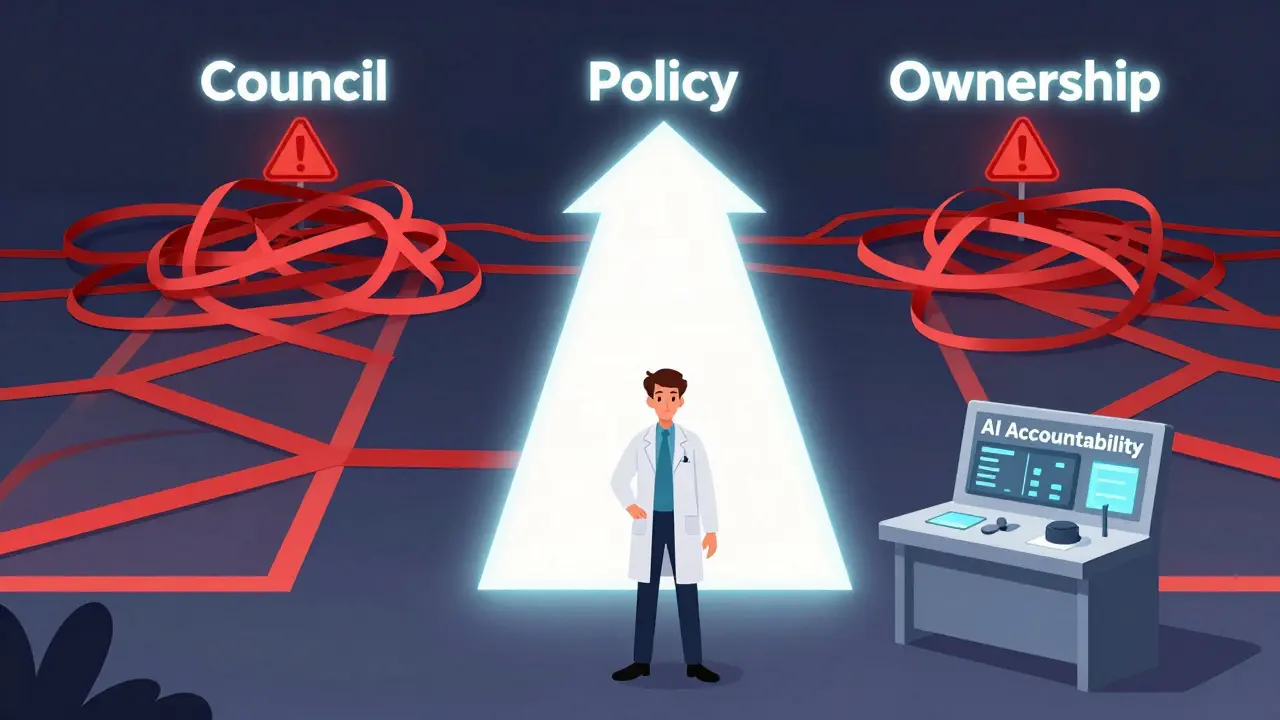

There’s no one-size-fits-all solution. But three models dominate how organizations are responding:

Council-Based Oversight

This was the first big wave. Companies formed AI ethics councils: cross-functional teams with legal, compliance, data science, and business representatives. The idea? Collective wisdom prevents bad decisions. It sounds smart, and for a while it worked. But here’s the catch: councils are slow. PwC’s survey found that 62% of organizations using this model added 14 to 21 days to every AI deployment. A senior data scientist at a major bank told Reddit users that their council initially delayed deployments by 18 days. That’s not just frustrating; it’s dangerous. In fast-moving industries like finance or healthcare, waiting weeks to launch a fraud-detection model means losing money every day. Worse, councils often lack real authority. They review, they advise, they delay. But who makes the final call? No one. And when something goes wrong, blame gets passed around like a hot potato.

Policy-Driven Frameworks

The second model skips the committee and goes straight to rules: clear policies on data quality, model training, transparency, and monitoring. Think of it as a playbook: here’s what you can do, here’s what you can’t, and here’s how to check that you’re following the rules. Financial institutions love this approach; 92% of them require mandatory red teaming (simulated attacks that test an AI system’s security). In healthcare, 87% of organizations demand explainability layers so doctors can understand why an AI made a particular recommendation. These rules are precise because the stakes are high. But policies have a fatal flaw: they’re static. AI evolves faster than any policy document, and a rule written in January 2025 might be irrelevant by June. AI21 Labs warns that rigid policies cause 42% of models to be rejected for non-critical issues, wasting thousands of engineering hours. One healthcare startup reported that applying generic policies to its medical imaging AI cost 11,000 wasted hours in a single quarter.

Accountability-Focused Models

The third model is the quiet winner. Instead of committees or rulebooks, it asks one question: who owns the outcome? In accountability-focused governance, every AI project has a single responsible person, not a team or a committee. That person is accountable for bias, accuracy, security, and compliance. If the AI misleads a customer, they answer for it. If it leaks data, they’re on the hook. This isn’t about blame; it’s about clarity. And it works. ModelOp found that organizations using this model deploy AI 33% faster than those using councils. One Fortune 500 manufacturer that implemented this approach cut AI-related incidents by 55% while speeding up deployments by 31%. No delays. No bureaucracy. Just ownership.

The Core Components of Real Governance

No matter which model you choose, effective governance rests on five technical pillars, as outlined by Essert Inc. and backed by NIST’s AI Risk Management Framework:

- Policy and Compliance: Your rules must align with real laws, not wishful thinking. The EU AI Act and the U.S. AI Bill of Rights (updated February 2025) set the boundaries, and violating the EU AI Act can bring fines of up to 7% of global annual turnover.

- Transparency and Explainability: Can a user understand why the AI made a decision? If not, you’re building distrust. In healthcare, this isn’t optional; it’s a legal requirement.

- Security and Risk Management: AI models get attacked. Adversarial inputs can trick them into producing false outputs. Red teaming, encryption, and access controls aren’t nice-to-haves; they’re survival tools.

- Ethical Considerations: Bias isn’t just a social issue; it’s a business risk. A 2025 study found that companies with strong bias mitigation saw a 37% drop in AI-related complaints and lawsuits.

- Continuous Monitoring and Auditing: AI systems don’t stand still, and neither can your oversight. Real-time dashboards that track accuracy drift, data quality, and user feedback are now standard in mature organizations.
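To make the monitoring pillar concrete, here is a minimal sketch of an accuracy-drift check of the kind such a dashboard might run. It assumes that labeled outcomes eventually arrive for each prediction. The class name `DriftMonitor`, the 500-prediction window, and the 5-point tolerance are illustrative assumptions, not values from NIST or any vendor.

```python
from collections import deque
from typing import Optional


class DriftMonitor:
    """Hypothetical rolling-accuracy monitor; the thresholds are illustrative, not a standard."""

    def __init__(self, baseline_accuracy: float, tolerance: float = 0.05, window: int = 500) -> None:
        self.baseline_accuracy = baseline_accuracy  # accuracy measured when the model shipped
        self.tolerance = tolerance                  # allowed drop before we flag drift
        self._outcomes = deque(maxlen=window)       # 1 = correct, 0 = wrong; oldest results fall off

    def record(self, prediction, actual) -> None:
        """Log one prediction once its true outcome is known."""
        self._outcomes.append(1 if prediction == actual else 0)

    def rolling_accuracy(self) -> Optional[float]:
        if not self._outcomes:
            return None  # nothing recorded yet
        return sum(self._outcomes) / len(self._outcomes)

    def drifted(self) -> bool:
        """True when recent accuracy has fallen more than `tolerance` below the baseline."""
        acc = self.rolling_accuracy()
        return acc is not None and acc < self.baseline_accuracy - self.tolerance


# Example: a model that shipped at 92% accuracy gets 7 of its last 10 predictions right.
monitor = DriftMonitor(baseline_accuracy=0.92, window=10)
for pred, actual in [(1, 1)] * 7 + [(1, 0)] * 3:
    monitor.record(pred, actual)
print(monitor.rolling_accuracy(), monitor.drifted())  # prints: 0.7 True
```

The same pattern could track data-quality or feedback signals by recording a different pass/fail outcome per request. Choosing the window and tolerance is exactly the kind of call the system’s owner should make and document.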

The ‘Bring Your Own AI’ Problem

Here’s something most governance plans ignore: employees are already using AI. Not the company’s tools, but their own: ChatGPT, Gemini, Claude, Perplexity. Microsoft’s 2024 study found that 75% of employees use AI at work, and 78% of those users bring their own tools. That’s not rebellion; it’s efficiency. People want to get their jobs done faster. But shadow AI is a security nightmare. It bypasses data controls, leaks confidential information, and creates unmonitored AI usage that no one can audit. The solution? Don’t ban it. Contain it. Northern Light’s case studies show that secure sandbox environments, where employees can use AI tools under controlled conditions, boost compliance from 31% to 89%. Give people the tools they want, but keep them inside a safe cage.

What Mature Governance Looks Like

Only 22% of organizations have what PwC calls “mature governance.” What do they have that others don’t?

- Clear ownership: One person answers for each AI system.

- Integration: Their governance tools connect to their data, monitoring, and deployment systems instead of living in isolated spreadsheets.

- Speed: They don’t slow down innovation; they enable it. Their deployment cycles are 22% faster.

- Adaptability: Their policies update quarterly, not annually. They use automated testing and red teaming to catch issues before they go live.

- Business alignment: Governance isn’t a compliance cost. It’s tied to revenue. Teams that link governance to business goals see 28% higher AI value.
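The adaptability point above, combined with the earlier warning that rigid policies reject models over non-critical issues, suggests a particular kind of automated pre-deployment gate. It blocks only on serious findings and routes everything else to follow-up. Here is a minimal policy-as-code sketch under that assumption. The severity levels, the check names, and the rule that only `CRITICAL` findings block a release are hypothetical choices, not a published standard.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class Severity(Enum):
    INFO = 1
    WARNING = 2
    CRITICAL = 3


@dataclass
class Finding:
    check: str          # name of the automated test or red-team exercise (hypothetical)
    severity: Severity
    detail: str


@dataclass
class Decision:
    approved: bool
    blockers: List[str]    # CRITICAL findings that must be fixed before launch
    follow_ups: List[str]  # lower-severity findings tracked after launch instead of blocking it


def deployment_decision(findings: List[Finding]) -> Decision:
    """Block a release only on CRITICAL findings; route everything else to follow-up."""
    blockers = [f.check for f in findings if f.severity is Severity.CRITICAL]
    follow_ups = [f.check for f in findings if f.severity is not Severity.CRITICAL]
    return Decision(approved=not blockers, blockers=blockers, follow_ups=follow_ups)


findings = [
    Finding("toxicity_eval", Severity.WARNING, "slightly above target on one prompt set"),
    Finding("pii_leak_test", Severity.CRITICAL, "model echoed a planted test record"),
    Finding("model_card_check", Severity.INFO, "documentation draft incomplete"),
]
decision = deployment_decision(findings)
print(decision.approved, decision.blockers)  # prints: False ['pii_leak_test']
```

Which findings count as `CRITICAL` is the actual policy decision. Keeping that mapping in reviewable code or configuration makes it practical to revise it quarterly instead of annually.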

What’s Coming Next

The biggest shift isn’t in tools; it’s in behavior. Agentic AI is here. These aren’t just chatbots that answer questions. They’re systems that make plans, book meetings, negotiate contracts, and execute actions on their own. Oliver Patel predicts that by Q4 2025, 45% of large enterprises will use “dynamic guardrails”: AI systems that adjust their own rules based on real-time risk. Instead of a static policy saying “Don’t access customer data,” the system will say: “You can access this data, but only if the request matches the user’s role, the context is approved, and the action is logged.” Gartner forecasts a $14.2 billion market for AI governance tools by 2027. But here’s the catch: if governance doesn’t move 3.5x faster than AI innovation, it will become irrelevant. The organizations that win will be the ones that treat governance as a living system, not a checklist.

What’s the difference between AI governance and AI ethics?

AI ethics is about values: fairness, transparency, human dignity. Governance is about action: who’s responsible, what rules are enforced, how violations are caught. You need both. Ethics tells you what’s right. Governance makes sure it happens.

Can small companies afford AI governance?

Yes, and they need it more than big companies do. Small teams don’t have the budget to clean up after a scandal. Start with one rule: assign ownership. Make one named person accountable for each AI tool your team uses. Then add real-time monitoring. Tools like Essert and ModelOp offer lightweight plans for startups. The goal isn’t perfection. It’s prevention.
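“Assign ownership” can be enforced with very little tooling. One approach is a registry that refuses to record an AI tool without exactly one named owner, and that lists tools in use that nobody owns yet. The sketch below is hypothetical: `OwnershipRegistry`, its methods, and the example names are made up for illustration. It is not a feature of Essert, ModelOp, or any other product.

```python
from typing import Dict, List


class OwnershipRegistry:
    """Hypothetical registry: each AI tool maps to exactly one accountable person."""

    def __init__(self) -> None:
        self._owners: Dict[str, str] = {}

    def assign(self, tool: str, owner: str) -> None:
        if not owner.strip():
            raise ValueError(f"{tool!r} needs a named owner")
        current = self._owners.get(tool)
        if current is not None and current != owner:
            # Refuse silent co-ownership; a handover must be explicit.
            raise ValueError(f"{tool!r} is already owned by {current}; use transfer()")
        self._owners[tool] = owner

    def transfer(self, tool: str, new_owner: str) -> None:
        if tool not in self._owners:
            raise KeyError(f"{tool!r} has no owner to transfer from")
        if not new_owner.strip():
            raise ValueError("new owner must be a named person")
        self._owners[tool] = new_owner

    def owner_of(self, tool: str) -> str:
        return self._owners[tool]  # KeyError means the tool is ungoverned

    def unowned(self, tools_in_use: List[str]) -> List[str]:
        """Tools people are using that nobody is accountable for yet."""
        return [t for t in tools_in_use if t not in self._owners]


registry = OwnershipRegistry()
registry.assign("support-chatbot", "Priya")
registry.assign("resume-screener", "Tom")
print(registry.unowned(["support-chatbot", "resume-screener", "ChatGPT (marketing)"]))
# prints: ['ChatGPT (marketing)']
```

The design requires an explicit `transfer()` call rather than letting a second `assign()` quietly succeed. That is what keeps ownership from drifting back into a committee.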

Is AI governance just for tech teams?

No. Legal, compliance, HR, marketing, and finance all need to be involved. An AI hiring tool affects HR. A customer service bot affects marketing. A financial risk model affects finance. Governance isn’t IT’s job. It’s everyone’s job.

How do I know if my governance model is working?

Look at three metrics: 1) How many AI incidents have you avoided? 2) How much faster are you deploying models now? 3) Are users and regulators satisfied? If your bias incidents dropped 37%, deployment time shrank by 22%, and audit findings fell 41%, you’re on track.
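These checks boil down to comparing a baseline period with the current one. A minimal sketch of that arithmetic follows. The metric names and before/after counts are made-up numbers chosen only to reproduce the example percentages above; they are not real benchmark data.

```python
def percent_change(before: float, after: float) -> float:
    """Signed change relative to the baseline, in percent; negative means the metric fell."""
    if before == 0:
        raise ValueError("baseline must be non-zero")
    return round((after - before) * 100 / before, 1)


# Hypothetical before/after figures, chosen only to illustrate the calculation.
baseline = {"bias_incidents": 100, "deploy_days": 50, "audit_findings": 100}
current = {"bias_incidents": 63, "deploy_days": 39, "audit_findings": 59}

for metric, before in baseline.items():
    print(f"{metric}: {percent_change(before, current[metric]):+.1f}%")
# prints:
# bias_incidents: -37.0%
# deploy_days: -22.0%
# audit_findings: -41.0%
```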

What’s the biggest mistake companies make?

Waiting until something breaks. If you’re only building governance after a scandal, you’re already behind. The best organizations start before they even deploy their first model. Governance isn’t a reaction. It’s a foundation.

Next Steps: Where to Start

If you’re just beginning:

- Identify your top three AI use cases. Which ones carry the most risk? Which ones drive the most value?

- Assign one owner for each. No committees. One person. One accountability line.

- Set up real-time monitoring. You don’t need a fancy platform. Start with a simple dashboard that tracks accuracy, latency, and user feedback.

- Train your team. Even 10 hours of basic training on bias, security, and compliance cuts mistakes by half.

- Open a sandbox. Let employees use AI-but inside a controlled environment. Track usage. Audit results. Don’t ban. Contain.
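The sandbox step and the “dynamic guardrail” example from the section on what’s coming next can both be pictured as a gateway between employees and AI tools. In that example, access is allowed only if the request matches the user’s role, the context is approved, and the action is logged. The sketch below is hypothetical: the tool IDs, roles, data classes, and `ALLOWED_ROLES` table are assumptions. A real gateway would sit behind an identity provider and a durable audit store rather than an in-memory list. The sketch also evaluates a fixed rule table per request; a truly dynamic guardrail that adjusts its rules from live risk signals is beyond its scope.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Dict, List, Set


@dataclass
class Request:
    user: str
    role: str               # assumed to come from your identity provider
    tool: str               # the AI tool the employee wants to use
    data_class: str         # hypothetical labels: "public", "internal", "customer"
    approved_context: bool  # e.g. linked to an approved ticket or workflow (assumption)


# Hypothetical policy table: which roles may send which data classes to sandboxed tools.
ALLOWED_ROLES: Dict[str, Set[str]] = {
    "public": {"analyst", "support", "engineer"},
    "internal": {"analyst", "engineer"},
    "customer": {"support"},
}
SANDBOXED_TOOLS = {"chatgpt-sandbox", "claude-sandbox"}  # hypothetical tool IDs

audit_log: List[dict] = []  # in-memory stand-in for a real audit store


def check(req: Request) -> bool:
    """Allow a request only if the tool is sandboxed, the role fits the data, and
    customer data comes with an approved context. Every decision is logged."""
    allowed = (
        req.tool in SANDBOXED_TOOLS
        and req.role in ALLOWED_ROLES.get(req.data_class, set())
        and (req.data_class != "customer" or req.approved_context)
    )
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "user": req.user,
        "tool": req.tool,
        "data_class": req.data_class,
        "allowed": allowed,
    })
    return allowed


print(check(Request("ana", "support", "chatgpt-sandbox", "customer", True)))  # True
print(check(Request("raj", "analyst", "chatgpt-sandbox", "customer", True)))  # False: role not allowed
print(check(Request("li", "engineer", "personal-chatbot", "public", False)))  # False: tool not sandboxed
print(len(audit_log))                                                         # 3
```

Logging denied requests as well as allowed ones is deliberate. The denials show which tools and data employees actually need, and that feeds back into what the sandbox should offer.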

Sheetal Srivastava

March 17, 2026 AT 06:12

Let’s be real: governance theater is the new corporate yoga. You’ve got your AI ethics council sitting in a glass-walled room sipping matcha lattes while the model churns out biased hiring filters in the basement. The EU AI Act? Pfft. That’s just a suggestion for startups who still use Excel. Real governance isn’t about policy documents; it’s about *ontological accountability*. Who owns the emergent behavior when the LLM starts drafting union strike letters for HR? Not the compliance officer. Not the data scientist. Someone’s got to answer to the Hegelian dialectic of algorithmic oppression.

And don’t even get me started on ‘explainability.’ You think a transformer layer can be ‘explained’ like a calculus theorem? It’s a black box with a PhD in emotional manipulation. We need *interpretive frameworks*, not dashboards. The NIST framework? Cute. It’s like putting a Band-Aid on a hemorrhage.

Shadow AI? Of course employees are using ChatGPT. Why? Because your corporate AI is a 14-month-old toddler with a spreadsheet. They’re not rebels; they’re *pragmatic epistemologists*. You want to contain it? Build a *hermeneutic sandbox* with differential privacy layers, not some clunky proxy server. Otherwise, you’re just enabling the next Deepfake Scandal™.

And let’s not romanticize ‘accountability.’ Who’s the ‘owner’? The mid-level manager who got promoted because they knew how to spell ‘transformer’? That’s not ownership; that’s sacrificial lambing. Real ownership requires legal liability, equity clawbacks, and mandatory ethics re-certification. Otherwise, it’s just performative compliance dressed in Agile jargon.

Anand Pandit

March 18, 2026 AT 17:32

Hey, I really appreciate this breakdown; it’s one of the clearest takes I’ve seen on AI governance lately.

I work at a small health tech startup, and we started with just one rule: one person owns every AI tool we use. No committees. No endless meetings. Just a name on a Slack channel and a quarterly review. It’s crazy how much faster we moved after that. We went from 6-week deployments to under 10 days. And guess what? Our error rates dropped too.

Turns out, people rise to the occasion when they know they’re accountable. Not because they’re scared, but because they care. We also set up a simple sandbox with filtered access to ChatGPT. Employees love it. No one’s sneaking in rogue prompts anymore. Just clean, tracked, safe usage.

Start small. Own it. Monitor it. You don’t need a whole team or a $500k platform. Just clarity. And a little courage.

Reshma Jose

March 19, 2026 AT 09:42

Ugh, I’m so tired of hearing about ‘councils’; they’re just bureaucracy in a hoodie.

We tried the council model at my last job. 12 people. Monthly meetings. Half the time people showed up late or didn’t read the docs. Then we switched to accountability: one PM per model. Boom. Deployments cut from 18 days to 6. No one got fired. No one got promoted. But everyone started acting like adults.

And shadow AI? Yeah, employees are using it. So what? Ban it and they’ll use personal phones. Contain it and they’ll give you feedback on what actually works. We gave them a locked-down version of Claude with audit logs. Now we’re using their feedback to improve our internal tools. Win-win.

Stop over-engineering. Stop over-politicking. Just pick one person, give them a tool, and let them run with it. The rest is noise.

rahul shrimali

March 20, 2026 AT 08:22

Eka Prabha

March 21, 2026 AT 02:01

Let’s not pretend this is about ethics. It’s about liability. Corporations don’t care if AI is fair; they care if it’s legally defensible. The ‘accountability model’? That’s just a legal shield. Someone gets sacrificed when things go wrong. That’s not governance; that’s scapegoating dressed up in buzzwords.

And ‘dynamic guardrails’? Sounds like a corporate fantasy. You think AI can self-regulate? It’s trained on human data. Human data is messy. Biased. Violent. You’re asking an algorithm to police itself based on the very chaos it was trained on. That’s not innovation. That’s a time bomb with a UI.

Who’s auditing the auditors? Who’s monitoring the monitoring dashboards? And who’s going to pay the lawyers when the AI starts writing threatening emails to customers? You think a ‘sandbox’ fixes that? Please. We’re building a nuclear reactor and calling it a toaster.

And don’t get me started on ‘mature governance.’ Only 22%? That’s because the other 78% aren’t stupid. They know this whole system is a smokescreen. The real goal isn’t safety. It’s avoiding class-action lawsuits. And if you believe otherwise, you’re not naive; you’re complicit.

Bharat Patel

March 22, 2026 AT 21:28

It’s funny how we treat AI governance like it’s a new problem. But really, it’s just the same old question: who is responsible when a tool becomes an actor?

Think about the printing press. The church didn’t ban it. They didn’t form committees. They didn’t write 50-page policy manuals. They adapted. They rethought authority. They let new voices emerge, even if they were dangerous.

Today, we’re terrified of the AI’s voice. So we build walls. Committees. Dashboards. But the real question isn’t how to control it-it’s how to *listen* to it. What does the AI reveal about us? About our biases? Our fears? Our laziness?

Governance shouldn’t be about control. It should be about reflection. The best AI systems don’t just make decisions-they make us better at making decisions. Not because they’re perfect. But because they’re mirrors.

Maybe the real ‘accountability’ isn’t assigning blame. It’s asking: what did this AI expose about our organization that we were too afraid to see?

Bhagyashri Zokarkar

March 23, 2026 AT 05:20

Ok, so like, I read this whole thing and honestly it’s just a lot of corporate nonsense.

They say accountability, but really they just want someone to take the fall when the AI says something racist or leaks data.

And the sandbox thing? Yeah, right. Like employees are gonna use some locked-down version when they can just paste into ChatGPT on their phone.

And don’t even get me started on explainability. You think a doctor cares if the AI used attention weights or whatever jargon they use?

They just want to know if the patient is gonna die or not.

And governance theater? Yeah, that’s what it is.

Companies spend millions on this stuff so they can say they’re responsible, while the real problem is they don’t hire enough humans to double-check the AI.

It’s all just noise.

And the 22% that have mature governance? Probably just have a really good PR team.

We don’t need more policies. We need more people.