Tag: tokenization

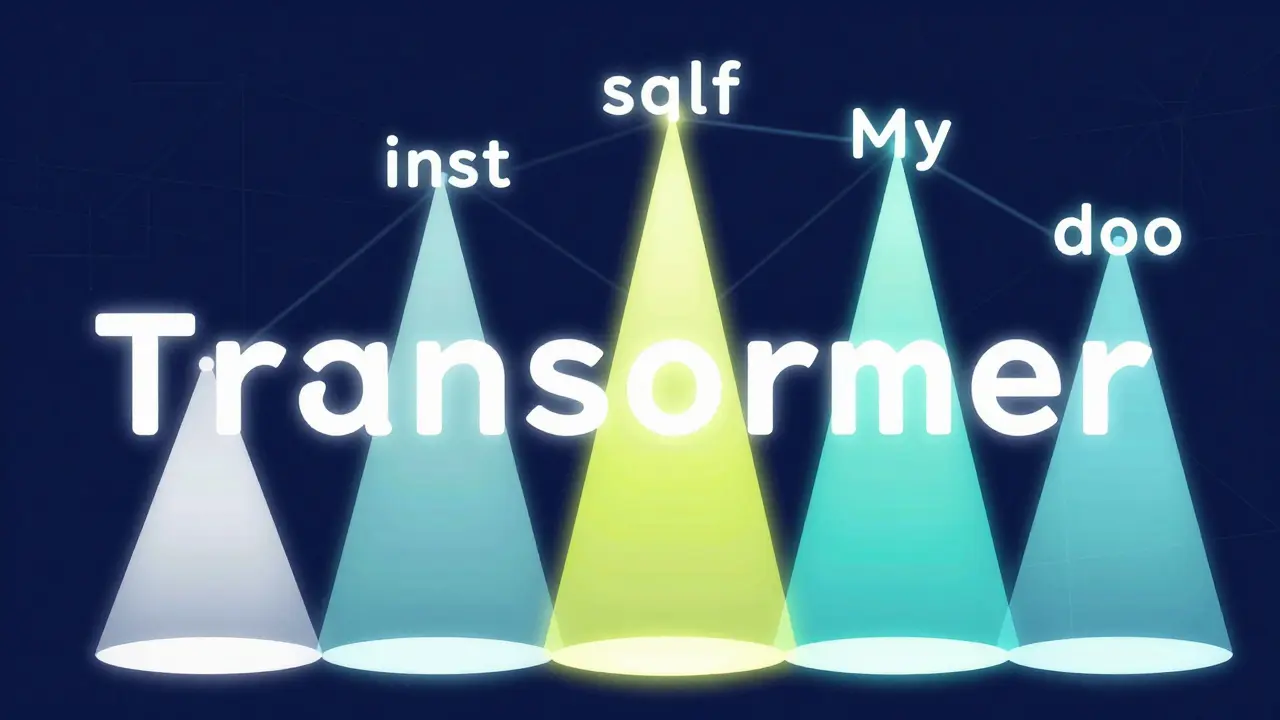

How Large Language Models Work: Core Mechanisms and Capabilities

Explore the inner workings of Large Language Models, from Transformer architecture and self-attention to tokenization and the battle against hallucinations.

How Vocabulary Size in LLMs Affects Accuracy and Performance

Vocabulary size in large language models directly impacts accuracy, multilingual performance, and efficiency. New research shows that models with larger vocabularies (100k-256k tokens) outperform traditional 32k-vocabulary models, especially on code and non-English tasks.
