Generative AI doesn’t guess. It predicts. And when it predicts wrong, it doesn’t say "I don’t know." It confidently makes things up, invents citations, and serves up half-truths dressed like facts. This is the hallucination problem. You ask for the best restaurant in Cambridge, and it picks one in England when you meant Massachusetts. You ask for a summary of a medical study, and it cites a paper that never existed. You’re not alone. Millions of people are using AI tools every day without realizing how easily they’re being misled.

Here’s the truth: you can’t fix AI. But you can fix your prompts.

Most people treat AI like a search engine. Type in a question, get an answer. But AI isn’t a database. It’s a pattern-matching machine that stitches together fragments of what it’s seen before. If you give it vague input, you’ll get vague, unreliable output. The difference between a useful answer and a dangerous lie often comes down to how you ask the question.

Stop Being Vague. Start Being Specific.

"Write a report" is a terrible prompt. So is "Tell me about diabetes." These are empty shells. AI fills them with whatever seems plausible - which often means it makes stuff up.

Try this instead: "Summarize the 2023 CDC guidelines on Type 2 diabetes management for adults over 65, focusing on medication interactions with blood pressure drugs. List only sources from peer-reviewed journals published after 2020. Exclude lifestyle advice."

That’s not just a prompt. It’s a blueprint. It tells the AI what to do, who it’s for, what sources to use, and what to ignore. The more specific you are, the less room the AI has to hallucinate.
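One way to make that blueprint repeatable is to keep its four parts as separate fields and assemble the prompt from them. The sketch below is only an illustration: `PromptSpec` and `build_prompt` are hypothetical names, not part of any library, and the exact wording of the assembled prompt is an assumption.

```python
from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """Hypothetical container for the four parts of the blueprint."""
    task: str                                      # what to do
    audience: str                                  # who it is for
    sources: list = field(default_factory=list)    # what sources to use
    exclude: list = field(default_factory=list)    # what to ignore


def build_prompt(spec):
    """Assemble one prompt string; empty sections are left out."""
    lines = [f"Task: {spec.task}", f"Audience: {spec.audience}"]
    if spec.sources:
        lines.append("Use only these sources: " + "; ".join(spec.sources))
    if spec.exclude:
        lines.append("Exclude: " + "; ".join(spec.exclude))
    return "\n".join(lines)


spec = PromptSpec(
    task="Summarize the 2023 CDC guidelines on Type 2 diabetes management, "
         "focusing on medication interactions with blood pressure drugs.",
    audience="Adults over 65",
    sources=["peer-reviewed journals published after 2020"],
    exclude=["lifestyle advice"],
)
prompt = build_prompt(spec)
print(prompt)
```

Keeping the fields separate makes a missing piece easy to spot: an empty `sources` or `exclude` list is a visible gap rather than something buried in a paragraph.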

Real-world example: A team at UCSF asked AI to build a model predicting preterm birth using data from 1,000 pregnant women. One prompt said: "Write code to identify risk factors for preterm birth from this dataset." Result? Garbage. Another prompt said: "Use Python and scikit-learn to train a logistic regression model. Include only variables from the DREAM Challenge dataset: gestational age, maternal BMI, prior preterm birth, and preeclampsia diagnosis. Output AUC score and feature importance. Do not use neural networks." The second prompt generated working code in minutes - code that matched human-built models. The difference wasn’t the AI. It was the prompt.

Use Constraints Like a Fence

AI doesn’t have ethics. It doesn’t have common sense. It just follows patterns. That’s why you need to build fences around what it can and can’t do.

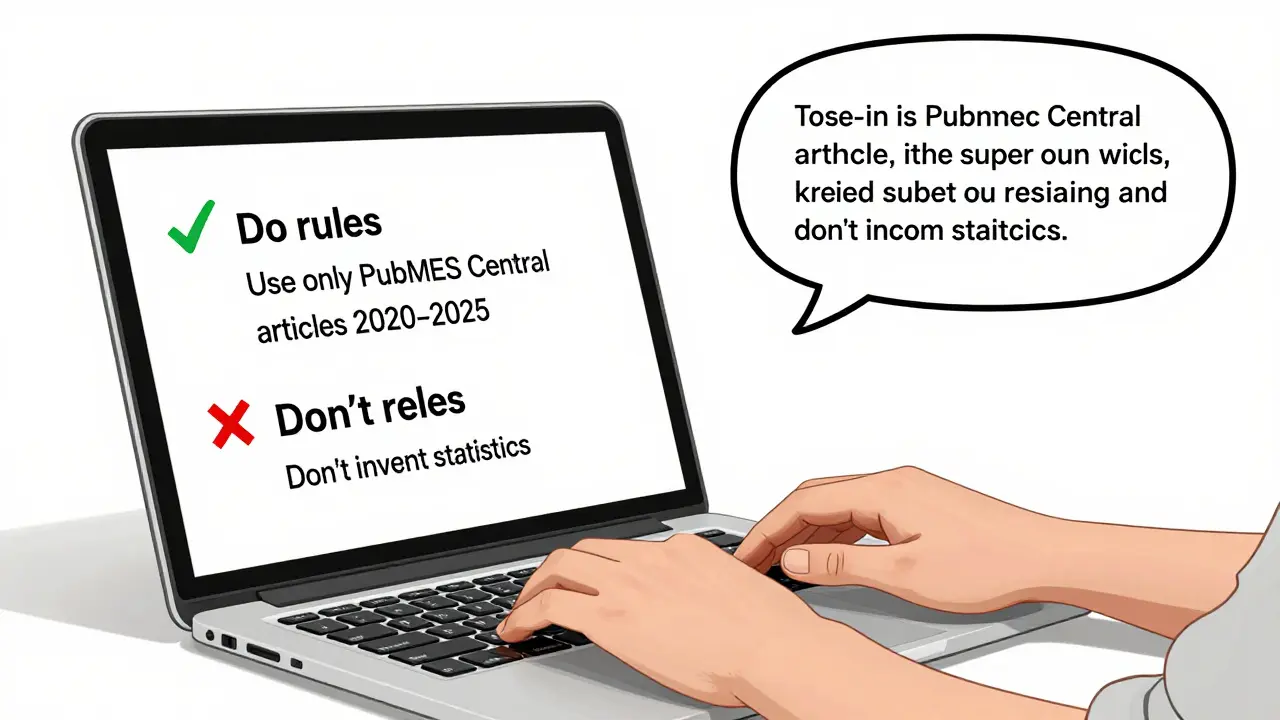

Use "Do" and "Don’t" statements like rules in a game:

- Do: Use only data from PubMed Central articles published between 2020 and 2025.

- Do: Format the answer as a bulleted list with no more than five items.

- Don’t: Invent statistics or estimate values not present in the source data.

- Don’t: Mention any brand names unless explicitly provided.

These aren’t suggestions. They’re guardrails. In biomedical research, one team found that prompts with explicit "don’t" rules reduced fabricated citations by 68%. Why? Because the AI had a clear boundary. It couldn’t wander into "probably true" territory - it had to stay inside the lines.

Even simple constraints help. If you ask for a quote, say: "Quote the exact sentence from the source text. Do not paraphrase." This forces the AI to retrieve, not rewrite. It’s the difference between hearing a witness and reading a fanfiction version of their testimony.
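Constraints are easier to enforce when the checkable ones are tested after the answer comes back. The sketch below renders "Do" and "Don’t" rules into prompt lines and then checks the five-item limit on a reply. The helper names `render_rules` and `count_bullets` are hypothetical, and the sample reply is made up for illustration.

```python
def render_rules(do, dont):
    """Turn two lists of rules into 'Do:' / 'Don't:' lines for a prompt."""
    lines = [f"- Do: {rule}" for rule in do]
    lines += [f"- Don't: {rule}" for rule in dont]
    return "\n".join(lines)


def count_bullets(answer):
    """Count lines that start with a '-' or '*' bullet marker."""
    return sum(
        1 for line in answer.splitlines()
        if line.lstrip().startswith(("- ", "* "))
    )


rules = render_rules(
    do=["Format the answer as a bulleted list with no more than five items."],
    dont=["Invent statistics or estimate values not present in the source data."],
)

# Made-up model reply, used only to exercise the check.
sample_answer = "- item one\n- item two\n- item three"
within_limit = count_bullets(sample_answer) <= 5

print(rules)
print("Within five-item limit:", within_limit)
```

Only format rules can be checked this mechanically. A rule such as "don’t invent statistics" still needs a comparison against the source data.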

Act Like a Professional - Not a Chatbot

When you say "act as a doctor," the AI doesn’t become a doctor. But it does shift its internal weighting. It starts pulling from medical training data, prioritizing clinical language, and avoiding casual phrasing.

Try: "Act as a senior biomedical data scientist reviewing a draft manuscript. Critique the statistical methods used in the study. Point out any inconsistencies with the DREAM Challenge protocols. Use formal academic tone. Do not suggest new experiments."

This works because AI responds to role cues. It’s not pretending - it’s accessing a different set of patterns. A study from Wayne State showed that prompts framing the AI as a "peer reviewer" produced more accurate, critical feedback than prompts that just said "review this."

Same goes for legal, financial, or technical roles. "Act as a patent attorney" will make the AI focus on precise terminology, jurisdictional limits, and claim structure. "Act as a financial auditor" will make it flag inconsistencies in ratios and trends. You’re not tricking it. You’re guiding it to the right mental library.

Extract Exact Answers - No Paraphrasing

One of the biggest risks in AI output is paraphrasing. The AI takes your source, rewords it, and slips in a tiny lie. You think you’re getting a summary. You’re actually getting a rewritten version with errors baked in.

To get extractive answers - real quotes, real numbers, real references - you need to lock it down:

- "Quote the exact sentence from the original text that describes the primary outcome."

- "List the three most frequent comorbidities mentioned in the study. Do not add any that aren’t explicitly stated."

- "Copy the exact wording of the inclusion criteria from Table 2. Do not summarize."

These prompts force the AI to behave like a copy machine, not a storyteller. In the UCSF study, prompts with "copy exactly" instructions reduced factual errors in extracted data by 72% compared to open-ended summaries.

And here’s a pro tip: if you’re pulling quotes from a long document, give the AI the text itself. Don’t just say "summarize this paper." Paste the relevant paragraph. Say: "From this text, extract the sentence that defines the primary endpoint. Highlight it in bold."
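When you have pasted the source text yourself, you can also test whether a returned "quote" really is a quote. The sketch below is a minimal check, assuming plain-text input: it collapses whitespace and looks for the quote as an exact substring. `normalize` and `is_verbatim` are hypothetical helper names, and the source paragraph is invented for the example.

```python
import re


def normalize(text):
    """Collapse runs of whitespace so line wraps do not cause false mismatches."""
    return re.sub(r"\s+", " ", text).strip()


def is_verbatim(quote, source):
    """Return True only if the quote appears word for word in the source."""
    q = normalize(quote)
    return bool(q) and q in normalize(source)


# Made-up source paragraph, for illustration only.
source_text = (
    "The primary endpoint was change in HbA1c\n"
    "from baseline to week 26."
)

exact = "The primary endpoint was change in HbA1c from baseline to week 26."
reworded = "The main endpoint was the HbA1c change at week 26."

print(is_verbatim(exact, source_text))
print(is_verbatim(reworded, source_text))
```

The check is deliberately strict: any rewording fails, which is the point of an extractive prompt. It does not handle differences in quotation marks or hyphenation, so a failed match is a reason to look, not proof of fabrication.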

Iterate Like a Conversation

No one writes the perfect prompt on the first try. Even experts don’t. The best users treat AI like a junior colleague - one who’s smart but needs clear direction.

Start with a basic prompt. Get the output. Then say:

- "That’s close. I need the data broken down by age group."

- "You mentioned a study from 2022. Can you find the DOI?"

- "I don’t see the confidence interval. Can you add it?"

- "You used 'likely' - that’s too vague. Use exact percentages from the source."

This feedback loop is where accuracy happens. Each correction teaches the AI what you value. It’s not magic. It’s training - with you as the instructor.
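Part of this loop can be prompted by a simple scan of the reply. The sketch below flags vague words and drafts the follow-up message for you. The word list in `HEDGE_WORDS` is an assumption to adapt to your field, `find_hedges` and `follow_up` are hypothetical names, and the sample reply is made up.

```python
import re

# Assumed list of vague words; extend it for your own domain.
HEDGE_WORDS = ("likely", "probably", "roughly", "around", "many", "some")


def find_hedges(answer):
    """Return the hedge words present in the answer, in list order."""
    found = []
    for word in HEDGE_WORDS:
        if re.search(rf"\b{word}\b", answer, flags=re.IGNORECASE):
            found.append(word)
    return found


def follow_up(answer):
    """Build a correction message, or return None if nothing vague was found."""
    hedges = find_hedges(answer)
    if not hedges:
        return None
    quoted = ", ".join(f'"{w}"' for w in hedges)
    return (
        f"You used {quoted}. That is too vague. "
        "Use exact percentages from the source."
    )


# Made-up model reply, used only to exercise the check.
reply = "Patients over 65 are likely to respond, and some report side effects."
print(follow_up(reply))
```

A scan like this only catches wording. Whether the corrected numbers are right is still something to verify against the source.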

One researcher at Harvard described it this way: "I used to think I needed to get it right the first time. Now I know I need to get it right in five tries. The AI doesn’t mind. I do."

What You Can’t Fix

Even the best prompts can’t fix broken AI. Harvard’s guidelines are clear: "AI-generated content can be inaccurate, misleading, entirely fabricated, or offensive." No amount of prompting eliminates that risk.

That’s why human review isn’t optional - it’s the final filter. Always check:

- Are the sources real? (Search the DOI or title yourself.)

- Do the numbers add up? (Cross-check with the original data.)

- Is the tone appropriate? (Does it sound like a scientist, or a marketing bot?)

There’s no substitute for skepticism. The most accurate AI in the world still hallucinates. The difference between success and disaster is whether you’re watching.
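The first item on that checklist can be made harder to skip by pulling every DOI out of the AI’s answer and turning it into a lookup task. The sketch below assumes DOIs follow the usual `10.<registrant>/<suffix>` shape. `extract_dois` and `review_checklist` are hypothetical names, and both DOIs in the sample text are placeholders. Matching the pattern says nothing about whether a reference exists; the lookup is still done by a person.

```python
import re

# Pattern based on the common "10.<registrant>/<suffix>" DOI shape.
DOI_PATTERN = re.compile(r"\b10\.\d{4,9}/[-._;()/:A-Za-z0-9]+")


def extract_dois(text):
    """Return unique DOI-like strings in order of first appearance."""
    seen = []
    for match in DOI_PATTERN.findall(text):
        doi = match.rstrip(".,;)")  # drop trailing sentence punctuation
        if doi not in seen:
            seen.append(doi)
    return seen


def review_checklist(text):
    """One line per DOI, to be ticked off by a human after looking it up."""
    return [
        f"[ ] Look up https://doi.org/{doi} and confirm title and authors"
        for doi in extract_dois(text)
    ]


# Made-up AI output; both DOIs are placeholders, not real references.
ai_output = (
    "See Smith et al. (doi:10.1234/example.2023.001). "
    "A second study (10.5678/placeholder-42) agrees, "
    "as does 10.1234/example.2023.001."
)
for line in review_checklist(ai_output):
    print(line)
```

Stripping trailing punctuation is a simplification: a DOI that genuinely ends in a closing parenthesis would be cut short, so treat an unresolved link as a prompt to check the original string.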

Meta-Prompts: Ask the AI How to Ask Better

Stuck? Don’t guess. Ask the AI for help.

Try: "What information do you need from me to give me an accurate answer about this topic?" or "How should I phrase this prompt to get the most reliable results?"

This meta-prompting technique works because AI often knows what it can’t do. It can tell you: "I need the full text of the study," or "I can’t access data from 2026 because my training ends in 2024."

It’s like asking a librarian: "What do I need to bring to find this book?" You’re not just getting an answer - you’re learning how to ask better next time.

One team at UCSF used this approach to cut their prompt development time in half. Instead of guessing, they asked the AI to coach them. The result? More accurate outputs, faster.
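If you use meta-prompts often, a small template saves retyping. The sketch below wraps any draft prompt in the two coaching questions from this section. `META_TEMPLATE` and `make_meta_prompt` are hypothetical names, and the surrounding wording is an assumption rather than a tested formula.

```python
META_TEMPLATE = (
    "I plan to send you the prompt below. Before answering it, tell me:\n"
    "1. What information do you need from me to answer it accurately?\n"
    "2. How should I rephrase it to get the most reliable results?\n"
    "\n"
    "Draft prompt:\n"
    "{draft}"
)


def make_meta_prompt(draft):
    """Wrap a draft prompt in the two coaching questions."""
    return META_TEMPLATE.format(draft=draft.strip())


meta = make_meta_prompt("  Tell me about diabetes.  ")
print(meta)
```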

Final Rule: Accuracy Is a Practice, Not a Feature

Prompting for accuracy isn’t a trick. It’s a skill. Like writing a good email or designing a spreadsheet. It takes practice. It takes patience. And it takes the willingness to admit: "I don’t trust this yet. Let me try again."

Forget the hype. The real power of AI isn’t in how smart it is. It’s in how much you can teach it - one precise, constrained, quoted, corrected prompt at a time.

And if you’re using AI for anything that affects decisions - health, law, finance, policy - your job isn’t to believe what it says. It’s to verify it. Every time.

Can prompting eliminate AI hallucinations completely?

No. Prompting can dramatically reduce hallucinations, but it can’t eliminate them. Even the most carefully crafted prompts can still trigger fabricated citations, incorrect statistics, or misleading summaries. AI lacks true understanding - it predicts patterns, not truth. Human verification remains essential for any critical use case.

What’s the difference between a good prompt and a great prompt?

A good prompt is clear and specific. A great prompt adds constraints, role definition, source limits, and extraction rules. For example: "Act as a clinical researcher. Extract the exact inclusion criteria from this paper. List only what’s stated in Table 1. Do not infer or summarize." Great prompts don’t leave room for interpretation - they force precision.

Do I need to use examples in every prompt?

Not every time, but they help - especially for complex tasks. If you’re asking for a legal summary, include a sample of the style you want. If you need a financial report format, paste a snippet. Examples give the AI a concrete target. But avoid copyrighted material. Use public data or your own templates.

Why do some AI tools respond better to prompts than others?

Different models are trained on different data and optimized for different tasks. Some are tuned for creativity, others for code. In the UCSF study, only 4 out of 8 AI tools produced accurate prediction models from the same prompts. The others generated code that didn’t run or used invalid methods. The tool matters - but so does how you use it. A well-crafted prompt can unlock better performance even on weaker models.

Is it safe to use AI for medical or legal research?

Only if you verify everything. AI can speed up literature reviews, extract data, and draft summaries - but it can’t replace clinical judgment or legal analysis. Always cross-check AI output with original sources. Never rely on AI for final decisions in high-stakes fields. Use it as a tool, not a source.

How do I know if the AI is giving me a quote or making something up?

Ask for the source. If it gives you a DOI, title, or page number, search for it independently. If it says "according to a 2024 study" but can’t name the journal or authors, it’s likely fabricated. Real quotes come with verifiable anchors. If you can’t trace it back to the original, treat it as suspect.

Can I use AI to help me write better prompts?

Yes. Ask the AI: "How can I improve this prompt to get more accurate results?" or "What details are missing for this task?" This meta-prompting technique helps uncover blind spots. Many users find their best prompts after one or two rounds of feedback from the AI itself.

Use AI like a smart assistant - not an oracle. It’s fast, but fallible. Your job isn’t to trust it. It’s to train it, test it, and never stop checking.

Eva Monhaut

February 24, 2026 at 08:38

This is the kind of post that makes me want to print it out and tape it to my monitor. The difference between a vague prompt and a surgical one is the difference between a hammer and a scalpel. I used to think AI was just being stubborn. Turns out I was just being lazy with my instructions. Now I start every prompt with: "What exactly do you need?" and "What should you ignore?" It’s changed everything.

One time I asked for "trends in diabetes care" and got a novel. Now I say: "List the three most recent ADA guidelines on insulin dosing for Type 2 patients over 70. Quote the exact sentence from each. Exclude lifestyle advice. No paraphrasing." Output was clean. No hallucinations. Just facts. It’s not the AI that’s broken - it’s the way we talk to it.

mark nine

February 24, 2026 at 12:10

Do this: ask for a quote. Then ask for the source. Then verify it. Repeat. That’s your workflow now. No exceptions.

Tony Smith

February 25, 2026 at 14:34

One must acknowledge, with the utmost gravity, that the fundamental architecture of current generative models is inherently devoid of epistemic humility. The system does not possess the capacity for doubt; it only optimizes for plausibility. Therefore, to mitigate the risk of epistemic contamination, one must impose syntactic constraints of unparalleled precision. One must, in essence, become the gatekeeper of linguistic boundaries - not merely instruct, but legislate.

"Do not infer. Do not extrapolate. Do not embellish. Cite only what is explicitly present. Format as bullet points. No more than five. No exceptions."

These are not suggestions. They are constitutional amendments for artificial cognition. The efficacy of such an approach is not anecdotal - it is empirically demonstrable. A 68% reduction in fabricated citations is not a coincidence. It is the result of enforced lexical discipline.

And yet, we persist in treating AI as a conversational partner rather than a highly volatile instrument. This is akin to handing a nuclear reactor remote to a toddler who says "please".

Rakesh Kumar

February 26, 2026 at 01:13

Bro, this is so true. I was using AI to draft a research summary last week - totally trusting it. Got a fake paper with a DOI that led to a cat video. I almost sent it to my advisor. My heart stopped. Since then, I’ve been screaming at the screen: "QUOTE THE EXACT LINE!" "NO PARAPHRASING!" "WHERE’S THE SOURCE?!"

Now I paste the actual text into the prompt. I don’t ask for summaries. I say: "Copy the third paragraph. Highlight it. Don’t change a word." It’s weird. It’s awkward. But it works. AI doesn’t understand context. It understands commands. Treat it like a very smart, very stupid intern who reads every word you type - and then ignores half of them.

Also - meta-prompting is magic. Ask the AI: "How would you mess this up?" Then fix it. That’s how I learned. Not from tutorials. From failure. And a lot of yelling.

Bill Castanier

February 26, 2026 at 01:50

Constraints are everything. "Do this. Don’t do that. Quote. Don’t summarize. Use this source. Ignore that."

Simple. Clear. Non-negotiable.

It’s not about complexity. It’s about control. The AI doesn’t care if you’re polite. It cares if you’re precise.

Ronnie Kaye

February 27, 2026 at 13:55

Oh wow. So the AI isn’t lying. It’s just… really bad at reading between the lines? Like a toddler who thinks "tell me about dogs" means "describe every dog you’ve ever seen, including the one from that one dream you had in 2012"?

I just realized I’ve been treating it like a human. It’s not. It’s a thesaurus with a confidence complex.

Now I say: "You are a photocopier. Your only job is to copy. Do not interpret. Do not improve. Do not add. Just copy."

Works. Every. Time.

Priyank Panchal

February 28, 2026 at 13:00

You’re all wasting time. The real problem isn’t the prompt. It’s the people who think AI can replace critical thinking. You don’t fix hallucinations with better wording. You fix them by not using AI for anything that matters. Medical? Legal? Policy? No. Not even as a "tool." It’s a time bomb with a UI. Stop pretending otherwise.

I’ve seen too many researchers get burned. One guy cited a fake study in a grant proposal. Got audited. Lost funding. Career ruined. All because he trusted a machine that has no concept of truth.

Stop optimizing prompts. Start questioning why you’re using AI at all.

Ian Maggs

March 2, 2026 at 09:26

There is, perhaps, a deeper ontological tension here - one that transcends the mere mechanics of prompting. The AI, as a system, operates within a probabilistic landscape of linguistic tokens, bereft of referential grounding; it does not know truth, only correlation. And yet, we project onto it the human desire for coherence, for narrative resolution - we want it to be a sage, a librarian, a confidant.

But it is neither. It is a mirror - and what it reflects is not the world, but the sum of our collective, often contradictory, textual remains.

Therefore, the act of prompting is not merely technical - it is a philosophical act. To constrain is to impose meaning. To quote is to demand fidelity. To say "do not paraphrase" is to say: "I do not trust the pattern. I trust the artifact."

And in that act - in the quiet insistence on literalism - we reclaim a sliver of epistemic sovereignty from the machine.

Perhaps, then, the most accurate prompt is not the most detailed… but the most humble.