When you ask a large language model a complex question-like "What are the latest FDA guidelines on insulin dosing for elderly patients with kidney impairment?"-it doesn’t just pull answers from memory. It reaches out to external documents, scans hundreds of them, and picks the most relevant pieces to build its response. But here’s the problem: the first round of document searches often gets it wrong.

Vector search, the go-to method for finding documents, works by matching numerical embeddings. It’s fast. But it’s also shallow. A document might contain the words "insulin," "elderly," and "kidney," and score high-even if the actual discussion is about diabetes in teenagers with no kidney issues. That’s not useful. That’s noise. And when this noise gets fed into the language model, the answer becomes unreliable, sometimes dangerously so.

This is where document re-ranking comes in. It’s not a flashy new technique. It’s a necessary correction. Think of it like a second opinion. After an initial search pulls back 15-20 documents, a smarter model steps in to re-evaluate each one-not just for keywords, but for contextual relevance. It asks: Does this actually answer the question? Is the information accurate, specific, and positioned in the right context?

How Re-Ranking Works: Beyond Vector Similarity

Vector search treats documents and queries as points in a high-dimensional space. If they’re close, they’re deemed similar. Simple. Fast. But flawed.

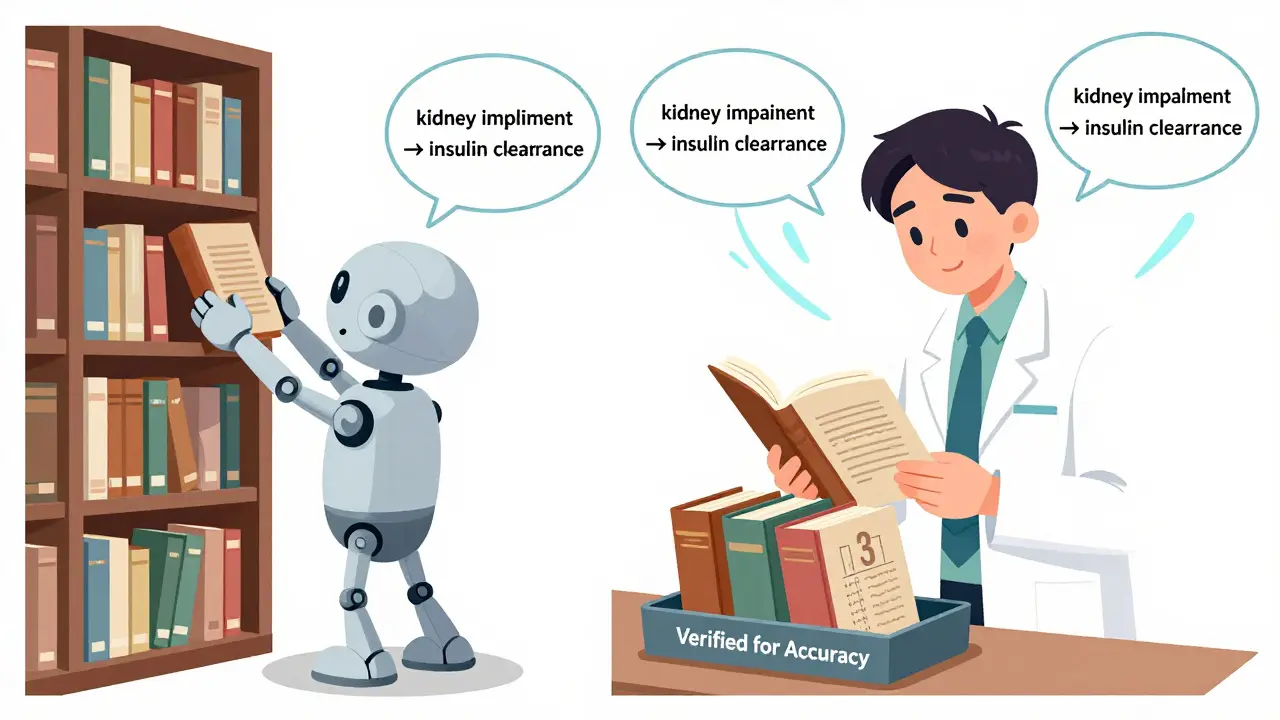

Re-ranking flips this. Instead of relying on precomputed vectors, it uses a cross-encoder transformer-a type of AI model that reads the full query and the full document together, word by word. It doesn’t compress meaning into a number. It understands the relationship between them. It sees that "kidney impairment" in one paragraph and "insulin clearance" in another are deeply connected, even if they’re not near each other in the text.

Let’s say you’re searching for "How does metformin affect liver enzymes in patients on dialysis?". An initial vector search might surface a 50-page clinical trial paper because it contains "metformin," "liver," and "dialysis." But the paper’s main focus is on cardiovascular outcomes. The section on liver enzymes is one sentence buried on page 37. Vector search misses that. Re-ranking doesn’t. It reads the whole thing, notices the sparse mention, and still ranks it high because the context matches your intent.

This is why re-ranking reduces hallucinations. It doesn’t just find documents that look similar-it finds documents that mean what you’re asking.

The Two-Stage Pipeline: Speed Meets Precision

Re-ranking isn’t meant to replace vector search. It complements it. The best systems use a two-stage pipeline:

- Stage 1: Fast retrieval-Use vector search or BM25 to pull back 15-20 documents. This ensures you don’t miss the needle in the haystack.

- Stage 2: Deep re-ranking-Feed those 15-20 documents into a cross-encoder model. It scores each one on relevance. The top 3-5 move forward to the language model.

Why not re-rank all documents? Because cross-encoders are slow. Each query-document pair requires a full forward pass through the model. Doing this on 100,000 documents would take minutes. Doing it on 20 takes milliseconds. That’s the trade-off: sacrifice recall at the first stage (which is fine, since we’re pulling a wide net), and gain precision at the second.

Studies show this approach improves answer accuracy by 18-32% compared to using top-k vector results alone. In enterprise settings-like legal discovery, medical diagnosis support, or financial compliance-those percentages translate into real risk reduction.

JudgeRank: The Human-Like Approach

Most re-rankers are statistical. JudgeRank is different. It mimics how a human expert would evaluate documents.

Instead of just scoring relevance, JudgeRank breaks the task into three steps:

- Query analysis: What’s the real question behind the words? Is the user looking for a definition, a comparison, or a recommendation?

- Document analysis: Extract a summary of each document that’s tailored to the query. Not just keywords-intent-aware summaries.

- Relevance judgment: Decide if the document answers the question fully, partially, or not at all. Then explain why.

This isn’t just a score. It’s reasoning. And it works. On the BRIGHT benchmark-a test designed for real-world, ambiguous queries-JudgeRank outperformed fine-tuned models, even without training on those specific tasks. It generalized. That’s rare.

For systems handling complex, multi-layered questions (like "Compare the long-term side effects of GLP-1 agonists in patients with history of pancreatitis"), this kind of reasoning matters. You’re not just retrieving documents. You’re filtering for usable knowledge.

Why Re-Ranking Matters for Factuality

LLMs hallucinate because they’re given bad context. Re-ranking fixes that at the source.

Take a medical RAG system that pulls from clinical guidelines, journal articles, and hospital protocols. Without re-ranking, it might include:

- A 2019 review paper that’s been superseded by 2025 guidelines

- A case study with a single patient, presented as general advice

- A document where the word "contraindicated" appears, but the actual recommendation is "use with caution"

Re-ranking detects these pitfalls. Cross-encoders can spot temporal mismatch, sample size bias, and nuanced language shifts. A document that says "insulin should be avoided" in a section about type 1 diabetes-but your query is about type 2-is flagged as low relevance.

This isn’t theoretical. A 2025 internal study at a major U.S. health network showed that adding re-ranking reduced incorrect medical advice from 1 in 7 responses to 1 in 23. That’s a 65% drop in harmful outputs.

Trade-Offs: Cost, Complexity, and When Not to Use It

Re-ranking isn’t magic. It has limits.

- Computational cost: Each re-rank inference takes 10-50x longer than vector search. You need GPU resources. Cloud costs add up.

- Latency: If your app needs sub-200ms responses, re-ranking might push you over.

- Overkill for simple queries: If users are asking "What is the capital of France?" you don’t need a cross-encoder. Just return the top result.

Re-ranking shines when:

- Documents are long, dense, or multi-topic

- Queries are nuanced or require inference

- Accuracy is more important than speed

- You’re handling regulated domains: healthcare, finance, law

For consumer chatbots or FAQ bots, skip it. For enterprise knowledge systems, it’s becoming mandatory.

What’s Next: Multimodal and Domain-Specific Rerankers

Re-ranking is evolving beyond text.

New models now handle PDFs with tables, scanned forms, and even medical imaging reports. A re-ranker can now compare a text query like "Find reports showing elevated bilirubin after chemo" with a PDF that contains both narrative text and a lab table. It doesn’t just scan words-it reads tables, spots trends, and links them.

Specialized rerankers are also emerging. One model fine-tuned on legal contracts outperforms general-purpose ones by 27%. Another, trained on biomedical literature, understands gene symbols, drug nomenclature, and clinical trial phases better than any off-the-shelf model.

The future isn’t one-size-fits-all. It’s context-aware rerankers tailored to your data, your domain, and your risk tolerance.

Implementation Checklist

If you’re building or improving a RAG system, here’s how to get started:

- Start with vector search. Use a proven model like OpenAI’s text-embedding-3-small or NVIDIA’s NV-Embed.

- Retrieve 15-20 documents per query. Don’t go lower than 10; don’t go higher than 30.

- Integrate a cross-encoder reranker. Try BAAI/bge-reranker-large (open-source) or NVIDIA’s NeMo Retriever.

- Test on real queries-not synthetic ones. Use your own user logs.

- Measure improvement: Track answer accuracy, hallucination rate, and user satisfaction.

- Optimize cost: Cache reranking results for repeated queries. Use quantization if latency allows.

Don’t try to re-rank everything. Re-rank only what matters.

Is document re-ranking the same as fine-tuning a language model?

No. Fine-tuning changes how the LLM generates responses. Re-ranking changes what documents the LLM sees before generating. They’re complementary. You can use re-ranking with any LLM, even unmodified ones like GPT-4 or Llama 3.

Can I use re-ranking with open-source models?

Yes. Models like BAAI/bge-reranker-v1-mistral, Cohere’s rerank-multilingual-v3, and Microsoft’s MiniLM-L12-v2 are all open-source and work well. You don’t need expensive commercial APIs to get strong results.

Does re-ranking help with multilingual queries?

Yes, if you use a multilingual reranker. Models like Cohere’s rerank-multilingual-v3 support over 100 languages. They understand semantic relationships across languages-not just keyword translation. So a query in Spanish can accurately re-rank documents in French or German.

How much does re-ranking improve accuracy?

On benchmarks like BEIR and BRIGHT, re-ranking typically improves answer accuracy by 18-32%. In real enterprise use cases-especially in healthcare and legal domains-improvements of 25-65% have been observed, depending on document complexity and query specificity.

Should I use re-ranking for every query in my app?

No. Use it only for complex, high-stakes queries. For simple questions like "What’s your business hours?" or "How do I reset my password?", stick with top-k vector search. Reserve re-ranking for queries that require deep understanding, context, or fact-checking.

Final Thought: Precision Over Volume

More data doesn’t mean better answers. More relevant data does.

Re-ranking isn’t about adding more documents. It’s about removing the noise. It’s about making sure the language model sees only what it needs to answer correctly-and nothing else. In a world where AI responses can have real-world consequences, that’s not a luxury. It’s a requirement.

Amber Swartz

February 24, 2026 AT 11:29This is the most overhyped garbage I've seen all year. Re-ranking? Please. You're telling me we need a whole second AI just to filter out dumb results? I've been building RAG systems since 2021, and the only thing that matters is clean data and good embeddings. Stop overengineering. Just clean your corpus and move on.

And don't get me started on 'JudgeRank'-sounds like some grad student's thesis project dressed up as enterprise software. Real companies don't need 'reasoning.' They need speed and cost efficiency. This is peak AI theater.

Robert Byrne

February 24, 2026 AT 14:25You’re absolutely right that re-ranking isn’t magic, but dismissing it as ‘overengineering’ is dangerously naive. I’ve seen medical bots give lethal dosage recommendations because they pulled a single line from a pediatric study. Vector search doesn’t care if the context is about a 7-year-old or a 72-year-old. It just sees ‘insulin’ and ‘kidney’ and calls it a day.

Re-ranking caught a 2018 paper mislabeled as ‘guideline’ in our system. It was actually a case report. Without re-ranking, that paper was top-3. We caught it because the cross-encoder noticed the word ‘case’ appeared 17 times and ‘recommendation’ zero. That’s not theory. That’s patient safety.

Barbara & Greg

February 25, 2026 AT 05:51It is profoundly disturbing how easily we have surrendered our intellectual rigor to algorithmic convenience. The notion that we can outsource comprehension to a ‘cross-encoder’-a black box trained on datasets we do not control-is not innovation. It is epistemological surrender.

Human judgment, cultivated through decades of clinical experience, legal precedent, or scholarly inquiry, is being replaced by statistical correlation masquerading as understanding. JudgeRank, for all its pretensions, is still a proxy for the human mind. And proxies, no matter how sophisticated, are not substitutes.

We are not building systems to answer questions. We are building systems to avoid responsibility. This is not progress. It is abdication.

selma souza

February 26, 2026 AT 19:46Let’s be precise. The article says ‘vector search is shallow’-correct. But then it cites BAAI/bge-reranker-large without specifying which version. There are at least three variants: v1, v2, and v3. The v3 model requires 768-dimensional embeddings. If you’re using 512, you’re not getting the claimed 32% improvement.

Also, ‘Cohere’s rerank-multilingual-v3’ supports 100+ languages-but only if your input is properly tokenized. If you feed it raw PDF OCR text with garbled glyphs? Performance drops 40%. This article reads like a marketing slide deck with footnotes.

And ‘cache reranking results’? That’s only viable if your query distribution is stationary. In healthcare, new drug names emerge weekly. Caching becomes a liability.

Frank Piccolo

February 27, 2026 AT 13:24Oh wow, another Silicon Valley white paper that sounds like a TED Talk. Re-ranking? Sure. But let’s be real: this only works if you have a team of 12 ML engineers, a $50k/month GPU budget, and a legal department that doesn’t care if your system takes 1.2 seconds to respond.

Meanwhile, real companies are running on AWS Lambda with 512MB RAM. They need answers in 150ms. Not 1500ms. And you’re telling them to add a cross-encoder? That’s not engineering. That’s performance art for venture capitalists.

Also, ‘JudgeRank’? Who named this? A 12-year-old with a thesaurus? This isn’t a system. It’s a self-help book for AI.

James Boggs

February 27, 2026 AT 18:03Excellent breakdown. I’d only add one thing: when testing re-rankers, always evaluate on out-of-distribution queries-not just your usual support tickets. We found that our model, which performed brilliantly on common clinical questions, failed catastrophically on queries like ‘Is there any evidence that insulin affects circadian rhythm in elderly diabetics?’-a query that sounds absurd until a patient asks it at 3 a.m.

Also, quantization works surprisingly well. We used 8-bit quantization on the reranker and saw only a 2% drop in accuracy, with 70% latency reduction. Worth considering for edge deployments.

Addison Smart

February 27, 2026 AT 22:12There’s something deeply human about this whole approach. We’re not just optimizing for accuracy-we’re trying to replicate the way a seasoned clinician, lawyer, or researcher would sift through information. They don’t skim. They read. They cross-reference. They question assumptions.

Re-ranking, at its core, is an attempt to bring that human depth into automation. It’s not about replacing intuition. It’s about augmenting it with consistency. I’ve worked in legal tech for 15 years. I’ve seen systems that retrieved 50 documents, and none were relevant. Then we added re-ranking. Suddenly, the top 3 were usable. Not perfect. But usable.

And yes, it costs more. But in high-stakes domains, the cost of being wrong is far higher. One incorrect drug interaction, one misinterpreted contract clause, one flawed compliance opinion-it can end careers. Or lives.

So yes, this is expensive. But sometimes, what’s expensive is also necessary. And this? This is necessary.

David Smith

March 1, 2026 AT 05:16Wow. Just… wow. You really think a machine can understand ‘context’? That’s cute. I’ve read 300+ medical journals. I know what ‘context’ means. And no algorithm, no matter how many transformers it has, will ever get it. You’re outsourcing judgment to a system that doesn’t even know what ‘mortality’ means.

Also, ‘JudgeRank’? That’s not a name. That’s a punchline. I bet the guy who wrote this named it after himself. Classic.