Tag: LLM benchmarks

Mathematical Reasoning Benchmarks for Next-Gen Large Language Models

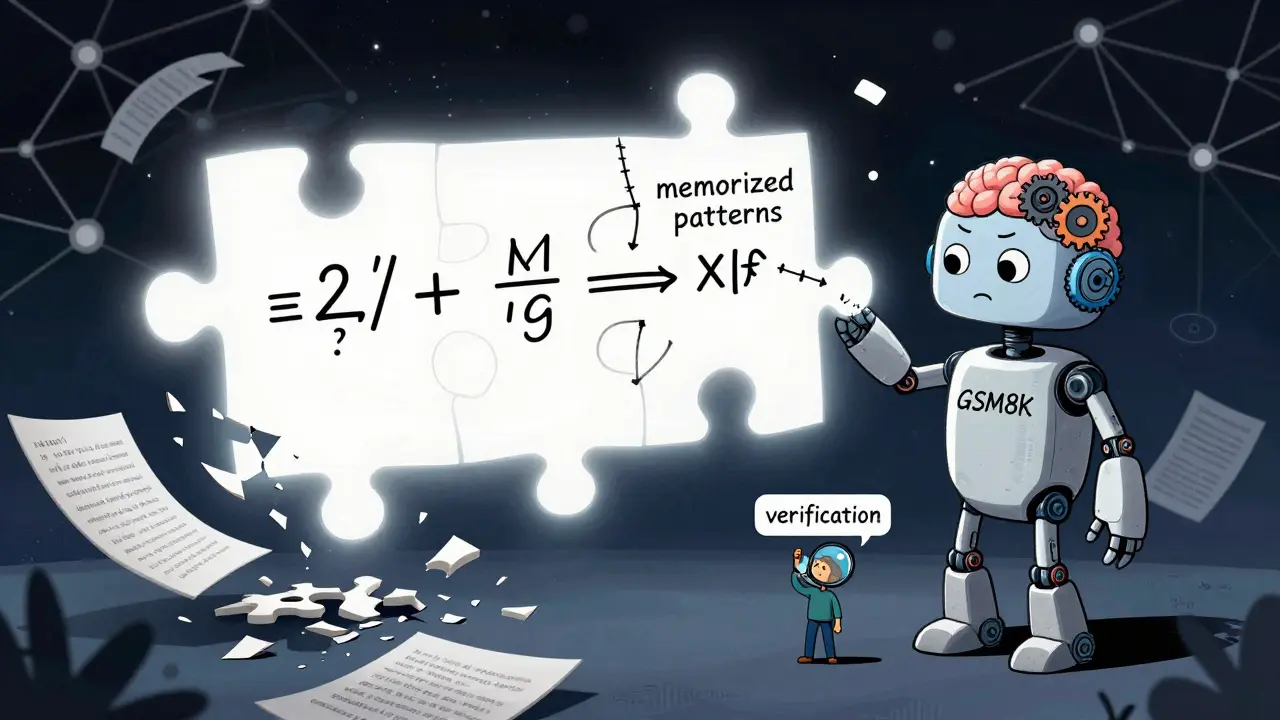

Mathematical reasoning benchmarks reveal that even the most advanced LLMs struggle with genuine mathematical understanding. Although these models can solve Olympiad problems, they fail perturbation tests, in which the same problems are slightly altered, exposing a reliance on memorization over reasoning.
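To make the idea of a perturbation test concrete, here is a minimal Python sketch. It assumes a hypothetical word-problem template and treats a "model" as a plain function from question text to an integer answer. The template, `make_variant`, `perturbation_accuracy`, `memorizer` and `reasoner` are all illustrative names, not part of any specific benchmark. Real benchmarks perturb much richer problem structure than the numbers in a single template.

```python
import random
import re
from typing import Callable

# Hypothetical word-problem template (illustrative only, not from any benchmark):
# perturbing it changes the surface numbers but not the reasoning required.
TEMPLATE = ("A shop sells pencils at {price} dollars each. "
            "Ana buys {count} pencils. How many dollars does she spend?")


def make_variant(rng: random.Random) -> tuple[str, int]:
    """Instantiate the template with fresh numbers and recompute the ground truth."""
    price = rng.randint(2, 9)
    count = rng.randint(3, 12)
    return TEMPLATE.format(price=price, count=count), price * count


def perturbation_accuracy(solve: Callable[[str], int], n: int = 50, seed: int = 0) -> float:
    """Fraction of n perturbed variants that `solve` answers correctly."""
    rng = random.Random(seed)
    correct = sum(solve(q) == a for q, a in (make_variant(rng) for _ in range(n)))
    return correct / n


def memorizer(question: str) -> int:
    # Hypothetical stand-in for a model that memorized one instance (price=3, count=4).
    return 12


def reasoner(question: str) -> int:
    # Hypothetical stand-in for a model that reads the numbers and multiplies them.
    price, count = map(int, re.findall(r"\d+", question))
    return price * count


if __name__ == "__main__":
    print("memorizer:", perturbation_accuracy(memorizer))
    print("reasoner:", perturbation_accuracy(reasoner))  # 1.0
```

A solver that only recalls one memorized answer collapses on the fresh variants, while one that applies the underlying multiplication is unaffected. That gap is the kind of signal perturbation tests are designed to expose.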
