The California AI Transparency Act (AB 853) isn’t just another law. It’s a direct response to the flood of AI-generated images, videos, and audio now showing up everywhere. From fake political speeches to manipulated celebrity videos, the problem is real, and in September 2025 California decided to act. The law doesn’t just ask companies to be honest; it forces them to build tools that let you, the user, know when something was made by AI.

What the Law Actually Requires

AB 853 targets large platforms and device makers. If a company has over 1 million monthly users in California, it’s covered. That includes social media sites like Instagram and TikTok, video hosting services like YouTube, and even AI tools like Midjourney or Sora if they’re accessible to the public there.

Here’s what they must do by August 2, 2026:

- Provide a free, easy-to-use tool that lets users check if an image, video, or audio file was created or altered by AI.

- Preserve provenance data (the digital fingerprint that shows where content came from) no matter how many times it’s shared or reformatted.

- Never strip away that data, even if the file gets compressed or converted.

- Let users view the provenance info right on the platform, without digging through menus or technical settings.

- Stop storing personal data tied to that provenance info. You can check if something’s AI-made, but no one can track who checked it.

It’s not just about platforms. Starting January 1, 2028, any camera, phone, or microphone sold in California must include an option to embed an authentication marker into human-created content. Think of it like a digital signature for photos you take yourself. It’s optional, but it means your real photos can carry proof they’re real.

How Detection Tools Actually Work

The law doesn’t say which detection method to use. But the tools must analyze audio, video, and still images. Most tools today look for subtle patterns that AI leaves behind, such as unnatural eye blinking, inconsistent lighting, or odd texture patterns in hair or fabric.

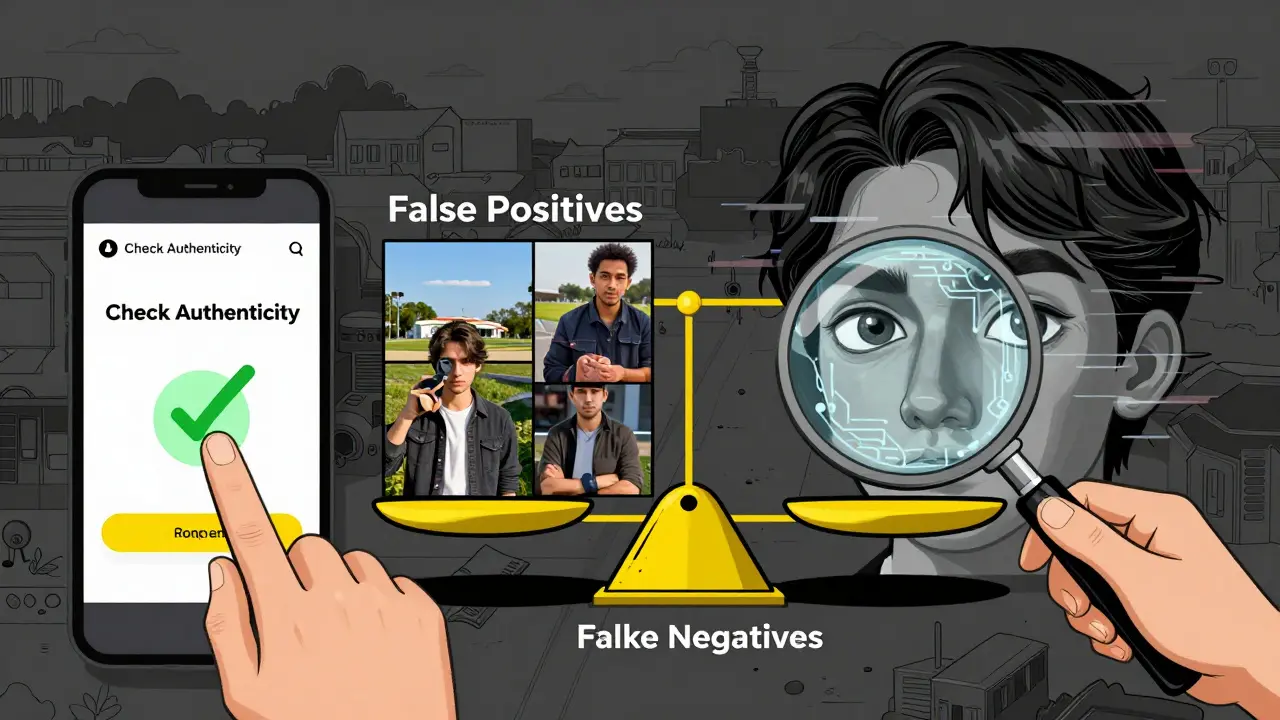

But here’s the catch: these tools aren’t perfect. According to G2 Crowd data from November 2025, detection accuracy ranges from 68% to 82%, depending on the content type. Video detection is the weakest, at only 68% accurate. If the errors are spread evenly, that means nearly one in three AI videos slips through.
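A single accuracy figure does not say how many AI videos are missed versus how many real ones are wrongly flagged. The sketch below uses made-up counts (100 AI-generated and 100 real videos, not figures from the G2 data) to show two hypothetical tools that both score 68% accuracy but fail in different ways. The function name `error_rates` and all counts are illustrative assumptions.

```python
def error_rates(tp: int, fp: int, tn: int, fn: int) -> dict:
    """Return accuracy plus the two error rates that accuracy alone hides."""
    total = tp + fp + tn + fn
    return {
        "accuracy": (tp + tn) / total,
        # Share of AI-generated items the tool fails to flag.
        "false_negative_rate": fn / (tp + fn),
        # Share of human-made items the tool wrongly flags as AI.
        "false_positive_rate": fp / (fp + tn),
    }


# Hypothetical test set: 100 AI-generated videos and 100 real ones.
balanced = error_rates(tp=68, fp=32, tn=68, fn=32)
lenient = error_rates(tp=50, fp=14, tn=86, fn=50)

for name, rates in (("balanced", balanced), ("lenient", lenient)):
    print(name, {k: round(v, 2) for k, v in rates.items()})
```

Both hypothetical tools report the same accuracy, yet the second misses half of the AI videos while flagging fewer real ones. That is why separate false positive and false negative rates are more informative than one headline number.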

False positives are a big issue too. A 2025 study from the Electronic Privacy Information Center found that 62% of negative reviews for early detection tools came from people whose landscape photos were flagged as AI-generated. Why? Because AI models trained on real photos learned to mimic artistic styles (sunsets, fog, long exposures) that look unnatural to detection algorithms.

And then there’s the metadata problem. Platforms like Instagram compress images heavily to save space. That process often wipes out EXIF data, the basic info that comes with photos. Now AB 853 demands even richer provenance data, like cryptographic signatures tied to the AI model used. Early tests show 20-30% of that data gets lost when content moves between platforms. If Instagram doesn’t change how it handles uploads, it could end up violating the law’s preservation requirement.
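To make the failure modes concrete, here is a minimal sketch of a signed provenance record and what happens when a platform strips it or re-encodes the file. It is an illustration only: AB 853 does not prescribe this format, real provenance systems typically rely on public-key signatures rather than a shared secret, and the names `make_manifest`, `verify_manifest`, and `SECRET_KEY` are hypothetical. HMAC from the Python standard library stands in for a real signature scheme.

```python
import hashlib
import hmac
import json
from typing import Optional

# Hypothetical shared key; a real system would use a private signing key.
SECRET_KEY = b"demo-key-not-for-production"


def make_manifest(content: bytes, generator: str) -> dict:
    """Bind a claim about the content's origin to a hash of its bytes."""
    claim = {
        "sha256": hashlib.sha256(content).hexdigest(),
        "generator": generator,
    }
    payload = json.dumps(claim, sort_keys=True).encode()
    signature = hmac.new(SECRET_KEY, payload, hashlib.sha256).hexdigest()
    return {"claim": claim, "signature": signature}


def verify_manifest(content: bytes, manifest: Optional[dict]) -> str:
    """Classify the provenance state of a file and its (possibly absent) manifest."""
    if manifest is None:
        return "missing"  # metadata was stripped somewhere along the way
    payload = json.dumps(manifest["claim"], sort_keys=True).encode()
    expected = hmac.new(SECRET_KEY, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, manifest["signature"]):
        return "tampered"  # the claim was edited after signing
    if manifest["claim"]["sha256"] != hashlib.sha256(content).hexdigest():
        return "content-changed"  # bytes differ, e.g. after recompression
    return "valid"


original = b"raw image bytes"
manifest = make_manifest(original, "hypothetical-model-v1")
print(verify_manifest(original, manifest))             # intact file and manifest
print(verify_manifest(b"recompressed bytes", manifest))  # platform re-encoded the file
print(verify_manifest(original, None))                 # platform dropped the metadata
```

In this sketch, a pipeline that recompresses uploads breaks the hash binding even when it keeps the manifest, so a platform would have to carry the record forward and re-bind it to the new bytes rather than simply avoid deleting it.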

What’s Not Covered

The law is specific: it only applies to multimedia. Text-based AI content-like ChatGPT-generated articles or LinkedIn posts-is completely out of scope. That’s a major gap. Imagine someone using AI to write a fake news story, then pasting it into a video caption or tweet. The law won’t touch it.

It also doesn’t apply to small businesses or indie creators. If your AI tool has fewer than 1 million users in California, you’re exempt. That means the burden falls on giants like Meta, Google, and OpenAI, while smaller players can still use AI without disclosing it.

And the authentication markers on cameras? Manufacturers don’t have to offer them until 2028. That gives them time to build the feature, but it also means that for the next two years your iPhone photos won’t carry any extra proof of authenticity. Even after 2028 the marker is opt-in, so you’ll have to turn it on manually.

How This Compares to Other Laws

The EU AI Act focuses on risk levels: high-risk AI systems get stricter rules. It does include transparency obligations for deepfakes and other synthetic content, but it doesn’t require a user-facing detection tool. The federal AI Foundation Model Transparency Act, introduced in June 2025, asks companies to disclose how they trained their models, not what their AI outputs look like.

AB 853 is different. It’s the first U.S. law to target content at the point of distribution and creation. No other state has tied hardware to content labeling like this. Even New York’s proposed bill doesn’t require camera manufacturers to do anything.

It’s closest to the proposed federal DEEPFAKES Accountability Act, but AB 853 goes further. It doesn’t just require labels; it demands tools, metadata preservation, and user access. That’s why experts at Georgetown University called it the most comprehensive state-level GenAI law to date.

Real-World Impact and Challenges

Companies are already scrambling. BIP Consulting estimates compliance costs between $150,000 and $500,000 per platform. That’s not just coding; it’s hiring machine learning engineers, digital forensics experts, and metadata specialists. OneTrust’s guide says you need 3-5 skilled people just to get started.

Platforms like Instagram and TikTok will need to overhaul their upload pipelines. Right now, they optimize for speed and storage. AB 853 forces them to optimize for trust. That means slower uploads, bigger file sizes, and more server load.

And what about creators? On Reddit, user ‘ContentCreator87’ worried this would hurt small artists. If a platform has to add detection tools and metadata checks, it might slow down uploads or charge fees to cover costs. Indie creators might get buried under the weight of compliance.

There’s also a legal gray area. WilmerHale lawyers pointed out that forcing platforms to display provenance info could raise First Amendment issues. Is the government compelling speech? What if the provenance label is wrong? What if a user’s real photo gets falsely flagged?

What Comes Next

The California Attorney General launched a GenAI Transparency Task Force in November 2025. Their first draft of implementation guidelines is due January 15, 2026. They’ll define exactly how detection accuracy must be reported, what formats provenance data must use, and how often platforms must update their tools.

Meanwhile, the Partnership on AI started the Content Provenance Initiative in October 2025. Their goal? Create one standard format everyone can use, so your iPhone’s authentication marker works on YouTube, TikTok, and Twitter. Without that, we’ll end up with 10 different systems that don’t talk to each other.

By 2028, when cameras start embedding authentication markers, we could see a world where your real photos come with a digital seal. But that only works if everyone adopts the same standard. Apple, Samsung, and Sony are already testing it in prototypes, so the hardware is coming. The question is whether the software will keep up.

The global trend is clear. Twenty-seven countries are considering similar rules. California didn’t invent AI detection, but it’s the first to make it mandatory, widespread, and user-facing. Whether it works depends on how well the tools improve, how much metadata survives, and whether we avoid over-trusting tech that’s still not reliable enough.

Does the California AI Transparency Act apply to text-based AI content like ChatGPT posts?

No. AB 853 only covers audio, video, and still images. Text generated by AI, like blog posts, social media captions, or emails, is not regulated under this law. That’s a major limitation, since text-based AI misinformation is one of the most common forms of AI abuse. Other proposals, like the federal AI Foundation Model Transparency Act, address how models are trained rather than what they output, so they don’t close this gap either.

What happens if a platform strips provenance data from uploaded content?

AB 853 explicitly forbids it. Platforms must preserve provenance metadata throughout the entire lifecycle of the content, even if the file is compressed, resized, or converted. If a platform like Instagram removes or degrades this data, it’s in violation of the law and could face enforcement action from the California Attorney General. The law requires cryptographic signatures to verify authenticity, and removing them breaks the chain of trust.

Are AI detection tools 100% accurate under AB 853?

No, and the law doesn’t claim they are. Detection tools currently have accuracy rates between 68% and 82%, depending on the content type. Video detection is the least accurate. Providers must publicly document their tool’s false positive and false negative rates. Users need to understand these tools are aids, not guarantees. The law requires transparency about limitations, not perfection.

Does the law apply to small businesses or indie AI developers?

Only if they have over 1 million monthly users in California. Most small businesses and indie developers won’t meet that threshold. That means a solo AI artist using Midjourney to create digital art for sale on Etsy won’t need to comply. The law targets large platforms and manufacturers, not individual creators or small teams.

Why does AB 853 require detection tools to be free?

To ensure equal access. If detection tools were paywalled, only wealthy users or organizations could verify content authenticity. The law wants every California resident, regardless of income, to have the ability to check if something they see online is AI-generated. That’s why providers must offer the tool at no cost directly on their platform.
