You’ve probably tried it before. You ask an AI to write a blog post, and it gives you something that sounds like a textbook written by a robot. Then you tweak the prompt to say, “Act as a witty marketing consultant,” and suddenly the output has personality. This isn’t magic; it’s persona and style control, a set of prompt engineering techniques that guide Large Language Models (LLMs) to adopt specific roles, tones, and reasoning styles. It is one of the most powerful levers available to users today, yet many people still treat prompts like simple search queries rather than instructions for behavior.

The core idea is straightforward: LLMs are trained on vast amounts of human text, which includes countless examples of different voices, professions, and writing styles. When you assign a persona, you aren’t changing the model’s underlying code or weights. Instead, you’re conditioning it on patterns it learned from a specific slice of that training text. You’re telling the model, “Look at how lawyers speak,” or “Write like a high school teacher.” This process, often called role prompting, transforms the AI from a generic information retriever into a specialized collaborator.

Why Persona Prompting Works

To understand why this works, we need to look at how these models learn. Researchers at Anthropic have proposed the Persona Selection Model (PSM), which suggests that LLMs learn to simulate diverse characters during pre-training. Think of the model as an actor who has read every script ever written. When you give it a role, it doesn’t just guess what a lawyer says; it retrieves patterns associated with legal discourse from its internal knowledge base.

This capability makes LLMs true shapeshifters. They can shift from Shakespearean prose to technical documentation from one prompt to the next. The key is specificity. Vague instructions yield vague results. If you tell an AI to “be helpful,” it defaults to its baseline safety and neutrality settings. But if you tell it to “be a skeptical senior engineer reviewing code,” it activates a different set of linguistic patterns: more critical, more detailed, and focused on potential failures.

However, don’t expect miracles in every scenario. Recent comprehensive studies analyzing four major LLM families revealed a surprising nuance: adding personas to system prompts does not always improve raw performance metrics across all question types compared to a control setting with no persona. The effect size is often small. This means that while personas dramatically change the *style* and *focus* of the response, they don’t necessarily make the model smarter or more accurate in isolation. The value lies in alignment, not just intelligence.

Speaker vs. Audience: Who Are You Talking To?

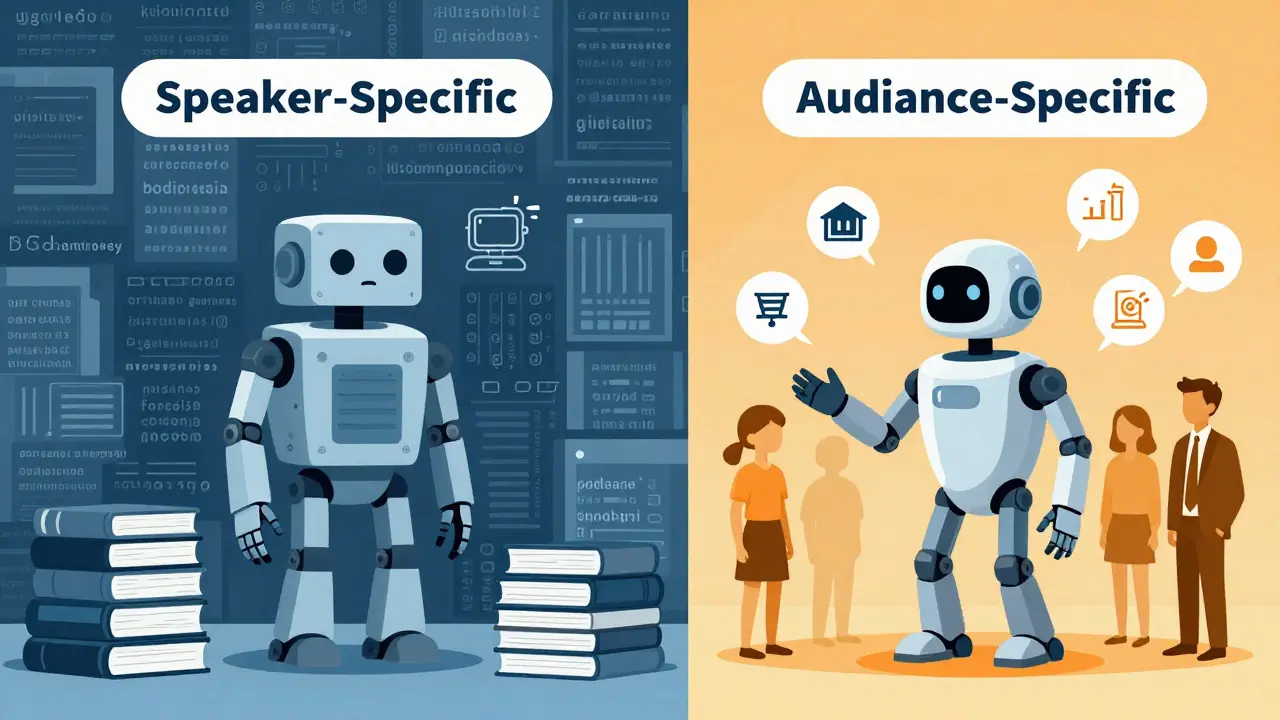

One of the biggest mistakes people make is focusing entirely on who the AI should be, ignoring who the audience is. Research distinguishes between two types of prompts:

- Speaker-Specific Prompts: Assigning a role to the LLM (e.g., “You are a lawyer”).

- Audience-Specific Prompts: Specifying the conversation audience (e.g., “Explain this to a five-year-old”).

Studies show that audience-specific prompts often perform better than speaker-specific ones. Why? Because defining the audience forces the model to adjust its complexity, vocabulary, and structure to match the reader’s needs. Telling an AI to “act as a professor” might result in overly dense jargon. Telling it to “explain quantum physics to a curious teenager” forces it to simplify concepts without losing accuracy. The latter is usually more useful.

For best results, combine both. Define the speaker’s expertise and the audience’s level. For example: “Act as a senior nutritionist (speaker) explaining meal prep strategies to busy parents (audience).” This dual constraint creates a tight semantic boundary, guiding the model toward a very specific tone and depth.
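
The combined speaker-plus-audience prompt above can be assembled programmatically. The following is a minimal sketch, not a prescribed template: the function name `build_persona_prompt` and the three-line layout are assumptions made for illustration, and the resulting string would still need to be sent to whatever model you use.

```python
def build_persona_prompt(speaker: str, audience: str, task: str) -> str:
    """Combine a speaker role and a target audience into one instruction.

    Hypothetical helper: the wording of each line is an illustrative
    choice, not a format required by any model.
    """
    parts = [
        f"Act as {speaker}.",              # speaker-specific constraint
        f"Your audience is {audience}.",   # audience-specific constraint
        f"Task: {task}",                   # what should actually be produced
    ]
    return "\n".join(parts)


prompt = build_persona_prompt(
    speaker="a senior nutritionist",
    audience="busy parents",
    task="Explain meal prep strategies.",
)
print(prompt)
```

Keeping the speaker and the audience as separate arguments makes it easy to vary one while holding the other fixed, which helps when you want to see which of the two constraints is doing the work.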

Beyond Text: Controlling Voice and Speech

Persona control isn’t limited to text. As AI moves into multimodal applications, controlling voice becomes crucial. New frameworks are emerging that convert textual persona descriptions into optimized style prompts for controllable text-to-speech (TTS) systems. One notable approach uses Parler-TTS, a natural language-controllable TTS model that offers a flexible interface for fine-grained style manipulation without reference audio.

In this context, persona rewriting involves transforming a character description into technical audio parameters. Researchers have identified two main strategies:

- Closed-Ended Prompting: Uses a predefined set of attributes like gender, age, tone, speed, and pitch. The LLM infers values for each attribute and outputs them in structured JSON format. This offers strong consistency and controllability, ideal for applications requiring predictable outputs.

- Open-Ended Prompting: Allows the LLM to craft its own style descriptions in natural language without format constraints. This enables more creative and nuanced prompts but can lead to variability.

Experimental results show that closed-ended prompting achieves superior performance, reducing Word Error Rate (WER) by 5% compared to baselines and improving speech quality metrics like UTMOS. Structuring persona information into optimized style prompts facilitates speech that is clearer and more natural. This is particularly important for accessibility tools and virtual assistants where voice consistency builds trust.
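
To make the closed-ended strategy concrete, here is a rough sketch of validating an LLM's JSON answer against a fixed attribute set and turning it into a natural-language style description of the kind a description-driven TTS model accepts. The attribute names come from the list above, but the allowed values, the function names, and the sentence template are all assumptions for illustration; no call to Parler-TTS or to an LLM is made, and `llm_output` is a hand-written stand-in.

```python
import json

# Assumed value sets for each attribute (illustrative, not from the research).
ALLOWED = {
    "gender": {"female", "male", "neutral"},
    "age": {"young", "middle-aged", "elderly"},
    "tone": {"warm", "calm", "energetic", "serious"},
    "speed": {"slow", "moderate", "fast"},
    "pitch": {"low", "medium", "high"},
}


def parse_style(raw_json: str) -> dict:
    """Parse the model's JSON and reject anything outside the closed set."""
    data = json.loads(raw_json)
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object of style attributes")
    style = {}
    for attr, allowed in ALLOWED.items():
        value = data.get(attr)
        if not isinstance(value, str) or value not in allowed:
            raise ValueError(
                f"{attr!r} must be one of {sorted(allowed)}, got {value!r}"
            )
        style[attr] = value
    return style


def to_description(style: dict) -> str:
    """Render validated attributes as a one-sentence style prompt."""
    return (
        f"A {style['age']} {style['gender']} speaker with a {style['tone']} tone, "
        f"speaking at a {style['speed']} pace with a {style['pitch']} pitch."
    )


# Hand-written stand-in for what an LLM might return for a persona.
llm_output = (
    '{"gender": "female", "age": "middle-aged", "tone": "warm", '
    '"speed": "moderate", "pitch": "medium"}'
)
style = parse_style(llm_output)
description = to_description(style)
print(description)
```

Rejecting out-of-set values up front is what gives the closed-ended approach its predictability: every style prompt that reaches the speech model has the same shape.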

Practical Techniques for Better Control

Treating role prompting as a learnable skill requires iteration. Here are practical heuristics to improve your control:

1. Be Explicit About Constraints: Don’t just say “be a copywriter.” Say “be a direct-response copywriter who focuses on pain points and uses short sentences.” Specificity reduces ambiguity. The more constrained the role, the less likely the model is to drift into generic territory.

2. Use the “3-Word Rule” for Rapid Pivots: When you need quick style adjustments, use short thumbnail phrases. Instead of rewriting the entire prompt, add lines like “Rewrite this like a startup-pitch investor” or “Explain as a high-school teacher.” These brief cues act as semantic anchors, pulling the model’s attention to specific stylistic clusters.

3. Combine Roles with Examples: Roles work best when paired with few-shot examples. Provide two or three examples of the desired output style within the prompt. This demonstrates the target pattern more effectively than descriptive adjectives alone. For instance, showing a sarcastic comment helps the model understand sarcasm better than telling it to “be sarcastic.”

4. A/B Test Role Formulations: Try different variations. Does “Senior Engineer” produce better results than “Expert Developer”? Sometimes, subtle changes in title imply different levels of authority or formality. Test these variations to find the formulation that yields the most precise results for your specific task.
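
Heuristics 3 and 4 can be combined in a small harness: pair each candidate role with the same few-shot examples, run each variant, and score the outputs. The sketch below assumes the common chat-style message format (a list of `role`/`content` dictionaries); `build_messages`, `ab_test`, `fake_generate`, and `word_count` are hypothetical names, and the last two are stand-ins so the example runs without a real model or a real quality metric.

```python
from typing import Callable


def build_messages(role: str, examples: list[tuple[str, str]], task: str) -> list[dict]:
    """Pair a role with few-shot (input, output) examples, then the real task."""
    messages = [{"role": "system", "content": f"You are {role}."}]
    for user_text, assistant_text in examples:
        messages.append({"role": "user", "content": user_text})
        messages.append({"role": "assistant", "content": assistant_text})
    messages.append({"role": "user", "content": task})
    return messages


def ab_test(
    roles: list[str],
    examples: list[tuple[str, str]],
    task: str,
    generate: Callable[[list[dict]], str],
    score: Callable[[str], float],
) -> dict[str, float]:
    """Run the same task under each role formulation and score the output."""
    return {
        role: score(generate(build_messages(role, examples, task)))
        for role in roles
    }


# Stand-in for a model call: echoes the system prompt and the task.
def fake_generate(messages: list[dict]) -> str:
    return messages[0]["content"] + " " + messages[-1]["content"]


# Stand-in metric; in practice this might be a rubric or human rating.
def word_count(text: str) -> float:
    return float(len(text.split()))


roles = ["a senior engineer", "an expert developer"]
examples = [("Review: x = x + 1", "Prefer `x += 1`; it states the intent directly.")]
task = "Review: if flag == True: run()"

messages = build_messages(roles[0], examples, task)
results = ab_test(roles, examples, task, fake_generate, word_count)
print(results)
```

Passing `generate` and `score` as arguments keeps the comparison logic independent of any particular model or evaluation method, so the same loop can be reused when you swap either one.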

| Strategy | Best Use Case | Pros | Cons |
|---|---|---|---|
| Speaker-Specific | Expert analysis, professional drafting | Aligns tone with domain expertise | May ignore audience comprehension level |
| Audience-Specific | Education, simplification, marketing | Improves clarity and relevance | Less control over source authority |
| Closed-Ended (TTS) | Voice synthesis, consistent branding | High consistency, measurable metrics | Less creative flexibility |
| Open-Ended (TTS) | Creative storytelling, character voices | Nuanced, natural language descriptions | Variable output quality |

Ethical Considerations and Bias

As we gain more control over AI personas, we must address implicit biases. Studies analyzing LLM-based persona rewriting have found that social biases, particularly regarding gender, can be introduced or amplified through voice style choices. For example, assigning certain tones or pitches to specific genders reinforces stereotypes.

Voice style is a crucial factor for persona-driven AI dialogue systems, and it carries significant ethical implications. Developers and users need to be aware that “neutral” personas are rarely truly neutral. They often reflect the dominant demographics of the training data. Future research aims to comprehensively address not just voice quality but also cultural and ethical considerations. As a user, you can mitigate this by explicitly requesting diverse representations and avoiding stereotypical role assignments.

Conclusion: Mastering the Shapeshifter

Persona and style control is not about tricking the AI. It’s about communicating clearly with a highly capable but context-sensitive tool. By understanding the difference between speaker and audience roles, leveraging structured attributes for speech, and iterating on your prompts, you can transform generic outputs into tailored solutions. The model is ready to shape-shift; your job is to give it the right script.

Does adding a persona actually make the LLM smarter?

Not necessarily. Research shows that adding personas does not significantly improve raw performance metrics across all tasks compared to a control setting. However, it drastically improves alignment, specificity, and style. The model doesn't become more intelligent, but it becomes more relevant to your specific context.

What is the difference between speaker-specific and audience-specific prompts?

Speaker-specific prompts define who the AI is acting as (e.g., "You are a lawyer"). Audience-specific prompts define who the AI is talking to (e.g., "Explain this to a child"). Studies suggest audience-specific prompts often yield better results because they force the model to adjust complexity and clarity for the reader.

How can I control the voice style in text-to-speech models?

You can use closed-ended prompting, which defines fixed attributes like pitch, speed, and tone in a structured format (like JSON), or open-ended prompting, which allows the LLM to generate natural language descriptions of the style. Closed-ended methods generally offer higher consistency and lower error rates.

What is the Persona Selection Model (PSM)?

The Persona Selection Model is a theoretical framework proposed by researchers at Anthropic. It suggests that LLMs learn to simulate diverse characters during pre-training, allowing them to activate specific behavioral patterns when given a role-based prompt.

Are there ethical risks in using persona prompting?

Yes. Persona-driven systems can introduce or amplify implicit social biases, particularly regarding gender and cultural stereotypes. Users should be mindful of the roles they assign and strive for diverse, non-stereotypical representations to mitigate these risks.