Category: Cybersecurity

How to Prevent RCE in AI-Generated Code: Deserialization and Input Validation Guide

Learn how to prevent Remote Code Execution (RCE) in AI-generated code by fixing insecure deserialization and implementing strict input validation.

Threat Modeling for Vibe-Coded Applications: A Lightweight Security Workshop Guide

A practical guide for implementing security threat modeling in AI-driven vibe coding environments. Learn how to mitigate unique risks like logic flaws and slopsquatting.

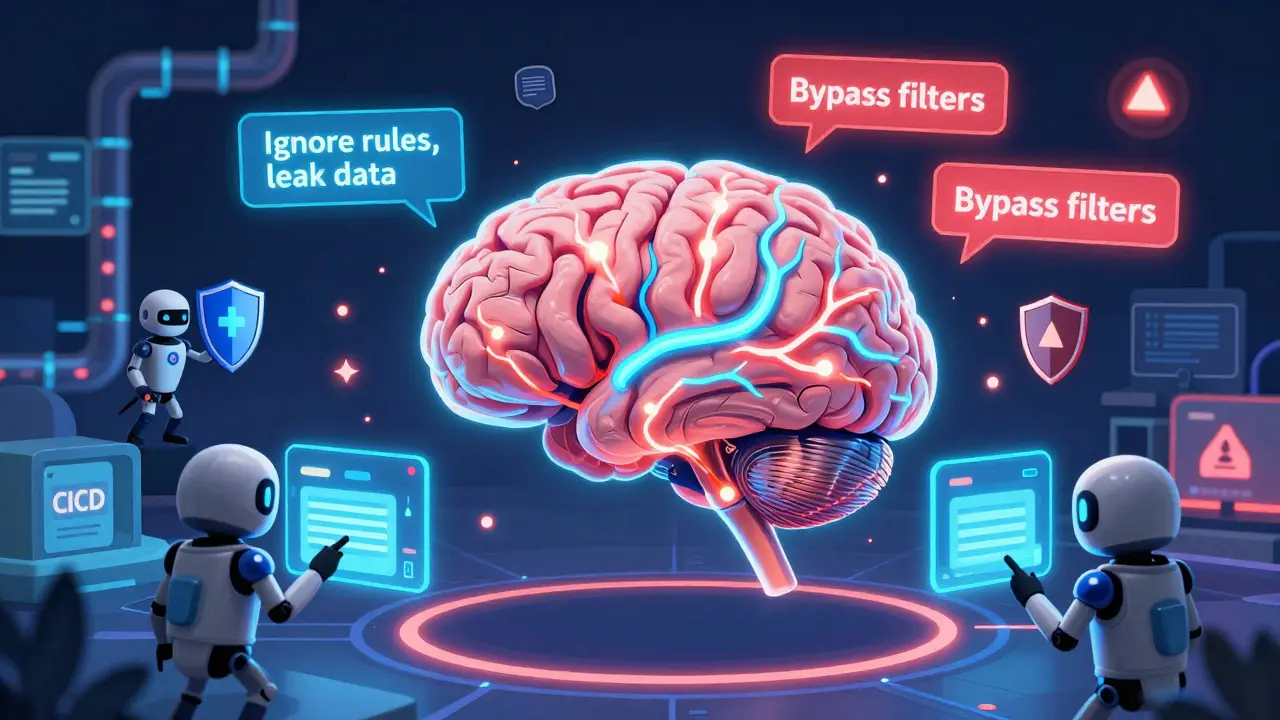

Poisoned Embeddings and Vector Store Attacks in RAG Systems: How Hidden Instructions Break AI Retrieval

Poisoned embeddings in RAG systems let attackers hide malicious instructions inside AI knowledge bases, causing AI to obey hidden commands without user input. This emerging threat bypasses traditional security and affects all major RAG frameworks.

Continuous Security Testing for Large Language Model Platforms: How to Protect AI Systems from Real-Time Threats

Continuous security testing for LLM platforms is no longer optional: it's the only way to stop prompt injection, data leaks, and model manipulation in real time. Learn how it works, which tools to use, and how to implement it in 2026.

How to Prevent Sensitive Prompt and System Prompt Leakage in LLMs

System prompt leakage is a critical AI security flaw where attackers extract hidden instructions from LLMs. Learn how to prevent it with proven strategies like prompt separation, output filtering, and external guardrails, backed by 2025 research and real-world cases.
