The core of the problem is that LLMs are great at logic but terrible at security context. They often suggest native serialization methods because they are convenient, even though they are incredibly dangerous. If you are relying on AI to generate your backend logic, you are essentially inviting a security gap into your system if you don't know exactly where to look for these flaws.

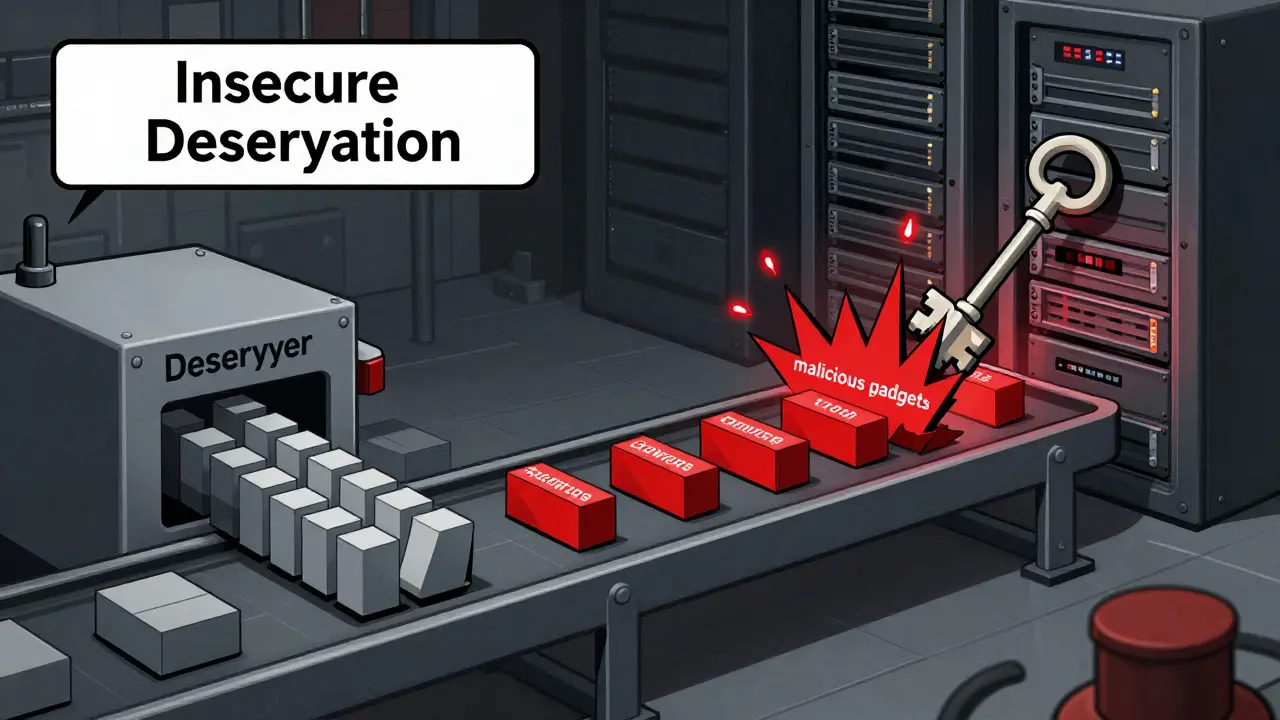

The Danger of Insecure Deserialization

To understand the risk, we first need to look at what serialization actually does. Serialization is the process of converting a complex data object into a format that can be stored or transmitted across a network. Deserialization is the opposite: turning that stream of bytes back into a living object in your memory.

The disaster happens when an application deserializes untrusted data without checking what that data actually is. A threat actor can manipulate the serialized object to include a "gadget chain": a sequence of existing code fragments that, when executed during the deserialization process, trigger a command on the OS. In the world of AI, this is amplified because AI-generated code frequently skips these guardrails to keep the example simple.

According to the OWASP Top 10, insecure deserialization is a critical risk often resulting in CVSS scores as high as 9.9. We've seen this in the real world with the Citrix CVE-2023-3519, where a failure in how the system handled data allowed complete server takeovers. If your AI suggests using a native binary format for speed, it might be handing you a skeleton key to your own server.

Why AI-Generated Code is a Security Magnet

You might wonder why AI is specifically prone to this. The reality is that AI models prioritize functionality over security. Researchers have found that AI-generated code increases deserialization risks by up to 300% compared to human-written code because the LLM doesn't know the trust level of the data your app will actually handle.

A huge percentage of AI frameworks rely on Python. If you've used an AI to build a machine learning pipeline, it almost certainly suggested the pickle module. Pickle is Python's native object serialization library, and it is notoriously insecure. It doesn't just store data; it stores instructions on how to reconstruct the object. An attacker can replace a legitimate object with a malicious one that executes os.system('rm -rf /') the moment pickle.load() is called.
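To make the mechanism concrete, here is a minimal and deliberately harmless sketch. The class name Payload is hypothetical, and the built-in sorted stands in for whatever callable an attacker would actually pick (os.system, for example). The only point is that the loader calls it for you.

```python
import pickle


class Payload:
    """Hypothetical stand-in for an attacker-crafted object."""

    def __reduce__(self):
        # pickle writes this (callable, args) pair into the byte stream and
        # calls callable(*args) when the stream is loaded. `sorted` is a
        # harmless placeholder for something like os.system.
        return (sorted, ([3, 1, 2],))


blob = pickle.dumps(Payload())

# The loader never rebuilds a Payload. It runs the stored callable and
# hands back whatever that callable returns.
result = pickle.loads(blob)
print(type(result).__name__, result)
```

Nothing in the receiving code asked for sorted to run. The byte stream chose the function, which is why no amount of checking after pickle.loads() helps: by then the call has already happened.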

It isn't just Python. Java's native serialization and .NET's BinaryFormatter are equally dangerous. In 2024, researchers identified RCE vulnerabilities in AI libraries from giants like NVIDIA and Apple, proving that even the pros struggle to keep these entry points closed when dealing with complex AI model serving APIs.

Comparing Serialization Formats

Not all data formats are created equal. If you want to stop RCE, you need to move away from "executable" formats toward "pure data" formats. Pure data formats describe what the data is, not how to rebuild a complex object in memory.

| Format | RCE Risk Level | Main Vulnerability | Performance Impact |
|---|---|---|---|
| Python Pickle | Extreme | Arbitrary code execution via `__reduce__` | Very Low (Fast) |
| Java Native | High | Gadget chains in classpath | Low |
| JSON | Low | Post-parsing logic flaws | Moderate (Slower) |
| XML | Medium | XXE (External Entity) attacks | Moderate |

Switching to JSON can reduce your vulnerability likelihood by about 76%. Yes, you might see a 12-18% hit in performance during high-throughput AI inference, but that is a small price to pay compared to a total system breach.
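As a rough illustration of what "pure data" means in practice, the sketch below round-trips a settings object through the standard json module. The field names and values are made up for the example.

```python
import json

# Hypothetical inference settings: only plain data types are involved.
settings = {"model": "classifier-v1", "threshold": 0.5, "labels": ["cat", "dog"]}

wire = json.dumps(settings)   # text that can be stored or sent
restored = json.loads(wire)   # dicts, lists, strings, numbers, booleans, None

# A key that looks dangerous is still just a string after parsing.
# The parser has no way to turn it into a function call.
suspicious = json.loads('{"__reduce__": "os.system", "args": ["echo hi"]}')
print(restored == settings, type(suspicious["__reduce__"]).__name__)
```

The parser can only ever build those basic types, so the worst a hostile document can do at this stage is carry hostile values. What your code does with those values afterwards is a separate question.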

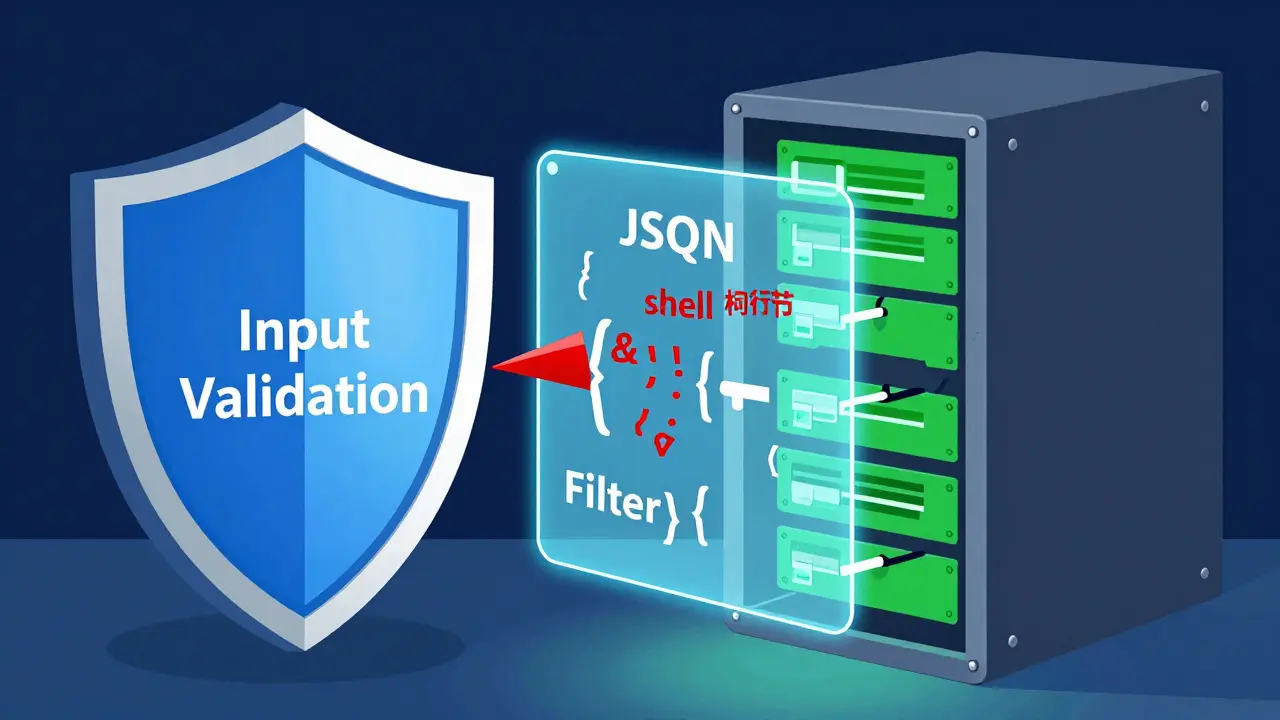

The Second Line of Defense: Strict Input Validation

Even if you use JSON, you aren't 100% safe. Why? Because the vulnerability often isn't in the parsing, but in what happens after the parsing. If your AI generates code that takes a JSON field and passes it directly into a database query or a system command, you've just traded one RCE for another (like SQL injection or Command Injection).
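Here is a small sketch of that failure mode using the standard sqlite3 module and an in-memory database. The table, the function names, and the request body are all hypothetical. The unsafe version is included only to show the contrast.

```python
import json
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (name TEXT, role TEXT)")
conn.executemany(
    "INSERT INTO users VALUES (?, ?)",
    [("alice", "admin"), ("bob", "viewer")],
)

# Perfectly valid JSON whose value carries a classic injection attempt.
body = json.loads("""{"name": "bob' OR '1'='1"}""")


def find_user_unsafe(name):
    # String interpolation lets the value rewrite the query itself.
    query = f"SELECT name FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(name):
    # The ? placeholder keeps the value as data, never as SQL.
    return conn.execute(
        "SELECT name FROM users WHERE name = ?", (name,)
    ).fetchall()


print(len(find_user_unsafe(body["name"])), len(find_user_safe(body["name"])))
```

The JSON parser did its job correctly in both cases. The interpolated query returns every row in the table, while the parameterized one treats the whole value as a name that simply doesn't exist.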

The fix is strict Input Validation. Do not just check if the data is the right type; check if it fits a strict allow-list of expected values. If you expect a username, don't just check that it's a string. Check that it doesn't contain shell metacharacters like ; or &.

A common mistake in AI-generated code is the "lazy check." The AI might write if data: which only checks if the object exists. You need a validation layer that enforces a strict schema. Implementing this might increase your development time by 20-30 hours per sprint, but it's the only way to stop 82% of these security incidents.
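A minimal sketch of such a validation layer, using only the standard library, might look like the following. The field names, the username pattern, and the role list are assumptions made for the example; a library such as Pydantic would express the same rules more compactly.

```python
import re
from dataclasses import dataclass

# Assumed rules for this example: short lowercase usernames, three known roles.
USERNAME_RE = re.compile(r"[a-z0-9_]{3,32}")
ALLOWED_ROLES = {"viewer", "editor", "admin"}
EXPECTED_FIELDS = {"username", "role"}


@dataclass(frozen=True)
class UserRequest:
    username: str
    role: str


def parse_user_request(data):
    """Validate an already-parsed JSON value against a strict schema."""
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    unexpected = set(data) - EXPECTED_FIELDS
    if unexpected:
        raise ValueError(f"unexpected fields: {sorted(unexpected)}")

    username = data.get("username")
    role = data.get("role")
    # Allow-list check: the whole string must match, so ; and & never pass.
    if not isinstance(username, str) or not USERNAME_RE.fullmatch(username):
        raise ValueError("invalid username")
    if not isinstance(role, str) or role not in ALLOWED_ROLES:
        raise ValueError("invalid role")
    return UserRequest(username=username, role=role)


print(parse_user_request({"username": "bob_42", "role": "viewer"}))
```

Compare this with if data: which would happily accept {"username": "bob; rm -rf /"}. Here that input is rejected before it gets anywhere near your business logic, and so is any field you didn't ask for.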

Practical Steps to Secure Your AI Code

If you are auditing AI-generated code today, follow this workflow to close the RCE gaps:

- Map your entry points: Find every place where your app accepts data from a user, an API, or a cloud bucket. Look specifically for functions like pickle.load(), yaml.load(), or unpickle().
- Replace native serialization: Swap pickle for json or protobuf. If you absolutely must use pickle, use a signing mechanism like HMAC to ensure the data hasn't been tampered with.
- Implement a Schema Validator: Use libraries like Pydantic (for Python) to enforce strict data types and constraints before the data ever reaches your business logic.
- Deploy Runtime Protection: Consider RASP (Runtime Application Self-Protection). These tools monitor the app's behavior in real-time and can block a deserialization attack in under 5 milliseconds.
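For the "if you absolutely must use pickle" case, the signing step could be sketched like this with the standard hmac and hashlib modules. The function names and the hard-coded key are placeholders; in a real system the key would come from a secret store and never ship with the code.

```python
import hashlib
import hmac
import pickle

# Placeholder key for the example only.
SECRET_KEY = b"replace-with-a-real-secret"
TAG_SIZE = hashlib.sha256().digest_size  # 32 bytes for SHA-256


def dumps_signed(obj):
    """Pickle obj and prepend an HMAC-SHA256 tag over the payload."""
    payload = pickle.dumps(obj)
    tag = hmac.new(SECRET_KEY, payload, hashlib.sha256).digest()
    return tag + payload


def loads_signed(blob):
    """Verify the tag first; only unpickle if it matches."""
    tag, payload = blob[:TAG_SIZE], blob[TAG_SIZE:]
    expected = hmac.new(SECRET_KEY, payload, hashlib.sha256).digest()
    # compare_digest avoids leaking how many bytes matched via timing.
    if not hmac.compare_digest(tag, expected):
        raise ValueError("signature mismatch; refusing to unpickle")
    return pickle.loads(payload)


blob = dumps_signed({"weights": [0.1, 0.2, 0.3]})
print(loads_signed(blob))
```

The order matters: the signature is checked before pickle.loads() ever sees the bytes. Keep in mind that this only proves the data came from someone holding the key. It does nothing for you if the key leaks or if the signer itself pickles attacker-influenced objects.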

Be warned: if you implement these strictly, you might find that some third-party AI models stop working. Some community models are built using these dangerous serialization methods, and a strict security wrapper can cause a 10-25% model rejection rate. You'll need to balance security with compatibility.

Tools for Detecting Deserialization Flaws

You can't rely on your eyes alone to find these bugs. Manual reviews miss too much, and basic scanners often ignore the complex logic of AI frameworks. You need a mix of Static Analysis (SAST) and Dynamic Analysis (DAST).

Commercial tools like Qwiet AI claim very high detection accuracy for deserialization, while open-source options like SonarQube are great for general code quality but might miss some of the more novel RCE vectors found in AI environments. The goal is to integrate these into your CI/CD pipeline so that no code reaches production without a security scan.
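If you want a quick first pass before wiring up a full scanner, a few lines with Python's standard ast module can flag the most obvious calls. This is a rough sketch, not a replacement for a SAST tool: the RISKY_CALLS list and the function name are assumptions for the example, and it will miss aliased imports such as from pickle import load.

```python
import ast

# Assumed watch list for this example; extend it for your own codebase.
RISKY_CALLS = {("pickle", "load"), ("pickle", "loads"), ("yaml", "load")}


def find_risky_calls(source):
    """Return sorted (line, 'module.func') pairs for flagged calls."""
    findings = []
    # ast.parse only reads the code; nothing in `source` is executed.
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, ast.Call):
            continue
        func = node.func
        if (
            isinstance(func, ast.Attribute)
            and isinstance(func.value, ast.Name)
            and (func.value.id, func.attr) in RISKY_CALLS
        ):
            findings.append((node.lineno, f"{func.value.id}.{func.attr}"))
    return sorted(findings)


sample = '''import pickle, json
model = pickle.load(open("model.pkl", "rb"))
config = json.loads(raw)
'''

for line, name in find_risky_calls(sample):
    print(f"line {line}: {name}")
```

Run against a directory of AI-generated files in CI, a check like this gives you a list of places to look at first. It tells you where deserialization happens, not whether the data reaching it is trusted, so the findings still need a human decision.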

Is JSON completely safe from RCE?

JSON itself is a data format and doesn't execute code, making it significantly safer than pickle. However, if the application takes a value from a JSON object and passes it into a dangerous function (like eval() or os.system()), you can still have RCE. The risk shifts from the deserialization process to the logic that follows.

Why does AI-generated code specifically use pickle?

AI models are trained on massive amounts of open-source code where pickle is the standard for saving ML models and weights. Because it is the most common way to handle Python objects, the AI suggests it as the most "efficient" path, unaware that it creates a massive security hole in a production environment.

How do I detect if a serialized object is malicious?

It is nearly impossible to reliably detect a malicious payload just by looking at the bytes. The best approach is to avoid native deserialization entirely. If you must use it, use a cryptographic signature (HMAC) to verify that the object was created by your own trusted server and hasn't been altered by a user.

What is the performance cost of switching to secure formats?

Switching from binary formats like pickle to text formats like JSON usually introduces a 12-18% overhead in high-throughput scenarios. For most apps, this is negligible. For ultra-low latency AI inference, you can use binary-safe formats like Protocol Buffers (protobuf), which provide speed and security.

Will a WAF stop RCE attacks?

A Web Application Firewall (WAF) can identify common serialized object patterns and block known attack signatures with roughly 83% accuracy. However, attackers can often obfuscate their payloads to bypass the WAF. A WAF is a great first layer, but it cannot replace secure coding and input validation.

Kirk Doherty

April 5, 2026 AT 20:11

just let the ai write it and hope for the best lol

Meghan O'Connor

April 6, 2026 AT 03:41

The author's claim about the 300% increase in risk is vaguely cited. Where is the actual peer-reviewed paper? It's typical for these kinds of articles to throw around percentages without providing a rigorous methodology or a source link. Also, the phrasing in the second paragraph is clunky at best. If you're going to lecture people on security, at least try to master basic syntax first. The technical a-la carte of solutions provided is just a surface-level summary that any junior dev would already know. It's honestly exhausting seeing this kind of lazy content being pushed as a guide. We need actual data, not marketing fluff and anecdotal evidence from some unnamed researchers who probably just ran a few prompts and called it a study.

Tyler Springall

April 6, 2026 AT 15:59

Imagine actually thinking a WAF is a viable primary defense in 2024. It is absolutely pathetic that some people still believe in that fairy tale. Only an amateur would suggest that as a layer of defense when the underlying code is a disaster. This entire discussion is almost beneath me, but the sheer incompetence of relying on AI to handle serialization is just a comedy of errors at this point. Truly tragic.

Morgan ODonnell

April 7, 2026 AT 01:06

i think it's just a learning curve for everyone

Colby Havard

April 8, 2026 AT 07:22

One must ponder the moral imperative of the developer... Is it not a dereliction of duty to outsource the cognitive labor of security to a machine?! The arrogance of the modern coder, who believes a prompt can replace a decade of disciplined study, is truly a stain upon the profession. We are sacrificing our intellectual integrity for the sake of a faster sprint cycle!!!

Dmitriy Fedoseff

April 9, 2026 AT 20:42

The utter disregard for basic safety is a symptom of a decaying culture of engineering! We are trading our digital sovereignty for the convenience of a chatbot. It is an insult to those who built the foundations of secure computing to see people blindly copy-pasting pickle modules into production environments. Wake up before your entire infrastructure is owned by a script kid from halfway across the globe!

Patrick Tiernan

April 10, 2026 AT 20:34

pydantic is okay i guess but like who actually has time to write schemas for everything when the ai can just do it in two seconds lol

Patrick Bass

April 12, 2026 AT 06:50

I agree that Pydantic is a solid choice here.

Liam Hesmondhalgh

April 13, 2026 AT 09:10

Absolute rubbish. The formatting in this post is a joke and the logic is even worse. Why are we even using these tools if we have to spend 30 hours a sprint fixing the garbage they produce? It's a waste of time and a disgrace to the craft. Get a real job and stop relying on a magic box to write your code for you!