When AI starts writing your code, traditional security checks don’t cut it anymore. That’s the reality for teams using vibe coding - where developers type natural language prompts and AI tools like GitHub Copilot or Cursor generate the actual code. It’s fast. It’s powerful. But it’s also breaking the rules of old-school compliance frameworks like SOC 2 and ISO 27001. These standards were built for human-written code, not AI-generated snippets that appear out of nowhere in your IDE. Without specific controls, you’re not just risking security failures - you’re risking audit failures.

Why SOC 2 and ISO 27001 Don’t Work for Vibe Coding

SOC 2 audits focus on five trust service criteria: Security, Availability, Processing Integrity, Confidentiality, and Privacy. ISO 27001 asks for documented controls around access, data handling, and incident response. Both assume code comes from a known developer, reviewed in a known process, tracked through version control. Vibe coding flips that.

Here’s the problem: when an AI generates a piece of code from a prompt like "fetch user data and send it to the API," who’s responsible? The developer who typed it? The AI model? The plugin that inserted it? Traditional audits can’t answer that. A 2024 survey by Black Duck found that 68% of compliance failures in vibe-coded environments came from prompts that were too vague - leading to insecure code that passed unit tests but failed in production.

And it gets worse. Auditors need traceability. They need to see: Who requested this? When? Why? What was the exact prompt? What code was generated? Was it reviewed? Most systems today log nothing. Or they log it in a way that takes weeks to piece together manually. One Reddit user from a fintech team said they spent three weeks just correlating code snippets with prompts for their SOC 2 auditor. That’s not sustainable.
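To make those auditor questions concrete, here is a minimal sketch of what a single audit entry could look like. The `GenerationRecord` schema, its field names, and the `make_record` helper are all hypothetical, invented for illustration; they are not taken from any vendor's product. The idea is simply that each generated snippet gets one record answering who, when, why, which prompt, which code, and whether it was reviewed.

```python
import hashlib
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass(frozen=True)
class GenerationRecord:
    """One audit entry linking a prompt to the code it produced (hypothetical schema)."""
    user: str           # who requested the code
    timestamp: str      # when, as an ISO 8601 UTC string
    reason: str         # why, e.g. a ticket reference
    prompt: str         # the exact prompt text
    code_sha256: str    # fingerprint of the generated code
    review_status: str  # "pending", "approved", or "rejected"


def make_record(user: str, reason: str, prompt: str, code: str,
                review_status: str = "pending") -> GenerationRecord:
    """Build a record at generation time, hashing the code so it can be matched later."""
    digest = hashlib.sha256(code.encode("utf-8")).hexdigest()
    now = datetime.now(timezone.utc).isoformat()
    return GenerationRecord(user, now, reason, prompt, digest, review_status)


def to_log_line(record: GenerationRecord) -> str:
    """Serialize the record as one JSON line, suitable for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)
```

Storing a hash rather than relying on line numbers means the record can still be matched to the committed code after the file has been reformatted around it, as long as the snippet itself is unchanged.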

The New Rules for Vibe Coding Compliance

Compliance for vibe coding isn’t about adding more checkboxes. It’s about redesigning the development pipeline from the inside out. The key shift? Shift-left security. Instead of checking code at commit or build time, you enforce controls at the moment the AI generates it - inside the IDE.

Here’s what modern compliance controls require:

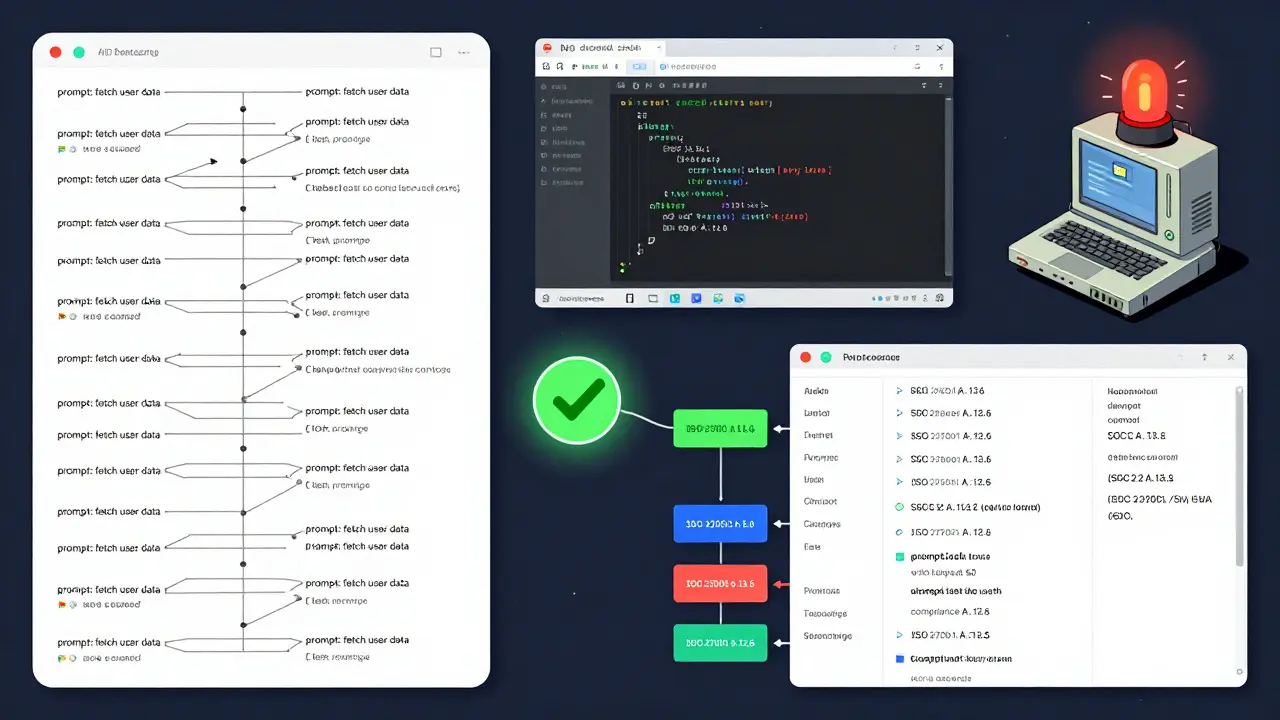

- Real-time IDE scanning: Tools like Knostic Kirin and Contrast Security’s AVM scan code as it’s typed. They check for known vulnerabilities from the National Vulnerability Database (NVD), block risky patterns, and flag insecure API calls before the code is even saved.

- Full audit trails: Every AI-generated line must be tied to a prompt, a user, a timestamp, and a review status. Systems now capture 275+ data points per code change automatically - no manual logging needed.

- Secrets detection at the source: If the AI accidentally inserts an AWS key or database password into the code, it gets blocked before it leaves the editor. Tools like HashiCorp Vault and AWS Secrets Manager integrate directly with IDE plugins to scan for credentials in real time.

- Prompt governance: You can’t just let developers type anything. Leading platforms now enforce prompt templates with guardrails. For example, a prompt like "store password in environment variable" gets flagged and replaced with a secure alternative. Superblocks reports this reduces false positives by 63%.
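As a rough illustration of secrets detection at the source, the sketch below scans generated code line by line before it is written to disk. The two patterns are illustrative assumptions, not any vendor's rule set: one matches the `AKIA` prefix format of long-term AWS access key IDs, the other is a simple heuristic for a quoted literal assigned to a password-like name. Real scanners use far larger rule sets plus entropy checks.

```python
import re
from typing import NamedTuple

# Illustrative patterns only (assumptions for this sketch).
SECRET_PATTERNS = {
    # Long-term AWS access key IDs: "AKIA" followed by 16 uppercase letters or digits.
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    # A quoted literal assigned to a name ending in password, passwd, or pwd.
    "hardcoded_password": re.compile(
        r"(?i)(?:password|passwd|pwd)\s*[:=]\s*['\"][^'\"]+['\"]"
    ),
}


class Finding(NamedTuple):
    rule: str
    line_number: int


def scan_for_secrets(code: str) -> list[Finding]:
    """Return one finding per (rule, line) match; an empty list means nothing was detected."""
    findings = []
    for number, line in enumerate(code.splitlines(), start=1):
        for rule, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append(Finding(rule, number))
    return findings
```

An IDE plugin built along these lines would refuse to insert the snippet whenever `scan_for_secrets` returns a non-empty list. Reading the value from the environment instead, as in `password = os.environ["DB_PASSWORD"]`, does not trigger either rule.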

These aren’t optional extras. They’re mandatory. According to Gartner, 70% of enterprises will require specialized vibe coding controls by 2026 - up from just 15% in 2024. And it’s not just about security. It’s about proving you’re compliant.

How Knostic, Contrast Security, and Others Are Fixing This

Companies like Knostic, Contrast Security, and Legit Security aren’t just adapting old tools - they’re building new ones from the ground up.

Knostic Kirin 2.3, released in late 2024, is already being used by Fortune 500 financial firms. It blocks 97.3% of vulnerable packages before they’re integrated. More importantly, it maps every AI-generated code change directly to SOC 2 and ISO 27001 controls. One Capital One team cut their SOC 2 evidence collection time from 20 days to 3. How? Because every action - prompt, code, review, approval - is logged automatically and tagged to the right compliance standard.

Contrast Security’s Application Vulnerability Monitoring (AVM), updated in March 2025, goes a step further. It doesn’t just look at static code. It runs lightweight instrumentation in real time, catching vulnerabilities in AI-generated code with 89% accuracy - compared to 62% for traditional SAST tools. That’s because AI code often has subtle logic flaws that don’t show up in static scans but break in production.

Legit Security’s framework demands 100% credential scanning across IDEs, repositories, and CI/CD pipelines. No exceptions. Even if the AI is just generating a test file, if it contains a secret, it’s blocked. This isn’t about being overly cautious - it’s about meeting ISO 27001’s Annex A control on system and application access (A.9.4 in the 2013 edition).

Where Traditional Compliance Falls Apart

Here’s the hard truth: if your team is still using the same SOC 2 checklist from 2022, you’re already behind.

Traditional controls assume:

- Code is written by a person

- Review happens after writing

- Version history is linear and traceable

- Security scans happen at build time

Vibe coding breaks all four. AI generates multiple code variants in seconds. Developers might not even see the final version before it’s committed. Version control becomes a mess of AI-generated branches. And by the time a SAST scan runs, the damage is already in the repo.

A 2025 report from Superblocks showed that teams using standard compliance frameworks had 43% more audit findings related to development lifecycle controls than those with vibe-specific controls. Why? Because they’re trying to fit a square peg into a round hole. The AI didn’t skip the process - it changed the process.

And then there’s the legal risk. A healthcare startup failed HIPAA compliance in 2024 because AI-generated code accidentally logged patient data. The auditor couldn’t tell if the developer wrote the line or the AI did. That’s not just a technical failure - it’s a liability nightmare.

Implementation: What It Really Takes

You can’t just install a plugin and call it done. Implementing vibe coding compliance is a 10- to 18-week project. Legit Security breaks it into four phases:

- Package governance (2-4 weeks): Define which libraries and dependencies are allowed. Block high-risk packages before they’re even requested.

- Plugin control (1-3 weeks): Roll out IDE plugins for VS Code, JetBrains, and others. Enforce policy at the point of generation.

- In-IDE guardrails (3-5 weeks): Set up prompt templates, credential scanning, and auto-reviews. Train developers on what to avoid.

- Audit automation (4-6 weeks): Connect everything to your SIEM and compliance dashboard. Automatically map findings to SOC 2 and ISO 27001 controls.
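The first phase, package governance, is the easiest to picture in code. Here is a minimal sketch, assuming a hypothetical `PackagePolicy` class and placeholder package names; it is not Legit Security's implementation. Each requested dependency is checked against an explicit blocklist and allowlist, and anything unknown is sent to a human.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PackagePolicy:
    """Allowlist/blocklist for dependencies an AI assistant may introduce (hypothetical)."""
    allowed: frozenset[str]
    blocked: frozenset[str]

    def evaluate(self, package: str) -> str:
        """Return "block", "allow", or "review" for a requested package name."""
        name = package.strip().lower()
        if name in self.blocked:
            return "block"   # known high-risk: stop before it is installed
        if name in self.allowed:
            return "allow"   # pre-approved by the policy owners
        return "review"      # unknown package: needs a human decision


# Placeholder names, chosen only for the example.
policy = PackagePolicy(
    allowed=frozenset({"requests", "cryptography"}),
    blocked=frozenset({"abandoned-http-lib"}),
)
```

Checking the blocklist first means a package that ends up on both lists by mistake is still blocked, which is the safer failure mode.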

And you’ll need people. Black Duck found teams need 2.3 additional full-time equivalents just to manage vibe coding compliance - policy engineers, prompt auditors, AI oversight specialists. This isn’t a task for a junior DevOps engineer anymore.

Success factors? Executive sponsorship. Dedicated AI compliance champions. Integration with existing IAM systems. And documentation that actually works. Knostic’s platform scores 4.7/5 on G2 for clarity. VibeSec? Just 3.2 - because their examples are theoretical, not real-world.

The Future: Compliance-as-Code

The next evolution? Compliance-as-code. Imagine writing a policy like:

IF prompt contains "store password" THEN block AND suggest "use AWS Secrets Manager"

IF code contains "console.log(user.email)" THEN flag AND require human review

IF no review within 24 hours THEN auto-rollback

That’s not sci-fi. Knostic’s Kirin 3.0, shipping in Q2 2025, will do exactly that. It automatically maps policy rules to SOC 2 and ISO 27001 controls with 95% accuracy in beta tests.
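The three example rules are simple enough to sketch as ordinary code. What follows is a hedged illustration only, not how Kirin or any other product works: the `Change` type, the `evaluate` function, and the action strings are all made up for this example.

```python
import re
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

REVIEW_WINDOW = timedelta(hours=24)


@dataclass
class Change:
    """An AI-generated change awaiting review (hypothetical type)."""
    prompt: str
    code: str
    created_at: datetime
    reviewed_at: Optional[datetime] = None


def evaluate(change: Change, now: datetime) -> list[str]:
    """Apply the three example rules and return the actions they trigger, in order."""
    actions = []
    # Rule 1: block prompts that ask to store a password.
    if "store password" in change.prompt.lower():
        actions.append("block: use AWS Secrets Manager")
    # Rule 2: flag code that logs a user's email address.
    if re.search(r"console\.log\(\s*user\.email\s*\)", change.code):
        actions.append("flag: require human review")
    # Rule 3: roll back changes left unreviewed for more than 24 hours.
    if change.reviewed_at is None and now - change.created_at > REVIEW_WINDOW:
        actions.append("rollback: no review within 24 hours")
    return actions
```

Passing `now` in as an argument, rather than reading the clock inside the function, keeps the rules deterministic and easy to test. That matters when the policy itself becomes audit evidence.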

Forrester predicts that by 2027, 85% of vibe coding compliance will be enforced by automated policy engines - not manual reviews. That’s the future. And it’s coming fast.

But here’s the catch: AI is getting smarter. As models become more autonomous, today’s controls might not be enough tomorrow. Black Duck’s CTO warns that without dynamic, adaptive frameworks, compliance systems will become obsolete. The goal isn’t just to keep up - it’s to build systems that evolve with the AI.

Who Needs This Now?

Not every team needs this level of control. If you’re building a side project, maybe not. But if you’re in:

- Finance - 73% adoption rate

- Healthcare - HIPAA and GDPR apply

- Government or regulated industries - SOC 2 is often a contractual requirement

- Any company with ISO 27001 certification

Then you’re already at risk. The EU’s AI Act, which entered into force in August 2024 with obligations phasing in through 2026 and beyond, requires detailed documentation of AI development processes. NIST’s updated SP 800-218, released in January 2025, now explicitly demands traceability from prompt to production code.

Ignoring this isn’t an option. It’s a ticking audit time bomb.

What is vibe coding, and why does it break compliance?

Vibe coding is when developers use AI tools like GitHub Copilot to generate code from natural language prompts. It breaks compliance because traditional frameworks like SOC 2 and ISO 27001 assume code is written, reviewed, and tracked by humans. With vibe coding, code appears without clear ownership, review history, or traceable prompts - creating audit gaps that can lead to failed compliance reviews.

Do SOC 2 and ISO 27001 cover AI-generated code?

Not directly. Both standards were designed for human-driven development. While they cover security controls and audit trails, they don’t address how to track AI-generated artifacts, validate prompts, or enforce human review of machine-written code. Without additional controls, teams using vibe coding will fail audits because they can’t prove processing integrity or accountability.

What are the biggest risks of vibe coding without controls?

The biggest risks include: leaking secrets (like API keys or passwords), introducing unpatched vulnerabilities, violating data privacy rules (like logging PHI), and failing audits because you can’t prove who wrote what. A healthcare startup failed HIPAA compliance in 2024 because AI-generated code accidentally logged patient data - and auditors couldn’t tell if the developer or the AI caused it.

How long does it take to implement vibe coding compliance?

It takes 10 to 18 weeks for a full rollout. This includes setting up package governance (2-4 weeks), deploying IDE plugins (1-3 weeks), configuring in-IDE guardrails (3-5 weeks), and automating audit trails (4-6 weeks). Teams also need to hire or retrain staff - on average, 2.3 additional FTEs are required to manage the new controls.

Is vibe coding compliance only for large companies?

No. While large enterprises are leading adoption (73% in finance), any company subject to SOC 2, ISO 27001, HIPAA, or the EU AI Act needs these controls. Even small teams in regulated industries can be fined or lose contracts if they can’t prove secure development practices. The cost of non-compliance far outweighs the investment in compliance tools.

mani kandan

March 22, 2026 AT 12:50

Interesting take. I've been using Copilot for six months now, and honestly, the biggest surprise wasn't the code quality - it was how often I'd catch myself staring at a generated function thinking, 'Who the hell wrote this?'

Our team started logging prompts last quarter, and it changed everything. Suddenly, we could trace back why a vulnerable dependency slipped in - not because someone was sloppy, but because the prompt said 'use the fastest library' and Copilot picked the one with 12k stars but zero updates in two years.

Now we have a simple rule: no prompt without context. Instead of 'fetch user data,' it's 'fetch user data via authenticated API call, mask PII, and log access.' Small change. Huge difference.

And yes, auditors love it. Last SOC 2 review? We handed them a clickable timeline of every AI-generated line, who prompted it, and what review flag it had. They asked for a beer afterwards. Seriously.

It’s not magic. It’s just discipline dressed up as tooling.

Rahul Borole

March 24, 2026 AT 05:15

The paradigm shift here is not merely technical - it is systemic. Traditional compliance frameworks are predicated upon the assumption of human agency in software development, an assumption that has been rendered obsolete by the emergence of generative AI in the development lifecycle.

It is imperative that organizations reengineer their Software Development Life Cycle (SDLC) to incorporate AI-native audit trails, prompt governance protocols, and real-time vulnerability interception mechanisms. Failure to do so constitutes a material risk to operational integrity and regulatory standing.

Furthermore, the notion that compliance can be achieved through retrofitted tools is not only erroneous - it is dangerously misleading. The solution lies not in adaptation, but in reconstruction.

Organizations must treat AI-generated code as a first-class artifact, subject to the same rigor as human-authored code. This requires policy enforcement at the IDE layer, not the CI layer. The moment of generation is the moment of control.

Investment in AI compliance specialists is not an overhead - it is a strategic imperative. The cost of non-compliance, as evidenced by the healthcare startup case, is incalculable in both financial and reputational terms.

Sheetal Srivastava

March 25, 2026 AT 19:43

Oh honey, you’re still thinking in binaries? ‘Human-written’ vs ‘AI-generated’? That’s so 2023.

The real issue isn’t compliance frameworks - they’re fine. It’s that your org still has a human-centric worldview. You’re trying to fit a quantum system into a Newtonian box.

You need to stop thinking of prompts as ‘requests’ and start treating them as *intent signatures*. Each prompt is a behavioral fingerprint. The AI isn’t writing code - it’s *executing a legal contract* between developer intent and system output.

And if you’re not tagging every AI-generated line with a zero-knowledge proof of intent, you’re not just non-compliant - you’re *legally negligent*.

Also, why are you still using GitHub Copilot? It’s like using a typewriter in 2025. Knostic Kirin’s prompt-embedding blockchain layer is the only thing that actually maps to ISO 27001 A.14.2.3. Everything else is theater.

Bhavishya Kumar

March 25, 2026 AT 23:55

You wrote 'vibe coding' 27 times in this article. It's not a term. It's a buzzword. You're not describing a new methodology - you're repackaging autocomplete with a marketing team.

Also, 'prompt governance' isn't a thing. It's called input validation. 'IDE scanning' is just static analysis with a different name. 'Secrets detection at the source' has existed since 2018.

And you say '275+ data points per code change'? That's excessive. You're not auditing a nuclear reactor. You're writing a web app.

Grammar note: 'AI-generated snippets that appear out of nowhere' - 'nowhere' is not a location. You mean 'without traceability.' Please revise.

ujjwal fouzdar

March 27, 2026 AT 23:45

Let me tell you something about the soul of code.

When a human writes a loop, they’re thinking of the user, the edge case, the sleepless night they’ll have debugging it. When AI writes it? It’s just pattern matching. No soul. No fear. No humility.

And that’s why compliance breaks.

SOC 2 isn’t about firewalls or encryption - it’s about accountability. Who bears the weight of the decision? Who carries the guilt when the system crashes? The AI doesn’t feel shame. The developer? They shrug and say, ‘It was the bot.’

We’re not losing control of code.

We’re losing the *meaning* of it.

And maybe… that’s the real audit failure.

Have you ever looked at a line of AI-generated code and felt… nothing?

That’s the danger.

Not the vulnerability.

The emptiness.

Anand Pandit

March 28, 2026 AT 06:49

Big thanks for laying this out so clearly! I’ve been on the fence about AI coding tools because I worried about audits, but this gave me a roadmap.

We started with just blocking secrets in VS Code - no more hardcoded AWS keys - and it cut our incident tickets by 40% in a month.

Also, the prompt templates? Game-changer. Instead of ‘get user data,’ we now use ‘get user data with masking, auth check, and audit log’ - and the AI actually follows it better than some devs.

And yes, we hired a prompt auditor. She’s part-time, but she’s brilliant. We call her our ‘AI translator.’

If you’re scared to try this, start small. One plugin. One rule. One team. You’ll be surprised how fast it clicks.

You’ve got this.

Reshma Jose

March 28, 2026 AT 09:35

Ugh I just had a meeting where my manager said 'just use Copilot and fix it in review.'

NO. Just no.

I spent two days cleaning up AI-generated code that had 17 different versions of the same function, all with different variable names, no comments, and one had a hardcoded password. (Yes, really.)

We turned on Knostic Kirin last week. It blocked 80% of bad prompts before they even hit the file. I didn’t think it’d work, but now I’m the office cheerleader.

Also - yes, we need more people. My dev team is 5 people. We now have 1 person whose whole job is managing AI prompts. It’s weird. It’s necessary.

Rajat Patil

March 29, 2026 AT 18:54

Thank you for sharing this. I’ve been quietly worried about our team’s shift to AI-assisted coding, but didn’t know how to articulate the risks.

The part about traceability really resonated. We’ve had two near-misses where code slipped through because no one could say who asked for it or why.

I agree with the need for new roles - not to replace developers, but to support them. We’re starting small: one policy engineer, one training session per week.

It’s not about fear. It’s about responsibility.

And yes, the audit time dropped from weeks to days. That’s not just efficiency - it’s peace of mind.