Ever spent hours writing complex prompts only for your LLM to return a JSON object with a missing bracket or a stray comma that crashes your entire production pipeline? It is a frustratingly common problem. Even the most powerful models occasionally produce malformed syntax, and in a production environment, a single misplaced character is the difference between a working feature and a 500 Internal Server Error. This is where constrained decoding comes in: a technique that manipulates a generative model's token generation process to ensure the output adheres to a specific structural format, such as JSON or a regular expression. Instead of hoping the model follows your instructions, constrained decoding forces the model to stay within the lines.

How Constrained Decoding Actually Works

To understand this, you have to look at how LLMs generate text. They don't pick words directly; at each step they assign a probability to every token in a large vocabulary and then select one. In a standard setup, the model might see a 10% chance for a comma and a 5% chance for a closing brace. Because the comma is more likely, the model will usually pick it, even if that comma breaks the JSON schema you requested.

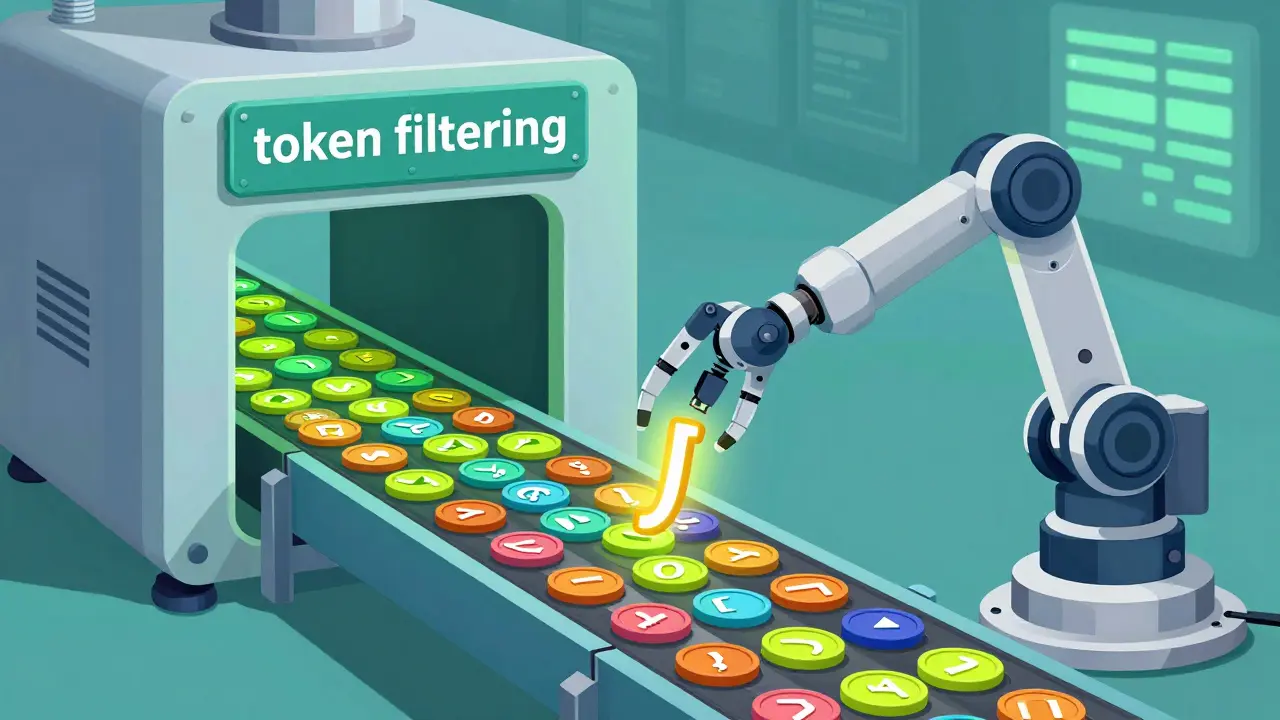

Constrained decoding changes the game by filtering the vocabulary at every single step. If the current state of the output requires a closing bracket to be syntactically valid, the system simply zeroes out the probability of every other token in the vocabulary. The model is then forced to pick from the remaining valid options. As noted in the ACL 2025 RANLP proceedings, this process derives a restricted set of tokens at each step, so the finished sequence adheres to the schema by construction rather than by luck.
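The filtering step can be sketched in a few lines of plain Python. This is a minimal illustration, not how any particular server implements it: the logits are made-up toy values, the `allowed` set stands in for whatever a real grammar engine would compute, and `constrained_step` is a hypothetical helper name.

```python
import math

def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(logits.values())
    exps = {tok: math.exp(score - peak) for tok, score in logits.items()}
    total = sum(exps.values())
    return {tok: value / total for tok, value in exps.items()}

def constrained_step(logits, allowed):
    """Drop disallowed tokens, renormalize, and pick the most likely survivor.

    Dropping a token here has the same effect as setting its logit to
    negative infinity before the softmax.
    """
    masked = {tok: score for tok, score in logits.items() if tok in allowed}
    if not masked:
        raise ValueError("no allowed token is in the vocabulary")
    probs = softmax(masked)
    return max(probs, key=probs.get), probs

# Toy logits after the model has produced '{"name": "Ada"' (assumed values).
logits = {",": 2.0, "}": 1.3, " and": 0.5, "\n": 0.1}

# Without constraints, greedy decoding takes the highest-scoring token.
unconstrained = max(softmax(logits), key=softmax(logits).get)

# Assumed grammar state: the schema has a single field, so only '}' is valid.
token, probs = constrained_step(logits, allowed={"}"})
print(unconstrained, token, probs)
```

With these toy numbers the unconstrained step picks the comma, while the constrained step can only return the closing brace.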

Modern implementations, like those found in NVIDIA Triton Inference Server (an open-source inference server that provides a standardized interface for deploying AI models, with native support for constrained decoding), have become incredibly efficient. They can now skip "boilerplate" tokens (the parts of the JSON or schema that are predictable) and only spend computational power on the actual data the model needs to generate. This keeps the performance hit low, typically adding only about 5% to 8% to the total inference time.
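The idea of skipping boilerplate can be illustrated with a deliberately simplified sketch. It assumes the schema has already been flattened into fixed literals and value slots; real servers make this decision at the token level inside the grammar engine. The `fill_template` helper and the lookup-table "model" are hypothetical stand-ins.

```python
import json

def fill_template(template, generate_value):
    """Emit fixed pieces directly and call the model only for value slots.

    template: list of ("literal", text) or ("slot", name) pairs.
    generate_value: callable standing in for a constrained model call.
    Returns the assembled text and the number of model calls made.
    """
    output = []
    model_calls = 0
    for kind, value in template:
        if kind == "literal":
            output.append(value)  # predictable text: no model call needed
        else:
            output.append(generate_value(value))
            model_calls += 1
    return "".join(output), model_calls

# Skeleton for {"name": <string>, "age": <number>}.
template = [
    ("literal", '{"name": "'),
    ("slot", "name"),
    ("literal", '", "age": '),
    ("slot", "age"),
    ("literal", "}"),
]

# Stand-in for the model: a lookup table keyed by slot name.
fake_model = {"name": "Ada", "age": "36"}.get

text, calls = fill_template(template, fake_model)
print(text, calls)
print(json.loads(text))
```

Only the two slots trigger a (fake) model call here; the braces, keys, and separators are appended for free, which is the intuition behind the low overhead.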

Controlling Output with JSON, Regex, and Schemas

Depending on your goal, you can apply constraints at different levels of strictness. Most developers start with JSON mode, but the real power lies in more granular controls.

- JSON Control: This ensures proper bracket matching, comma placement, and key-value structure. It effectively eliminates the 38.2% formatting error rate seen in zero-shot unconstrained generation.

- Regex Constraints: If you need a specific format, like a US phone number (xxx-xxx-xxxx) or a specific SKU ID, you can use a regular expression (a sequence of characters that specifies a text-matching pattern) to restrict token generation. The model can only select tokens that continue a string matching that pattern.

- Schema Control: For complex data needs, you can provide a full schema. The system expands non-terminals and backtracks when necessary to ensure the final output is syntactically perfect.

| Constraint Type | Strictness | Best Use Case | Implementation Difficulty |
|---|---|---|---|
| JSON Mode | Medium | API responses, data extraction | Low |
| Regex | High | IDs, dates, phone numbers | Medium |
| Full Schema | Very High | Complex nested objects, legal forms | High |
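To make the regex case concrete, here is a minimal sketch for the US phone number format. It handles only a fixed-length pattern by checking one character class per position, whereas real libraries compile arbitrary regular expressions into a state machine. The vocabulary, the scores, and the helper names (`allowed_tokens`, `decode`) are all invented for illustration.

```python
import re
import string

# One character class per position for the pattern \d{3}-\d{3}-\d{4}.
DIGITS = set(string.digits)
PATTERN = [DIGITS] * 3 + [{"-"}] + [DIGITS] * 3 + [{"-"}] + [DIGITS] * 4

def allowed_tokens(prefix, vocab):
    """Return the tokens that keep prefix + token on a path to a full match."""
    start = len(prefix)
    allowed = []
    for token in vocab:
        end = start + len(token)
        if not token or end > len(PATTERN):
            continue  # empty tokens make no progress; long ones overshoot
        if all(ch in PATTERN[start + i] for i, ch in enumerate(token)):
            allowed.append(token)
    return allowed

def decode(vocab, score):
    """Greedy decoding restricted to tokens the pattern permits."""
    text = ""
    while len(text) < len(PATTERN):
        options = allowed_tokens(text, vocab)
        if not options:
            raise ValueError(f"no token in the vocabulary can follow {text!r}")
        text += max(options, key=score)
    return text

# Toy vocabulary with made-up preference scores standing in for model logits.
scores = {"call": 9.0, "555": 5.0, "-": 4.0, "12": 3.0, "0": 2.0, "-1": 1.0}

phone = decode(list(scores), scores.get)
print(phone)
print(bool(re.fullmatch(r"\d{3}-\d{3}-\d{4}", phone)))
```

Note that the highest-scoring token, "call", is never selected because it cannot extend a valid phone number at any position.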

The Trade-off: Structural Validity vs. Semantic Quality

Here is the catch: you can't get something for nothing. There is a fundamental tension between making the output look right (structure) and making the output be right (semantics). When you force a model to pick a token it didn't "want" to pick, you're introducing bias into the output distribution.

Research by Wang et al. (2024) shows that constrained tokens often have a probability nearly five times lower than the model's natural choice. This can lead to "semantic degradation." Essentially, the model might be so focused on closing a JSON bracket that it forgets the actual logic of the answer it was providing. This is especially noticeable in instruction-tuned models (LLMs that have been further trained with RLHF or supervised fine-tuning to follow human instructions), which can actually see a drop in accuracy when forced into strict constraints because they've been optimized for natural conversation, not rigid syntax.
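As a worked illustration of that gap, the snippet below compares the model's natural top choice with the best token a constraint still allows. The probabilities are made-up toy numbers, chosen only to mirror a roughly fivefold ratio; they are not data from the cited study, and `forced_choice_gap` is a hypothetical helper.

```python
def forced_choice_gap(probs, allowed):
    """Compare the model's natural pick with the best allowed token.

    Returns (natural_token, forced_token, ratio), where ratio is how many
    times more likely the natural token was before any masking.
    """
    natural = max(probs, key=probs.get)
    forced = max((tok for tok in probs if tok in allowed), key=probs.get)
    return natural, forced, probs[natural] / probs[forced]

# Toy next-token distribution (assumed values, for illustration only).
probs = {" because": 0.50, ",": 0.35, '"': 0.10, "}": 0.05}

# Assumed grammar state: only a closing quote or closing brace is valid.
natural, forced, ratio = forced_choice_gap(probs, allowed={'"', "}"})
print(natural, forced, round(ratio, 2))
```

Here the model wanted to continue its explanation, but the grammar forces a token it rated five times less likely. Repeated over many steps, that pressure is one plausible way the output drifts from what the model would otherwise have said.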

Interestingly, this trade-off varies by model size. Smaller models (under 14B parameters) benefit massively from constrained decoding, with some seeing a 41.2% improvement in structured reasoning tasks. For giant models, however, the bias can sometimes outweigh the benefits, meaning a few-shot unconstrained prompt might actually be more accurate than a zero-shot constrained one.

Practical Implementation and Tools

If you're looking to implement this today, you have a few paths. You can use high-level inference servers like vLLM (a high-throughput LLM serving engine that supports PagedAttention and constrained sampling) or Text Generation Inference (TGI). Alternatively, open-source libraries like Outlines (a Python library for guiding LLM generation through regular expressions and JSON schemas) allow you to define your constraints directly in your code.

Be prepared for a bit of a learning curve. While setting up a basic JSON response is quick, crafting a complex regex constraint can take a few days of debugging. You'll find that prompt engineering still matters; constrained models often show steeper performance gains when you provide a few examples (few-shot prompting) alongside the constraints.

Avoid using this for creative writing or open-ended brainstorming. The restrictions that make it great for data extraction make it terrible for storytelling. Use it when the cost of a syntax error is higher than the cost of a slight dip in linguistic fluidity.

Does constrained decoding slow down my LLM?

Yes, but usually not significantly. Most industry-standard implementations like NVIDIA Triton see an inference time increase of about 5-8%. Some custom implementations might see a larger slowdown (12-15%), but for most production use cases, the trade-off is worth the guarantee of valid output.

Will this stop my model from hallucinating facts?

No. Constrained decoding only guarantees structural validity, not factual accuracy. Your model will still provide a perfectly formatted JSON object, but the data inside that object could still be wrong. You still need verification steps for the content itself.

Which models work best with constrained decoding?

Smaller models (under 14B parameters) and base models often see the biggest boosts in reliability. Mixture-of-Experts (MoE) architectures have also shown about 23.5% better constrained performance compared to traditional dense architectures.

What is the difference between JSON Mode and Constrained Decoding?

JSON Mode is often a simplified version of constrained decoding offered by API providers (like OpenAI) that generally ensures the output is a valid JSON object. Full constrained decoding goes further: it supports specific regex patterns, custom grammars, and strict schema adherence, not just "being valid JSON."

Can I use this for complex regular expressions?

Yes, but be careful. Extremely complex patterns and grammars can lead to a higher rate of semantic errors (an increase of up to 22.3%) because the model struggles to find a valid token that also makes sense in context.

Next Steps and Troubleshooting

If you are just starting out, begin by implementing a simple JSON schema using a library like Outlines. If you notice that the model's answers are becoming nonsensical or too brief, try relaxing your constraints or adding 2-3 high-quality examples to your prompt to guide the semantic flow.

For those deploying at scale in financial or healthcare sectors, prioritize the integration of constraints directly into your inference server (like vLLM) to minimize latency. If you encounter a scenario where a larger model is performing poorly under constraints, consider switching to an unconstrained approach combined with a robust post-processing validation script. Sometimes the "natural" path is the most accurate one for 70B+ parameter models.
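A post-processing validation step for the unconstrained route might look like the sketch below. It uses only the standard library; the required-keys format, the retry count, and the function names are assumptions for illustration, and `call_model` stands in for whatever client you use to query the model.

```python
import json

def validate_response(raw, required):
    """Parse raw model output and check required keys and their types.

    Returns (data, None) on success or (None, reason) on failure.
    """
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return None, f"invalid JSON: {exc.msg}"
    if not isinstance(data, dict):
        return None, "top-level value is not an object"
    for key, expected_type in required.items():
        if key not in data:
            return None, f"missing key: {key}"
        if not isinstance(data[key], expected_type):
            return None, f"wrong type for {key}"
    return data, None

def generate_with_retries(call_model, required, max_attempts=3):
    """Call the model until its output validates or attempts run out."""
    last_error = None
    for _ in range(max_attempts):
        data, last_error = validate_response(call_model(), required)
        if data is not None:
            return data
    raise ValueError(f"no valid output after {max_attempts} attempts: {last_error}")

# Fake model: the first reply is missing its closing brace, the second is valid.
replies = iter([
    '{"ticker": "ACME", "price": 12.5',
    '{"ticker": "ACME", "price": 12.5}',
])
required = {"ticker": str, "price": float}

result = generate_with_retries(lambda: next(replies), required)
print(result)
```

This only checks structure and types, so it complements rather than replaces any checks on whether the values themselves are correct.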