It is easy to feel overwhelmed by the buzz surrounding artificial intelligence regulation. With some rules already in force and new deadlines approaching rapidly, the gap between innovation and legal compliance is shrinking fast. As 2026 unfolds, organizations deploying AI systems face a clear reality: the EU AI Act, the European Union's comprehensive regulatory framework governing artificial intelligence systems, is no longer theoretical. The bans are active, the governance rules are running, and the penalties for missing compliance windows are significant.

This article cuts through the noise to explain exactly what the regulation demands for generative AI tools. You do not need a law degree to understand your obligations, but you do need to know which category your technology falls into. Whether you are building a foundation model or integrating chatbots into customer service, the path forward depends entirely on risk classification.

The Four Tiers of AI Risk Classification

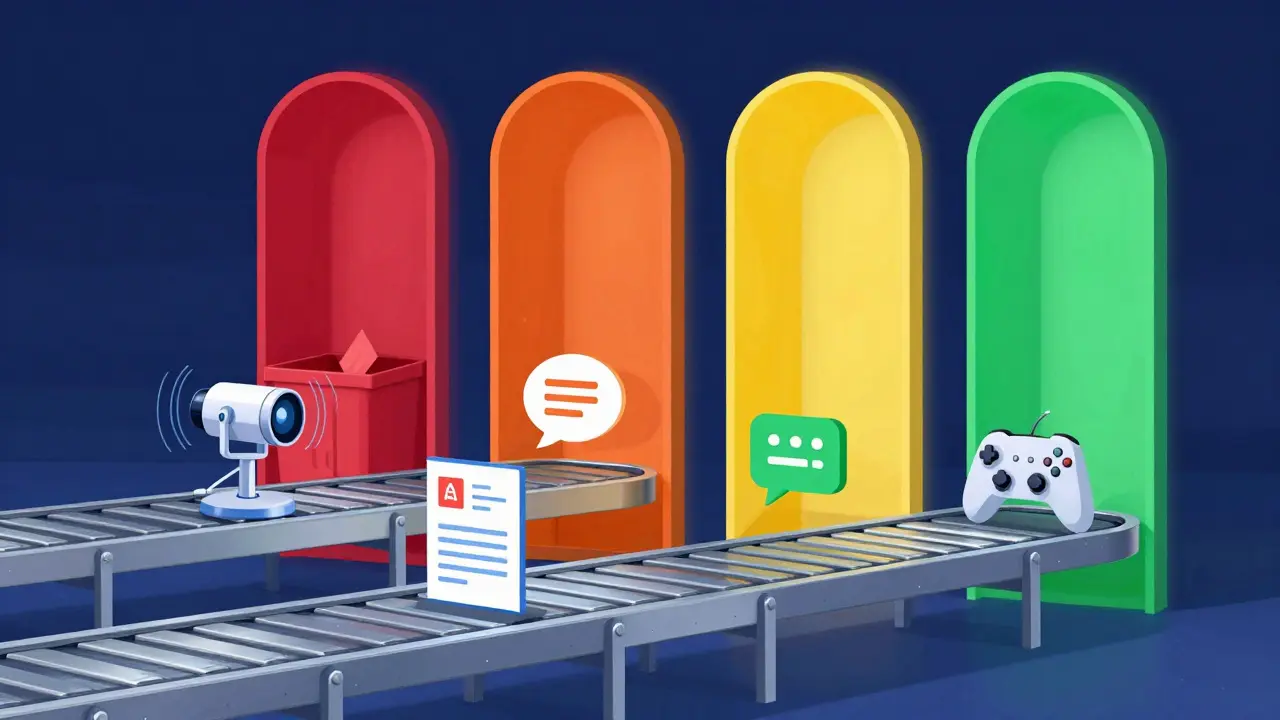

The core of the regulatory approach is a tiered system. Instead of banning everything, the legislation categorizes systems based on potential harm. Think of it as a traffic light system, but with four colors instead of three. Each color dictates a different set of rules.

The most severe category covers unacceptable-risk applications. These systems are banned outright because they violate fundamental rights. Examples include social scoring systems and certain biometric identification technologies used in publicly accessible spaces. These prohibitions became enforceable on February 2, 2025. If you currently operate any tool similar to these examples, you must stop immediately.

The next level targets high-risk AI systems. This group covers critical areas where errors could seriously harm people's health, safety, or fundamental rights. Examples include recruitment tools that screen CVs, educational assessment systems, and law enforcement support tools. For these systems, strict compliance obligations apply, covering data quality, human oversight, and cybersecurity measures, among others.

| Risk Category | Regulatory Status | Examples |
|---|---|---|
| Unacceptable Risk | Banned (Article 5) | Social scoring, biometric surveillance |
| High Risk | Strict Compliance Required | CV screening, education grading, medical devices |
| Limited Risk | Transparency Obligations | Chatbots, deepfakes, general-purpose models |
| Minimal Risk | No Specific Rules | Video games, spam filters |
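As a rough illustration, the table can be encoded as a lookup from example use case to tier. This is a minimal sketch built on a hypothetical `RiskTier` enum and `classify` helper. It knows only the application examples from the table. Any other input returns `None`, which signals that the use case needs a proper review against Article 5 and the high-risk categories. Matching strings is no substitute for legal analysis.

```python
from enum import Enum
from typing import Optional


class RiskTier(Enum):
    """The four tiers from the table, with their regulatory status."""
    UNACCEPTABLE = "Banned (Article 5)"
    HIGH = "Strict compliance required"
    LIMITED = "Transparency obligations"
    MINIMAL = "No specific rules"


# Hypothetical lookup holding only the application examples listed in the table.
EXAMPLE_USES = {
    "social scoring": RiskTier.UNACCEPTABLE,
    "biometric surveillance": RiskTier.UNACCEPTABLE,
    "cv screening": RiskTier.HIGH,
    "education grading": RiskTier.HIGH,
    "medical device": RiskTier.HIGH,
    "chatbot": RiskTier.LIMITED,
    "deepfake": RiskTier.LIMITED,
    "video game": RiskTier.MINIMAL,
    "spam filter": RiskTier.MINIMAL,
}


def classify(use_case: str) -> Optional[RiskTier]:
    """Return the tier of a known example, or None if the use case needs legal review."""
    return EXAMPLE_USES.get(use_case.strip().lower())


print(classify("CV screening").value)  # Strict compliance required
print(classify("route planning"))      # None
```

Returning `None` rather than defaulting to "minimal" is deliberate. An unknown system should trigger a review, not be waved through.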

Where Generative AI Fits in the Framework

Most people ask where large language models and image generators sit in this structure. The answer lies in the General-Purpose AI (GPAI) category. Unlike specific applications designed for a single task, GPAI models act as foundational building blocks. They can be tweaked and adapted for countless downstream uses.

Because these models are so versatile, the regulation treats them differently from a specialized tool. Governance rules for GPAI providers took effect on August 2, 2025, so providers have already been operating under them for several months. The focus here is less on pre-market approval and more on transparency and safety by design.

You need to distinguish between the model itself and the application built on top of it. A foundation model might fall under limited risk, but if a company uses that model to triage patient health data, the resulting application becomes a high-risk system. Both the upstream provider and the downstream operator have responsibilities.
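The split between the upstream provider and the downstream operator can be sketched as a small function. It looks at whether an application is built on a general-purpose model and at the tier of its use case. The `Deployment` record, the duty lists, and the tier strings are hypothetical simplifications that paraphrase obligations named in this article. They do not reproduce the regulation's wording.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical duty lists, paraphrasing obligations named in this article.
GPAI_PROVIDER_DUTIES = ["copyright policy", "training content summary",
                        "technical documentation", "model card"]
OPERATOR_DUTIES = {
    "high": ["data quality", "human oversight", "cybersecurity"],
    "limited": ["transparency to users"],
    "minimal": [],
}


@dataclass
class Deployment:
    """One application and the model it is built on (all fields hypothetical)."""
    name: str
    built_on_gpai: bool  # does the application use a general-purpose model?
    use_tier: str        # tier of the application's use case: "high", "limited" or "minimal"


def responsibilities(d: Deployment) -> dict[str, list[str]]:
    """Split duties between the upstream model provider and the downstream operator."""
    return {
        "upstream provider": list(GPAI_PROVIDER_DUTIES) if d.built_on_gpai else [],
        "downstream operator": list(OPERATOR_DUTIES[d.use_tier]),
    }


triage = Deployment("patient triage assistant", built_on_gpai=True, use_tier="high")
print(responsibilities(triage)["downstream operator"])
# ['data quality', 'human oversight', 'cybersecurity']
```

The operator's duties depend only on the use case, not on the underlying model. That reflects the point above: the same foundation model can sit under a low-stakes chatbot or a high-risk triage tool.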

Specific Obligations for Providers

For those developing or distributing generative AI systems, the obligations have shifted from vague promises to concrete documentation. Ignorance will not be a defense once the broader transparency rules take effect in August 2026.

First, there is the matter of copyright. Providers must put in place a policy to comply with EU copyright law. This does not mean avoiding copyrighted material entirely, but it does require a mechanism to honor rights holders' opt-outs from text and data mining. Providers must also publish a sufficiently detailed summary of the content used for training. The European Commission has provided a template for this summary to standardize the information across the industry.
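Honoring opt-outs and preparing the summary both depend on an inventory of training sources. This minimal sketch assumes a hypothetical `Source` record with an `opted_out` flag. It drops opted-out sources and counts the remainder by kind, which gives raw material for the public summary. It does not reproduce the fields of the Commission's official template.

```python
from __future__ import annotations

from collections import Counter
from dataclasses import dataclass


@dataclass
class Source:
    """Hypothetical inventory entry for one training data source."""
    name: str
    kind: str        # e.g. "web crawl", "licensed", "public domain"
    opted_out: bool  # rights holder reserved text-and-data-mining rights


def usable_sources(sources: list[Source]) -> list[Source]:
    """Drop sources whose rights holders have opted out."""
    return [s for s in sources if not s.opted_out]


def summarize(sources: list[Source]) -> dict[str, int]:
    """Count the remaining sources by kind, as raw material for the public summary."""
    return dict(Counter(s.kind for s in usable_sources(sources)))


inventory = [
    Source("news-site-a", "web crawl", opted_out=True),
    Source("forum-b", "web crawl", opted_out=False),
    Source("stock-photos-c", "licensed", opted_out=False),
    Source("archive-d", "public domain", opted_out=False),
]
print(summarize(inventory))
# {'web crawl': 1, 'licensed': 1, 'public domain': 1}
```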

Second, technical documentation is mandatory. You need a private dossier, informally a "black box" file, that shows regulators exactly how the model was built and tested. It is not just code: it records the development process, data sources, and testing results. Regulators can request it if issues arise later.

Third, communication matters. You must provide customers with a model card. This document specifies what the model is meant to do and, crucially, what it is not meant to do. It sets realistic expectations for users and helps prevent misuse. If a customer uses your tool for something unsafe, having defined limitations in the model card can help limit liability.
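The core of a model card is a statement of intended and out-of-scope uses, and proposed uses can be checked against it. The `ModelCard` class, its fields, and the example uses below are hypothetical. They are not a prescribed format from the Act.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class ModelCard:
    """Hypothetical minimal model card: what the model is and is not meant to do."""
    model_name: str
    intended_uses: set[str] = field(default_factory=set)
    out_of_scope_uses: set[str] = field(default_factory=set)

    def check_use(self, use: str) -> str:
        """Compare a proposed customer use against the documented scope."""
        if use in self.out_of_scope_uses:
            return "out of scope"
        if use in self.intended_uses:
            return "intended"
        return "undocumented"


card = ModelCard(
    "demo-llm",
    intended_uses={"drafting marketing copy", "summarizing documents"},
    out_of_scope_uses={"medical diagnosis", "credit decisions"},
)
print(card.check_use("medical diagnosis"))      # out of scope
print(card.check_use("summarizing documents"))  # intended
print(card.check_use("legal advice"))           # undocumented
```

The "undocumented" result matters as much as the other two. It marks gaps where the card says nothing, and those gaps are worth closing before a customer finds them.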

- Maintain policies ensuring copyright compliance

- Publish summaries of training datasets

- Create and keep a technical "black-box" dossier

- Provide detailed model cards to customers

- Report serious incidents to authorities (required for models with systemic risk)

Transparency and Deepfake Labeling

Deception is one of the biggest concerns regarding generative AI. To counter this, the Act mandates that AI-generated content must be identifiable to users. If an AI creates an image, text, or audio file intended to inform the public, it must carry a label indicating its artificial origin.

This requirement applies broadly. When you interact with a chatbot, the system must clearly indicate that it is an AI agent, not a human. Providers of generative systems must also mark synthetic audio, images, video, and text in a machine-readable format. Deployers who publish deepfakes must visibly disclose that the content is artificial. Machine-readable marking ensures that automated systems and search engines can also detect synthetic content.
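To show what "machine-readable" can mean in practice, here is a sketch of a sidecar label tied to one piece of content by a SHA-256 hash. The JSON fields and the functions `make_label` and `is_labeled_ai` are hypothetical and not taken from any standard. Production systems would more likely use an established scheme such as C2PA content credentials or watermarking. A visible disclosure for deepfakes is a separate requirement.

```python
import hashlib
import json


def make_label(content: bytes, generator: str) -> str:
    """Build a JSON label declaring content AI-generated, tied to it by a SHA-256 hash."""
    label = {
        "ai_generated": True,
        "generator": generator,
        "sha256": hashlib.sha256(content).hexdigest(),
    }
    return json.dumps(label, sort_keys=True)


def is_labeled_ai(content: bytes, label_json: str) -> bool:
    """True if the label declares AI generation and its hash matches this exact content."""
    label = json.loads(label_json)
    return (
        label.get("ai_generated") is True
        and label.get("sha256") == hashlib.sha256(content).hexdigest()
    )


image_bytes = b"placeholder for synthetic image bytes"
label = make_label(image_bytes, "hypothetical-image-model")
print(is_labeled_ai(image_bytes, label))      # True
print(is_labeled_ai(b"edited bytes", label))  # False
```

Binding the label to a hash means edited content no longer matches its label. However, a detached sidecar file is easy to strip, which is why embedded metadata and watermarks are usually preferred.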

These transparency rules are scheduled to become fully applicable on August 2, 2026. If you are currently rolling out marketing campaigns using AI-generated assets, you should prepare your workflow to embed these labels now. Waiting until the deadline risks falling behind competitors who are already compliant.

Upcoming Deadlines and Penalties

We are approaching a critical period in the legislative timeline. While some rules became applicable in 2025, enforcement intensifies in 2026. Understanding the penalty structure helps you prioritize resources.

Fines are substantial. General violations can attract penalties of up to €15 million or 3% of global annual turnover, whichever is higher. Prohibited practices carry much steeper costs: up to €35 million or 7% of global annual turnover. These figures represent a real financial risk, especially for large enterprises.
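The "whichever is higher" rule is easy to work through. For a hypothetical company with €2 billion in turnover, 7% is €140 million, which exceeds €35 million, so the ceiling for prohibited practices is €140 million. For SMEs and start-ups, the Act caps fines at the lower of the two figures instead. The function below is a sketch of the maximum only. Actual fines are set case by case, and the turnover figures are illustrative.

```python
def max_fine_eur(turnover_eur: int, fixed_cap_eur: int, pct: int, sme: bool = False) -> int:
    """Upper bound of a fine: the higher of the fixed cap and pct% of turnover (the lower for SMEs)."""
    share = turnover_eur * pct // 100
    return min(fixed_cap_eur, share) if sme else max(fixed_cap_eur, share)


turnover = 2_000_000_000  # hypothetical €2 billion global annual turnover
print(max_fine_eur(turnover, 35_000_000, 7))  # prohibited practices: 140000000
print(max_fine_eur(turnover, 15_000_000, 3))  # general violations: 60000000
```

Integer arithmetic keeps the euro amounts exact and avoids floating-point rounding on large numbers.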

GPAI-specific fines commence on August 2, 2026. This gives current developers a few months to finalize their copyright compliance and documentation. Once that date passes, the European Commission can actively enforce these provisions through its AI Office, which it established to oversee these processes.

For high-risk systems embedded in regulated products like medical devices, the transition period extends to August 2, 2028. Stand-alone high-risk systems generally follow the August 2026 timeline, depending on when adequate support measures are confirmed. This staggered approach allows for adaptation, but it does not excuse total inaction.
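A staggered timeline is easier to track as data. This sketch uses the dates given in this article. The AI literacy requirement, which the article places in 2025, is pinned to February 2, 2025, alongside the prohibitions. The milestone labels are paraphrases, and later amendments could move any of these dates.

```python
from datetime import date
from typing import List, Optional, Tuple

# Dates as given in this article; later amendments could move them.
MILESTONES: List[Tuple[date, str]] = [
    (date(2025, 2, 2), "prohibited practices banned; AI literacy required"),
    (date(2025, 8, 2), "GPAI governance rules apply"),
    (date(2026, 8, 2), "GPAI fines, transparency rules, most stand-alone high-risk systems"),
    (date(2028, 8, 2), "high-risk systems embedded in regulated products"),
]


def in_force(on: date) -> List[str]:
    """Milestones that have taken effect on or before the given date."""
    return [label for start, label in MILESTONES if start <= on]


def next_milestone(on: date) -> Optional[Tuple[date, str]]:
    """The earliest milestone still ahead of the given date, or None."""
    upcoming = [(start, label) for start, label in MILESTONES if start > on]
    return min(upcoming) if upcoming else None


today = date(2026, 3, 15)
print(len(in_force(today)))      # 2
print(next_milestone(today)[0])  # 2026-08-02
```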

Innovation Through Regulatory Sandboxes

Compliance does not mean stopping innovation. The Act recognizes that testing new technologies in a vacuum is risky for everyone involved. Article 57 requires each EU Member State to establish at least one AI regulatory sandbox by August 2, 2026.

These sandboxes are controlled environments where companies can test their AI technologies before full market deployment. Participants receive regulatory guidance. Those who follow the agreed sandbox plan in good faith are also shielded from administrative fines for infringements arising during testing. It is essentially a safe space to prototype while working closely with supervisors.

Using a sandbox is particularly useful for complex projects where the risk classification is unclear. By engaging early, developers can get feedback on whether their system qualifies as high-risk or limited-risk before investing millions in product development. Many national agencies are already setting up frameworks to manage these programs effectively.

Practical Steps for Immediate Action

If you are reading this in March 2026, you have a narrow window to align before the heavy enforcement dates arrive. Start by auditing your inventory. Map every AI system you use or sell against the risk categories. Determine which are high-risk and which fall under GPAI rules.
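The inventory audit can start as a simple grouping exercise. In this minimal sketch, the inventory rows and system names are hypothetical. Systems without a tier land in a "needs review" bucket, and any model your organization provides as a general-purpose model is listed separately for the GPAI rules.

```python
from collections import defaultdict

# Hypothetical inventory rows: (system name, risk tier or None if not yet classified,
# whether your organization provides it as a general-purpose model)
INVENTORY = [
    ("resume-ranker", "high", False),
    ("support-chatbot", "limited", False),
    ("spam-filter", "minimal", False),
    ("legacy-scoring-tool", None, False),
    ("foundation-model-v2", None, True),
]


def audit(inventory):
    """Group systems by tier and list the models that fall under GPAI provider rules."""
    by_tier = defaultdict(list)
    gpai_models = []
    for name, tier, is_gpai in inventory:
        by_tier[tier or "needs review"].append(name)
        if is_gpai:
            gpai_models.append(name)
    return dict(by_tier), gpai_models


by_tier, gpai_models = audit(INVENTORY)
print(by_tier["needs review"])  # ['legacy-scoring-tool', 'foundation-model-v2']
print(gpai_models)              # ['foundation-model-v2']
```

The foundation model appears in both outputs on purpose. Its provider duties apply regardless of use, while the tier of each application built on it still has to be determined.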

Next, review your data supply chains. Do you have licenses for the training data you used? Can you produce a summary of that data? If not, start compiling it now. Documentation takes time, and retroactive analysis is always harder than ongoing tracking.

Finally, prepare your internal communications. Employees need to understand that using unauthorized tools is a compliance risk. AI literacy training for staff became mandatory for providers and deployers of AI systems on February 2, 2025. Revisit that training to ensure everyone knows the difference between acceptable and unacceptable use cases.

When do the GPAI fines actually start?

Fines specifically targeting General-Purpose AI providers commence on August 2, 2026. While governance rules were applicable earlier, the financial penalties for non-compliance begin at that August deadline.

Is my internal chatbot considered high-risk?

Generally, internal chatbots fall under the limited-risk tier, which carries transparency obligations. However, if the chatbot influences hiring decisions or access to credit, it becomes a high-risk system subject to stricter controls.

Do US companies need to comply with the EU AI Act?

Yes. The Act has extraterritorial scope. It applies if you place AI systems or general-purpose AI models on the EU market, or if the output of your AI system is used in the EU, regardless of where your headquarters are located.

What happens if I miss the sandbox registration deadline?

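The August 2, 2026 sandbox deadline applies to Member States, which must set sandboxes up; it is not a registration deadline for companies. Either way, missing a sandbox does not exempt you from compliance. You must meet the standard regulatory requirements. Sandboxes are optional support mechanisms that aid compliance, not replacements for the law.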

How do I determine if my AI is a GPAI model?

A model is classified as GPAI if it displays significant generality, can competently perform a wide range of distinct tasks, and can be integrated into a variety of downstream systems or applications.