You spent millions on Generative AI, a technology that promises to revolutionize your business operations. Your teams are using it. The tools are live. But when the CFO asks for proof of return on investment, you hit a wall. You can’t tell if the productivity boost came from the AI or just from the new software update you rolled out last month. This is the core attribution challenge in Generative AI ROI: isolating AI effects from other concurrent changes.

In 2025 and 2026, this problem exploded. MIT’s report on the 'GenAI Divide' revealed that 95% of organizations failed to show measurable financial returns despite spending $30-40 billion. It’s not because the AI doesn’t work. It’s because we’re trying to measure a complex, interconnected transformation with outdated, industrial-era metrics. If you want to secure budget for next year, you need to stop guessing and start isolating.

Why Traditional ROI Models Fail for GenAI

We used to buy machines that did one thing. A conveyor belt moved boxes faster. You measured the speed before and after. Simple. Generative AI is different. It doesn’t just replace a task; it changes how people think, write, code, and interact. The value is distributed across customer satisfaction, risk reduction, innovation speed, and employee morale.

Traditional frameworks look for direct cost savings. They miss the soft ROI entirely. Dr. Erik Brynjolfsson from the Stanford Digital Economy Lab put it bluntly: "We're trying to measure the value of electricity by counting how many candles it replaces." When you apply 20th-century accounting to 21st-century cognitive work, the numbers don't add up. You end up with vague promises of "efficiency gains" that CFOs reject. To fix this, you must accept that GenAI creates non-linear value that requires multi-dimensional tracking, not just a single bottom-line figure.

The Data Collection Burden: What You Need to Track

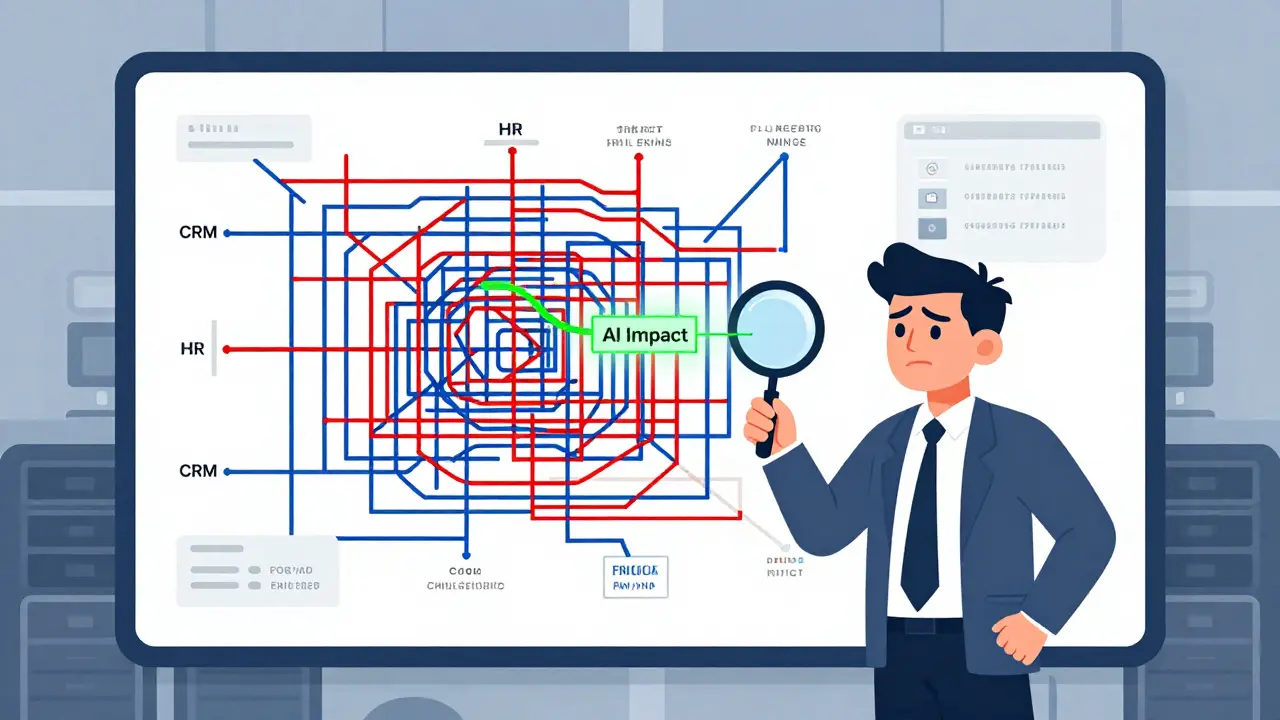

To isolate AI effects, you need data. Lots of it. Deloitte's 2025 study found that measuring AI impact requires 57% more data collection effort than traditional tech projects. You aren't just looking at one system. You need to integrate data from 8-12 disparate sources (CRM, HR platforms, code repositories, customer support logs) to see the full picture.

Most companies fail here because their data lineage is broken. Techverx reported that 51% of enterprise data stacks lack the capability to trace an AI output back to a specific business outcome. Without this link, you’re flying blind. You need robust pipelines that capture granular interaction data. Think about capturing 15-20 data points per AI interaction. Did the user accept the suggestion? Did they edit it? How much time did it save? If you aren’t recording these micro-interactions, you can’t prove macro-results.
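As a rough illustration of what that granular interaction data can look like, here is a minimal Python sketch of one logged event. The schema, field names, and the `summarize` helper are hypothetical (not taken from any particular observability tool), and the sketch carries about a dozen of the 15-20 fields suggested above. The `outcome_ref` field is the piece that preserves lineage, linking an AI output to a deal, ticket, or pull request.

```python
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional
import json


@dataclass(frozen=True)
class AIInteractionEvent:
    """One AI suggestion and what the user did with it (hypothetical schema)."""
    interaction_id: str
    user_id: str
    team_id: str                 # lets you compare AI-enabled vs. control teams
    use_case: str                # e.g. "sales_email", "code_review"
    model_version: str           # separates model upgrades from other changes
    occurred_at: datetime
    accepted: bool               # did the user keep the suggestion?
    chars_suggested: int
    chars_edited: int            # characters changed after acceptance
    task_seconds: float          # observed time to finish the task
    baseline_seconds: float      # pre-deployment median for this task type
    outcome_ref: Optional[str] = None  # link to a CRM deal, ticket, or PR

    @property
    def edit_ratio(self) -> float:
        """Share of the suggestion the user rewrote (0.0 = used as-is)."""
        return self.chars_edited / self.chars_suggested if self.chars_suggested else 0.0

    @property
    def seconds_saved(self) -> float:
        """Positive when the task beat its pre-deployment baseline."""
        return self.baseline_seconds - self.task_seconds

    def to_json(self) -> str:
        """Serialize for an event pipeline; datetimes become ISO 8601 strings."""
        record = asdict(self)
        record["occurred_at"] = self.occurred_at.isoformat()
        return json.dumps(record)


def summarize(events):
    """Roll micro-interactions up into the figures finance will ask about."""
    accepted = [e for e in events if e.accepted]
    return {
        "interactions": len(events),
        "acceptance_rate": len(accepted) / len(events) if events else 0.0,
        "mean_edit_ratio": (sum(e.edit_ratio for e in accepted) / len(accepted)) if accepted else 0.0,
        "total_seconds_saved": sum(e.seconds_saved for e in events),
    }


ts = datetime(2025, 3, 3, tzinfo=timezone.utc)
events = [
    AIInteractionEvent("i1", "u1", "sales-east", "sales_email", "v1", ts,
                       True, 400, 40, 300.0, 900.0, "deal-17"),
    AIInteractionEvent("i2", "u2", "sales-east", "sales_email", "v1", ts,
                       False, 350, 0, 950.0, 900.0),
]
print(summarize(events))
```

Note that the rejected suggestion still counts: its task ran slower than baseline, so it reduces the total time saved rather than silently dropping out of the numbers.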

Establishing Baselines and Control Groups

You can’t measure improvement without knowing where you started. Yet, only 31% of AI initiatives establish pre-deployment baselines. That’s a fatal error. Before you launch your generative AI tool, document your current state. How long does it take to write a sales email? How many errors occur in code reviews? Get specific numbers.
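A baseline carries more weight with a CFO when it comes with a range rather than a single number. The sketch below assumes you have logged pre-deployment task durations in minutes plus a per-task flag for whether the work needed rework, and it reports the median duration with a percentile-bootstrap 95% confidence interval. The function name, inputs, and sample values are hypothetical.

```python
import random
import statistics


def baseline_summary(durations_min, needed_rework, n_boot=2000, seed=7):
    """Summarize pre-deployment performance for one task type.

    durations_min: minutes per completed task before the AI launch.
    needed_rework: one bool per task, True if it had to be corrected later.
    """
    rng = random.Random(seed)  # fixed seed so the baseline is reproducible
    boot_medians = sorted(
        statistics.median(rng.choices(durations_min, k=len(durations_min)))
        for _ in range(n_boot)
    )
    return {
        "n": len(durations_min),
        "median_minutes": statistics.median(durations_min),
        # percentile bootstrap: 2.5th and 97.5th percentiles of resampled medians
        "median_95ci": (boot_medians[int(0.025 * n_boot)],
                        boot_medians[int(0.975 * n_boot) - 1]),
        "rework_rate": sum(needed_rework) / len(needed_rework),
    }


# Hypothetical pre-launch sample: minutes to write one sales email
minutes = [12, 15, 9, 22, 14, 18, 11, 16, 13, 20]
rework = [False, True, False, False, False, True, False, False, False, False]
print(baseline_summary(minutes, rework))
```

The median is used instead of the mean because task durations tend to have a long tail of unusually slow cases that would otherwise dominate the baseline.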

Then, you need control groups. In a perfect world, you'd run an A/B test: one team uses AI, another doesn't. Informatica's survey showed that 68% of data science teams struggle to create proper control groups because AI spreads too quickly through an organization, often through unsanctioned use ("shadow adoption"). If everyone starts using it, you lose your comparison group. Successful companies like Siemens use counterfactual analysis: they statistically model what would have happened without the AI. This method gave them 95% confidence that their 27% productivity gain was due to AI, not market trends.
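The article doesn't say which counterfactual model Siemens used. One common approach, sketched below, fits the AI-enabled group's metric to comparison series that never got the tool (other sites, regions, or teams) using only pre-launch data, then projects that fit forward as the "no AI" path, in the spirit of synthetic control. The function name, the use of ordinary least squares, and the synthetic data are assumptions for illustration.

```python
import numpy as np


def counterfactual_effect(treated, controls, n_pre):
    """Estimate the AI effect as actual minus a modeled 'no AI' path.

    treated:  shape (T,) metric for the AI-enabled group, T periods.
    controls: shape (T,) or (T, k) same metric for groups without the tool.
    n_pre:    number of periods before launch (used to fit the model).
    """
    treated = np.asarray(treated, dtype=float)
    controls = np.asarray(controls, dtype=float)
    if controls.ndim == 1:
        controls = controls[:, None]
    # Design matrix: intercept + control series
    X = np.column_stack([np.ones(len(treated)), controls])
    # Fit only on pre-launch data so the AI effect cannot leak into the model
    beta, *_ = np.linalg.lstsq(X[:n_pre], treated[:n_pre], rcond=None)
    counterfactual = X @ beta              # predicted path had AI never launched
    gap = treated - counterfactual
    return {
        "counterfactual": counterfactual,
        "avg_effect": gap[n_pre:].mean(),                # average post-launch lift
        "pre_fit_sd": gap[:n_pre].std(ddof=X.shape[1]),  # pre-launch tracking error
    }


# Synthetic example: 20 weeks, launch after week 12, true lift of 8 units
weeks = np.arange(20)
control = 100 + 0.5 * weeks + 3 * np.sin(weeks)  # shared market/seasonal movement
treated = 10 + 0.9 * control
treated[12:] += 8
result = counterfactual_effect(treated, control, n_pre=12)
print(round(result["avg_effect"], 2))
```

A small pre-launch tracking error (`pre_fit_sd`) is what earns the counterfactual its credibility: if the model can't follow the treated group before launch, its post-launch projection isn't trustworthy. A production analysis would also quantify uncertainty, for example with placebo runs that treat each control unit as if it had adopted the tool.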

Timing Is Everything: Avoiding the Early Assessment Trap

Patience is rare in tech, but essential for AI attribution. Forty-two percent of organizations try to measure ROI within 3-6 months. This is too soon. Generative AI has a learning curve: productivity typically dips while teams learn to prompt effectively, then rises as they master the tool. If you measure during the dip, you'll kill the project.

Mature AI practitioners wait 12-18 months for full maturation. Berkeley Executive Education found that focusing only on short-term ROI causes you to miss 73% of AI's actual value. The biggest wins often come from capability enhancements (things employees can do now that they couldn't do before), which take time to materialize financially. Don't let quarterly reporting cycles force premature conclusions. Use time-series decomposition to separate the initial noise from the long-term signal.
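Here is a minimal sketch of classical additive decomposition (trend + seasonal + residual) in NumPy, applied to a hypothetical weekly productivity series that contains a learning-curve dip, gains that build slowly, and a month-end cycle. All numbers are synthetic. In practice you might reach for `seasonal_decompose` or `STL` in statsmodels rather than hand-rolling it.

```python
import numpy as np


def decompose_additive(y, period):
    """Classical additive decomposition: y = trend + seasonal + residual."""
    y = np.asarray(y, dtype=float)
    n = len(y)
    if period % 2 == 0:
        # centered "2 x period" moving average for even periods
        weights = np.r_[0.5, np.ones(period - 1), 0.5] / period
    else:
        weights = np.ones(period) / period
    half = len(weights) // 2
    trend = np.full(n, np.nan)  # edges stay undefined
    trend[half:n - half] = np.convolve(y, weights, mode="valid")
    detrended = y - trend
    # average detrended value at each position in the cycle, centered on zero
    cycle = np.array([np.nanmean(detrended[i::period]) for i in range(period)])
    cycle -= cycle.mean()
    seasonal = np.resize(cycle, n)  # repeat the cycle across the series
    residual = y - trend - seasonal
    return trend, seasonal, residual


# Hypothetical weekly tasks completed per person for 18 months after launch
weeks = np.arange(78)
dip = -6 * np.sin(np.pi * np.clip(weeks, 0, 16) / 16)  # learning-curve dip, weeks 0-16
gain = np.clip((weeks - 8) * 0.2, 0, 10)               # gains that build as skills mature
season = np.tile([2.0, -1.0, -2.0, 1.0], 20)[:78]      # 4-week month-end cycle
noise = np.random.default_rng(0).normal(0, 1.0, 78)
tasks = 50 + dip + gain + season + noise

trend, seasonal, residual = decompose_additive(tasks, period=4)
early = np.nanmean(trend[13:27])  # roughly months 3-6
late = np.nanmean(trend[65:78])   # roughly months 15-18
print(f"trend at 3-6 months: {early:.1f}, at 15-18 months: {late:.1f}")
```

Comparing the trend component at 3-6 months with the trend at 15-18 months shows how an early read, taken while the dip is still unwinding, understates the long-run level. The seasonal term keeps recurring month-end spikes from being mistaken for AI effects.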

Differentiating Hard vs. Soft ROI

You need to track two types of value simultaneously:

- Hard ROI: Tangible financial impacts. Reduced headcount needs, lower cloud compute costs, fewer customer churn events. These are easy to quantify but often underestimate the total benefit.

- Soft ROI: Productivity gains, reduced burnout, faster innovation cycles, higher employee satisfaction. These are harder to measure but critical for retention and agility.

Leading organizations use hybrid frameworks. American Express combined quantitative metrics (22% reduction in service handling time) with qualitative assessments (developer feedback on code quality). Ignoring soft ROI leaves money on the table and blinds you to cultural shifts that drive long-term success.
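One way to keep hard and soft ROI side by side without forcing soft metrics into dollars is a simple scorecard. The sketch below computes conventional ROI, (benefits - cost) / cost, for the hard items and a direction-aware relative change against baseline for the soft ones. The class name, metric names, and all figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class HybridROI:
    """Hard ROI in dollars plus soft metrics tracked against their baselines."""
    hard_benefits: dict[str, float]  # annualized $ impact per line item
    total_cost: float                # licences, integration, training, change management
    # name -> (baseline, current, higher_is_better)
    soft_metrics: dict[str, tuple[float, float, bool]]

    def hard_roi(self) -> float:
        """Conventional ROI: (benefits - cost) / cost."""
        return (sum(self.hard_benefits.values()) - self.total_cost) / self.total_cost

    def soft_improvements(self) -> dict[str, float]:
        """Relative change vs. baseline, signed so positive always means better."""
        out = {}
        for name, (baseline, current, higher_is_better) in self.soft_metrics.items():
            change = (current - baseline) / baseline
            out[name] = change if higher_is_better else -change
        return out


# All figures below are hypothetical
report = HybridROI(
    hard_benefits={"support_handling_time": 420_000, "cloud_compute": 80_000,
                   "churn_avoided": 150_000},
    total_cost=500_000,
    soft_metrics={
        "employee_satisfaction_1_to_5": (3.6, 3.9, True),
        "burnout_reports_per_month": (40, 34, False),
        "release_cycle_days": (21, 17, False),
    },
)
print(f"hard ROI: {report.hard_roi():.0%}")
for name, delta in report.soft_improvements().items():
    print(f"{name}: {delta:+.1%}")
```

Keeping the soft metrics unconverted avoids arguing over dollar values for morale or burnout, while the sign convention lets a single report show at a glance which ones moved in the right direction.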

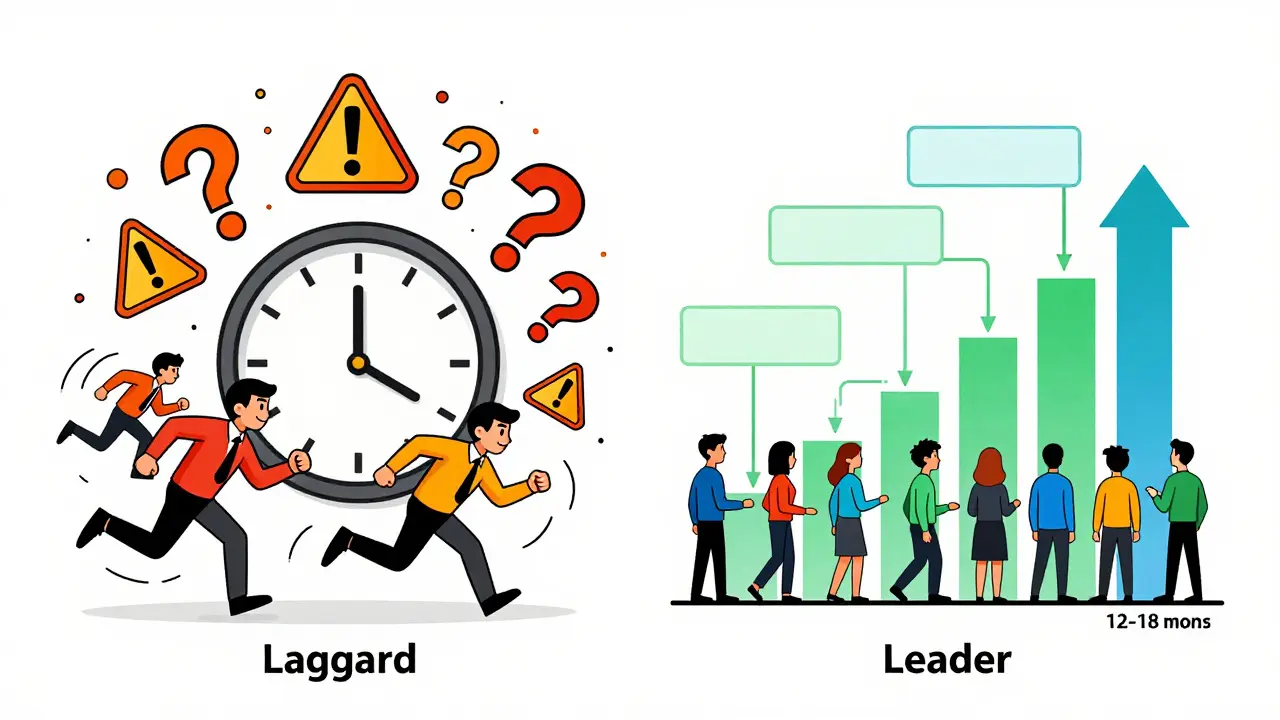

| Attribute | Laggard Organizations (74%) | Leader Organizations (26%) |
|---|---|---|
| ROI Assessment Window | 3-6 months (too early) | 12-18 months (allows maturation) |
| Baseline Establishment | Rare (18% do it) | Standard (92% do it) |
| Attribution Method | Single-metric calculation | Multi-touch attribution + counterfactuals |
| Data Integration | Siloed systems | 8-12 integrated sources |
| Confounding Variables | Ignored (78% overlook them) | Explicitly controlled for |

Practical Steps to Isolate AI Effects Now

If you're stuck in pilot purgatory, here's how to get unstuck:

- Start small. Pick one high-value use case.

- Create an "attribution sandbox": a controlled environment where you can isolate variables. Document every step.

- Use difference-in-differences analysis to compare your AI-enabled teams against similar non-AI teams over time.

- Partner with finance early. Show them you're building rigorous evidence, not hype.

- Adopt a standard framework. In Q2 2025, the AI Measurement Consortium released standardized methodologies for this exact purpose.

It's time to move from promises to proof.
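The difference-in-differences comparison recommended above reduces to simple arithmetic: the AI-enabled group's change minus the comparison group's change over the same window, so anything that hit both groups (like last month's software update) cancels out. The Python sketch below uses hypothetical weekly tickets-resolved figures. It also compares pre-launch slopes, because the estimate is only valid if both groups were trending in parallel before the launch.

```python
from statistics import mean


def diff_in_diff(ai_pre, ai_post, control_pre, control_post):
    """2x2 difference-in-differences on group means."""
    ai_change = mean(ai_post) - mean(ai_pre)
    control_change = mean(control_post) - mean(control_pre)  # shared, non-AI changes
    return ai_change - control_change


def slope(ys):
    """Least-squares slope of ys against period index 0, 1, 2, ..."""
    xs = range(len(ys))
    x_bar, y_bar = mean(xs), mean(ys)
    num = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    den = sum((x - x_bar) ** 2 for x in xs)
    return num / den


# Hypothetical tickets resolved per agent per week, 4 weeks before and after launch
ai_pre, ai_post = [39, 40, 40, 41], [50, 52, 53, 53]
control_pre, control_post = [39, 39.5, 40.5, 41], [44, 45, 45, 46]

effect = diff_in_diff(ai_pre, ai_post, control_pre, control_post)
pre_trend_gap = slope(ai_pre) - slope(control_pre)  # should be close to 0
print(f"DiD estimate: {effect:.1f} tickets/agent/week")
print(f"pre-launch slope gap: {pre_trend_gap:+.2f}")
```

In this example the control teams improved by 5 tickets over the same period without AI, so only 7 of the AI teams' 12-ticket gain is attributed to the tool. With many teams and periods, the same idea is usually expressed as a regression with team and week fixed effects.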

Why is it so hard to attribute ROI to Generative AI?

It is difficult because GenAI integrates into complex workflows rather than replacing a single machine. Its benefits are distributed across multiple departments and include both tangible financial savings and intangible productivity gains. Additionally, concurrent changes like process reengineering or market shifts often mask or amplify AI's true impact, making isolation challenging without rigorous statistical methods.

What is the "GenAI Divide" mentioned in recent reports?

The GenAI Divide refers to the gap between the minority of organizations that have mature measurement frameworks and can prove AI value, and the majority that cannot demonstrate clear financial returns despite significant spending. Estimates of the split vary: MIT's report puts the share without measurable returns at 95%, while the leader/laggard comparison above uses a 26%/74% split. This divide determines which companies will continue to receive executive funding and which will face budget cuts.

How long should I wait before measuring GenAI ROI?

You should wait 12 to 18 months for a comprehensive assessment. Measuring within 3-6 months often captures the initial learning curve dip rather than the sustained productivity gains. Short-term measurements miss approximately 73% of AI's total value, which manifests through long-term capability enhancements.

What is counterfactual analysis in AI attribution?

Counterfactual analysis is a statistical technique used to estimate what would have happened without the AI intervention. Since creating perfect control groups is often impossible due to widespread adoption, this method models the expected baseline performance and compares it to actual results, allowing you to isolate the AI's specific contribution with high statistical confidence.

Do I need to change my data infrastructure to measure AI ROI?

Yes, typically. Effective attribution requires integrating data from 8-12 disparate systems and capturing granular interaction data (15-20 points per interaction). Most existing analytics stacks lack the lineage and storage capacity needed. You may need specialized AI observability tools and real-time feedback loops to connect AI outputs directly to business outcomes.