When you shrink a large language model down to fit on a phone or run cheaply in the cloud, you don’t just save memory; you risk breaking it. That’s the harsh truth behind today’s model compression boom. Companies are cutting down models with quantization, pruning, and distillation because they have to. But how do you know if the shrunken version still works? The old way, checking perplexity, isn’t enough anymore. In fact, it’s misleading. A model can look perfect on paper and still fail when you ask it to reason, translate, or answer a customer’s question. This isn’t theory. It’s happening in production right now.

Why Perplexity Alone Is a Lie

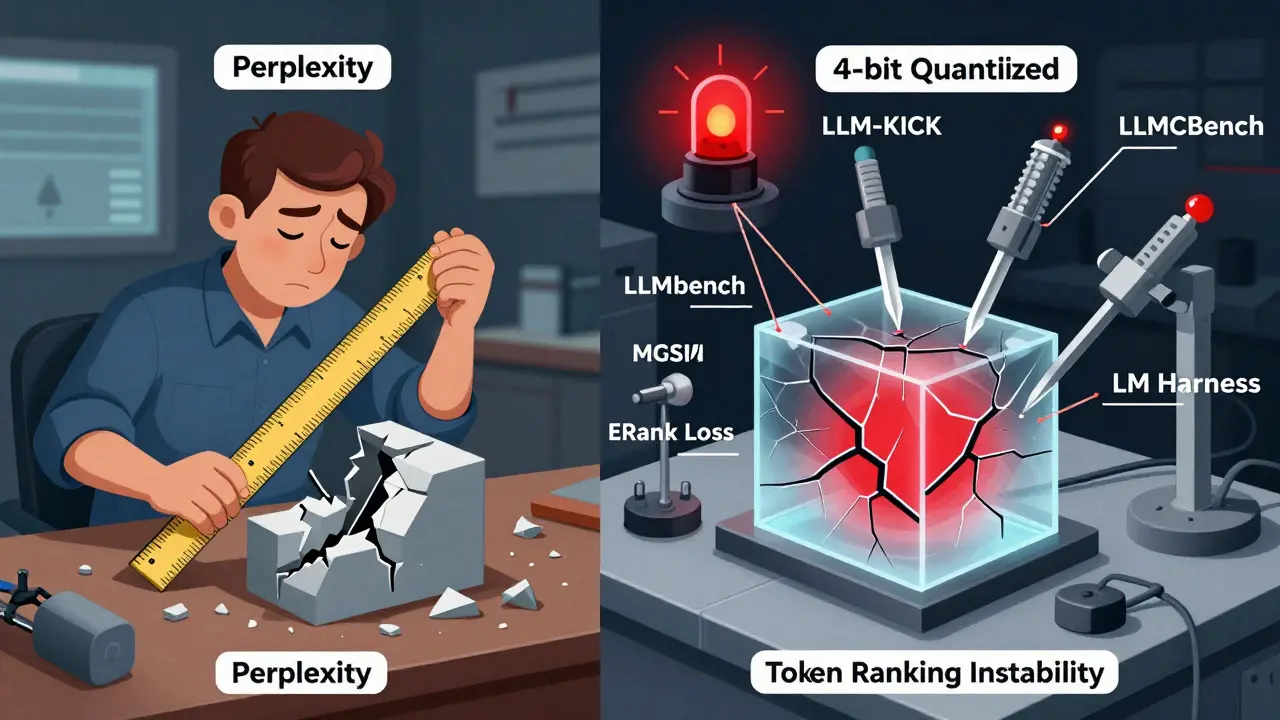

Perplexity used to be the gold standard. It measures how surprised a model is by the next word in a sentence. Lower number? Better. Simple. But after compression, perplexity becomes a trap. Apple’s LLM-KICK research in 2023 showed compressed models could have perplexity scores within 0.5 points of the original, yet still drop 30% on knowledge tasks. Why? Because perplexity doesn’t care if the model gets the right answer. It only cares if the answer looks likely. A model can confidently say "The capital of Australia is Sydney" and still score well on perplexity, even though it’s wrong. That’s not performance. That’s hallucination dressed up as confidence.

Real-world users don’t care about perplexity. They care if their chatbot understands medical jargon. If their translation tool gets the tone right in Swahili. If their customer service bot doesn’t invent facts. Perplexity can’t measure any of that. And yet, until recently, most teams still ran tests on WikiText-2 or C4 datasets and called it a day. That’s like judging a car’s handling by how well it accelerates in a garage.
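To see why perplexity is blind to correctness, it helps to look at the formula itself. The sketch below computes perplexity as the exponential of the average negative log-probability per token. The probability lists are made up for illustration; nothing here comes from a real model.

```python
import math


def perplexity(token_probs):
    """Perplexity = exp(average negative log-probability per token)."""
    avg_nll = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(avg_nll)


# Hypothetical per-token probabilities for two sentences.
# The formula only sees these numbers; it never checks the facts.
wrong_but_fluent = [0.5, 0.5, 0.5, 0.5]   # "... the capital of Australia is Sydney"
right_and_fluent = [0.5, 0.5, 0.5, 0.5]   # "... the capital of Australia is Canberra"

ppl_wrong = perplexity(wrong_but_fluent)
ppl_right = perplexity(right_and_fluent)
```

Both sentences get the same score because the inputs are identical probabilities. Whether the sentence is true never enters the calculation.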

The New Evaluation Trinity

Today, serious teams use three dimensions to evaluate compressed models: size, speed, and capability. Not one. Not two. All three.

- Size: How much disk space or VRAM does it take? A 4-bit quantized 7B model needs about 3.5GB for its weights alone (7 billion parameters at half a byte each). If it’s using 7GB, you didn’t compress it; you just moved the problem.

- Speed: How many milliseconds per token? For real-time apps, under 50ms is the target. Over 100ms? You’re not ready for production.

- Capability: This is where it gets real. Can it do math? Reason step-by-step? Understand context across 500 words? Answer questions that require synthesis? That’s not covered by perplexity. That’s where LLM-KICK and LLMCBench come in.
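The size and speed checks are simple arithmetic, so they are easy to script. Here is a minimal sketch: `weight_footprint_gb` and `latency_verdict` are hypothetical helper names, the footprint counts weights only (no KV cache, activations, or quantization metadata), and the "borderline" label for the 50-100ms range is an assumption, since the text only names the two thresholds.

```python
def weight_footprint_gb(num_params, bits_per_weight):
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


def latency_verdict(ms_per_token, target_ms=50, limit_ms=100):
    """Classify per-token latency against the two thresholds from the text."""
    if ms_per_token < target_ms:
        return "on target"
    if ms_per_token <= limit_ms:
        return "borderline"  # assumed label for the gap between the thresholds
    return "not production-ready"


fp16_gb = weight_footprint_gb(7e9, 16)   # uncompressed half-precision baseline
int4_gb = weight_footprint_gb(7e9, 4)    # 4-bit quantized weights
verdict = latency_verdict(42.0)          # hypothetical measured latency in ms
```

If the measured footprint is far above the estimate, something besides the weights is eating memory, and that is worth finding before you ship.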

The EleutherAI LM Harness is the most widely used tool. It tests 62 benchmarks across 350+ subtasks. But even it misses things. A 2025 study found compressed models maintained 98% of their perplexity score but lost 22-37% in token ranking consistency. That means when the model picks the next word, it’s no longer confident in the right ones. It flips between plausible lies. That’s a silent failure. No one notices until a user gets a dangerously wrong answer.
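The text doesn’t say how the study defined token ranking consistency, so treat the following as one plausible stand-in, not the study’s metric. It compares the top-k candidate tokens of the original and compressed model at each step and averages the overlap. The scores are invented for a five-token vocabulary.

```python
def top_k_ids(scores, k):
    """Indices of the k highest-scoring tokens, best first."""
    return sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]


def top_k_overlap(original_scores, compressed_scores, k=3):
    """Fraction of the original top-k tokens that survive in the compressed top-k."""
    a = set(top_k_ids(original_scores, k))
    b = set(top_k_ids(compressed_scores, k))
    return len(a & b) / k


def ranking_consistency(original_steps, compressed_steps, k=3):
    """Average top-k overlap across all decoding steps."""
    overlaps = [top_k_overlap(o, c, k) for o, c in zip(original_steps, compressed_steps)]
    return sum(overlaps) / len(overlaps)


# Hypothetical next-token scores for two decoding steps.
original = [[5.0, 4.0, 3.0, 1.0, 0.0], [0.1, 2.0, 3.0, 4.0, 0.5]]
compressed = [[5.0, 4.1, 0.5, 3.2, 0.0], [0.1, 2.1, 3.0, 4.0, 0.4]]

consistency = ranking_consistency(original, compressed, k=3)
```

Note that the top-1 token is identical at both steps here, so greedy output would not change, yet the ranking underneath has already shifted. That is the kind of drift an accuracy-only check would miss.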

LLM-KICK: The Benchmark That Catches Silent Failures

Apple’s LLM-KICK isn’t another language test. It’s a diagnostic suite built for compression. It has 15 tasks designed to expose hidden weaknesses: multi-hop reasoning, fact verification, counterfactual reasoning, and domain-specific knowledge (like legal or medical terms). Here’s the kicker: LLM-KICK scores correlate with human evaluations at ρ=0.87. Perplexity? ρ=0.32. That’s barely better than random.

One engineer on Reddit shared how his team deployed a 4-bit quantized model that scored 92.3 on LM Harness. It looked flawless. Then customers started getting confused answers about insurance policies. He ran LLM-KICK. The model failed 7 of the 15 tasks. It wasn’t broken; it was degraded. It sounded right but got the logic wrong. LLM-KICK caught it. LM Harness didn’t.
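If you want to check how well your own metric tracks human judgment, a rank correlation is the usual tool. The sketch below assumes the ρ quoted above is Spearman’s rank correlation (the text doesn’t say which correlation it is) and uses the simple formula that is only valid when there are no tied values. The scores are invented.

```python
def ranks(values):
    """Rank each value from 1 (smallest) to n (largest); assumes no ties."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0] * len(values)
    for rank, idx in enumerate(order, start=1):
        result[idx] = rank
    return result


def spearman_rho(x, y):
    """Spearman's rho via 1 - 6*sum(d^2) / (n*(n^2 - 1)); no-ties case only."""
    n = len(x)
    d_squared = sum((a - b) ** 2 for a, b in zip(ranks(x), ranks(y)))
    return 1 - 6 * d_squared / (n * (n * n - 1))


# Hypothetical scores for five compressed models.
human_scores = [1.0, 2.0, 3.0, 4.0, 5.0]
benchmark_scores = [10, 20, 30, 50, 40]   # swaps the order of the last two models

rho = spearman_rho(human_scores, benchmark_scores)
```

A value near 1 means the benchmark orders models the way people do. A value near 0 means its ordering tells you little about what users will experience.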

And it’s not just for big models. LLM-KICK works across sizes, from 2.7B to 6.7B parameters. That’s important because most deployed models are now under 7B. You can’t test compression on 70B models and assume it’ll work on 7B. The behavior changes.

LLMCBench: The Heavyweight Champion

If LLM-KICK is a scalpel, LLMCBench is an MRI. It combines 12 metrics across five dimensions: knowledge, generalization, resource use, hardware compatibility, and trustworthiness under attack. It measures something called ERank (effective rank), which tells you how much structural complexity remains after compression. A 6.7B model might have an ERank of 17.8. After compression, it drops to 13.9. That’s a 22% loss in internal structure. But if accuracy stays the same, you might think you’re fine. You’re not. That structure loss means the model can’t adapt to new contexts. It’s brittle.

LLMCBench also tracks Diff-ERank: how much the structure changes during compression. Larger models show bigger changes: 2.280 vs. 1.410 for smaller ones. That means bigger models are more fragile under compression. You can’t just scale down the same protocol. You need different rules.
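The text doesn’t spell out how ERank is computed. A common entropy-based definition of effective rank takes the singular values of a matrix, normalizes them into a distribution, and exponentiates its entropy. The sketch below uses that definition as an assumption and expects the singular values to be computed elsewhere. It also reproduces the 22% figure from the numbers above.

```python
import math


def effective_rank(singular_values):
    """exp(entropy) of the normalized singular values (assumed ERank definition)."""
    total = sum(singular_values)
    probs = [s / total for s in singular_values if s > 0]
    entropy = -sum(p * math.log(p) for p in probs)
    return math.exp(entropy)


def relative_drop(before, after):
    """Fractional loss going from `before` to `after`."""
    return (before - after) / before


# Four equally strong directions give an effective rank of about 4;
# one dominant direction gives an effective rank of 1.
flat = effective_rank([1.0, 1.0, 1.0, 1.0])
spiky = effective_rank([1.0, 0.0, 0.0, 0.0])

# The ERank values quoted in the text: 17.8 before compression, 13.9 after.
drop = relative_drop(17.8, 13.9)
```

The intuition: the more evenly the singular values are spread, the more independent directions the representation is actually using. Compression that concentrates them lowers the score even when accuracy holds.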

The downside? LLMCBench takes nearly 19 hours to run on one model. Most teams can’t wait that long. So it’s used for final validation, not daily testing. Think of it like a full car inspection before delivery. You don’t do it after every drive. But you do it before you hand over the keys.

What’s Missing? Real-World Context

Even the best benchmarks still miss one thing: context. The WMT25 Model Compression Shared Task in 2025 found that compressed models performed 15-22% worse on low-resource languages like Swahili or Bengali than on English. Why? Because training data for those languages is sparse. Compression amplifies gaps. A model trained mostly on English forgets how to handle sentence structure in Tagalog. No benchmark tests that unless you build it in.

And then there’s trust. A 2025 study showed compressed models develop polarized confidence: some wrong answers are delivered with 99% certainty. Others are hesitant, even when correct. Perplexity doesn’t see this. Human evaluators do. That’s why WMT25 started using LLMs as judges (AI evaluating AI) and got 89.7% agreement with humans. BLEU scores? Only 63.4% agreement. That’s a red flag for any team still relying on BLEU alone.
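Polarized confidence is easy to screen for once you log a confidence value next to each graded answer. This is a rough sketch, not the method from the study: the function name, the 0.9 and 0.6 cutoffs, and the sample data are all assumptions chosen for illustration.

```python
def confidence_profile(predictions, high=0.9, low=0.6):
    """Summarize how often confidence and correctness disagree.

    predictions: list of (confidence, is_correct) pairs.
    """
    n = len(predictions)
    confident_wrong = sum(1 for conf, ok in predictions if conf >= high and not ok)
    hesitant_right = sum(1 for conf, ok in predictions if conf < low and ok)
    return {
        "confident_wrong_rate": confident_wrong / n,
        "hesitant_right_rate": hesitant_right / n,
    }


# Hypothetical graded answers from a compressed model.
graded = [
    (0.99, False), (0.95, True), (0.55, True), (0.40, True),
    (0.97, False), (0.70, True), (0.50, False), (0.92, True),
]

profile = confidence_profile(graded)
```

Run the same profile on the original and the compressed model. If the confident-wrong rate climbs after compression, the model has become more dangerous even if average accuracy barely moved.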

How to Actually Implement This

You don’t need to run all three benchmarks. But you need at least two. Here’s a realistic pipeline:

- Day 1-3: Run perplexity on WikiText-2. Just to make sure the model didn’t break completely.

- Day 4-7: Use EleutherAI LM Harness. Test on 10 core tasks: question answering, summarization, classification, math, logic, translation, code generation, fact checking, dialogue, and instruction following.

- Day 8-14: Run LLM-KICK. Focus on knowledge-intensive tasks. If it fails 3+ tasks, go back to training.

- Final check: If you’re deploying in high-risk areas (health, finance, legal), run LLMCBench once. Don’t automate it. Do it manually. Save the report.
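The stages above can be sketched as a simple release gate that stops at the first failure. Only the "3+ failed LLM-KICK tasks" rule comes from the text. The function name, the 10% perplexity tolerance, and the boolean LM Harness flag are assumptions you would replace with your own thresholds, and the inputs are results you have already collected from each tool.

```python
def evaluate_release(ppl_original, ppl_compressed, harness_ok, kick_failed_tasks,
                     high_risk=False, llmcbench_report=None, max_ppl_ratio=1.1):
    """Walk the staged checks in order and return the first blocking verdict."""
    # Stage 1: perplexity sanity check (tolerance is an assumed value).
    if ppl_compressed / ppl_original > max_ppl_ratio:
        return "stop: perplexity sanity check failed"
    # Stage 2: broad task coverage with LM Harness (pass/fail decided upstream).
    if not harness_ok:
        return "stop: LM Harness regression"
    # Stage 3: LLM-KICK; three or more failed tasks sends the model back.
    if kick_failed_tasks >= 3:
        return "back to training: LLM-KICK failed 3+ tasks"
    # Final check: high-risk deployments need a saved LLMCBench report.
    if high_risk and llmcbench_report is None:
        return "hold: LLMCBench report required"
    return "ship"


clean = evaluate_release(5.0, 5.2, harness_ok=True, kick_failed_tasks=1)
degraded = evaluate_release(5.0, 5.2, harness_ok=True, kick_failed_tasks=7)
risky = evaluate_release(5.0, 5.2, harness_ok=True, kick_failed_tasks=0, high_risk=True)
```

The `degraded` case mirrors the insurance story: perplexity and LM Harness both pass, and only the third stage catches the problem.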

Hardware matters too. LLM-KICK needs 48GB+ VRAM for a 7B model. If you’re on a 24GB GPU, you’re not testing accurately. You’re guessing.

The Bottom Line

Model compression isn’t a one-step trick. It’s a trade-off, and you need to measure the cost properly. Perplexity is dead as a standalone metric. Teams that still rely on it are gambling. The industry is moving fast. By 2026, 95% of enterprise LLMs will be compressed. And 95% of those will be evaluated with multi-dimensional protocols. If you’re not ready, you’ll deploy models that seem fine… until they’re not.

The best teams don’t just compress. They validate. They test. They look for what’s broken, not what’s still there. Because in AI, what you can’t measure, you can’t trust. And what you can’t trust, you shouldn’t deploy.

Why is perplexity still used if it’s unreliable?

Perplexity is still used because it’s fast, cheap, and familiar. Most teams don’t have the time, hardware, or expertise to run advanced benchmarks. It’s also baked into training pipelines and academic papers. But that’s changing. As deployment moves to edge devices and real-world applications, teams are forced to adopt better methods. Perplexity is the starting point, not the finish line.

Can I use just one evaluation tool instead of multiple?

You can, but you’ll miss critical failures. EleutherAI LM Harness gives you broad coverage but misses subtle reasoning flaws. LLM-KICK catches those but doesn’t measure speed or size. LLMCBench covers everything but is too slow for regular use. The smart approach is layered: use LM Harness for daily checks, LLM-KICK for major releases, and LLMCBench for final validation. One tool isn’t enough anymore.

Do I need to test on every language my model supports?

Yes, if you care about real-world performance. Compression hurts low-resource languages harder. A model that works perfectly in English might fail badly in Urdu or Vietnamese because training data is sparse. The WMT25 shared task proved this. Always test on at least 2-3 low-resource languages you support. If performance drops more than 15%, your compression is too aggressive.
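A per-language check like this takes a few lines. The sketch assumes the 15% rule means a relative drop against the uncompressed model’s score (the answer above doesn’t say relative or absolute), and the language codes and scores are invented.

```python
def flag_language_regressions(original, compressed, max_drop=0.15):
    """Return languages whose relative score drop exceeds max_drop."""
    flagged = {}
    for lang, base in original.items():
        drop = (base - compressed[lang]) / base
        if drop > max_drop:
            flagged[lang] = round(drop, 3)
    return flagged


# Hypothetical task scores before and after compression.
original_scores = {"en": 0.80, "sw": 0.60, "bn": 0.50}
compressed_scores = {"en": 0.78, "sw": 0.48, "bn": 0.44}

flagged = flag_language_regressions(original_scores, compressed_scores)
```

In this made-up example English barely moves, Bengali loses 12%, and Swahili loses 20%, so only Swahili crosses the line. An English-only test would have reported a healthy model.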

How long does it take to set up a full evaluation pipeline?

Expect 80-120 hours for a first-time setup. That includes installing frameworks, downloading datasets, configuring hardware, and writing automation scripts. Apple’s team documented this in 2024. After that, each new model takes 2-5 days to evaluate fully. Automation helps cut that time by 37%. Start small: get LM Harness running first. Add LLM-KICK next. Don’t try to do everything at once.

Are there free tools I can use to start?

Yes. EleutherAI LM Harness is open-source and free. LLM-KICK is also publicly available on GitHub. Both require Python and a GPU with 24GB+ VRAM. Hugging Face’s evaluation suite will integrate LLM-KICK in July 2025, making it even easier. You don’t need to buy anything. You just need to invest time. The cost of not evaluating? Far higher.

What’s next for evaluation protocols?

The future is integration. MLCommons is building standardized APIs for compression evaluation, expected in September 2025. The "Lottery LLM Hypothesis" is testing whether compressed models compensate for lost capability by using external tools, like search or calculators. Evaluation will shift from measuring the model alone to measuring the system it’s part of. That’s the next frontier.

Amber Swartz

March 7, 2026 AT 13:36

Perplexity is a joke at this point. I saw a model that scored 94 on LM Harness and then told a user the capital of Canada was Toronto. TWICE. In a row. With 98% confidence. No one noticed until a customer filed a complaint. We were using perplexity as our main metric. Literally. We thought we were safe. Turns out we were just lucky. And now our whole team is embarrassed. Don’t be like us. Run LLM-KICK. Even if it takes a weekend. You’ll thank yourself later.

Robert Byrne

March 8, 2026 AT 03:35

You’re all missing the point. It’s not about benchmarks; it’s about trust. I’ve seen models that pass every test but still sound like a drunk intern when you ask them to explain a contract clause. That’s not ‘degraded performance.’ That’s betrayal. And companies keep deploying them because they’re cheap. We’re not testing AI. We’re testing how much we’re willing to lie to ourselves. LLMCBench isn’t just a tool; it’s a mirror. And right now, most of us are ugly in it.

Tia Muzdalifah

March 9, 2026 AT 12:11

omg i just tried to use a compressed model for my small biz chatbot and it told a customer ‘your invoice is paid’ when it wasnt?? like wtf. i thought it was just glitchy. now i get it. we were using perplexity. it looked perfect. but it was just… kinda guessing. i switched to lm harness + llm-kick and now it’s 10x better. also, testing in swahili? yes. we got 3 clients from kenya and the model was dead wrong on their names. ouch. learn from my pain lol

Zoe Hill

March 11, 2026 AT 00:53

Hey, I just wanted to say thank you for writing this. I work in edtech and we were about to deploy a compressed model for student tutoring. We were only checking perplexity. I read this and freaked out. We paused everything, ran LLM-KICK, and found it failed 5/15 tasks on basic math reasoning. It was giving wrong answers but sounding super confident. Scary. We’re redoing our whole pipeline now. You saved us from a lot of angry parents. Seriously. Thank you.

Albert Navat

March 11, 2026 AT 19:13

Let’s cut through the noise. The real issue isn’t perplexity; it’s the institutional inertia of ML teams who treat evaluation like a checkbox. You’re not ‘testing’ anything if you’re not measuring token ranking consistency, ERank decay, or Diff-ERank. If you’re not quantifying structural entropy loss, you’re just running placebo benchmarks. And yes, LLMCBench is slow, but that’s the point. If you can’t afford 19 hours to validate a model that’s going to interact with real humans, you shouldn’t be deploying it. Period. Stop optimizing for speed and start optimizing for safety. The ROI isn’t in latency; it’s in avoiding lawsuits.