It is easy to get swept up in the hype surrounding Large Language Model (LLM) agents: autonomous systems, powered by large language models, that perform specific tasks and make decisions within enterprise workflows. You have seen the demos. You know they can write code, summarize meetings, and answer complex data questions. But when your CFO asks for a hard number on return on investment, the excitement usually hits a wall. How do you put a price tag on an AI assistant that saves time, reduces errors, and makes employees happier? The answer lies not in guessing, but in a structured measurement framework that captures both immediate financial gains and long-term strategic value.

The Core Formula for LLM Agent ROI

Before diving into complex metrics, you need a baseline. The standard formula for calculating Return on Investment (ROI) remains straightforward, even for advanced AI systems: ROI = [(Total Benefits - Total Investment) / Total Investment] x 100. The numerator, total benefits minus total investment, is the net benefit, so the investment is subtracted only once. The result is a percentage that tells you how much net return you generate relative to what you spent.

Let’s look at a realistic scenario. Suppose your company invests $100,000 in deploying LLM agents for customer support and internal data analysis. This investment covers software licensing, integration with existing tools like Slack or Salesforce, and initial training. Over the first year, these agents handle 40% of routine inquiries, saving 2,000 hours of human labor. If those hours are valued at $50 per hour, you have $100,000 in direct labor savings. Additionally, faster response times lead to a 5% increase in customer retention, valued at $50,000. Your total benefits are $150,000. Plugging this into the formula: [(150,000 - 100,000) / 100,000] x 100 equals a 50% ROI. This simple calculation provides a clear starting point for executive discussions, though it only scratches the surface of true value.
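The arithmetic above can be reproduced in a few lines. This is a minimal sketch using only the figures from the scenario; the function name `roi_percent` and the variable names are illustrative choices, not part of any standard library.

```python
def roi_percent(total_benefits: float, total_investment: float) -> float:
    """Return ROI as a percentage of the amount invested."""
    if total_investment <= 0:
        raise ValueError("total_investment must be positive")
    return (total_benefits - total_investment) / total_investment * 100


# Figures taken from the scenario in the text.
hours_saved = 2_000
hourly_rate = 50.0
labor_savings = hours_saved * hourly_rate         # 100,000 in direct labor savings
retention_value = 50_000.0                        # value of the 5% retention lift
total_benefits = labor_savings + retention_value  # 150,000
total_investment = 100_000.0

print(f"ROI: {roi_percent(total_benefits, total_investment):.1f}%")  # ROI: 50.0%
```

Keeping the benefit components as separate variables makes it easy to see how sensitive the result is to each one, for example by setting `retention_value` to zero to get a labor-only figure.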

Key Metrics That Drive Quantifiable Value

To build a robust case, you must move beyond broad estimates and track specific performance indicators. Different workflows require different metrics, but three categories consistently deliver measurable results: search efficiency, task automation, and user adoption.

- Search Success Rate: This measures the percentage of queries that yield relevant results on the first attempt. In enterprise search, if an LLM agent helps an employee find a document in seconds instead of minutes, the cumulative time savings across hundreds of staff members become significant. A success rate above 80% typically indicates high utility.

- Time Saved Per Task: Track the reduction in time spent on repetitive tasks compared to previous methods. For example, if an LLM agent automates the generation of meeting summaries and saves each of ten participants 15 minutes per meeting, that is 2.5 person-hours per meeting, which you can multiply by the number of meetings to calculate the aggregate productivity gain across the organization.

- User Adoption Rate: This is the percentage of employees actively using the new platform. High adoption signals that the tool is user-friendly and delivers perceived value. Low adoption often points to integration issues or a lack of trust in the AI’s outputs, requiring immediate intervention.
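The three metrics above reduce to simple ratios once the underlying counts are available. The following is a rough sketch, assuming you can export query and user counts from your own usage logs; the `UsageSnapshot` schema, the sample counts, and the figure of 20 meetings are hypothetical, and the meeting example assumes each of the ten participants saves 15 minutes.

```python
from dataclasses import dataclass


@dataclass
class UsageSnapshot:
    """Counts for one reporting period (hypothetical schema)."""
    queries: int             # total search queries issued
    first_attempt_hits: int  # queries with a relevant result on the first try
    active_users: int        # employees who used the agent in the period
    eligible_users: int      # employees who have access to it


def search_success_rate(s: UsageSnapshot) -> float:
    """Share of queries that yielded a relevant result on the first attempt."""
    return s.first_attempt_hits / s.queries if s.queries else 0.0


def adoption_rate(s: UsageSnapshot) -> float:
    """Share of eligible employees actively using the platform."""
    return s.active_users / s.eligible_users if s.eligible_users else 0.0


def person_hours_saved(minutes_per_person: float, people: int, occurrences: int) -> float:
    """Aggregate time saved, in hours, across people and repeated tasks."""
    return minutes_per_person * people * occurrences / 60


# Illustrative numbers only, not measurements.
snapshot = UsageSnapshot(queries=1_000, first_attempt_hits=840,
                         active_users=180, eligible_users=300)
print(f"Search success rate: {search_success_rate(snapshot):.0%}")     # 84%
print(f"Adoption rate: {adoption_rate(snapshot):.0%}")                 # 60%
print(f"Hours saved (20 meetings): {person_hours_saved(15, 10, 20):.1f}")  # 50.0
```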

These metrics form the foundation of quantifiable ROI. They provide the hard data needed to justify continued investment and identify areas for improvement. However, focusing solely on time savings misses the broader organizational impact.

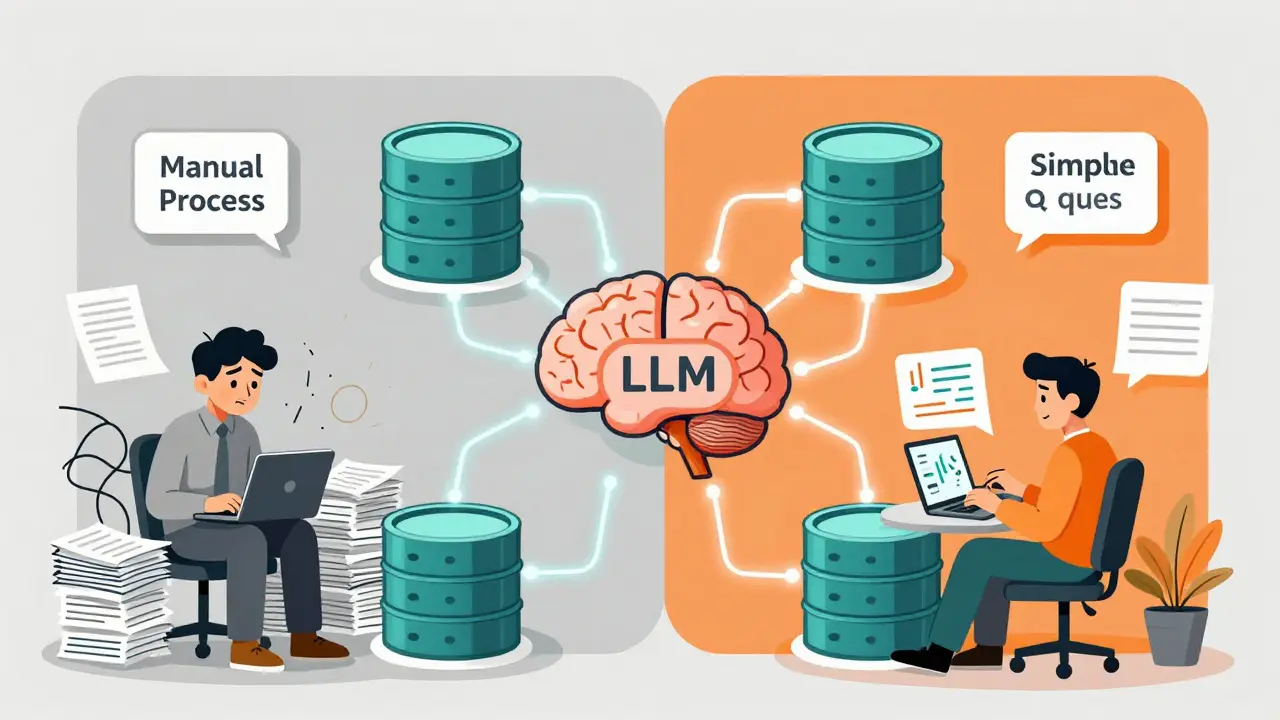

Data Governance and Self-Service Analytics

One of the most compelling use cases for LLM agents is in data governance and self-service analytics. Many organizations struggle with dispersed knowledge and outdated documentation. BlueSoft’s real-world testing demonstrated that LLMs can effectively index database structures, generate metadata, and answer natural language questions without extensive context input. This simplifies information access and builds trust in data assets.

Consider a support team of five specialists serving fifty data users. If each user asks two data-related questions per week, and each question takes twenty-five minutes to resolve, the team fields about 100 questions and spends over forty hours a week (roughly 41.7) on repetitive queries. By implementing an LLM agent for conversational data access, organizations can cut the time spent on these queries by up to 90%. The cost of tokens for LLM services is significantly lower than the cost of manual work hours. This shift not only reduces expenses but also frees up expert resources to focus on creative, high-value work rather than answering basic questions via email or Slack.
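The workload estimate in this example is easy to check. Here is a minimal sketch using the figures from the paragraph; the function and variable names are illustrative, and the 90% figure is treated as the upper bound the text describes rather than a guaranteed outcome.

```python
def weekly_support_hours(users: int, questions_per_user: int,
                         minutes_per_question: float) -> float:
    """Hours per week the support team spends answering data questions."""
    return users * questions_per_user * minutes_per_question / 60


baseline_hours = weekly_support_hours(users=50, questions_per_user=2,
                                      minutes_per_question=25)
max_reduction = 0.90  # "up to 90%", so this is a best case
hours_freed = baseline_hours * max_reduction

print(f"Baseline: {baseline_hours:.1f} hours/week")          # 41.7
print(f"Freed at 90% reduction: {hours_freed:.1f} hours/week")  # 37.5
```

Running the same calculation with a lower reduction, such as 0.5, gives a more conservative figure to present alongside the best case.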

| Metric | Manual Process | LLM Agent Process |
|---|---|---|
| Average Response Time | 25 minutes | < 30 seconds |
| Cost Per Query | $12.50 (based on specialist hourly rate) | $0.05 (token cost) |
| Specialist Distraction | High (frequent interruptions) | Low (focused on complex tasks) |
| Scalability | Limited by headcount | Unlimited (proportional token costs) |
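The cost-per-query row can be derived from the response time. In this sketch, the $30 hourly rate is an assumption inferred from the table ($12.50 for 25 minutes of specialist time), and the weekly volume of 100 queries comes from the fifty-user example; neither is a measured value.

```python
SPECIALIST_HOURLY_RATE = 30.0   # assumption: implied by $12.50 per 25-minute query
MINUTES_PER_QUERY = 25
TOKEN_COST_PER_QUERY = 0.05     # from the table
WEEKLY_QUERIES = 100            # 50 users x 2 questions per week


def manual_cost_per_query(minutes: float, hourly_rate: float) -> float:
    """Labor cost of one manually answered query."""
    return minutes * hourly_rate / 60


manual_unit_cost = manual_cost_per_query(MINUTES_PER_QUERY, SPECIALIST_HOURLY_RATE)
manual_weekly = manual_unit_cost * WEEKLY_QUERIES
agent_weekly = TOKEN_COST_PER_QUERY * WEEKLY_QUERIES

print(f"Manual: ${manual_unit_cost:.2f} per query, ${manual_weekly:,.2f} per week")
print(f"Agent:  ${TOKEN_COST_PER_QUERY:.2f} per query, ${agent_weekly:,.2f} per week")
```

Note that the token figure covers inference only. Licensing, integration, and maintenance belong in the total investment when computing ROI.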

Strategic Benefits Beyond Cost Reduction

Financial savings are important, but they are not the whole story. LLM agents deliver strategic benefits that enhance organizational agility and decision-making. Reduced distraction for data engineers and analysts allows them to concentrate on innovative projects rather than administrative burdens. Stronger team alignment emerges from automatically generated glossaries and data descriptions, helping business and technical teams communicate in a shared language. This eliminates communication barriers and accelerates project timelines.

Furthermore, LLM agents scale well. Performance generally holds up as user counts and system size grow, with costs growing in proportion to usage. This contrasts sharply with traditional hiring strategies, where scaling operations requires significant recruitment and training investments. The ability to onboard new employees faster and engage them more deeply with data assets creates a compounding effect on productivity over time.
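The difference between proportional and headcount-driven scaling can be illustrated with a rough model. This sketch reuses the per-query figures from the table above and adds several assumptions that are not in the source: a $30 hourly rate, a 40-hour week, and specialists who do nothing but answer queries. It also ignores recruitment and training costs, which would widen the gap further.

```python
import math

MINUTES_PER_QUERY = 25
SPECIALIST_HOURLY_RATE = 30.0   # assumption implied by $12.50 per query
HOURS_PER_WEEK = 40             # assumption
TOKEN_COST_PER_QUERY = 0.05


def manual_weekly_cost(queries: int) -> float:
    """Staffing cost grows in steps: each specialist covers a fixed query volume."""
    capacity_per_specialist = HOURS_PER_WEEK * 60 // MINUTES_PER_QUERY  # 96 queries
    headcount = math.ceil(queries / capacity_per_specialist)
    return headcount * HOURS_PER_WEEK * SPECIALIST_HOURLY_RATE


def agent_weekly_cost(queries: int) -> float:
    """Token cost grows linearly with usage."""
    return queries * TOKEN_COST_PER_QUERY


for volume in (100, 1_000, 5_000):
    print(f"{volume:>5} queries/week: manual ${manual_weekly_cost(volume):>9,.2f}"
          f" | agent ${agent_weekly_cost(volume):>7,.2f}")
```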

Advanced Measurement Frameworks

Traditional ROI calculations often fail to capture the nuanced value of AI implementations. To address this, frameworks like the D2L IMPACT Framework incorporate confidence scoring and comprehensive business alignment. This model evaluates six dimensions: Involvement, Mastery, Performance, Alignment, Confidence, and Total ROI. Its distinguishing feature is presenting conservative ROI ranges with documented confidence levels, acknowledging uncertainty in long-term benefit predictions.
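One way to express the idea of a conservative ROI range with documented confidence is sketched below. This is not the D2L framework's own scoring method, which the text does not specify; it is a simple illustration in which each benefit carries a low estimate, a high estimate, and a confidence level, and the conservative bound discounts the low estimate by that confidence. All names and figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class BenefitEstimate:
    name: str
    low: float         # pessimistic annual value
    high: float        # optimistic annual value
    confidence: float  # 0.0 to 1.0, how sure you are the benefit materializes


def roi_percent(total_benefits: float, total_investment: float) -> float:
    return (total_benefits - total_investment) / total_investment * 100


def roi_range(estimates: list[BenefitEstimate], investment: float) -> tuple[float, float]:
    """Return (conservative, optimistic) ROI percentages."""
    conservative = sum(e.low * e.confidence for e in estimates)
    optimistic = sum(e.high for e in estimates)
    return roi_percent(conservative, investment), roi_percent(optimistic, investment)


# Hypothetical estimates built around the earlier $100,000 scenario.
estimates = [
    BenefitEstimate("labor savings", low=80_000, high=100_000, confidence=0.9),
    BenefitEstimate("customer retention", low=20_000, high=50_000, confidence=0.5),
]
low_roi, high_roi = roi_range(estimates, investment=100_000)
print(f"ROI range: {low_roi:.1f}% to {high_roi:.1f}%")  # ROI range: -18.0% to 50.0%
```

Presenting the range rather than the single optimistic figure makes the dependence on the less certain retention benefit visible.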

Similarly, the Anderson Value of Learning Model emphasizes strategic alignment over individual program evaluation. It addresses gaps between learning strategy and business priorities through return on expectations calculations alongside traditional ROI. These frameworks recognize that enterprise AI agents create organizational value that traditional financial metrics alone cannot fully capture. They require multi-dimensional assessment approaches that consider both quantitative data and qualitative improvements.

Tailoring the Narrative for Stakeholders

Different leaders care about different aspects of ROI. To secure buy-in, you must present the same data through lenses that resonate with each stakeholder group:

- Operations Leaders: Focus on process efficiency and performance consistency. Highlight how LLM agents reduce administrative burden, standardize workflow delivery, and provide visibility across teams.

- Finance Executives: Emphasize cost transparency and risk mitigation. Show personnel cost optimization realized through reduced manual task processing and predictable token-based pricing.

- Chief Executives: Frame LLM agents as strategic capabilities that create competitive advantage and growth enablement. Discuss workforce agility and the ability to pivot quickly in response to market changes.

- Board Members: Connect AI initiatives to strategic KPIs that align with enterprise objectives. Demonstrate how AI drives long-term sustainability and shareholder value.

This tailored approach ensures that every stakeholder sees clear value aligned with their respective priorities, making the business case more compelling and easier to approve.

Technical Challenges and Model Selection

Deploying LLM agents at scale involves significant technical challenges that affect ROI realization timelines. Training performant models requires massive datasets, often containing sensitive organizational information. State-of-the-art models are trained on hundreds of gigabytes to terabytes of text, and enterprises often need additional fine-tuning on task-specific data to achieve sufficient performance.

Federated learning methodologies address these privacy concerns by enabling enterprises to train models across siloed datasets without collecting raw data on centralized servers. Major companies like Apple and Google have deployed federated learning at scale, and approximately 80% of global enterprises had reportedly investigated this methodology by 2024.

Choosing the right model is equally critical; selecting an inappropriate LLM can derail ROI. Evaluate models based on performance requirements, infrastructure compatibility, scalability, and total cost of ownership, including training, inference, and maintenance expenses.
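A total cost of ownership comparison along these lines might look like the sketch below. The candidate models, their costs, their quality scores, and the `min_quality` threshold are all hypothetical placeholders; substitute figures from your own vendor quotes and evaluations.

```python
from dataclasses import dataclass


@dataclass
class ModelOption:
    name: str
    training_cost: float           # one-time fine-tuning or setup cost
    inference_cost_per_1k: float   # cost per 1,000 queries
    annual_maintenance: float      # hosting, monitoring, retraining
    quality_score: float           # result of your own evaluation, 0.0 to 1.0


def total_cost_of_ownership(m: ModelOption, annual_queries: int, years: int) -> float:
    """Training plus inference plus maintenance over the planning horizon."""
    inference = m.inference_cost_per_1k * annual_queries / 1_000 * years
    return m.training_cost + inference + m.annual_maintenance * years


def cheapest_adequate(options: list[ModelOption], annual_queries: int,
                      years: int, min_quality: float) -> ModelOption:
    """Pick the lowest-TCO option that meets the performance requirement."""
    adequate = [m for m in options if m.quality_score >= min_quality]
    if not adequate:
        raise ValueError("no option meets the quality requirement")
    return min(adequate, key=lambda m: total_cost_of_ownership(m, annual_queries, years))


# Hypothetical candidates.
options = [
    ModelOption("hosted-api", 0.0, 50.0, 5_000.0, 0.88),
    ModelOption("self-hosted-finetuned", 60_000.0, 10.0, 25_000.0, 0.91),
]
choice = cheapest_adequate(options, annual_queries=5_200, years=3, min_quality=0.85)
print(choice.name, f"${total_cost_of_ownership(choice, 5_200, 3):,.2f}")
```

Filtering on the performance requirement first and then minimizing cost keeps the two criteria separate, so a cheap model that fails evaluation is never selected.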

Capturing Long-Tail Value

Finally, remember that LLM agents generate long-tail value that accrues over extended timeframes. This includes compounding benefits from agent learning and improvement, strategic capabilities that create new business opportunities, and organizational knowledge embedded within deployed systems. Traditional ROI calculations often miss these emerging patterns. To capture long-tail value, reassess ROI periodically rather than treating AI implementations as discrete projects with fixed timelines. Real-time monitoring platforms enable continuous tracking of agent performance against business metrics, transforming AI ROI from defensive reporting into a strategic advantage. By adjusting strategies and reallocating resources based on actual data, you improve the likelihood of achieving or exceeding projected returns.
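Periodic reassessment can be as simple as recomputing cumulative ROI each quarter. In this sketch, the quarterly split is hypothetical and chosen only so that the year sums to the earlier scenario's $150,000 in benefits and $100,000 in investment; real figures would come from your monitoring data.

```python
def cumulative_roi(periods: list[tuple[float, float]]) -> list[float]:
    """Cumulative ROI (%) after each period, given (benefit, cost) pairs."""
    results = []
    total_benefit = 0.0
    total_cost = 0.0
    for benefit, cost in periods:
        total_benefit += benefit
        total_cost += cost
        results.append((total_benefit - total_cost) / total_cost * 100)
    return results


# Hypothetical quarterly (benefit, cost) figures: costs are front-loaded,
# benefits ramp up as adoption grows.
quarters = [(10_000, 70_000), (30_000, 10_000), (50_000, 10_000), (60_000, 10_000)]

for i, roi in enumerate(cumulative_roi(quarters), start=1):
    print(f"Q{i}: cumulative ROI {roi:.1f}%")
```

With these numbers, cumulative ROI starts deeply negative, reaches break-even in the third quarter, and ends the year at 50%, which shows why a single early snapshot can understate the eventual return.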

What is the standard formula for calculating LLM agent ROI?

The standard formula is ROI = [(Total Benefits - Total Investment) / Total Investment] x 100. This expresses the net return as a percentage of the amount invested in the LLM agent system, providing a baseline for financial decision-making.

How do LLM agents impact data governance costs?

LLM agents can reduce data governance costs by up to 90% in areas like conversational data access and automatic labeling. By handling routine queries and generating metadata, they free up specialist time for higher-value tasks, significantly lowering the cost per query compared to manual processes.

Why is user adoption rate a critical metric for LLM ROI?

User adoption rate indicates whether the solution is user-friendly and delivers perceived value. High adoption correlates with increased productivity gains and successful integration into workflows, while low adoption suggests usability issues or lack of trust, which can undermine ROI projections.

What is the D2L IMPACT Framework?

The D2L IMPACT Framework is a comprehensive measurement model that evaluates AI implementations across six dimensions: Involvement, Mastery, Performance, Alignment, Confidence, and Total ROI. It presents conservative ROI ranges with confidence scores, acknowledging uncertainty in long-term benefit predictions.

How does federated learning affect LLM deployment costs?

Federated learning allows enterprises to train LLMs on siloed datasets without centralizing sensitive data, reducing privacy risks and compliance costs. While it adds technical complexity, it enables safer and more scalable model training, ultimately supporting broader adoption and sustained ROI.

What is long-tail value in the context of LLM agents?

Long-tail value refers to compounding benefits that accrue over time, such as improved agent learning, new business opportunities, and embedded organizational knowledge. These benefits are often missed in initial ROI calculations but become significant contributors to overall returns as systems mature.

How should I present LLM ROI to finance executives versus CEOs?

For finance executives, emphasize cost transparency, risk mitigation, and personnel cost optimization. For CEOs, frame LLM agents as strategic capabilities that drive competitive advantage, workforce agility, and growth enablement. Tailoring the narrative ensures each stakeholder sees value aligned with their priorities.

Can LLM agents improve search success rates in enterprise environments?

Yes, LLM agents can significantly improve search success rates by delivering relevant results on the first attempt. This reduces time spent on information retrieval and minimizes frustration, leading to substantial cumulative productivity gains across the workforce.