Imagine trying to read a book where every word is chopped into random syllables. You’d miss the meaning, right? That’s exactly what happens when you pair a Large Language Model with a poorly designed tokenizer. Most developers treat tokenization as a boring preprocessing step, something you configure once and forget. But recent research shows this mindset is costing teams up to 30% of their model’s potential capacity. The way you break text into tokens doesn’t just affect speed; it fundamentally shapes how well your AI understands code, numbers, and even slang.

If you’re building or fine-tuning an LLM in 2026, ignoring tokenizer design is like buying a Ferrari and filling it with low-grade fuel. You might get somewhere, but you won’t go fast, and you’ll burn out quickly. This guide cuts through the academic jargon to show you exactly how different tokenization strategies impact real-world model quality, efficiency, and accuracy.

The Hidden Cost of Bad Tokenization

We often think of tokenizers as simple translators, converting human text into numbers for machines. In reality, they are the lens through which the model sees the world. Dr. Elena Rodriguez from Stanford University puts it bluntly: "A mismatched tokenizer can blind your model to crucial linguistic patterns." When that lens is blurry, the model struggles.

Consider the problem of numerical representation. Standard tokenizers often split numbers into individual digits or arbitrary chunks. If you have a financial analysis model, the number "1,000,000" might be split into a different number and shape of tokens than "999,999," so two adjacent values end up with inconsistent embeddings. A study archived in PubMed Central (PMC11339515) highlighted that this digit-length variability causes significant embedding inconsistencies. Users on Reddit’s r/LocalLLaMA reported that switching to custom numerical token handlers improved accuracy by up to 18%. Without proper handling, your model treats numbers as opaque symbols rather than mathematical values, leading to errors in tasks requiring precise calculation.
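To make the idea of a "custom numerical token handler" concrete, here is a minimal sketch of one common approach: strip thousands separators and emit every digit as its own piece, so numbers of any length are built from the same ten symbols. The helper name `split_numbers` and the regex are assumptions for illustration; this is not the handler used in the cited study or by the Reddit users.

```python
import re

# Hypothetical pattern: digits with optional ",ddd" groups and an optional decimal part.
_NUMBER = re.compile(r"\d+(?:,\d{3})*(?:\.\d+)?")

def split_numbers(text: str) -> list[str]:
    """Split on whitespace, but break every number into single-character pieces."""
    pieces: list[str] = []
    pos = 0
    for match in _NUMBER.finditer(text):
        # Plain text before the number is split on whitespace as usual.
        pieces.extend(text[pos:match.start()].split())
        # Remove thousands separators, then emit one piece per character.
        pieces.extend(match.group().replace(",", ""))
        pos = match.end()
    pieces.extend(text[pos:].split())
    return pieces

print(split_numbers("from 999,999 to 1,000,000"))
```

With this pre-tokenization step, "999,999" and "1,000,000" differ only in how many digit pieces they contain, not in which arbitrary chunks the tokenizer happened to learn.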

Then there’s the issue of vocabulary overlap. Research from the TokSuite team revealed that different tokenizers share less than 25% of their vocabulary. This means if you train one model with Byte-Pair Encoding (BPE) and another with Unigram, they are essentially speaking slightly different languages. Integrating these models later requires up to 20% more engineering effort. You aren’t just saving time upfront; you’re preventing future headaches.

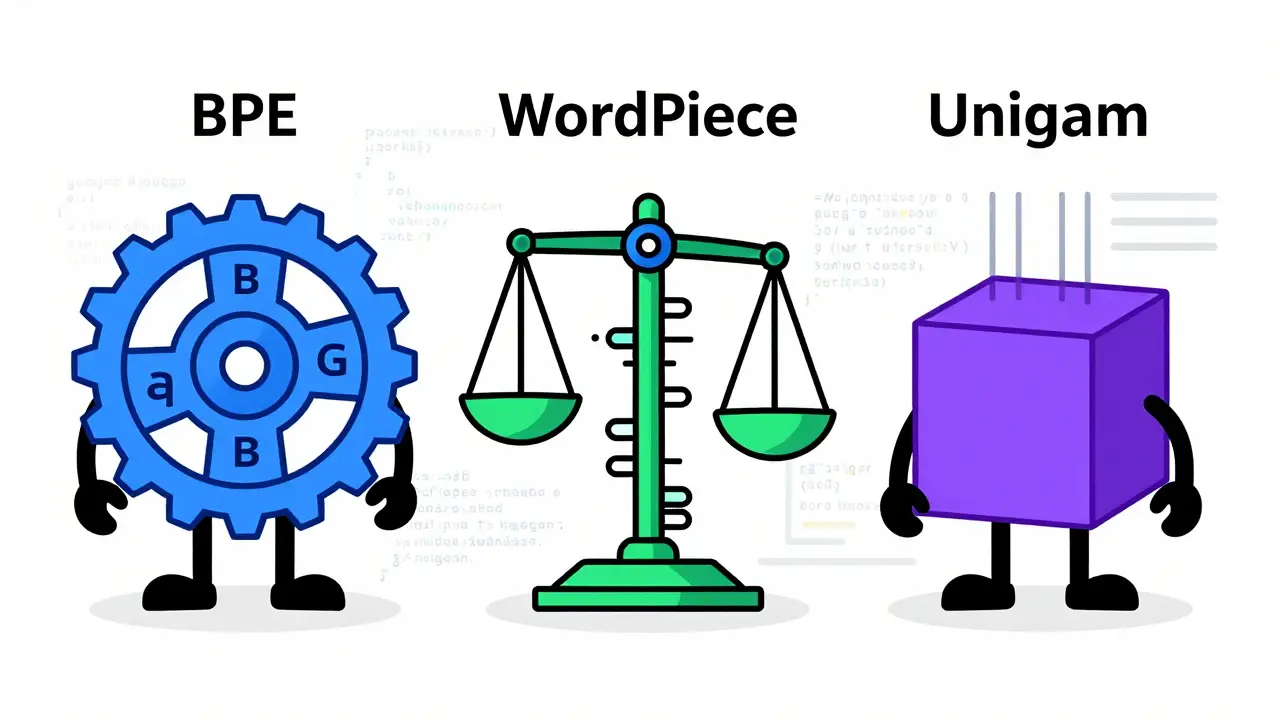

BPE, WordPiece, and Unigram: Which One Wins?

You’ve probably heard these names before, but do you know which one fits your specific use case? Let’s break down the three dominant algorithms powering today’s AI.

| Algorithm | Core Mechanism | Best For | Key Trade-off |
|---|---|---|---|
| Byte-Pair Encoding (BPE) | Merges most frequent character pairs iteratively | General-purpose applications, balanced performance | Moderate compression; standard industry default |
| WordPiece | Selects merges based on likelihood scores | Tasks requiring high granularity (e.g., detailed NLP) | Higher computational cost (10-15% increase) |
| Unigram Language Model | Starts large, removes tokens minimizing likelihood impact | Code analysis, assembly, maximum compression | Slightly lower fertility (granularity preservation) |

Byte-Pair Encoding (BPE), introduced by Philip Gage in 1994 as a data compression technique and popularized by OpenAI’s GPT models, remains the workhorse of the industry. It works by finding the most common pair of adjacent symbols and merging it, repeating until it hits a vocabulary limit. It’s reliable, predictable, and powers giants like GPT-4 (with roughly 100,000 tokens, up from the ~50,000 used by GPT-2 and GPT-3) and Llama 3 (with a custom variant of 128,000 tokens). If you need a safe bet for general text, BPE is your friend.
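The merge loop is easier to see in code. The following is a minimal sketch of character-level BPE training on a toy word list, written from scratch for illustration; the function name `train_bpe` is hypothetical, and production tokenizers add byte-level handling, pre-tokenization, and much faster data structures.

```python
from collections import Counter

def train_bpe(words: list[str], num_merges: int) -> list[tuple[str, str]]:
    """Learn up to `num_merges` merge rules from a list of words."""
    # Each distinct word becomes a tuple of single characters with a frequency.
    corpus = Counter(tuple(word) for word in words)
    merges: list[tuple[str, str]] = []
    for _ in range(num_merges):
        # Count every adjacent symbol pair, weighted by word frequency.
        pairs: Counter = Counter()
        for symbols, freq in corpus.items():
            for a, b in zip(symbols, symbols[1:]):
                pairs[(a, b)] += freq
        if not pairs:
            break  # every word is already a single symbol
        best = max(pairs, key=pairs.get)  # ties go to the pair seen first
        merges.append(best)
        # Rewrite the corpus with the winning pair fused into one symbol.
        new_corpus: Counter = Counter()
        for symbols, freq in corpus.items():
            merged, i = [], 0
            while i < len(symbols):
                if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == best:
                    merged.append(symbols[i] + symbols[i + 1])
                    i += 2
                else:
                    merged.append(symbols[i])
                    i += 1
            new_corpus[tuple(merged)] += freq
        corpus = new_corpus
    return merges

print(train_bpe(["low", "low", "low", "lower", "lowest"], num_merges=2))
```

On this toy corpus the first two merges build up the frequent stem "low", which is the behavior the paragraph above describes: frequent sequences earn their own token, rare ones stay split.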

WordPiece, developed at Google and later adopted by BERT, takes a probabilistic approach. Instead of just counting how often a pair occurs, it scores each candidate merge by how much it improves the likelihood of the training data. This method preserves more granular information, showing 8-12% higher fertility. However, this comes at a price: increased computational costs. If your task requires understanding subtle nuances in every single token, WordPiece shines, but be prepared for heavier compute loads.
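Here is a rough sketch of how a likelihood-style score differs from a raw count. It assumes the scoring rule as it is commonly described in public tutorials, pair frequency divided by the product of the two parts' frequencies; Google's original training code is not public, so treat both the formula and the helper name `wordpiece_scores` as assumptions.

```python
from collections import Counter

def wordpiece_scores(words: list[str]) -> dict[tuple[str, str], float]:
    """Score each adjacent pair as freq(pair) / (freq(first) * freq(second))."""
    symbol_freq: Counter = Counter()
    pair_freq: Counter = Counter()
    for word in words:
        # WordPiece convention: non-initial pieces carry a "##" prefix.
        symbols = [word[0]] + ["##" + ch for ch in word[1:]]
        symbol_freq.update(symbols)
        pair_freq.update(zip(symbols, symbols[1:]))
    return {
        pair: count / (symbol_freq[pair[0]] * symbol_freq[pair[1]])
        for pair, count in pair_freq.items()
    }

words = ["hug"] * 10 + ["pug"] * 5 + ["pun"] * 12 + ["bun"] * 4 + ["hugs"] * 5
scores = wordpiece_scores(words)
print(max(scores, key=scores.get))
```

On this toy corpus the most frequent pair is `("##u", "##g")`, which is what BPE would merge first, but the highest score goes to `("##g", "##s")` because its parts rarely appear apart. That preference for "surprisingly common" pairs over merely common ones is the practical difference between the two algorithms.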

Enter the Unigram Language Model. This algorithm starts with a massive vocabulary and prunes away the least useful tokens. Recent benchmarks from arXiv study 2511.03825v1 (November 2025, going by the arXiv identifier) showed Unigram crushing the competition in compression efficiency. It required 12-18% fewer tokens per instruction compared to BPE and WordPiece on assembly code datasets. If you are processing dense, structured data like code or binary files, Unigram allows you to pack more information into each context window slot.
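The "start large, then prune" idea can be sketched in a few lines. The code below is a heavily simplified illustration, not the full algorithm: it assumes token probabilities are already given, scores each word by its single best segmentation, and removes one token per step without re-estimating probabilities (real implementations re-fit them with EM and prune in batches). The names `viterbi_log_likelihood` and `prune_once` are hypothetical.

```python
import math

def viterbi_log_likelihood(word: str, log_probs: dict[str, float]) -> float:
    """Log-probability of the best segmentation of `word` under a unigram model."""
    best = [0.0] + [-math.inf] * len(word)
    for end in range(1, len(word) + 1):
        for start in range(end):
            piece = word[start:end]
            if piece in log_probs and best[start] > -math.inf:
                best[end] = max(best[end], best[start] + log_probs[piece])
    return best[-1]

def prune_once(words: list[str], log_probs: dict[str, float]) -> dict[str, float]:
    """Remove the multi-character token whose loss hurts corpus likelihood least."""
    def corpus_ll(vocab: dict[str, float]) -> float:
        return sum(viterbi_log_likelihood(w, vocab) for w in words)

    base = corpus_ll(log_probs)
    losses = {}
    for token in log_probs:
        if len(token) == 1:
            continue  # keep single characters so every word stays segmentable
        reduced = {t: p for t, p in log_probs.items() if t != token}
        losses[token] = base - corpus_ll(reduced)
    if not losses:
        return dict(log_probs)
    victim = min(losses, key=losses.get)
    return {t: p for t, p in log_probs.items() if t != victim}

probs = {"h": 0.1, "u": 0.1, "g": 0.1, "s": 0.1, "p": 0.1,
         "ug": 0.2, "hug": 0.2, "hu": 0.1}
vocab = {token: math.log(p) for token, p in probs.items()}
print(sorted(prune_once(["hug", "hugs", "pug"], vocab)))
```

In this toy vocabulary, "hu" is never part of a best segmentation, so dropping it costs nothing and it is pruned first, while "hug" and "ug" survive because removing either would force longer, less likely segmentations.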

The Vocabulary Size Dilemma

Choosing an algorithm is only half the battle. The size of your vocabulary (the total number of unique tokens your model knows) is equally critical. There is no "one-size-fits-all" number here, and getting it wrong can tank your performance.

Smaller vocabularies, around 3,000 tokens, drastically reduce memory overhead. We’re talking about a 60% reduction in embedding layer memory. Sounds great, right? Not so fast. With fewer tokens, the model has to break words down further, increasing sequence length by 25-40%. Longer sequences mean slower processing and higher attention mechanism costs. Plus, smaller vocabularies struggle with Out-Of-Vocabulary (OOV) tokens, especially in technical domains.

Larger vocabularies, such as 128,000 tokens, flip this dynamic. They decrease sequence length by 30-45%, allowing the model to process more instructions per batch. Users on GitHub reported that doubling their vocabulary from 32K to 64K for a code generation model boosted accuracy by 9%. However, these gains come with a steep memory penalty: usage increases by 75-90%. For enterprise deployments where hardware costs are paramount, this trade-off needs careful calculation.
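A quick back-of-the-envelope calculation shows why both sides of this trade-off bite. The sketch below assumes a hypothetical hidden size of 4,096 and 16-bit weights, and it counts only the input embedding table; the percentages quoted above come from the cited reports and depend on their own baselines, so don't expect these toy numbers to reproduce them.

```python
def embedding_memory_mib(vocab_size: int, hidden_size: int = 4096,
                         bytes_per_param: int = 2) -> float:
    """Memory for one embedding table: one vector of `hidden_size` per token."""
    return vocab_size * hidden_size * bytes_per_param / 2**20

def relative_attention_cost(length_ratio: float) -> float:
    """Standard self-attention scales quadratically with sequence length."""
    return length_ratio ** 2

for vocab_size in (3_000, 32_000, 128_000):
    print(f"{vocab_size:>7} tokens -> {embedding_memory_mib(vocab_size):8.1f} MiB")

# Sequences that are 25% to 40% longer cost roughly 1.56x to 1.96x in attention.
print(relative_attention_cost(1.25), relative_attention_cost(1.40))
```

The embedding table grows linearly with vocabulary size, while attention cost grows with the square of sequence length, which is why a smaller vocabulary can save memory on paper and still lose on total compute.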

Data from the same arXiv study (2511.03825v1) confirms that larger vocabularies consistently improve accuracy across all tokenizer types. Models using 35K and 128K vocabularies showed 7-12% higher accuracy in function signature prediction tasks compared to those using 3K. If your dataset contains wide numerical ranges or specialized terminology, lean toward the larger end of the spectrum.

Practical Implementation: Getting It Right

So, how do you actually implement these choices without pulling your hair out? The community consensus points to a few key steps.

- Collect a Representative Corpus: Don’t train on random internet text. Gather at least 100 million tokens from data that mirrors your production environment. If you’re building a medical AI, feed it medical literature. If it’s for code, use GitHub repositories.

- Choose Your Method: Stick to BPE for general use. Switch to Unigram if you’re dealing with code or need maximum compression. Use WordPiece if granularity is non-negotiable.

- Train with Hugging Face: The Hugging Face tokenizers library is the gold standard here. It’s robust, well-documented (4.3/5 stars on GitHub), and widely supported. Custom implementations often suffer from sparse docs and average 3.1/5 ratings.

Expect a learning curve. Developers typically spend 15-20 hours becoming proficient with tokenizer customization. The biggest pain points? Vocabulary size selection (cited in 41% of complaints) and numerical handling (29%). To mitigate this, preprocess your data carefully. The same arXiv study noted that preprocessing enhanced vocabulary alignment across different tokenizers by 5-8%. Simple rules like normalizing whitespace or standardizing number formats before tokenization can yield surprising gains.
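As a starting point for the kind of preprocessing rules mentioned above, here is a small sketch that normalizes Unicode, removes thousands separators, and collapses whitespace before text reaches the tokenizer. The function name `normalize_for_tokenizer` and the specific rules are assumptions for illustration, not the preprocessing used in the study.

```python
import re
import unicodedata

def normalize_for_tokenizer(text: str) -> str:
    """Apply simple, lossy cleanup rules before tokenization."""
    # NFKC folds compatibility characters (non-breaking spaces, full-width digits).
    text = unicodedata.normalize("NFKC", text)
    # Drop commas that sit between a digit and a group of exactly three digits.
    # Caveat: this also merges comma-separated lists such as "100,200".
    text = re.sub(r"(?<=\d),(?=\d{3}\b)", "", text)
    # Collapse any run of whitespace into a single space.
    return re.sub(r"\s+", " ", text).strip()

print(normalize_for_tokenizer("Total:\u00a0 1,000,000\n\nunits"))
```

Rules like these are domain-dependent. For a code corpus where indentation carries meaning, you would likely skip the whitespace step, and whatever you apply at training time has to be applied identically at inference time.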

Future Trends: Adaptive Tokenizers

The field isn’t standing still. By 2027, industry analysts predict average vocabulary sizes will grow from the current 30K-50K range to 80K-120K. But the bigger shift is toward adaptive tokenizers. These systems dynamically adjust their vocabulary based on the input content. Imagine a model that expands its dictionary when it encounters legal jargon and shrinks it for casual chat. The TokSuite team predicts this could reduce average sequence length by 25-35% while maintaining semantic fidelity.

Another frontier is numerical reasoning. Researchers at Google DeepMind are prototyping specialized numerical tokenizers that encode numbers as mathematical expressions rather than character sequences. Early tests showed a 28% improvement in numerical reasoning tasks. If you’re working on finance or science, keep an eye on these developments, because they might render traditional digit-splitting obsolete.

What is the best tokenizer for coding tasks?

For coding tasks, Unigram is generally superior due to its high compression efficiency. Studies show it requires 12-18% fewer tokens per instruction compared to BPE and WordPiece on assembly and code datasets. This allows models to process more lines of code within the same context window, improving both speed and accuracy.

How does vocabulary size affect model memory usage?

There is a direct trade-off. Smaller vocabularies (e.g., 3K) reduce memory overhead by approximately 60% but increase sequence length by 25-40%. Larger vocabularies (e.g., 128K) decrease sequence length by 30-45% but increase memory usage by 75-90%. Choose based on whether your bottleneck is RAM or compute speed.

Why do LLMs struggle with numbers?

Standard tokenizers often split numbers into individual digits or arbitrary chunks, treating "100" and "1000" as unrelated symbols. This creates embedding inconsistencies. Implementing custom numerical tokenization rules or using specialized handlers can improve accuracy by up to 18%.

Is BPE still the industry standard?

Yes, BPE holds a 63% market share among commercial LLMs. It offers the most balanced performance for general-purpose applications. However, Unigram is gaining traction in specialized fields like code analysis due to better compression rates.

How long does it take to customize a tokenizer?

Developers typically require 15-20 hours to become proficient with tokenizer customization. Implementation time ranges from 2-3 days for standard adaptation to 2-3 weeks for custom pipelines with domain-specific rules.