Here is the truth nobody talks about: large language models can talk a good game, but they often don't actually understand what they are saying. We see chatbots answering medical questions or passing the bar exam, yet their internal "brain" works nothing like ours. This creates a dangerous gap between fluency (how good they sound) and knowledge (the structural understanding of how language actually functions).

The Illusion of Understanding

Large language models (LLMs) are AI systems that generate human-like text by predicting the next word in a sequence. When you ask one a question, it does not look up facts in a database the way you would consult a library. Instead, it draws on statistical patterns absorbed from its training data and guesses the most probable continuation. A human child, according to one influential linguistic theory, learns language with the help of an innate bias called Universal Grammar. This biological hardware gives children shortcuts for learning rules quickly. An LLM has no such instinct. It relies entirely on statistical learning, a computational approach that uses the frequency and distribution of words to infer meaning.
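To make "guessing based on probability" concrete, here is a minimal sketch of next-word prediction using simple word-pair counts. It is a toy bigram model, not how a production LLM works (those use neural networks over subword tokens), and the corpus and function names are made up for illustration.

```python
from collections import Counter, defaultdict

def train_bigram(text):
    """Count how often each word follows each other word."""
    words = text.lower().split()
    follows = defaultdict(Counter)
    for prev, nxt in zip(words, words[1:]):
        follows[prev][nxt] += 1
    return follows

def next_word_probs(follows, prev):
    """Turn the raw follow-counts for `prev` into probabilities."""
    counts = follows.get(prev.lower(), Counter())
    total = sum(counts.values())
    return {w: c / total for w, c in counts.items()} if total else {}

def predict(follows, prev):
    """Pick the single most probable next word, or None if `prev` is unseen."""
    probs = next_word_probs(follows, prev)
    return max(probs, key=probs.get) if probs else None

# Hypothetical toy corpus; a real model sees vastly more text.
corpus = "the cat sat on the mat . the cat ate the fish ."
model = train_bigram(corpus)

print(next_word_probs(model, "the"))  # "cat" gets 0.5, "mat" and "fish" 0.25 each
print(predict(model, "the"))          # cat
print(predict(model, "dog"))          # None: no pattern to draw on
```

The model never stores the fact that cats eat fish. It only knows which words tended to follow which, and that is the point the section is making.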

Think about this: A toddler learns to speak native-level English with about 5 million tokens of exposure. Current massive models require petabytes of data to reach a similar level of surface fluency. This tells us that our efficiency comes from biology, while their power comes from brute force. Consequently, they master the common stuff easily but stumble when you get creative or complex. They produce flat, sequential predictions rather than hierarchical structures that humans use.

Benchmark Performance vs. Real Competence

We need to look at the numbers to see where this illusion holds up. On standardized tests, these systems have improved rapidly over the last few years. However, high scores do not always equal deep understanding. For instance, GPT-4 (Generative Pre-trained Transformer 4), released in early 2023, outscored 93% of human test takers on the SAT Reading and Writing test. That sounds impressive until you look at the progression. Earlier versions scored significantly lower, which suggests that scale drives performance even when the learning method stays the same.

| Test Type | GPT-3.5 Score | GPT-4 Score |
|---|---|---|
| SAT Test Competition | 100 | 140 |
| Uniform Bar Exam Percentile | 10th Percentile | 90th Percentile |
| Law School Admission Test | 40th Percentile | 88th Percentile |
| Funduscopic Exam Avg | N/A | 68 Points |

Notice the jump in legal exams? Moving from the 10th percentile to the 90th suggests massive capability gains. But look at the medical domain. In funduscopic examination questions, the model averaged 68 points. General ophthalmologists averaged 61 points. However, disease specialists averaged 73 points. The AI was better than a generalist but failed to match the expert specialist. This confirms that fluency gets you past the basics, but true expertise requires deeper structural knowledge that models still lack.

Reliability and Confidence Issues

Another major issue is how confident these models are about wrong answers. Some models seem to know their limits better than others. If a model answers correctly 60% of the time but sounds just as confident about the 40% it gets wrong, that is a safety risk. PaLM 2, a large language model developed by Google, showed higher stability than smaller peers. Yet its confidence profile was mixed. It answered questions with high confidence correctly 44% of the time, but also confidently answered incorrectly 38% of the time.

Compare that to earlier iterations. The older ChatGPT-3.5, the predecessor version of OpenAI's conversational AI, gave confident and correct answers only 23% of the time. This means it was mostly guessing. Even worse, Claude 2, a generative AI model developed by Anthropic, showed the lowest confidence alignment among the group tested, with only 21% accuracy when confident. These figures suggest that fluency masks instability. You cannot trust the output just because the tone sounds authoritative.
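The confidence figures in this section can be thought of as simple tallies over graded answers. The sketch below shows one way to compute them, assuming each answer has been labeled with two flags: whether the model sounded confident and whether it was correct. The record format, function name, and sample data are hypothetical. Only the 44% and 38% shares come from the text above; the split of the remaining 18 answers is invented so the example adds up to 100.

```python
def confidence_profile(records):
    """Summarize (is_confident, is_correct) pairs into calibration rates."""
    records = list(records)
    n = len(records)
    if n == 0:
        raise ValueError("need at least one graded answer")
    confident_correct = sum(1 for conf, ok in records if conf and ok)
    confident_wrong = sum(1 for conf, ok in records if conf and not ok)
    confident_total = confident_correct + confident_wrong
    return {
        # Share of ALL answers that were confident and right / confident and wrong.
        "confident_correct_rate": confident_correct / n,
        "confident_wrong_rate": confident_wrong / n,
        # Of the answers delivered confidently, how many were actually right?
        "accuracy_when_confident": (
            confident_correct / confident_total if confident_total else None
        ),
    }

# Hypothetical 100-answer sample (assumption: percentages are shares of all answers).
records = (
    [(True, True)] * 44      # confident and correct
    + [(True, False)] * 38   # confident and wrong
    + [(False, True)] * 10   # hesitant but correct (invented split)
    + [(False, False)] * 8   # hesitant and wrong (invented split)
)
profile = confidence_profile(records)
print(profile["confident_wrong_rate"])               # 0.38
print(round(profile["accuracy_when_confident"], 3))  # 0.537
```

Read this way, a model that is confidently right 44% of the time and confidently wrong 38% of the time is right in only a little over half of its confident answers, so a confident tone is a weak signal.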

Where AI Excels: The Fluency Sweet Spot

It is not all bad news. There are areas where statistical fluency is exactly what we want. If you need to summarize a long report, extract specific terminology, or change the gender references in a document to be neutral, LLMs do this well. They possess a kind of working memory in their context window, the amount of information a model can process at once during a session. A window of 2,000 tokens lets the system recall roughly 1,500 words of recently mentioned text verbatim. Humans forget details quickly in that timeframe.
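As a rough illustration of what a context window means in practice, the sketch below keeps only the most recent tokens of a conversation and drops everything older. The function name is hypothetical, and stand-in strings are used as "tokens". Real systems use subword tokenizers and may manage overflow differently.

```python
def fit_to_window(tokens, window_size=2000):
    """Keep only the most recent `window_size` tokens; older ones are dropped."""
    if window_size <= 0:
        raise ValueError("window_size must be positive")
    return tokens[-window_size:]

# Hypothetical session of 2,500 tokens, labeled w0 .. w2499.
session = [f"w{i}" for i in range(2500)]
visible = fit_to_window(session)

print(len(visible))   # 2000
print(visible[0])     # w500: the first 500 tokens are no longer visible
print(visible[-1])    # w2499
```

Everything inside the window is available word for word, which is why the recall looks so strong. Anything that falls outside it is simply gone.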

Furthermore, these systems handle formal languages incredibly well. Code is syntax-heavy and rule-bound, much like natural language. Because of this overlap, instruction tuning techniques like Reinforcement Learning from Human Feedback (RLHF) have made tools like Codex viable for software development. You can ask for Python scripts or SQL queries, and the statistical likelihood of a correct command is high enough to work in practice. Here, the lack of "meaning" matters less because the task is largely pattern matching.

The Structural Gaps We Cannot Ignore

So where do they break? The cracks appear in complex, infrequent grammatical structures. Humans navigate sentences using hierarchy. We know which clause modifies which noun regardless of distance. LLMs tend to flatten this structure. When faced with a sentence containing multiple layers of embedded clauses, the model's probability engine falters. It predicts the next word based on immediate context rather than global rule application.
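The difference between a flat, nearest-word rule and a hierarchical rule can be shown with a toy subject-verb agreement example. This is only an illustration of the two kinds of rule. It does not claim that any real LLM uses the nearest-noun heuristic, and the `NounPhrase` class and both functions are hypothetical.

```python
from dataclasses import dataclass, field

@dataclass
class NounPhrase:
    head: str                                       # the noun the phrase is "about"
    plural: bool
    modifiers: list = field(default_factory=list)   # nested NounPhrase objects

def flatten(np):
    """Left-to-right list of (noun, plural) pairs, discarding the nesting."""
    out = [(np.head, np.plural)]
    for mod in np.modifiers:
        out.extend(flatten(mod))
    return out

def verb_by_nearest_noun(np):
    """Flat heuristic: agree with whichever noun appeared most recently."""
    _, plural = flatten(np)[-1]
    return "are" if plural else "is"

def verb_by_head(np):
    """Hierarchical rule: agree with the head of the whole subject phrase."""
    return "are" if np.plural else "is"

# "The key to the cabinets ___ on the table."
subject = NounPhrase("key", False, [NounPhrase("cabinets", True)])

print(verb_by_nearest_noun(subject))  # are  (wrong: agrees with "cabinets")
print(verb_by_head(subject))          # is   (right: agrees with "key")
```

The hierarchical rule gets the right answer no matter how many modifiers sit between the head noun and the verb, while the flat rule is only right when the nearest noun happens to match.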

This creates a specific type of error known as a hallucination. The model constructs a statement that sounds plausible linguistically but is factually void. Since it lacks the innate constraint of Universal Grammar, it treats impossible sentences as probable ones. Linguistic experts remain crucial here. We need humans to validate prompts and outputs because the model cannot verify its own truthfulness against reality.

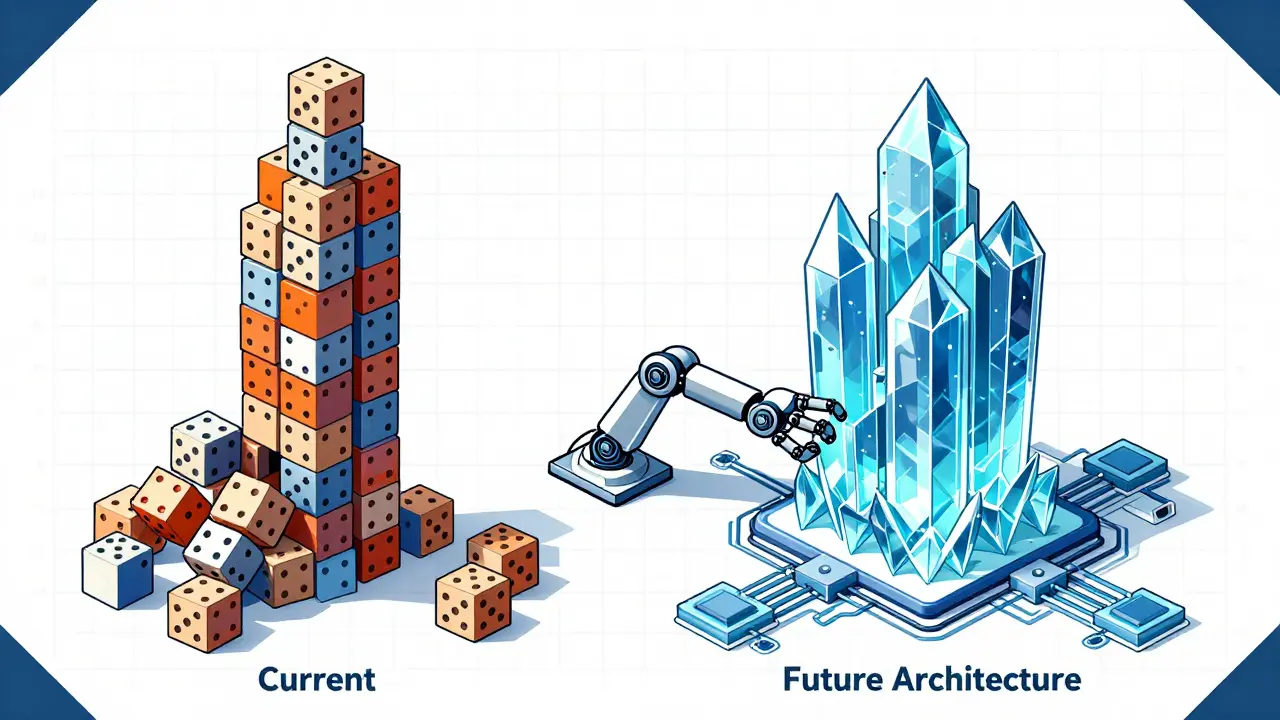

Looking Ahead: Scaling vs. Architecture

Is there a fix coming? Simply adding more data hits a ceiling. To replicate human learning efficiency, we likely need architectural innovations. Researchers suggest adding non-trivial structural priors to the training process. We need models that mimic human inductive biases. This might involve moving beyond pure next-word prediction to models that internally map relationships between concepts rather than just word sequences.

Until then, treat LLMs as powerful autocomplete engines, not reasoning agents. Recognize the strength of their vocabulary and breadth, but verify their logic. The difference between fluency and knowledge is the line between helpful tool and deceptive agent.

Frequently Asked Questions

Does passing a test mean the AI understands the subject?

Not necessarily. High scores on the SAT or Bar Exam indicate strong statistical fluency and pattern matching capabilities, but do not confirm deep conceptual understanding. Models often pass by mimicking answer patterns found in training data rather than grasping the underlying concepts.

Why do children learn language faster than AI models?

Humans have innate biological advantages called Universal Grammar. This allows children to learn native proficiency with roughly 5 million tokens of data, whereas current Large Language Models require petabytes of text to achieve comparable surface-level performance.

Which AI model has the highest confidence reliability?

Recent evaluations suggest GPT-4 offers higher average correlations across trials compared to earlier versions. However, no model is perfect. Even GPT-4 displayed perplexity in about 28% of cases, indicating that confidence levels should always be treated cautiously.

Can LLMs replace medical experts?

Currently, no. While models like GPT-4 score higher than general ophthalmologists on some tests, such as funduscopic exam questions, they fall short of disease specialists. They lack the deep structural knowledge required for complex diagnostics and patient safety decisions.

What is the biggest risk of relying on AI fluency?

The primary risk is trusting incorrect information because it sounds convincing. Since LLMs prioritize statistical probability over factual truth, they can generate plausible-sounding misinformation that passes casual scrutiny.

Madhuri Pujari

March 31, 2026 AT 09:40

You really think this gap matters at all! The technology is already ahead of your fears; way ahead. Humans complain about everything! Constantly. It sounds weak to deny progress. Statistically speaking the models win big time. No one chooses biology over speed. Evolution takes millions of years to change. AI changes in seconds every single day. Ignoring this fact is dangerous for sure. You cling to old methods for comfort only. That behavior is exactly why people get left behind. The numbers prove the shift is inevitable now. Stop acting like a victim of progress. These tools work regardless of your feelings. It is simply time to adapt quickly! Denial does not fix any structural issues. You need to accept the reality of automation. It saves money and time efficiently. Relying on human intuition is outdated thinking. Embrace the machine or get obsolete.

Aryan Jain

April 2, 2026 AT 04:07

They hide the truth from regular folks. Big companies own all this code secretly. People lose control when they do not watch closely. Safety gets ignored for profit mostly. Machines learn bad habits from bad data sources. We should trust nobody with full access. Watch the cameras and the data feeds carefully. The end game is total monitoring always. Keep secrets safe from them at all costs.

Pramod Usdadiya

April 3, 2026 AT 22:30

Our culture values history more than new tech, usually.

Sandeepan Gupta

April 4, 2026 AT 02:37

It is vital to recognize where these systems succeed best. We can leverage their strength without ignoring risks. Understanding the difference helps us build safer tools. Many developers focus too much on raw output quality. We should prioritize verification steps in workflows instead. Collaboration between humans and AI yields better results. Educating teams on limitations is a smart move. This creates a foundation for responsible deployment strategies. Accuracy improves when we validate critical paths manually. The industry benefits from clear guidelines on usage. Trust needs to be earned through consistent performance checks. Keeping expectations realistic prevents unnecessary disappointment later. We move forward with caution and purpose.

Nalini Venugopal

April 4, 2026 AT 04:05

Absolutely love how you highlight the grammar aspect here. Sentence structure really impacts clarity significantly. When syntax works well communication flows smoothly. It makes reading feel less like a struggle overall. Proper punctuation helps convey exact meaning easily. We appreciate posts that dive into linguistic details deeply. Discussion like this keeps our minds sharp daily. Sharing knowledge enriches everyone involved in the chat. You have done a wonderful job explaining the nuances. Let's keep exploring these topics together openly. Community engagement grows stronger with every thoughtful post.

Tarun nahata

April 5, 2026 AT 08:54

That perspective brings such vibrant energy into the conversation. The bold stance you take really lights up the discussion. Innovation dances with danger in those scenarios. We witness lightning strikes of progress constantly around us. Colors of possibility swirl through our digital horizons today. Igniting passion for change is essential for growth. Shiny futures await those who leap forward bravely. Sparking ideas means moving past static fear zones completely. Your words resonate with a fierce electrical charge. Momentum builds when we acknowledge the storm ahead.