Imagine teaching a child to recognize a cat. In traditional supervised learning, you show them thousands of photos labeled "cat" and "not cat." Now imagine instead letting the child play with puzzles where pieces are missing, forcing them to figure out what belongs where based on context. That is self-supervised learning (SSL): a machine learning paradigm in which models learn from unlabeled data by setting their own pretext tasks, or 'puzzles', whose answers serve as pseudo-labels. The approach has transformed generative AI, because the representations a model learns from vast amounts of unlabeled data are the foundation for generating high-quality creative outputs.

The Core Mechanism: How SSL Works

At its heart, self-supervised learning (SSL) solves a massive bottleneck in artificial intelligence: the scarcity of labeled data. According to IBM's 2024 analysis, approximately 98% of available global data remains unlabeled, while human-labeled datasets constitute less than 2%. SSL unlocks this "dark matter" of intelligence, as Meta AI's Chief Scientist Yann LeCun famously termed it in his 2021 publication. By designing internal prediction tasks, such as predicting masked words or reconstructing blurred image regions, models build deep understanding without expensive human annotation.
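
To make the idea of a pretext task concrete, here is a minimal sketch of how masked-word pseudo-labels can be produced from raw text. The function name `make_masked_example`, the `[MASK]` placeholder, and the fixed seed are assumptions chosen for illustration, and the 15% default mirrors the BERT masking ratio cited later in this article. Production masking schemes are more elaborate than this.

```python
import random

MASK = "[MASK]"  # placeholder symbol; an assumption for this sketch

def make_masked_example(tokens, mask_ratio=0.15, seed=0):
    """Hide a random subset of tokens and return (masked_tokens, pseudo_labels).

    pseudo_labels maps each masked position to the original token, so the
    supervision signal comes entirely from the unlabeled input itself.
    """
    if not tokens:
        raise ValueError("need at least one token to mask")
    rng = random.Random(seed)
    # Always mask at least one token so short inputs still yield a target.
    n_mask = max(1, round(len(tokens) * mask_ratio))
    positions = sorted(rng.sample(range(len(tokens)), n_mask))
    masked = list(tokens)
    labels = {}
    for pos in positions:
        labels[pos] = masked[pos]  # remember the answer before hiding it
        masked[pos] = MASK
    return masked, labels

sentence = "the cat sat on the mat and looked out of the window".split()
masked, labels = make_masked_example(sentence)
print(masked)
print(labels)
```

No human ever labels anything here: the "answer key" in `labels` is simply the part of the input that was hidden.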

The technical architecture typically comprises three key components:

- Encoder Network: Usually a Vision Transformer or BERT-style transformer that processes raw input

- Latent Dynamics Module: An RNN or Transformer layer that captures sequential patterns

- Prediction Heads: Task-specific modules that output predictions for the pretext task
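
The three components above can be sketched as a toy pipeline. This is an illustration under loud assumptions rather than a real architecture: the class names are hypothetical, the "encoder" is a plain embedding lookup standing in for a Transformer, the latent module is a single bias-free recurrent layer, and the weights are random and untrained.

```python
import math
import random

rng = random.Random(0)

def random_matrix(rows, cols):
    return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]

def matvec(matrix, vector):
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]

class Encoder:
    """Maps each raw token id to a vector (stand-in for a Transformer encoder)."""
    def __init__(self, vocab_size, dim):
        self.table = random_matrix(vocab_size, dim)

    def __call__(self, token_ids):
        return [self.table[t] for t in token_ids]

class LatentDynamics:
    """Recurrent layer without bias: h_t = tanh(W h_{t-1} + U x_t)."""
    def __init__(self, dim):
        self.w = random_matrix(dim, dim)
        self.u = random_matrix(dim, dim)

    def __call__(self, vectors):
        h = [0.0] * len(self.w)
        states = []
        for x in vectors:
            pre = [a + b for a, b in zip(matvec(self.w, h), matvec(self.u, x))]
            h = [math.tanh(p) for p in pre]
            states.append(h)
        return states

class PredictionHead:
    """Projects a latent state to a probability distribution over the vocabulary."""
    def __init__(self, dim, vocab_size):
        self.proj = random_matrix(vocab_size, dim)

    def __call__(self, state):
        logits = matvec(self.proj, state)
        peak = max(logits)  # subtract the max for a numerically stable softmax
        exps = [math.exp(logit - peak) for logit in logits]
        total = sum(exps)
        return [e / total for e in exps]

vocab_size, dim = 10, 4
encoder = Encoder(vocab_size, dim)
dynamics = LatentDynamics(dim)
head = PredictionHead(dim, vocab_size)

states = dynamics(encoder([3, 1, 4, 1, 5]))  # one latent state per input token
probs = head(states[-1])                     # pretext prediction from the last state
print(len(states), len(probs))
```

Training would adjust all three sets of weights so that `probs` puts high probability on whatever the pretext task hid or held back.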

For text generation, auto-regressive models like GPT-4 use causal language modeling, predicting the next token in a sequence with a context window of up to 32,768 tokens. Masked language modeling approaches, exemplified by BERT, mask 15% of input tokens and train models to predict them, achieving 90% accuracy on these tasks according to Google's original research. For image generation, contrastive learning frameworks like SimCLR learn to pull together augmented views of the same image and push apart views of different images, reaching 76.5% top-1 accuracy on ImageNet with linear evaluation.
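
The contrastive objective can be illustrated with a small, dependency-free version of the normalized temperature-scaled cross-entropy (NT-Xent) loss used by SimCLR-style methods. Treat this as a sketch: the function names, the hand-written 2-D "embeddings", and the temperature of 0.5 are assumptions for illustration, and real implementations run on batched tensors.

```python
import math

def cosine(a, b):
    """Cosine similarity of two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def nt_xent_loss(view_a, view_b, temperature=0.5):
    """view_a[i] and view_b[i] are embeddings of two augmentations of image i."""
    embeddings = view_a + view_b
    n = len(view_a)
    total = 0.0
    for i, anchor in enumerate(embeddings):
        positive = (i + n) % (2 * n)  # index of the other view of the same image
        sims = [cosine(anchor, other) / temperature for other in embeddings]
        # Every embedding except the anchor itself competes in the denominator.
        denominator = sum(math.exp(s) for k, s in enumerate(sims) if k != i)
        total += -sims[positive] + math.log(denominator)
    return total / (2 * n)

view_a = [[1.0, 0.0], [0.0, 1.0]]
aligned = [[0.9, 0.1], [0.1, 0.9]]     # each view sits near its partner
mismatched = [[0.1, 0.9], [0.9, 0.1]]  # each view sits near the wrong image

print(nt_xent_loss(view_a, aligned))
print(nt_xent_loss(view_a, mismatched))
```

The aligned pairing yields the lower loss, which is the signal that drives the encoder to map augmentations of the same image close together.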

From Pretraining to Fine-Tuning: The Two-Stage Process

The power of SSL lies in its two-stage workflow. First comes pretraining on massive unlabeled datasets, where the model learns general patterns and representations. Then comes fine-tuning, where a small labeled dataset adapts the model to a specific task. Because most of the learning happens during pretraining, fine-tuning needs only 10-20% of the labeled data that a purely supervised approach would require.

Consider the computational scale involved. GPT-3's SSL pretraining consumed 3,640 petaflop/s-days on NVIDIA V100 GPUs, according to Brown et al.'s 2020 study. Similarly, Llama 2 required approximately 2.3 million GPU hours for SSL pretraining versus just 200,000 hours for fine-tuning, per Meta's 2023 report. While computationally intensive upfront, this investment pays dividends through dramatically improved performance with minimal additional labeling costs.
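
For readers unfamiliar with the unit, a petaflop/s-day is one petaflop per second sustained for a full day. The short calculation below converts the GPT-3 figure into total floating-point operations and computes the pretraining-to-fine-tuning ratio for Llama 2. It takes the numbers quoted above at face value; the variable and function names are illustrative.

```python
SECONDS_PER_DAY = 24 * 60 * 60
PETA = 10 ** 15

def petaflop_s_days_to_flops(pf_days):
    """Total floating-point operations in a given number of petaflop/s-days."""
    return pf_days * PETA * SECONDS_PER_DAY

gpt3_flops = petaflop_s_days_to_flops(3640)
print(f"{gpt3_flops:.3e}")  # 3.145e+23

# GPU-hour figures as quoted in the paragraph above.
pretraining_hours = 2_300_000
fine_tuning_hours = 200_000
ratio = pretraining_hours / fine_tuning_hours
print(ratio)  # 11.5
```

On these figures, pretraining accounts for more than ten times the GPU time of fine-tuning, which is why it is usually done once and then reused across many downstream tasks.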

| Approach | Data Type | Pretext Task | Performance Metric | Compute Cost |
|---|---|---|---|---|
| Causal Language Modeling | Text | Next token prediction | Perplexity reduction | High (3,640 PF-days for GPT-3) |
| Masked Language Modeling | Text | Masked token prediction | 90% accuracy (BERT) | Medium |
| Contrastive Learning | Images | Similar/dissimilar distinction | 76.5% ImageNet accuracy | Medium-High |
| Image Inpainting | Images | Missing region reconstruction | PSNR/FID scores | Very High (3.5 exaflops for DALL-E 2) |
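
Perplexity, the metric listed for causal language modeling, is the exponential of the average negative log-probability the model assigns to each true next token. The sketch below computes it from a list of such probabilities. The function name and the sample probabilities are made up for illustration.

```python
import math

def perplexity(token_probs):
    """exp of the mean negative log-likelihood of the observed tokens."""
    nll = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(nll)

# A model that always gives the true token probability 0.25 is as uncertain
# as a uniform guess among four options.
print(round(perplexity([0.25, 0.25, 0.25, 0.25]), 6))  # 4.0

# Hypothetical probabilities before and after more pretraining.
early = [0.05, 0.10, 0.02, 0.20]
later = [0.40, 0.55, 0.30, 0.70]
print(perplexity(early) > perplexity(later))  # True
```

Lower is better: a perplexity of 1 would mean the model predicts every next token with certainty.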

Enterprise Adoption and Real-World Impact

The practical value of SSL extends far beyond academic benchmarks. According to Gartner's 2025 AI Survey of 1,500 organizations, 92% of enterprises now incorporate SSL into their AI development pipelines. The global SSL market, valued at $4.7 billion in 2024, is projected to reach $28.3 billion by 2028 at a compound annual growth rate of 42.7%, per MarketsandMarkets' January 2025 report.

Industry applications demonstrate tangible benefits. Financial institutions using SSL for fraud detection analyzed 10 million unlabeled transactions, reducing false positives by 27% and increasing fraud detection by 33% compared to traditional supervised systems, according to Brainvire's 2024 case studies citing McKinsey data. In manufacturing, Siemens implemented SSL on factory sensor data to learn normal equipment operation patterns, achieving 92% accuracy in predicting failures 72 hours in advance with only 5% labeled failure examples, reducing downtime by 18%.
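
The idea of learning "normal" behavior from unlabeled sensor data can be sketched with a next-reading prediction task. This is not the method used in the case studies above, whose details are not given here; it is a deliberately simple stand-in. The synthetic sine-wave signal, the one-step linear predictor, and the threshold of three times the largest training error are all assumptions.

```python
import math

def fit_next_step_predictor(series):
    """Least-squares fit of x[t+1] = slope * x[t] + intercept.

    The targets are just the inputs shifted by one step, so no labels are
    needed. Assumes the series is not constant (non-zero variance).
    """
    xs, ys = series[:-1], series[1:]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    var_x = sum((x - mean_x) ** 2 for x in xs)
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = cov_xy / var_x
    intercept = mean_y - slope * mean_x
    return slope, intercept

def residuals(series, slope, intercept):
    """Absolute one-step prediction error for each consecutive pair of readings."""
    return [abs(y - (slope * x + intercept))
            for x, y in zip(series[:-1], series[1:])]

# Synthetic "normal operation" readings, e.g. a slowly oscillating temperature.
normal = [20.0 + 2.0 * math.sin(0.3 * t) for t in range(200)]
slope, intercept = fit_next_step_predictor(normal)
threshold = 3.0 * max(residuals(normal, slope, intercept))

# Inject a single spike and flag readings the predictor cannot explain.
faulty = list(normal[:50])
faulty[30] += 8.0
alerts = [t + 1 for t, r in enumerate(residuals(faulty, slope, intercept))
          if r > threshold]
print(alerts)
```

The flagged time steps are the ones touching the injected spike. In practice, a handful of labeled failures (the 5% mentioned above) would then be used to calibrate the threshold or train a small classifier on top.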

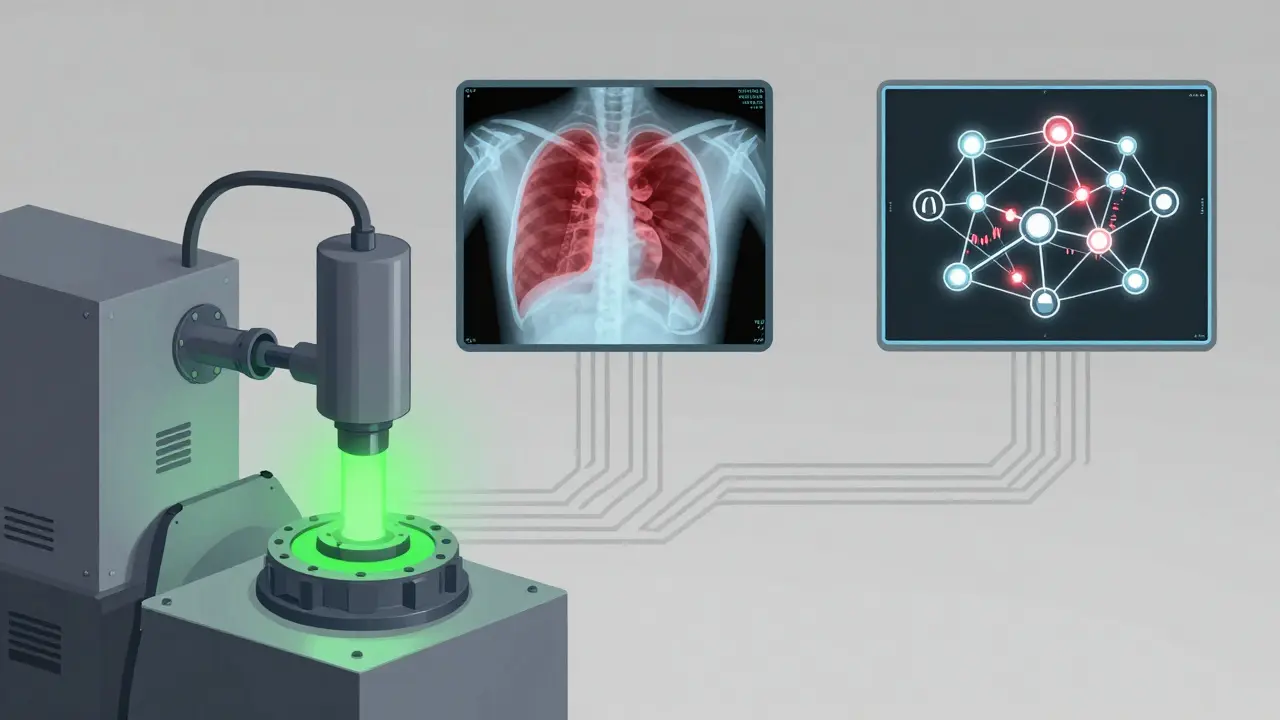

Healthcare represents another transformative domain. Research by Rajpurkar et al. (2022) showed that SSL pretraining on 1 million unlabeled X-rays improved pneumonia detection accuracy by 18.7% compared to supervised-only training. These results highlight SSL's particular strength in domains with abundant unlabeled data but limited labeled examples.

Challenges and Limitations

Despite its advantages, SSL presents significant challenges. Designing pretext tasks that transfer well to downstream applications remains difficult: studies show downstream performance varying by up to 22% depending on the pretext task chosen (He et al., 2022). Practitioner reports echo this. Among users on Reddit's r/MachineLearning, 78% reported improved model performance after implementing SSL pretraining, but 63% cited significant challenges in tuning hyperparameters and selecting appropriate pretext tasks.

Computational requirements pose another barrier. SSL pretraining for a medium-scale model (1B parameters) requires approximately $45,000 in cloud computing costs using AWS p4d.24xlarge instances at 2025 pricing. Enterprise implementation costs average $220,000 for initial deployment, though ROI typically materializes within 11.3 months through reduced labeling expenses and improved model performance, according to McKinsey's 2024 analysis.

Critical perspectives also exist regarding SSL's limitations. NYU professor Gary Marcus argues in "Rebooting AI" (2019, co-authored with Ernest Davis) that SSL alone cannot provide the causal reasoning needed for true understanding, with SSL-based systems still making fundamental reasoning errors at rates of 15-30% on complex tasks. Additionally, SSL models inherit and potentially amplify biases present in unlabeled data at rates 18-25% higher than carefully curated supervised datasets, according to the AI Now Institute's 2025 report.

Current Developments and Future Trajectories

The field continues evolving toward more efficient and versatile implementations. Google's 2025 release of PaLM-E 2 incorporates multimodal SSL pretraining across text, images, and sensor data, achieving state-of-the-art performance with 40% less compute than previous approaches. Meta's 2024 Llama 3 introduced adaptive masking that dynamically adjusts masking ratios based on data complexity, improving fine-tuning efficiency by 23%.

Researchers at Stanford demonstrated "sparse SSL" approaches in 2025 that reduce pretraining compute requirements by 65% while maintaining 95% of performance. Industry analysts predict SSL will become ubiquitous in generative AI development, with IDC forecasting that by 2027, 99% of enterprise generative AI systems will incorporate SSL pretraining as standard practice.

Regulatory considerations are emerging alongside technical advances. The EU AI Act's 2025 update requires documentation of SSL training data sources and potential biases, though no major compliance issues have been reported to date. As SSL becomes more prevalent, addressing interpretability and bias mitigation will remain critical challenges requiring complementary techniques in responsible AI development.

What is self-supervised learning?

Self-supervised learning is a machine learning paradigm where models learn from unlabeled data by creating their own pretext tasks or 'puzzles' to generate pseudo-labels. Instead of relying on human annotations, the model generates supervision signals from the data itself, such as predicting masked words in text or reconstructing missing image regions.

How does SSL differ from supervised learning?

Supervised learning needs a label for every training example, while SSL leverages the estimated 98% of global data that is unlabeled. SSL pretraining on unlabeled data, followed by fine-tuning on a small labeled dataset (10-20% of what supervised learning needs), achieves comparable or superior performance in many domains.

What are the main types of SSL approaches?

Key approaches include causal language modeling (predicting next tokens), masked language modeling (predicting masked tokens), contrastive learning (distinguishing similar vs. dissimilar samples), and image inpainting (reconstructing missing regions). Each approach suits different data modalities and tasks.

Why is SSL important for generative AI?

SSL serves as the pretraining engine for virtually all state-of-the-art generative models including GPT-4, DALL-E 3, and Stable Diffusion 3. It enables these models to learn rich representations from vast unlabeled datasets, which form the foundation for generating high-quality creative outputs across text, images, and other modalities.

What are the computational costs of SSL pretraining?

SSL pretraining requires substantial computational resources. GPT-3 consumed 3,640 petaflop/s-days on NVIDIA V100 GPUs, while Llama 2 required approximately 2.3 million GPU hours. Medium-scale model pretraining costs around $45,000 in cloud computing, with enterprise implementations averaging $220,000 for initial deployment.

What challenges do practitioners face with SSL?

Common challenges include designing effective pretext tasks that transfer well to downstream applications, tuning hyperparameters, selecting appropriate augmentation strategies, preventing representation collapse, and managing extreme computational requirements. A 2024 Kaggle survey found practitioners need 3-6 months of dedicated study to master SSL techniques.
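
Representation collapse, where the encoder maps every input to nearly the same vector, can be spotted with a simple diagnostic: check how much each embedding dimension varies across a batch. The sketch below is one hypothetical way to do that. The function names, the tolerance, and the sample embeddings are assumptions, and it detects only complete collapse, not the subtler case where just some dimensions go flat.

```python
import statistics

def per_dimension_std(embeddings):
    """Population standard deviation of each embedding dimension across the batch."""
    return [statistics.pstdev(column) for column in zip(*embeddings)]

def looks_collapsed(embeddings, tolerance=1e-3):
    """True when every dimension is (nearly) constant, i.e. all inputs map to one point."""
    return max(per_dimension_std(embeddings)) < tolerance

healthy = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
collapsed = [[0.3, 0.7], [0.3, 0.7], [0.3, 0.7]]
print(looks_collapsed(healthy), looks_collapsed(collapsed))  # False True
```

Logging a statistic like this during pretraining gives an early warning before compute is spent on a model that has stopped learning useful features.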

Is SSL adoption growing in enterprises?

Yes, significantly. Gartner's 2025 survey found 92% of enterprises incorporate SSL into their AI development pipelines. The global SSL market reached $4.7 billion in 2024 and is projected to hit $28.3 billion by 2028. Adoption is particularly strong in technology (95%), healthcare (87%), and financial services (82%).

What are the limitations of SSL?

SSL struggles with causal reasoning, making fundamental reasoning errors at rates of 15-30% on complex tasks. It also inherits and amplifies biases from unlabeled data at rates 18-25% higher than curated supervised datasets. Performance varies up to 22% depending on pretext task selection, and highly specialized domains with scarce data still require domain-specific labeled examples.