Imagine trying to fit a massive library into a small backpack. That is essentially the problem engineers face when deploying Large Language Models is AI systems like LLaMA or GPT that possess billions of parameters, requiring immense computational power and memory to operate. A model like LLaMA-30B needs about 60GB of GPU memory just for inference. For most people and businesses, that's a deal-breaker. To fix this, we use model compression, and specifically, pruning. Pruning isn't just about deleting random parts of a model; it's a strategic operation to remove unnecessary weights without breaking the AI's brain. If done right, you get a model that's faster and smaller but still just as smart.

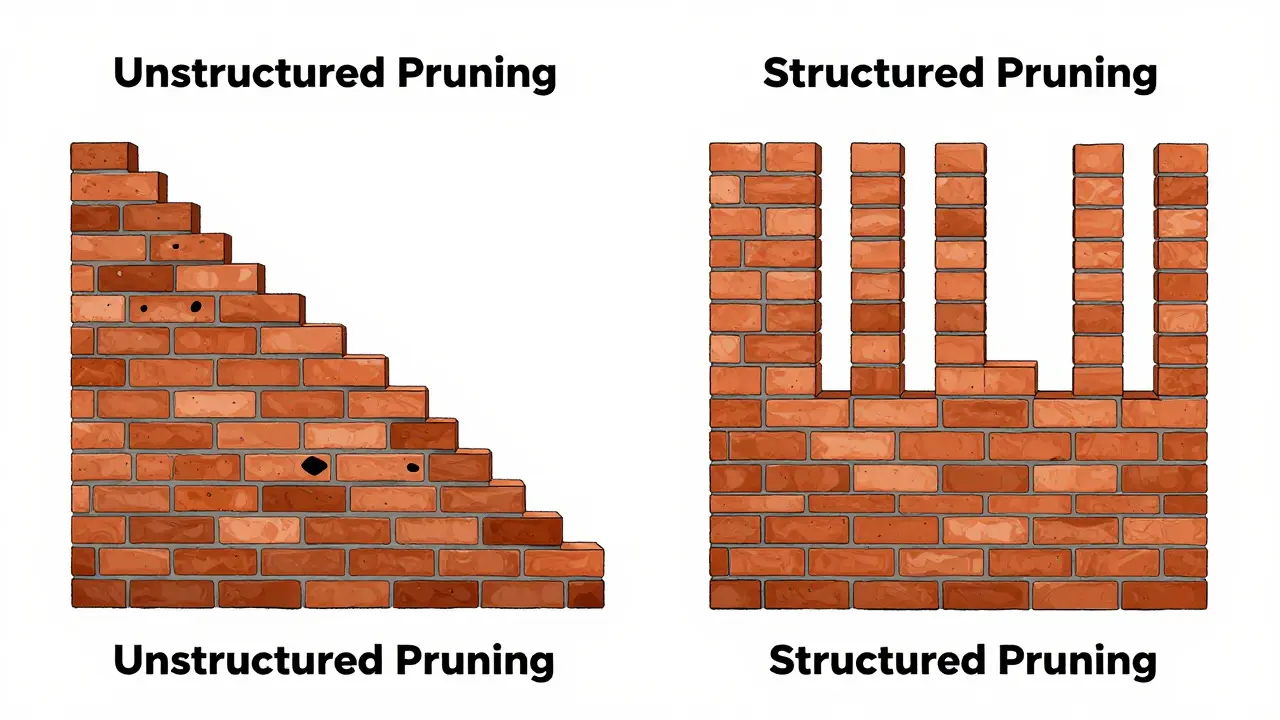

The Core Difference: Structured vs Unstructured Pruning

When you hear the word "pruning," you might think of trimming a hedge. In AI, it's similar. You're cutting away the "dead wood"-the weights in the neural network that don't actually contribute to the final answer. However, how you cut determines whether your model can actually run on standard hardware.

Unstructured Pruning is a technique that removes individual weights based on their importance, regardless of where they are located in the model's architecture. Think of this like removing random individual bricks from a wall. The wall still stands, but it's full of tiny holes. This is great for maximizing compression-you can remove a huge percentage of weights while keeping the model accurate. The catch? Most GPUs aren't designed to handle these "holey" matrices. Unless you have specialized hardware like NVIDIA's Ampere architecture with sparse tensor cores, you won't see a real speed boost.

Structured Pruning is a method that eliminates entire components, such as whole neurons, channels, or layers, maintaining a regular network shape. Instead of removing random bricks, you're removing entire rows or columns of the wall. Because the resulting model is still a standard dense matrix, it runs fast on any GPU or mobile chip without needing special software. This makes it the go-to choice for anyone deploying AI on an iPhone or a Raspberry Pi.

| Feature | Unstructured Pruning | Structured Pruning |

|---|---|---|

| What is removed? | Individual weights (random) | Entire components (rows/columns) |

| Hardware Compatibility | Requires specialized sparse cores | Works on all standard hardware |

| Compression Ratio | High (can reach 50%+ sparsity) | Moderate (accuracy drops after 60%) |

| Inference Speedup | 1.3x - 1.8x (on specialized gear) | 1.5x - 2.1x (on general gear) |

| Best Use Case | Cloud environments / Research | Mobile & Edge deployment |

Unstructured Pruning in Action: The Wanda Method

For a long time, people pruned weights based on "magnitude"-if a weight was very small, they assumed it was useless and deleted it. But that's a bit too simple for LLMs. Enter Wanda is a pruning method introduced in 2024 that determines weight importance by multiplying the weight magnitude with the input activations.

Why does this matter? Because some weights might be small, but they are multiplied by huge activation values, making them critical to the model's output. By looking at the "weights × activations" product, Wanda can prune LLaMA-7B to 40% sparsity without any retraining. In a real-world benchmark on WikiText-2, it maintained a perplexity of 7.8 compared to the dense model's 7.6. The best part for developers is that it doesn't require a full training cycle, which would cost thousands of dollars in compute. You just need a tiny calibration dataset of about 128 sequences to get it running.

However, Wanda isn't a magic bullet. If you're implementing it, be prepared for a memory spike. Because it needs to cache activations, you might need an extra 25-35GB of VRAM for a LLaMA-7B model. If you're working with models larger than 13B parameters, some users have reported instability, so keep a close eye on your logs.

Structured Pruning: From Low-Rank Factorization to FASP

If you need your model to run on a mobile device, you need structured pruning. Early research, such as the work by Wang et al. in 2020, used a technique called low-rank factorization. They basically broke down large weight matrices into smaller, simpler pieces and removed the least important ones. This allowed a BERT-base model to run on a Raspberry Pi 4 with only a tiny drop in accuracy.

More recently, we've seen the rise of FASP is a fast and accurate structured pruning framework that interlinks sequential layers to prevent performance loss. Instead of pruning each layer in isolation, FASP looks at the relationship between layers. If it removes a column in one layer, it removes the corresponding row in the next. This "interlinking" prevents the accuracy collapse that usually happens when you cut too deep into a model.

The speed of FASP is genuinely impressive. Pruning a LLaMA-30B model takes about 20 minutes on a single NVIDIA RTX 4090. Compare that to older structured methods that could take hours or days. For someone building a real-time app, this means they can iterate on their model efficiency in minutes rather than weeks.

The Practical Trade-offs: Which One Should You Use?

Choosing between these two usually comes down to where your model will live. If you're deploying to a cloud environment with the latest NVIDIA H100s, unstructured pruning might be your best bet because you can push the compression further without destroying the model's logic.

But if you're targeting a mobile app or an IoT device, structured pruning is the only real choice. Apple's Core ML 7.0 now has native support for these structured patterns, which can lead to 2.1x faster inference on devices like the iPhone 13. The risk here is the "accuracy plateau." Most experts, including those at Stanford HAI, note that once you hit about 60-70% sparsity with structured pruning, the model starts to suffer from catastrophic forgetting-it basically forgets how to speak coherently.

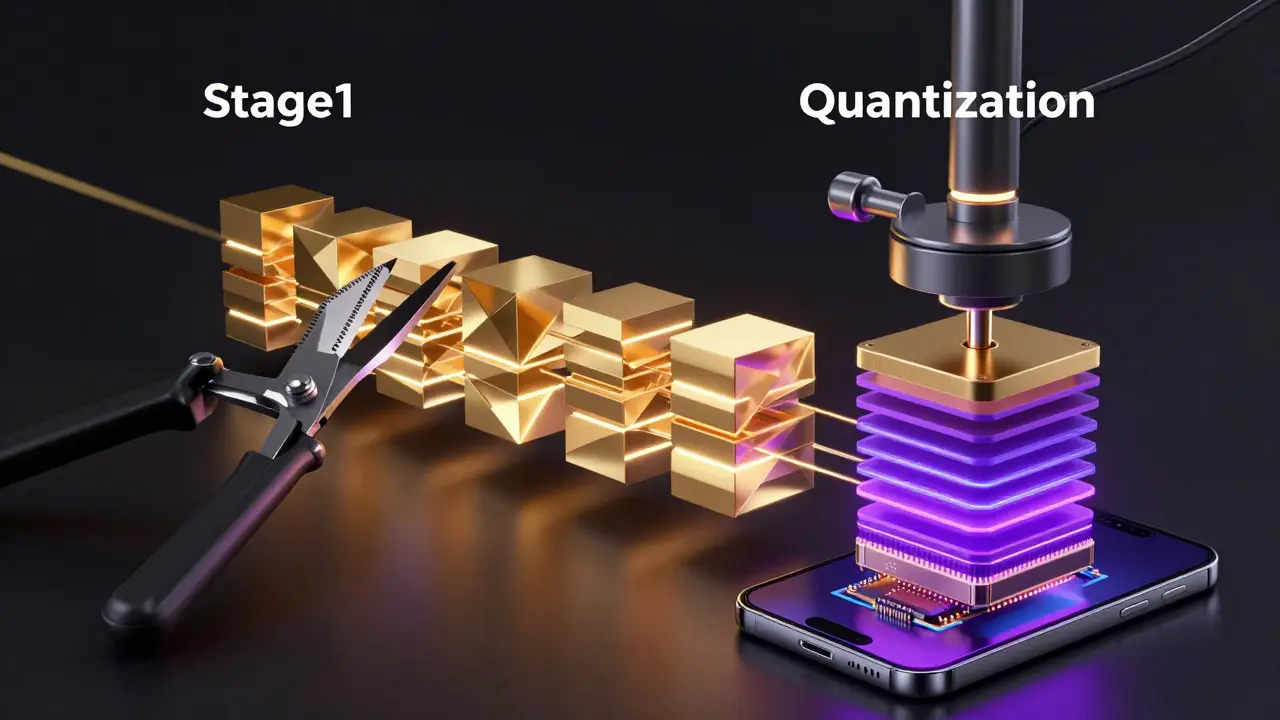

For the best results, don't rely on pruning alone. The current industry trend is a hybrid approach. Combining pruning with Quantization is the process of reducing the precision of weights, such as moving from 16-bit floating point to 4-bit integers. By pruning the unnecessary weights and then quantizing the remaining ones, tools like NVIDIA TensorRT 9.2 can achieve a 4.7x reduction in model size, which is often the only way to get a high-quality LLM onto a consumer device.

Implementation Guide: Getting Started

If you're a developer looking to implement these, here is the reality of the workload:

- Wanda Implementation: Expect about 2 hours of work if you know PyTorch. You'll need a small calibration set and plenty of GPU memory for caching.

- FASP/Structured Implementation: This is a steeper climb, likely 8-10 hours. You'll need to deal with layer dimension mismatches and potentially adjust the pruning threshold ratio to stop the model from crashing.

A pro tip for anyone working with non-English languages: be careful. Pruning often hits low-resource languages harder. Research shows that while English performance might only drop by 1.8%, a language like Swahili could see a 5.2% drop at the same compression level. Always test your pruned model on a diverse dataset, not just English text.

Does pruning require retraining the model?

Not always. Modern methods like Wanda are designed specifically for "pruning without retraining," using a small calibration dataset to identify important weights. However, some structured pruning methods do benefit from a short period of fine-tuning to recover lost accuracy.

Can I prune a model by 90% and keep it useful?

Generally, no. Most LLMs hit a performance wall between 60% and 70% sparsity. Beyond that, you usually see a "catastrophic collapse" where the model's perplexity spikes and it starts generating gibberish. To get higher compression, you'll need to combine pruning with distillation or quantization.

Why is structured pruning faster on mobile phones?

Standard mobile processors are designed to perform dense matrix multiplications. Structured pruning removes entire blocks of data, leaving a smaller but still dense matrix. Unstructured pruning leaves a "sparse" matrix with holes, which standard hardware can't optimize, often making the "compressed" model run slower than the original.

What is the 'weights x activations' criterion in Wanda?

It's a way of measuring a weight's importance. Instead of just looking at the weight's size (magnitude), Wanda multiplies that weight by the typical value of the data flowing through it (activation). If both are large, the weight is critical. If the activation is near zero, the weight is useless regardless of its size.

Will pruning work for Mixture-of-Experts (MoE) models?

Yes, though it is more complex. Recent updates to the Wanda framework (v1.2) specifically added support for MoE architectures, reporting about 15% faster pruning times for these types of models.

Next Steps for Optimization

If you've just pruned your model and it's still too slow, don't keep cutting weights. Instead, try these three things:

- Apply 4-bit Quantization: Use tools like bitsandbytes or AutoGPTQ to shrink the remaining weights further.

- Knowledge Distillation: Use your original large model as a "teacher" to train your pruned "student" model, which helps recover the accuracy lost during pruning.

- Check your Runtime: If using unstructured pruning, ensure you are using a runtime like TensorRT that actually supports sparse tensors; otherwise, you're wasting your time.

Dave Sumner Smith

April 24, 2026 AT 23:56typical corporate garbage. you think the 4090 is actually the bottleneck here. the real bottleneck is that they're hiding the actual weights from the public to keep the power centralized. structured pruning is just a way to make the leash shorter while pretending the AI is still 'smart' enough for the masses

Elmer Burgos

April 26, 2026 AT 10:17thats a really cool breakdown of the two methods. i think the hybrid approach with quantization sounds like a great way to go for most people

Paul Timms

April 26, 2026 AT 22:31The distinction between sparse and dense matrices is clearly explained. It is a helpful guide.

Cait Sporleder

April 27, 2026 AT 03:59I find myself utterly captivated by the sheer intricacy of the Wanda method, specifically the kaleidoscopic way it intertwines weight magnitude with input activations to preserve the cognitive essence of the model! It is truly a marvelous feat of engineering that allows one to sculpt these digital behemoths into more lithe, elegant forms without necessitating the exorbitant expenditure of a full training cycle, which would otherwise be an absolutely ruinous financial endeavor for any modest researcher attempting to innovate in this burgeoning field.

Jeroen Post

April 27, 2026 AT 18:24existence is just a series of pruned weights anyway we are all just approximations of a higher dimensional truth and these devs are just playing god with matrices

Antwan Holder

April 28, 2026 AT 16:48The tragedy of it all! We strip these models of their essence, cutting away the 'dead wood' until they are mere shadows of their former selves! It is a digital autopsy performed in the name of efficiency, a cold, calculating murder of nuance to fit a model into a pocket-sized rectangle! My soul weeps for the lost parameters!

Angelina Jefary

April 28, 2026 AT 22:57Imagine thinking these frameworks are actually 'open' when they're clearly just tools for the deep state to deploy surveillance bots in our pockets. Also, your use of a hyphen in the table is technically inconsistent with the formatting of the rest of the document. Pathetic.

Kasey Drymalla

April 30, 2026 AT 14:53this is all a lie they just want us using 4bit so the ai can brainwash us faster without lagging