Generating text one token at a time is slow. Even the most powerful large language models (LLMs) hit a wall when they have to wait for each token to be computed before moving to the next. This isn’t a minor delay: it makes chatbots feel sluggish, real-time translation lag, and AI assistants slow to respond. Speculative decoding changes that. It doesn’t make the model smarter. It makes it faster by letting a smaller, simpler model guess the next few tokens while the big model double-checks them in parallel. And when it works, you can speed up inference by up to 3x.

How Speculative Decoding Works

Imagine you’re writing a sentence. You pause after each word, waiting for your brain to come up with the next one. Now imagine someone else whispers the next three words to you. You don’t have to think them up; you just check whether they make sense. If they do, you keep them. If not, you ignore them and write the next word yourself. That’s speculative decoding.

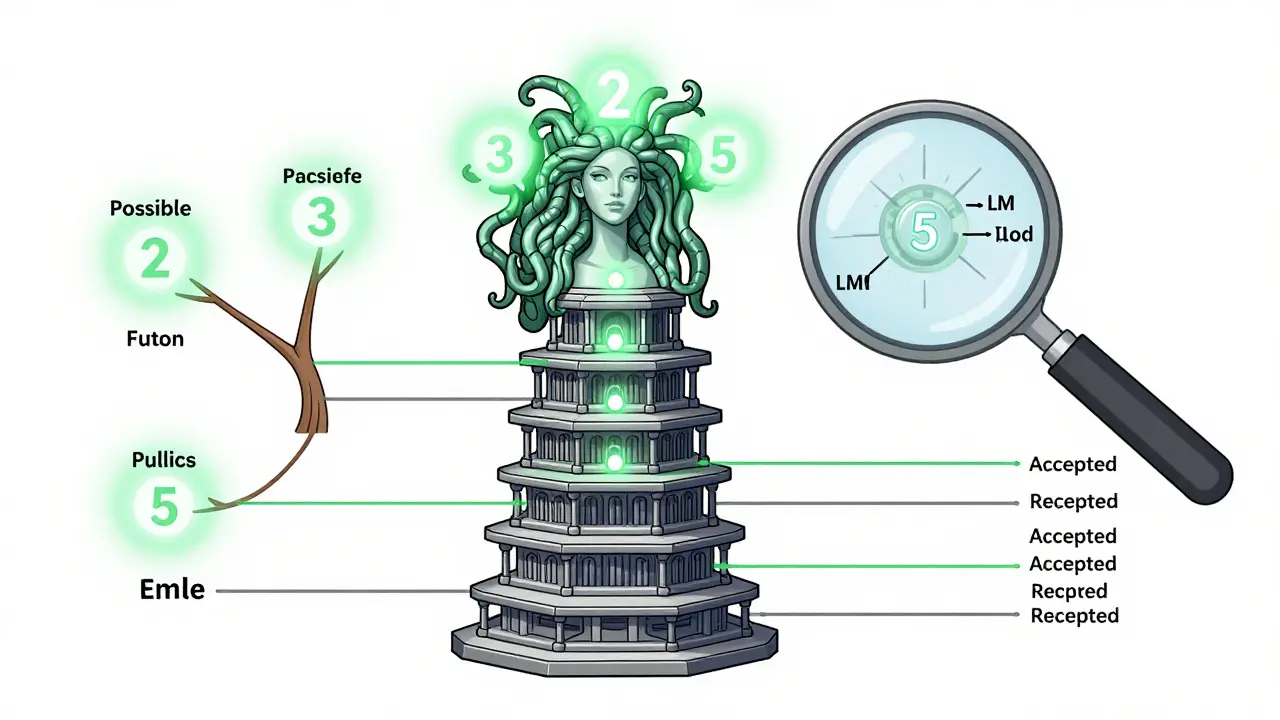

The system uses two models: a small draft model and a large target model. The draft model runs fast because it’s tiny, maybe 1/10th the size of the main model. It takes the current context and proposes the next k tokens, generating them one at a time but cheaply. Then, instead of generating each token one by one, the target model scores all k draft tokens in a single forward pass. Draft tokens are accepted in order for as long as the target model agrees with them. At the first disagreement, that token and everything after it are thrown out, and the target model supplies its own token for that position from the same pass. Then the cycle repeats.

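This isn’t magic. It’s math. The target model computes its own probability for each draft token and compares it with the probability the draft model assigned. In the standard formulation, a draft token is accepted with probability min(1, p/q), where p is the target’s probability for that token and q is the draft’s. The result? The expensive part, the big model’s forward pass, runs once over several positions in parallel instead of once per token. And since many next tokens are predictable (think: "the", "and", "but"), the draft model gets a lot right.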
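The acceptance test can be sketched in a few lines. This is a minimal sketch, assuming the standard speculative sampling rule: accept a drafted token with probability min(1, p/q), and on rejection resample from the leftover probability mass max(0, p - q). The function name `accept_or_resample` and the toy dictionary distributions are illustrative, not taken from any particular library.

```python
import random


def accept_or_resample(token, p_target, q_draft, rng):
    """Decide the fate of one drafted token. Returns (accepted, token).

    p_target and q_draft map each vocabulary token to its probability under
    the target and draft models at this position (toy dicts, illustrative).
    """
    p = p_target.get(token, 0.0)
    q = q_draft[token]  # the draft model sampled this token, so q > 0
    # Accept with probability min(1, p / q).
    if rng.random() < min(1.0, p / q):
        return True, token
    # Rejected: resample from the residual distribution max(0, p - q).
    # rng.choices normalizes the weights, and this correction is what keeps
    # the final output distributed exactly like the target model.
    residual = {t: max(0.0, p_target[t] - q_draft.get(t, 0.0)) for t in p_target}
    tokens = list(residual)
    weights = [residual[t] for t in tokens]
    return False, rng.choices(tokens, weights=weights, k=1)[0]
```

A token the target likes at least as much as the draft does (p >= q) is always kept. Tokens the draft over-proposes are rejected some of the time, and the resampling step makes up the difference.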

Why Draft Models Matter More Than You Think

Not all draft models are created equal. A generic draft model trained on web text might work okay for general chat, but it’ll fail badly if you’re asking it to generate legal documents, medical summaries, or financial reports. Why? Because it doesn’t know your domain.

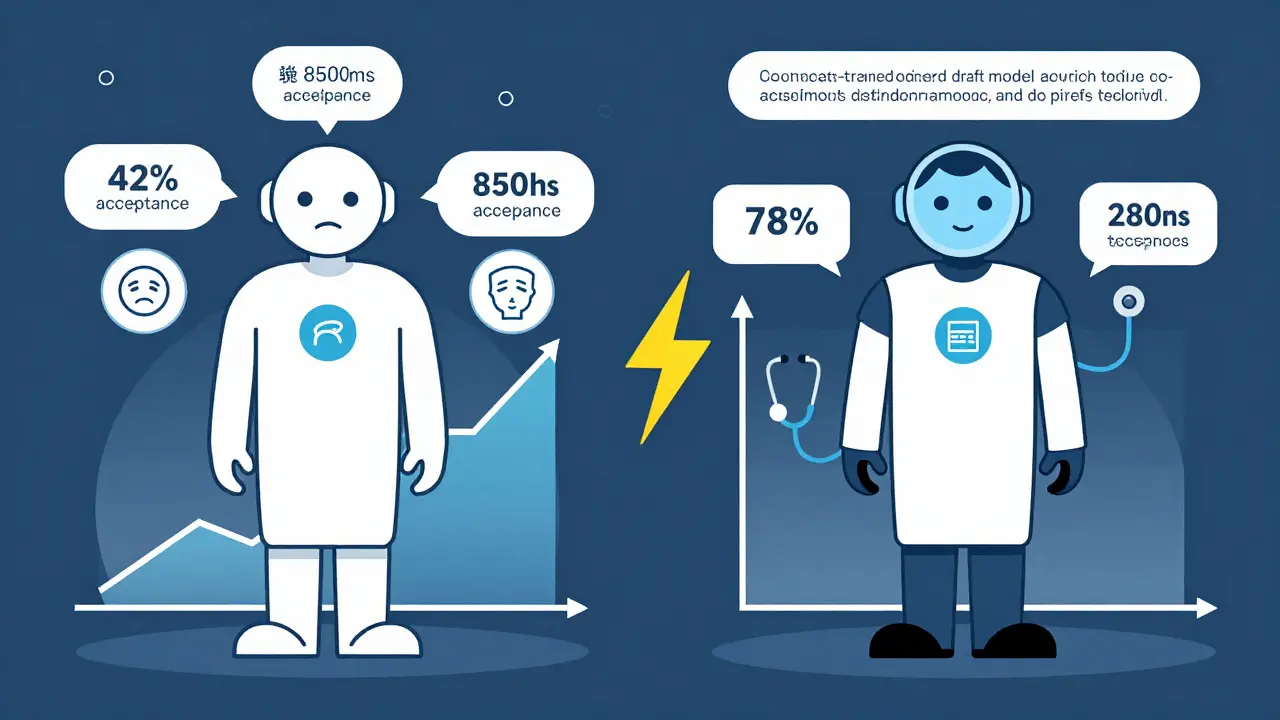

Research from BentoML showed that theoretical speedups of 3x only happen when the draft model’s predictions match the target model’s probability distribution almost perfectly. In practice, off-the-shelf draft models often achieve acceptance rates below 50%. That means half the time, the system throws away work and falls back to slow, sequential generation. The result? You get maybe a 1.5x speedup instead of 3x.
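To see why acceptance rate dominates the outcome, here is a rough estimate in code. It is a sketch built on a commonly cited approximation from the speculative decoding literature, which assumes every draft token is accepted independently with the same probability `alpha`. The `cost_ratio` value (draft pass time divided by target pass time) is a made-up number for illustration; measure your own.

```python
def expected_tokens_per_cycle(alpha, k):
    """Expected tokens emitted per target-model verification pass.

    alpha: probability that a single draft token is accepted (assumed
           independent and identical across positions, a simplification).
    k:     number of tokens drafted per cycle.
    """
    if alpha >= 1.0:
        return float(k + 1)
    return (1.0 - alpha ** (k + 1)) / (1.0 - alpha)


def estimated_speedup(alpha, k, cost_ratio):
    """Estimated wall-clock speedup over plain token-by-token decoding.

    cost_ratio: time of one draft forward pass divided by the time of one
                target forward pass (hypothetical value for illustration).
    """
    # Each cycle costs k draft passes plus one target pass.
    return expected_tokens_per_cycle(alpha, k) / (k * cost_ratio + 1.0)


for alpha in (0.5, 0.8):
    print(alpha, round(estimated_speedup(alpha, k=4, cost_ratio=0.05), 2))
```

Under these assumed numbers the estimate comes out at roughly 1.6x for a 50% acceptance rate and 2.8x for 80%, which is the same gap between disappointing and near-ideal results described above.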

The fix? Train your own draft model. Not on random internet data. On your company’s documents, customer support logs, internal reports: whatever your LLM actually needs to understand. When you do that, acceptance rates jump. One team at a healthcare startup trained a 700M-parameter draft model on clinical notes. Their acceptance rate went from 42% to 78%. Their latency dropped from 850ms to 280ms per response. No change to the target model. Just a better draft partner.

Breaking the Mold: EAGLE and Medusa

Early speculative decoding relied on a separate, tiny model. But that created new problems: training it, syncing it, keeping it aligned. Two newer approaches cut that middleman out.

EAGLE doesn’t rely on a separate standalone model. It attaches a small, trained draft head to the target model and reuses the target’s own embeddings and output layer. Instead of predicting tokens directly, the head predicts the hidden features that come just before the final output. This gives the drafting step access to richer context: information the big model has already computed. The result? Better predictions, higher acceptance rates, and faster speedups without maintaining a second full model.

Medusa goes even further. It adds multiple prediction heads directly onto the last hidden layer of the base LLM. No separate model. No second model to train and keep in sync. Just a few small extra heads, trained on top of the base model, that each predict a token further ahead: the next 2, 3, or even 5 positions in one go. It builds a tree of possible continuations and lets the model verify them all at once. In benchmarks, Medusa generated 2.8x more tokens per second than standard speculative decoding. And because the heads read the same hidden states as the base model, their guesses stay closely aligned with it. It’s like the model is whispering to itself.
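The tree idea can be illustrated with a toy example. The sketch below is a deliberate simplification: it expands each head’s top guesses into every possible path and checks them against a greedy stand-in for the base model, whereas a real implementation verifies the whole tree in one forward pass with a special attention mask. The names `build_candidates`, `best_candidate`, and `target_next` are hypothetical.

```python
from itertools import product


def build_candidates(head_topk):
    """Expand per-head guesses into full candidate continuations.

    head_topk[i] lists the top guesses of head i for the token i + 1
    positions ahead. Every path through the tree is a Cartesian product.
    """
    return [list(path) for path in product(*head_topk)]


def best_candidate(candidates, target_next, context):
    """Return the longest candidate prefix confirmed by the base model.

    target_next maps a token list to the base model's greedy next token
    (a hypothetical stand-in for a real forward pass).
    """
    best = []
    for cand in candidates:
        accepted = []
        for tok in cand:
            # Stop at the first token the base model would not have chosen.
            if target_next(context + accepted) != tok:
                break
            accepted.append(tok)
        if len(accepted) > len(best):
            best = accepted
    return best
```

With two heads offering two guesses each, the tree has four paths. The more paths there are, the better the odds that one of them matches what the base model wanted to say anyway.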

When You Don’t Need a Draft Model

Not every use case needs this complexity. If you’re doing greedy decoding, always picking the single most likely next token, speculative decoding still works, and the acceptance test gets simpler. You don’t need fancy sampling tricks. Just let the draft model predict a few tokens, then keep each one that exactly matches the target model’s top choice, stopping at the first mismatch. It’s simpler, and still gives you a 1.5x-2x speedup.
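The greedy case is small enough to write out in full. The following is a minimal sketch using toy next-token functions in place of real models; `target_next` and `draft_next` are hypothetical callables that return the single most likely next token for a given token list.

```python
def speculative_greedy(target_next, draft_next, prompt, k, max_new_tokens):
    """Greedy speculative decoding with toy next-token functions."""
    tokens = list(prompt)
    limit = len(prompt) + max_new_tokens
    while len(tokens) < limit:
        # 1. Draft k tokens autoregressively with the cheap model.
        draft = []
        for _ in range(k):
            draft.append(draft_next(tokens + draft))
        # 2. Verify. A real system scores all k + 1 positions in ONE target
        #    forward pass; this list comprehension only simulates the result.
        target_choices = [target_next(tokens + draft[:i]) for i in range(k + 1)]
        # 3. Keep the longest prefix where draft and target agree.
        n_ok = 0
        while n_ok < k and draft[n_ok] == target_choices[n_ok]:
            n_ok += 1
        # 4. Append the accepted tokens plus one token from the target:
        #    its correction at the mismatch, or a bonus token if all matched.
        tokens.extend(draft[:n_ok])
        tokens.append(target_choices[n_ok])
    return tokens[:limit]
```

Every appended token is either one the target model confirmed or one it chose itself, so the output matches plain greedy decoding with the target model token for token. Only the number of target passes changes.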

And if your current setup already feels fast? Maybe you don’t need to change anything. Speculative decoding isn’t a universal upgrade. It’s a tool. If your model responds in under 400ms for your users, and they’re happy, adding complexity might not be worth it. But if you’re struggling with latency spikes, or users are dropping off because the AI takes too long, then this is where you start.

Implementation Tips

Here’s what actually works in production:

- Start with what you have. Try speculative decoding with a pre-trained draft model like Phi-3 or TinyLlama. Measure your acceptance rate. If it’s above 60%, you’re already ahead.

- Track your latency, not just tokens. Speedups look great on paper, but what matters is real-world response time. Use tools like Prometheus or Langfuse to monitor end-to-end latency under load.

- Train on your data. If you’re in finance, law, or healthcare, train your draft model on your own documents. Use 10,000-50,000 samples. You don’t need millions.

- Consider Medusa if you can. If your target model is based on Llama or Mistral, Medusa’s integrated heads are easier to deploy than managing two models. It’s becoming the new standard.

- Test with real prompts. Don’t benchmark with generic queries. Use your actual user inputs. A draft model that works well on "What’s the weather?" might fail on "Explain this insurance clause."
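The two numbers these tips keep returning to, acceptance rate and end-to-end latency, are easy to compute once you log them. Here is a minimal sketch, assuming you record a (drafted, accepted) token count for each verification pass and a latency in milliseconds for each request. The function names and the log format are assumptions for illustration, not the output of any specific serving framework.

```python
import statistics


def acceptance_rate(cycles):
    """Fraction of drafted tokens that the target model accepted.

    cycles: list of (drafted, accepted) token counts, one per verify pass.
    """
    drafted = sum(d for d, _ in cycles)
    accepted = sum(a for _, a in cycles)
    return accepted / drafted if drafted else 0.0


def latency_summary(latencies_ms):
    """Median and 95th percentile of end-to-end latencies in milliseconds.

    Needs at least two samples, as required by statistics.quantiles.
    """
    cuts = statistics.quantiles(latencies_ms, n=20)  # 19 cut points, 5% apart
    return {"p50": statistics.median(latencies_ms), "p95": cuts[18]}
```

Compute both on real user prompts, before and after enabling speculative decoding. The tail percentile matters as much as the median, since latency spikes are what users notice.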

What You Gain (and What You Don’t)

Speculative decoding doesn’t change what the model says. It only changes how fast it says it. The output distribution stays identical to standard decoding. That’s huge. You’re not sacrificing accuracy for speed. You’re not introducing hallucinations. You’re not altering the model’s behavior. You’re just making it quicker.

This matters for compliance, safety, and trust. In regulated industries, you can’t afford to tweak the model’s output. But you can absolutely speed up how fast it delivers the same answer.

And the best part? It works on any autoregressive transformer. Llama, Mistral, Qwen, Phi, you name it. As long as it generates tokens one by one, speculative decoding can help.

Future Directions

Research is moving fast. LayerSkip is testing whether skipping layers in the target model can generate draft tokens without extra parameters. MTP (multi-token prediction) is exploring how to train models to predict several future tokens at once. And companies are starting to combine speculative decoding with quantization and pruning, stacking optimizations on top of each other.

But the core idea stays the same: if you can guess well, you don’t have to calculate everything. The future of LLM inference isn’t bigger models. It’s smarter shortcuts.

What’s the difference between speculative decoding and quantization?

Quantization reduces model size by using lower-precision numbers (like 8-bit instead of 32-bit), which makes inference faster and uses less memory. Speculative decoding doesn’t change the model’s weights. It uses a smaller model to predict ahead and lets the big model verify. You can use both together: quantize the target model and pair it with a speculative draft model for even better speed.

Can speculative decoding be used with any LLM?

Yes, as long as it’s an autoregressive transformer model that generates tokens one at a time. This includes Llama, Mistral, Phi, Qwen, and others. It doesn’t work with non-autoregressive models like those that generate all tokens at once (e.g., some summarization models). But for chat, coding, and text generation, it’s widely compatible.

Do I need to retrain my main LLM to use speculative decoding?

No. The target model stays unchanged. You only need to train or select a smaller draft model. With architectures like Medusa, you don’t even need a separate model: you add prediction heads directly to your existing LLM and train only those heads. No retraining of the base model is required.

Is speculative decoding better than using a smaller LLM instead?

It depends. Using a smaller model alone means sacrificing quality. Speculative decoding keeps the quality of the big model while speeding it up. If you need high accuracy and speed, speculative decoding wins. If you can accept lower quality for lower cost, a smaller model might be enough. But for production systems where quality matters, speculative decoding is the better trade-off.

How much faster will my LLM be with speculative decoding?

In ideal conditions (high acceptance rate, good draft model, and parallel verification) you can see 2x to 3x speedups in tokens per second. Real-world results vary: 1.5x-2.5x is common. If your draft model is poorly matched, you might only get 1.2x. The key is tuning the draft model to your data. The best results come from domain-specific training, not off-the-shelf models.

Jawaharlal Thota

March 24, 2026 AT 20:30

Man, I've been tinkering with speculative decoding on our internal chatbot for weeks now, and let me tell you, it’s been a game changer. We were stuck at 900ms per response with a 7B model, and users were complaining about lag during peak hours. We tried Phi-3 as a draft model out of the box, got a 48% acceptance rate, barely any improvement. So we trained a 1.3B draft model on our customer support logs, just 12,000 transcripts from the last six months. No fancy preprocessing, just cleaned, lowercased, tokenized. Acceptance rate jumped to 71%. Latency dropped to 260ms. No change to the target model. Just better context. The paper talks about domain alignment, but nobody says how easy it is to actually do it. You don’t need a PhD. You just need data. And patience. And maybe a weekend with a GPU cluster. Worth it.

Also, if you’re using Medusa, don’t just assume it’ll work out of the box. We tried it on Mistral-7B and got weird divergence in the first three tokens. Had to tweak the temperature on the prediction heads. It’s not plug-and-play. But once tuned? Pure magic. The model whispers to itself like it’s got a secret. I love that.

One thing I wish more people would mention: speculative decoding doesn’t help if your bottleneck is I/O. If your API gateway is slow or your database is blocking, no amount of token speculation will fix that. Measure end-to-end latency. Use Prometheus. Watch your queue depth. You’d be surprised how often the problem isn’t the model at all.

And for anyone thinking about quantization + speculative decoding together: yes, do it. We went 8-bit on the target and paired it with our tuned draft model. Got 3.1x speedup. Memory usage halved. Cost savings? 40% on our cloud bill. This isn’t just tech porn. It’s a real infrastructure win.

Don’t listen to the hype guys who say “just use TinyLlama.” If your domain is legal contracts or medical notes, generic models will fail hard. I’ve seen draft models reject 80% of tokens on insurance claim summaries. It’s not that they’re dumb. It’s that they’re not trained on your world. Train on your data. It’s not hard. Just do it.

Lauren Saunders

March 26, 2026 AT 07:25

How quaint. Another 'revolutionary' optimization that only works when you're running a 2023-era Llama on a 4090 with a 10GB dataset. The real world doesn't operate on academic benchmarks. In production, latency spikes are caused by resource contention, not token-by-token generation. This 'speculative decoding' is just a glorified lookahead buffer with a fancy name. And don't even get me started on Medusa: adding linear layers to the final hidden state? That's not innovation, that's a hack that only works if your model architecture is already perfectly symmetric. Most real models have asymmetric attention heads, residual connections, or layer norm variants that make this approach unstable. The paper's 3x speedup? Probably measured on synthetic prompts with perfect cache alignment. Real users ask follow-ups. They type corrections. They interrupt. And then your entire speculative tree collapses. It's not a solution. It's a fragile illusion built on cherry-picked metrics.

sonny dirgantara

March 27, 2026 AT 20:49

Andrew Nashaat

March 29, 2026 AT 11:48

Let me just say this, with absolute clarity: if you’re still using off-the-shelf draft models like Phi-3 or TinyLlama without fine-tuning, you’re not optimizing. You’re gambling. And you’re losing. Every time. The fact that people are even considering this without domain-specific training is frankly irresponsible. You’re deploying AI in environments where accuracy isn’t optional (healthcare, legal, finance) and you’re letting a model trained on Reddit comments and Wikipedia summaries predict what comes next? That’s not innovation. That’s negligence. And don’t even get me started on people who think Medusa is ‘easier.’ It’s not. It’s a black box with more failure modes than a Windows 95 driver. You think you’re saving time by not managing two models? You’re just hiding complexity under a pretty interface. The real answer? Train a dedicated draft model. On YOUR data. With YOUR validation set. With YOUR compliance audits. And if you can’t do that? Then don’t deploy speculative decoding at all. Because the cost of a single hallucinated token in a medical summary? Not just a bug. It’s a lawsuit. A career. A life. This isn’t a tech demo. It’s a responsibility. And if you’re treating it like a weekend project? You shouldn’t be near a production server.

Gina Grub

March 29, 2026 AT 12:35

Nathan Jimerson

March 30, 2026 AT 11:49

This is one of those rare tech breakthroughs that actually feels meaningful. Not just incremental, not just flashy, but real, tangible improvement. I’ve seen teams waste months chasing bigger models, when the answer was always right in front of them: use what you have, but smarter. Training a draft model on your own data isn’t hard. It’s tedious. But it’s doable. And the payoff? A healthcare team cut response time from over 800ms to under 300ms. That’s not a number. That’s a patient who didn’t have to wait. That’s a doctor who didn’t lose focus. That’s a system that didn’t break under load. Speculative decoding isn’t about raw speed. It’s about reliability. It’s about trust. And when you get it right, it doesn’t just make things faster. It makes them better. Keep going. Keep tuning. Keep training on your data. The future isn’t bigger models. It’s smarter ones.