Tag: AI hallucinations

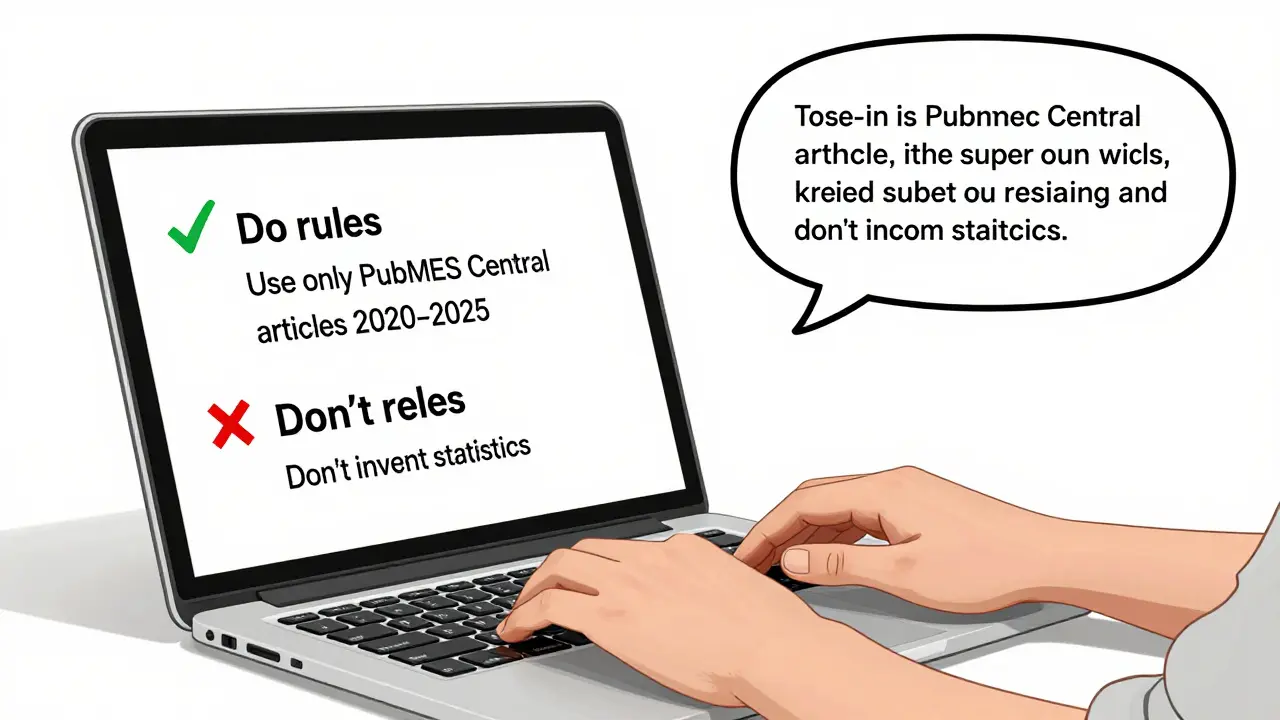

How to Prompt for Accuracy in Generative AI: Constraints, Quotes, and Extractive Answers

Learn how to use constraints, role prompts, and extractive techniques to reduce AI hallucinations and get accurate, reliable answers from generative AI tools. No fluff, just practical methods backed by real research.
