Tag: LLM reasoning

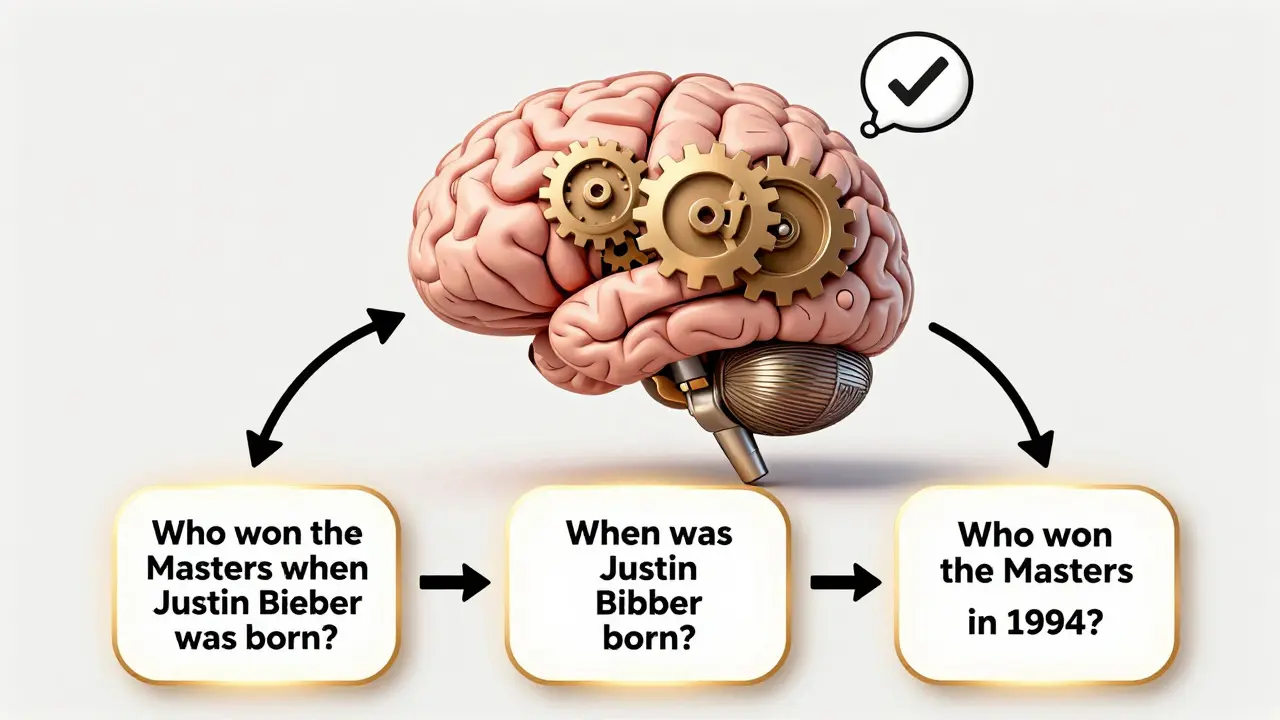

Self-Ask and Decomposition Prompts for Complex LLM Questions

Self-Ask and decomposition prompts improve LLM accuracy on complex questions by breaking them into clear, verifiable steps. They are used in legal, medical, and financial AI systems and can boost reasoning accuracy by more than 13%, but they come with higher costs and latency.
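The loop behind a Self-Ask prompt can be sketched in a few lines. This is a minimal, hypothetical illustration, not any specific library's API. The model is a scripted stub that stands in for a real LLM call, and the names `self_ask`, `scripted_model`, and `lookup` are illustrative only. The marker strings ("Follow up:", "Intermediate answer:", "So the final answer is:") mirror the commonly used Self-Ask prompt format. Each follow-up question is answered separately, and the answer is appended to the prompt before the model is called again.

```python
from typing import Callable, List, Tuple

# Hypothetical type: any function that maps a prompt string to model text.
Model = Callable[[str], str]

FOLLOW_UP = "Follow up:"
INTERMEDIATE = "Intermediate answer:"
FINAL = "So the final answer is:"


def self_ask(question: str, model: Model, answer_subquestion: Model,
             max_steps: int = 5) -> Tuple[str, List[Tuple[str, str]]]:
    """Self-Ask-style loop (sketch): the model either proposes a follow-up
    question or emits a final answer; each follow-up is answered separately
    and appended to the prompt so later steps can build on it."""
    prompt = f"Question: {question}\nAre follow up questions needed here: Yes.\n"
    steps: List[Tuple[str, str]] = []
    for _ in range(max_steps):
        output = model(prompt).strip()
        if output.startswith(FINAL):
            return output[len(FINAL):].strip(), steps
        if output.startswith(FOLLOW_UP):
            sub_q = output[len(FOLLOW_UP):].strip()
            sub_a = answer_subquestion(sub_q)  # e.g. a search tool or a second LLM call
            steps.append((sub_q, sub_a))
            prompt += f"{FOLLOW_UP} {sub_q}\n{INTERMEDIATE} {sub_a}\n"
            continue
        raise ValueError(f"Unexpected model output: {output!r}")
    raise RuntimeError("No final answer within max_steps")


# Hypothetical stub model: picks its next step from how many
# intermediate answers are already in the prompt.
def scripted_model(prompt: str) -> str:
    n = prompt.count(INTERMEDIATE)
    if n == 0:
        return "Follow up: Who directed Jaws?"
    if n == 1:
        return "Follow up: When was Steven Spielberg born?"
    return "So the final answer is: 1946"


# Hypothetical answer source for the sub-questions.
lookup = {
    "Who directed Jaws?": "Steven Spielberg",
    "When was Steven Spielberg born?": "1946",
}

answer, steps = self_ask("In what year was the director of Jaws born?",
                         scripted_model, lambda q: lookup[q])
print(answer)  # 1946
for sub_q, sub_a in steps:
    print(f"{sub_q} -> {sub_a}")
```

Every follow-up question adds at least one extra model or tool call, which is where the higher cost and latency of this style of prompting come from. Capping `max_steps` bounds that overhead.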
