Tag: LLM updates

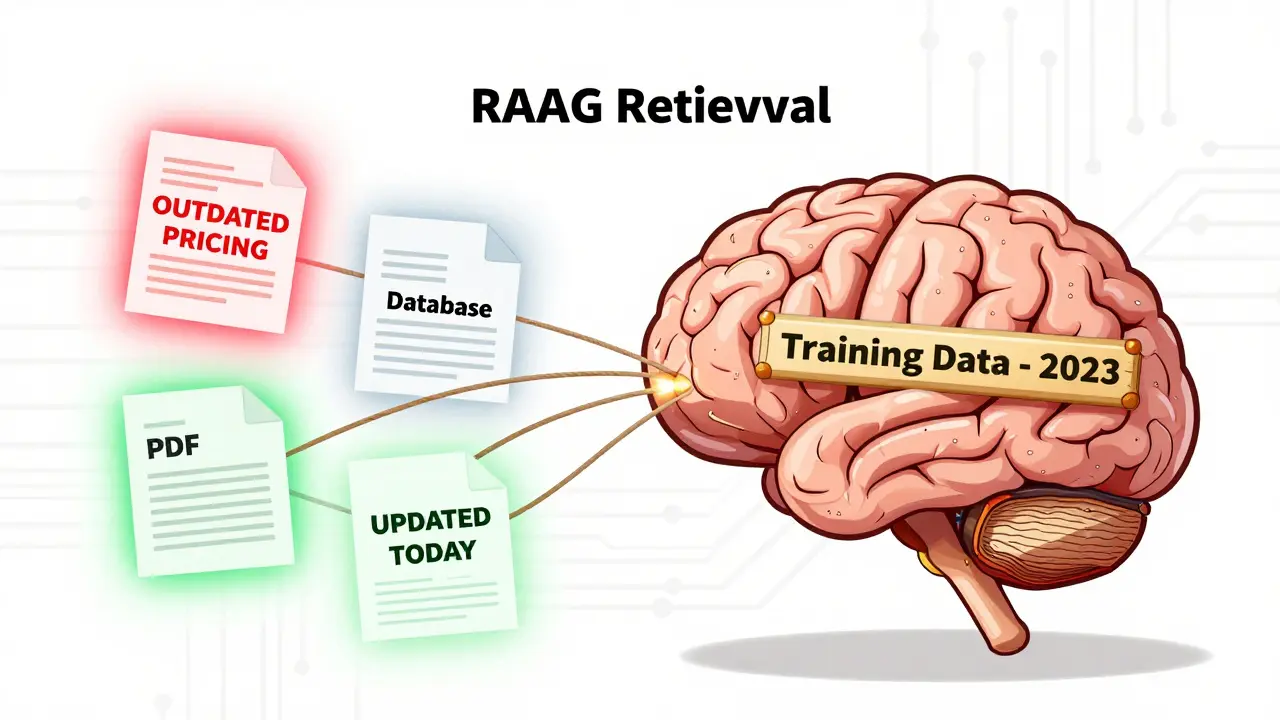

Document Freshness and Sync in RAG Systems: Keeping LLMs Up to Date

Keeping RAG systems accurate requires more than just an LLM; it demands real-time document sync. Learn how to prevent stale data from undermining your AI apps with practical strategies for freshness and synchronization.
