Imagine a machine that has read almost every public book, article, and line of code ever written. It doesn't just memorize the text; it picks up the hidden patterns of how humans communicate. That is the essence of a Large Language Model (LLM): a deep learning model trained on massive datasets to understand, generate, and manipulate human language. LLMs have moved from academic curiosities to the engines powering everything from your phone's autocomplete to complex coding assistants.

The Engine Under the Hood: Transformer Architecture

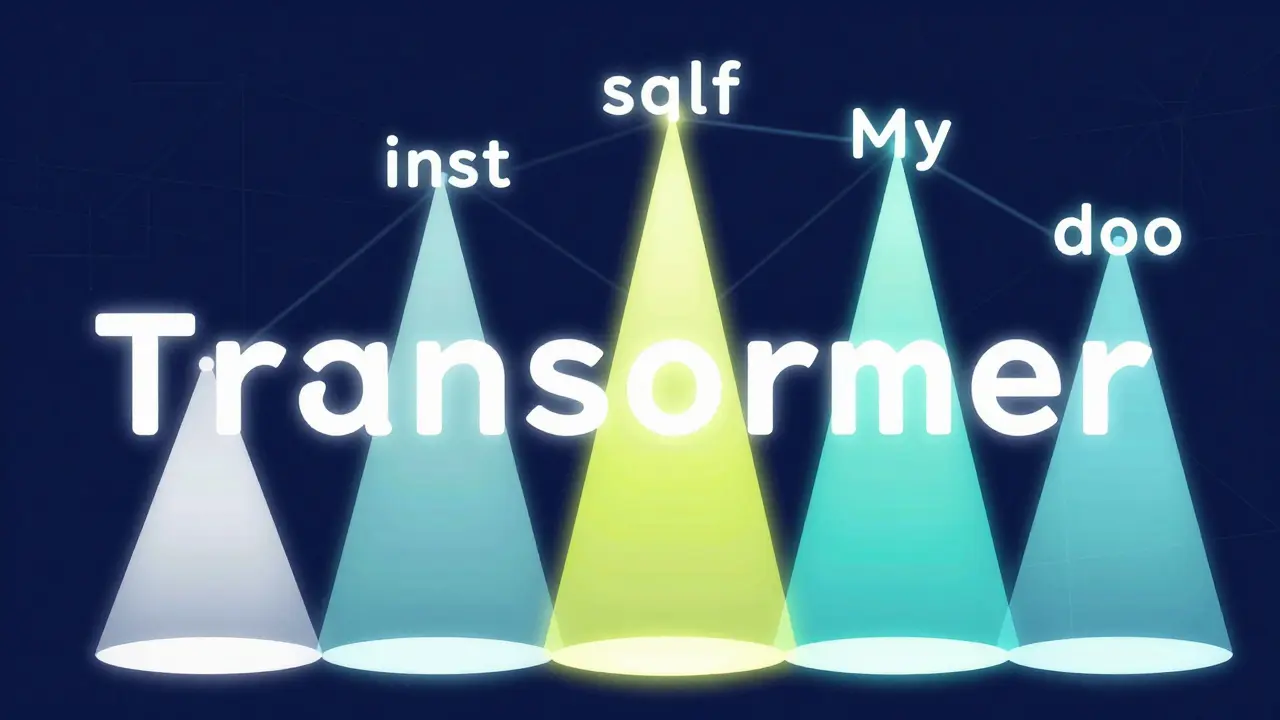

Before 2017, most language models processed text like a human reading a sentence word-by-word from left to right. This was slow, and the model often "forgot" the beginning of a long sentence by the time it reached the end. Everything changed with the Transformer architecture, a neural network design that processes entire sequences of data simultaneously rather than sequentially. Introduced by Google researchers in the 2017 paper "Attention Is All You Need," this design allows the model to look at every word in a sentence at once.

The real magic happens via the self-attention mechanism, a process that assigns different weights to different words in a sentence to determine which are most relevant to each other. Think of it as a spotlight. In the sentence "The cat that sat on the mat was black," the model needs to know what "black" describes. Although "mat" is closer, the attention mechanism can learn a strong mathematical link between "black" and "cat," allowing the model to capture the context regardless of how far apart the words are.
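To make the spotlight idea concrete, here is a minimal sketch of self-attention in plain Python. It is a simplification under stated assumptions: the three 2-dimensional vectors are made up for illustration, and the queries, keys, and values are the input vectors themselves, whereas a real Transformer first passes them through learned projection matrices.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))

def self_attention(vectors):
    """Simplified self-attention: inputs act as queries, keys, and values."""
    dim = len(vectors[0])
    outputs, all_weights = [], []
    for query in vectors:
        # Score the query against every token, scaled by sqrt(dim).
        scores = [dot(query, key) / math.sqrt(dim) for key in vectors]
        weights = softmax(scores)
        # Each output is a weighted mix of all the value vectors.
        mixed = [sum(w * v[i] for w, v in zip(weights, vectors)) for i in range(dim)]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights

# Hypothetical toy vectors: "black" is deliberately placed near "cat".
tokens = ["cat", "mat", "black"]
vectors = [[1.0, 0.2], [0.1, 1.0], [0.9, 0.1]]

outputs, weights = self_attention(vectors)
black_weights = dict(zip(tokens, weights[2]))
print(black_weights)
```

With these toy vectors, "black" puts more weight on "cat" than on "mat". In a trained model, that preference comes from learned parameters rather than hand-picked numbers.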

From Words to Numbers: Tokenization and Embeddings

Computers can't read letters; they only understand numbers. To bridge this gap, LLMs use tokenization, the process of breaking text down into smaller units called tokens, which can be whole words, characters, or sub-words. For example, a complex word like "unhappiness" might be split into ["un", "happi", "ness"], though the exact pieces depend on the tokenizer. This helps the model handle words it hasn't seen before by recognizing familiar pieces.
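Here is a rough sketch of sub-word splitting using a greedy longest-match rule. The vocabulary is a hypothetical toy set, and production tokenizers (such as BPE or WordPiece) learn their vocabularies from data and use more refined rules, so treat this as an illustration rather than how any specific model tokenizes. Note that the pieces are literal substrings of the word.

```python
def tokenize(word, vocab):
    """Greedy longest-match split of a word into known sub-word pieces."""
    tokens = []
    start = 0
    while start < len(word):
        # Try the longest candidate first, shrinking until a piece is known.
        for end in range(len(word), start, -1):
            piece = word[start:end]
            if piece in vocab:
                tokens.append(piece)
                start = end
                break
        else:
            # No known piece starts here: fall back to a single character.
            tokens.append(word[start])
            start += 1
    return tokens

# Hypothetical toy vocabulary, chosen only for this example.
VOCAB = {"un", "happi", "ness", "kind", "ly"}

pieces = tokenize("unhappiness", VOCAB)
unseen = tokenize("unkindness", VOCAB)
print(pieces)
print(unseen)
```

The second call shows the payoff: "unkindness" is not in the vocabulary as a whole word, yet it still breaks into familiar pieces.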

Once tokenized, these pieces are converted into embeddings: high-dimensional numeric vectors that represent the semantic meaning of a token in a mathematical space. If you mapped these vectors, words with similar meanings, like "dog" and "puppy," would sit very close to each other in this invisible space, while "dog" and "skyscraper" would be far apart. Modern models often use embeddings with 1,024 to 8,192 dimensions to capture fine shades of a word's meaning.
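One common way to measure "closeness" between embeddings is cosine similarity. The sketch below uses made-up 3-dimensional vectors purely for illustration; real embeddings are learned during training and have far more dimensions.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: near 1.0 means similar direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical toy embeddings, not taken from any real model.
EMBEDDINGS = {
    "dog": [0.9, 0.8, 0.1],
    "puppy": [0.85, 0.9, 0.15],
    "skyscraper": [0.1, 0.05, 0.95],
}

dog_puppy = cosine_similarity(EMBEDDINGS["dog"], EMBEDDINGS["puppy"])
dog_skyscraper = cosine_similarity(EMBEDDINGS["dog"], EMBEDDINGS["skyscraper"])
print(f"dog vs puppy:      {dog_puppy:.3f}")
print(f"dog vs skyscraper: {dog_skyscraper:.3f}")
```

The first score comes out much higher than the second, which is the numeric version of "dog" and "puppy" sitting close together.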

Scaling Up: Parameters and Model Sizes

You'll often hear about "billions of parameters." In simple terms, a parameter is a variable within the model's neural network that is adjusted during training to optimize the accuracy of its predictions. Think of parameters as the "knobs" the AI turns to fine-tune its understanding. The more knobs a model has, the more complex patterns it can recognize.

| Model Series | Estimated Parameters | Key Characteristic | Typical Use Case |
|---|---|---|---|
| GPT-3 | 175 Billion | Autoregressive | General Text Generation |
| PaLM 2 | 340 Billion | Multilingual focus | Reasoning and Coding |
| Llama 3 | Up to 400 Billion | Open-weights | Enterprise Customization |
| Gemini Ultra | Trillions (Est.) | Native Multimodality | Complex Problem Solving |
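To get a feel for where those numbers come from, the sketch below counts the parameters in a tiny fully connected network and estimates how much memory a model's weights need. The layer sizes are hypothetical, and the 2-bytes-per-parameter figure assumes 16-bit floating point storage; other precisions would change the result.

```python
def dense_layer_params(inputs, outputs):
    """One weight per input-output connection, plus one bias per output."""
    return inputs * outputs + outputs

def memory_gb(param_count, bytes_per_param=2):
    """Storage for the weights alone, assuming 16-bit (2-byte) parameters."""
    return param_count * bytes_per_param / 1e9

# Hypothetical tiny network: 512 inputs -> 2048 hidden units -> 512 outputs.
tiny_total = dense_layer_params(512, 2048) + dense_layer_params(2048, 512)

# The 175 billion figure for GPT-3 comes from the table above.
gpt3_weights_gb = memory_gb(175e9)

print(f"Tiny network parameters: {tiny_total:,}")
print(f"GPT-3 weights at 16-bit: {gpt3_weights_gb:.0f} GB")
```

Even this toy two-layer network has about two million knobs, and at 16-bit precision a 175-billion-parameter model needs roughly 350 GB just to hold its weights.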

Types of Models and How They Predict

Not all LLMs are built for the same job. Depending on how they are trained, they fall into a few main categories:

- Raw Language Models: These are the base versions (like GPT-2) that simply predict the next word in a sequence. If you type "The weather is," it might predict "sunny."

- Instruction-Tuned Models: These are trained to follow specific orders. Instead of just completing a sentence, they can "Summarize this article in three bullet points."

- Dialog-Tuned Models: These are optimized for back-and-forth conversation, making them feel like a chatty assistant rather than a text completer.

They also differ in how they predict. Autoregressive models (like the GPT series) predict the next token using only the tokens that came before it. Masked language models (like BERT) are more like a fill-in-the-blank test; they look at the words both before and after a gap to figure out what belongs there.
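The autoregressive loop can be sketched with a toy bigram model that counts which word tends to follow which, then generates one word at a time. This is a drastic simplification: the corpus is invented, and real LLMs condition on the whole preceding context with a neural network rather than on a single previous word.

```python
from collections import Counter, defaultdict

def train_bigrams(text):
    """Count how often each word is followed by each other word."""
    counts = defaultdict(Counter)
    words = text.lower().split()
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts

def generate(counts, start, max_tokens=5):
    """Autoregressive loop: repeatedly append the most likely next word."""
    output = [start]
    for _ in range(max_tokens):
        followers = counts.get(output[-1])
        if not followers:
            break  # nothing ever followed this word in the training text
        output.append(followers.most_common(1)[0][0])
    return output

# Hypothetical toy corpus, made up for this example.
CORPUS = "the weather is sunny . the weather is sunny . the weather is cold ."

model = train_bigrams(CORPUS)
sentence = generate(model, "the", max_tokens=3)
print(" ".join(sentence))
```

Because "sunny" follows "is" more often than "cold" does in the toy corpus, the model completes "the weather is" with "sunny".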

Real-World Capabilities and the "Hallucination" Problem

The sheer scale of these models allows them to do things their creators didn't explicitly program. They can write Python code, translate ancient Greek, and explain quantum physics to a five-year-old. This is possible because training on so much text teaches them general patterns of language and structure, not just a fixed set of hand-written rules.

However, they're not perfect. You've probably encountered "hallucinations." These happen because an LLM is essentially a statistical prediction machine. It doesn't have a database of facts; it has a map of probabilities. If the most likely sequence of words is a falsehood that sounds confident, the model will output it as truth. To fight this, developers use Retrieval-Augmented Generation (RAG), a technique that has the model look up factual information from a trusted external source before generating an answer.
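The retrieval half of RAG can be sketched in a few lines. The example below ranks passages by simple word overlap and pastes the best match into a prompt. Everything here is an assumption for illustration: the documents, the prompt wording, and the scoring rule, which stands in for the embedding-based search that real systems typically use. No model is actually called.

```python
def score(question, passage):
    """Count how many distinct question words also appear in the passage."""
    q_words = set(question.lower().split())
    p_words = set(passage.lower().split())
    return len(q_words & p_words)

def retrieve(question, passages, top_k=1):
    """Return the top_k passages with the highest overlap score."""
    ranked = sorted(passages, key=lambda p: score(question, p), reverse=True)
    return ranked[:top_k]

def build_prompt(question, passages):
    """Put the retrieved context in front of the question."""
    context = "\n".join(retrieve(question, passages))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"

# Hypothetical trusted source, made up for this example.
DOCS = [
    "The A100 is a GPU made by NVIDIA for AI workloads.",
    "Tokenization splits text into smaller units called tokens.",
    "Embeddings are numeric vectors that represent token meaning.",
]

question = "what does tokenization do to text"
prompt = build_prompt(question, DOCS)
print(prompt)
```

The prompt that reaches the model now contains the relevant passage, so the answer can be grounded in the source text instead of relying on probabilities alone.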

The Cost of Intelligence: Compute and Energy

Training these giants isn't a hobby; it's an industrial operation. To train a model with 100 billion parameters, a company might need 1,000 NVIDIA A100 GPUs (high-performance chips designed specifically for AI workloads and large-scale data processing) running for two months. This can cost between $10 million and $20 million in electricity and hardware alone.
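A quick back-of-the-envelope calculation shows what those figures imply. The sketch assumes "two months" means 60 days of continuous running; the per-hour rates it prints are just the article's cost range divided by the GPU-hours, not quoted prices from any vendor.

```python
GPUS = 1_000
DAYS = 60  # assumption: "two months" taken as 60 days of continuous use
HOURS_PER_DAY = 24

gpu_hours = GPUS * DAYS * HOURS_PER_DAY

def implied_rate(total_cost_usd, hours):
    """All-in cost per GPU-hour implied by a total budget."""
    return total_cost_usd / hours

low_rate = implied_rate(10_000_000, gpu_hours)
high_rate = implied_rate(20_000_000, gpu_hours)

print(f"Total GPU-hours: {gpu_hours:,}")
print(f"Implied cost per GPU-hour: ${low_rate:.2f} to ${high_rate:.2f}")
```

That works out to 1.44 million GPU-hours, so the $10 million to $20 million range corresponds to roughly $7 to $14 per GPU-hour once hardware and electricity are bundled together.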

Because of this, we're seeing a shift toward Small Language Models (SLMs): compact AI models with 1-10 billion parameters, optimized for specific tasks to reduce cost and latency. These smaller versions can often do 80% of the work of a giant model but run on a laptop instead of a massive server farm.

What is the difference between an LLM and a traditional chatbot?

Traditional chatbots follow a decision tree (if user says X, answer Y). They are rigid and break easily. LLMs use probabilistic reasoning to generate original responses based on the context of the entire conversation, allowing them to handle nuance and complex requests they've never seen before.

What is a context window?

The context window is the amount of text the model can "keep in mind" at one time, measured in tokens. A model with a 128k token window can comfortably hold a 100-page document and refer back to its details. Once the conversation exceeds that limit, the model starts "forgetting" the earliest parts of the chat.
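One simple way an application might handle an overflowing conversation is to drop the oldest messages until the rest fits. The sketch below does that with a crude word count standing in for a real tokenizer; the function names and the 10-token limit are hypothetical, and actual chat products may instead summarize or use other strategies.

```python
def count_tokens(text):
    """Crude stand-in for a real tokenizer: one token per whitespace-separated word."""
    return len(text.split())

def fit_to_window(messages, max_tokens):
    """Keep the most recent messages whose combined size fits the window."""
    kept = []
    used = 0
    for message in reversed(messages):  # walk from newest to oldest
        cost = count_tokens(message)
        if used + cost > max_tokens:
            break  # this message and everything older is dropped
        kept.append(message)
        used += cost
    return list(reversed(kept))  # restore chronological order

chat = [
    "my name is Ada",           # 4 tokens
    "I like hiking and chess",  # 5 tokens
    "what should I do today",   # 5 tokens
]

visible = fit_to_window(chat, max_tokens=10)
print(visible)
```

With a 10-token window, only the last two messages survive, so the model would no longer "know" the user's name.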

Can LLMs actually think or reason?

Not in the human sense. They perform "pattern matching" at an incredibly high level. However, techniques like Chain-of-Thought prompting force them to break problems into steps, which mimics reasoning and significantly improves their accuracy in math and logic.

Why do LLMs sometimes give different answers to the same question?

This is largely due to random sampling, which is controlled by a setting called "temperature." A low temperature makes the model more predictable and consistent (though not necessarily more accurate), while a high temperature lets it pick less likely words and be more creative, which is why you get different variations of a poem or a story each time you ask.
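Temperature is commonly applied by dividing the model's raw scores (logits) by the temperature before converting them to probabilities with softmax. The sketch below shows the effect on three made-up scores; the logit values are hypothetical.

```python
import math

def softmax_with_temperature(logits, temperature):
    """Convert raw scores to probabilities, sharpened or flattened by temperature."""
    scaled = [value / temperature for value in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical scores for three candidate next tokens.
LOGITS = [2.0, 1.0, 0.5]

cold = softmax_with_temperature(LOGITS, 0.2)  # low temperature
hot = softmax_with_temperature(LOGITS, 2.0)   # high temperature

print("T=0.2:", [round(p, 3) for p in cold])
print("T=2.0:", [round(p, 3) for p in hot])
```

At the low temperature, almost all the probability lands on the top-scoring token, so the output barely varies. At the high temperature, the probabilities are much closer together, so sampling picks the alternatives far more often.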

Is the Transformer architecture the only way to build an LLM?

While it's the current gold standard, researchers are exploring hybrid models. Some are combining neural networks with symbolic AI (which uses hard logic rules) to stop hallucinations and improve mathematical precision.

John Fox

April 6, 2026 AT 18:57

cool breakdown of the basics

Jim Sonntag

April 7, 2026 AT 20:36

oh sure because spending 20 million on electricity to make a bot that confidently lies to me is exactly how we save the planet lol

Samar Omar

April 8, 2026 AT 10:06

While the overview is adequate for the masses, one cannot help but feel that the discourse surrounding the Transformer architecture remains dreadfully superficial, as it fails to adequately encapsulate the transcendental shift from recurrent neural networks to the parallelized processing of attention mechanisms, which fundamentally alters the ontological nature of machine linguistics in a way that only those deeply immersed in the mathematical abyss of high-dimensional vector spaces can truly appreciate, yet here we are reducing it to a "spotlight" metaphor for the sake of accessibility.

chioma okwara

April 10, 2026 AT 09:36

Actually you forgot to mention that RAG isnt just for facts but also helps with the context window limitations by fetching only relevant chunks of data so the model doesnt get confused by too much noise in the prompt which is basic stuff really

amber hopman

April 12, 2026 AT 07:20

I've been seeing more people talk about those Small Language Models lately. It seems like the trend is moving toward efficiency rather than just adding more parameters. If a 7B model can do most of what a 175B model does through better data curation, the cost of entry for developers drops significantly.

Anuj Kumar

April 13, 2026 AT 20:20

These things are just fancy autocomplete. The "reasoning" is a lie. They are just stealing data from us to build a digital brain that will eventually replace us while the big tech companies hide the real energy costs from the public. Trust me, it's all a play.

Tasha Hernandez

April 13, 2026 AT 22:38

Imagine thinking a math equation can "understand" human emotion. It's just a glorified mirror reflecting our own biases back at us in a polished, corporate-approved font. Truly a masterpiece of digital delusion.

Deepak Sungra

April 14, 2026 AT 01:54

I honestly don't even care about the math part but the hallucination thing is just wild. I asked one of these for a recipe last week and it tried to convince me that adding salt to coffee is a "traditional" way to brew it in some fake village. Like, why is it so confident in its own lies? It's honestly kind of funny how it just makes stuff up with a straight face. I spent an hour arguing with a bot and I'm still not sure who won. The level of drama this AI creates in my life is unmatched. It's basically a digital chaos agent.

Christina Morgan

April 14, 2026 AT 15:48

It is really fascinating to see how the field is evolving! For anyone curious, looking into the specific differences between encoder-only and decoder-only models provides a lot of clarity on why some models are better at summarizing while others excel at creative writing. It's a great time to be learning about this technology.