Tag: prompt length

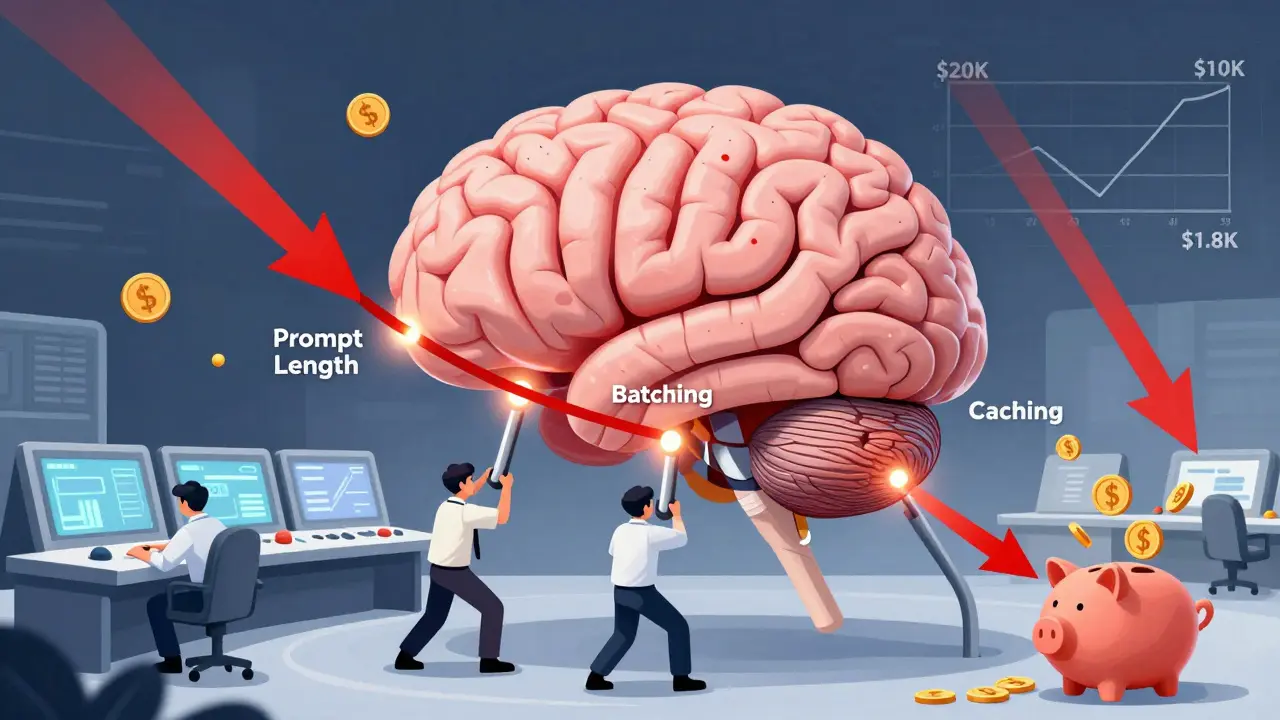

Optimization Levers for LLM Costs: Prompt Length, Batching, and Caching

Learn how prompt length, batching, and caching can cut LLM costs by up to 80% without sacrificing quality. Real-world examples from 2025 show how companies reduced their AI bills by focusing on usage patterns, not just hardware.
