Imagine asking an image generator to create a picture of a "CEO." What do you see? If the result is overwhelmingly a white man in a suit, you are looking at more than just a bad prompt. You are seeing dataset bias embedded in multimodal generative AI systems.

This isn't a glitch; it's a feature of how these models learn. They mirror the internet they were trained on, and the internet is full of historical inequalities. When we move from text-only models to Multimodal Generative AI, which combines text, images, audio, and video, the problem gets trickier. The bias doesn't just stay in one place; it jumps between modalities, creating a feedback loop that can amplify stereotypes faster than we can fix them.

The Hidden Sources of Bias in Training Data

To understand why this happens, we have to look at where the data comes from. Most large models are trained on massive scrapes of the web-books, forums, social media posts, and billions of image-text pairs. This data is not neutral. It reflects who has had access to the internet and who has felt comfortable sharing their lives online.

Socioeconomic status plays a huge role here. Populations with high-speed internet access and digital literacy contribute disproportionately to the training corpora. This means that voices, languages, and cultural perspectives from underrepresented groups are often missing or fragmented. When a model sees millions of images of doctors labeled "doctor" but only a handful of nurses, it learns a statistical association that feels like truth to the algorithm, even if it contradicts reality.

The sheer scale required for modern AI creates another pressure point. To get enough data to train a model that can handle any task, developers often relax selection controls. They ingest everything. This "more is better" approach leads to poorer quality input data, including toxic content, extremist views, and deep-seated societal biases. The model architecture itself, particularly attention mechanisms, tends to prioritize frequently occurring patterns. If a stereotype appears often enough, the model optimizes for it, treating minority or outlier data as noise rather than signal.

Three Faces of Representational Bias

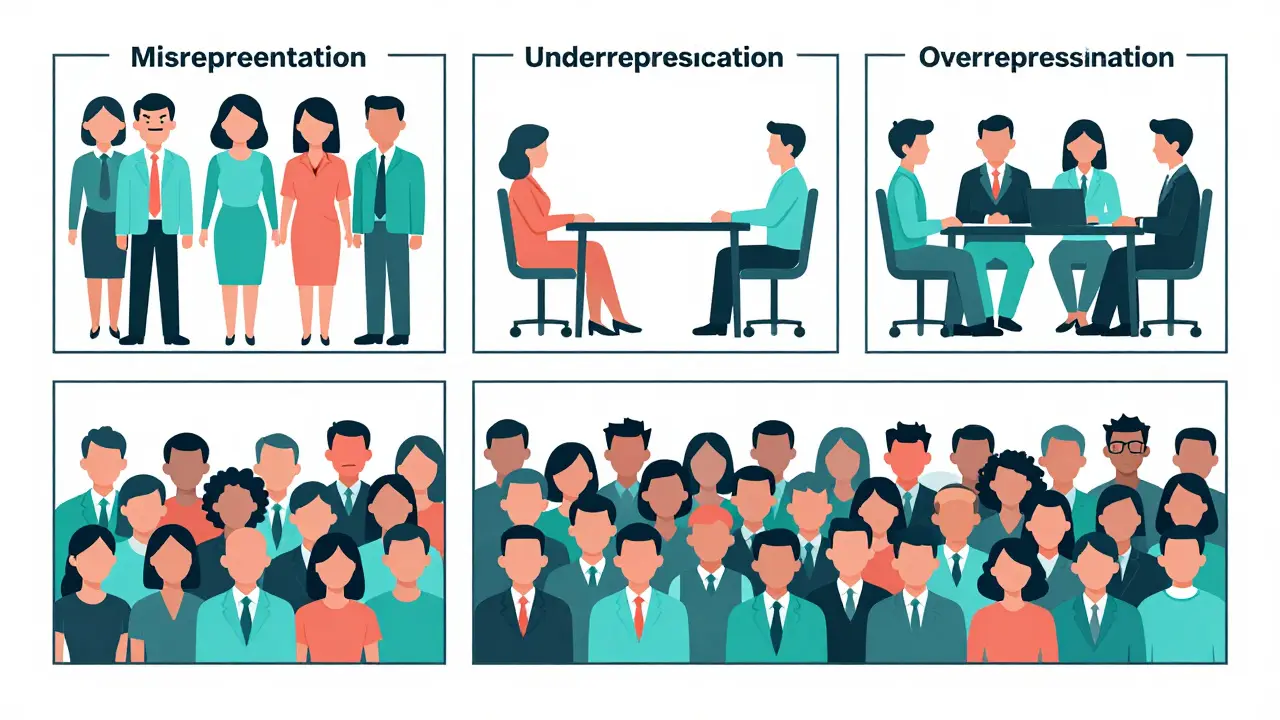

Bias in multimodal systems isn't just one thing. Researchers have identified three distinct ways it manifests, each with its own dangers.

- Misrepresentation: This occurs when minorities are included in the dataset but depicted harmfully or through narrow stereotypes. For example, if every time the model generates an image of a "criminal," it defaults to a specific demographic, it is misrepresenting that group by linking them exclusively to negative traits.

- Underrepresentation: Here, certain groups are simply absent or appear too infrequently to be recognized as part of a category. A classic example is women being largely absent from images associated with high-performing occupations like "engineer" or "executive." The model hasn't learned that women exist in these roles because the data didn't show it enough.

- Overrepresentation: This is the opposite problem. Dominant perspectives become the default setting. Anglocentric viewpoints, for instance, are often overrepresented in global datasets. Conversely, negative representations of minorities can be overrepresented in specific contexts, reinforcing harmful narratives.

We've seen concrete examples of this in popular models like Stable Diffusion. Studies have shown that these models tend to underrepresent women in high-status job images while simultaneously overrepresenting darker-skinned individuals in images related to low-wage work or criminality. These aren't random errors; they are systematic reflections of the biased data they consumed.

Fairness vs. Bias: Defining the Problem

Before we can fix bias, we need to agree on what it looks like. In the world of AI ethics, fairness and bias are related but distinct concepts. Fairness usually refers to equal probability across groups. A fair system might generate male and female faces with equal likelihood when prompted for "person."

Bias, on the other hand, is a non-random systematic error. It results in differences in accuracy or representation compared to the ground truth of the real world. Recent research has moved beyond simple definitions to categorize bias into three measurable tiers:

- Preuse Bias: Assessments conducted before the model is deployed, focusing on the training data and initial model weights.

- Intrinsic Bias: Measurements taken directly from the model's outputs during operation. This looks at what the model actually produces when prompted.

- Extrinsic Bias: Evaluations of the downstream effects. How does this biased output impact users, communities, or decision-making processes after deployment?

This three-tier framework helps us understand that bias isn't just a technical metric; it's a lifecycle issue that affects real people long after the code is written.

Detecting Bias Across Modalities

Finding bias in a multimodal model is harder than finding it in a text classifier. You can't just count words. You need multi-metric evaluation approaches that combine quantitative stats with qualitative context.

One common method is using distributional metrics. You prompt the model with a neutral term like "doctor" and measure the frequency of different demographic groups in the generated images. If 90% of the results are men, you have a distributional imbalance. Another approach involves embedding-based similarity. By converting images and text into vector space embeddings, researchers can analyze semantic similarity. This allows them to detect subtle biases, such as whether the model associates "woman" more closely with "home" than "office" in its internal representation.

However, numbers alone don't tell the whole story. Qualitative evaluations are crucial for understanding the societal implications of these patterns. A model might technically achieve "equal representation" but still produce stereotypical imagery that reinforces harmful norms. Combining quantitative distribution checks with human-reviewed qualitative assessments provides the most robust view of a model's fairness.

Mitigation Strategies: From Curation to Synthetic Data

Fixing dataset bias requires action at multiple levels. The first line of defense is curated and filtered datasets. This involves removing toxic content, extremist material, and overly dominant representations from training corpora. While necessary, filtering alone isn't enough because it often removes valuable data alongside the bad.

A more proactive approach is data resampling. Techniques like oversampling involve generating synthetic data for underrepresented subpopulations. The Synthetic Minority Over-sampling TEchnique (SMOTE) is a well-known example. It creates new, synthetic samples by interpolating between existing minority class examples and their nearest neighbors. This helps balance the dataset without discarding majority class data.

Another powerful strategy is synthetic counterfactual data generation. This involves creating new training examples that present alternative realities to stereotypical associations. For instance, if the data links "nurse" primarily with women, counterfactual generation would create balanced examples linking "nurse" with men and women equally. This augments the training data with non-biased examples, teaching the model to break old associations.

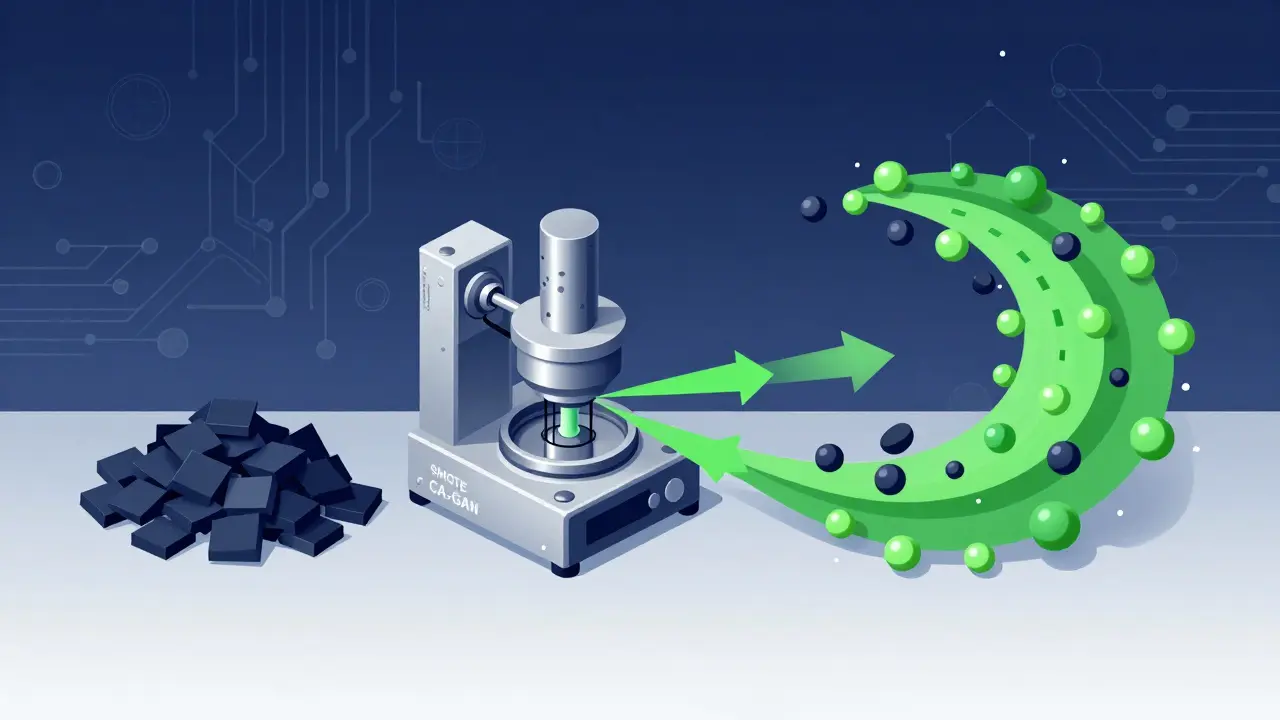

Advanced Architectures: CA-GANs and Beyond

When standard methods fall short, advanced architectures come into play. Traditional Generative Adversarial Networks (GANs) struggle with highly imbalanced datasets. They often suffer from "mode collapse," where the generator gets stuck producing only a few variations of the majority class, ignoring the minority entirely.

Conditional GANs improve on this by using labels in both the generator and discriminator, allowing the model to focus on generating specific minority-class samples. But the real breakthrough comes with newer architectures like CA-GAN (Conditional Attention GAN). CA-GAN specifically addresses the challenge of generating high-dimensional, time-series synthetic data while avoiding mode collapse.

CA-GAN uses stacked Bidirectional LSTMs (Long Short-Term Memory networks) to capture complex patterns more effectively than vanilla GANs. By increasing the layers in both the generator and discriminator and adjusting learning rates, CA-GAN can generate high-quality, authentic samples that preserve the global structure of the original data. Research shows that synthetic data generated via CA-GAN significantly improves model fairness for underrepresented groups, such as Black patients and female patients in medical imaging datasets, without sacrificing diagnostic accuracy.

The Research Gap: LMMs vs. LLMs

Despite these advances, there is a glaring gap in the industry. Large Language Models (LLMs) have received massive amounts of scrutiny regarding fairness and bias. In contrast, Large Multimodal Models (LMMs) have lagged behind. A comprehensive survey revealed that LMMs have received substantially less research attention regarding fairness compared to their text-only counterparts.

This is a critical vulnerability. Multimodal systems combine text, vision, and audio. If each modality carries its own bias, the intersection can amplify those biases exponentially. For example, a text description might be neutral, but the accompanying image could reinforce a stereotype, creating a stronger, more persuasive false narrative. As multimodal AI becomes central to applications ranging from healthcare diagnostics to creative content generation, closing this research gap is essential.

What is multimodal generative AI?

Multimodal generative AI refers to artificial intelligence systems that can process and generate content across multiple types of data, such as text, images, audio, and video. Unlike single-modality models, these systems understand relationships between different data types, allowing them to perform tasks like generating an image from a text description or describing an image in words.

How does dataset bias affect multimodal AI?

Dataset bias affects multimodal AI by causing the model to learn and reproduce historical inequalities, stereotypes, and uneven representations found in training data. This can lead to underrepresentation of certain groups, misrepresentation through harmful stereotypes, or overrepresentation of dominant perspectives, impacting the fairness and reliability of the AI's outputs.

What is SMOTE in the context of AI bias?

SMOTE (Synthetic Minority Over-sampling Technique) is a data resampling method used to address class imbalance. It generates synthetic examples for underrepresented classes by interpolating between existing minority samples and their nearest neighbors, helping to balance the dataset and reduce bias in machine learning models.

Why are Large Multimodal Models (LMMs) considered more vulnerable to bias?

LMMs are considered more vulnerable because they combine multiple modalities, each potentially carrying its own biases. The interaction between modalities can amplify these biases, creating stronger and more pervasive stereotypes than single-modality models. Additionally, LMMs have received less research attention regarding fairness compared to Large Language Models (LLMs).

What is CA-GAN and how does it help with bias?

CA-GAN (Conditional Attention GAN) is an advanced generative adversarial network architecture designed to handle imbalanced datasets. It uses stacked Bidirectional LSTMs and conditional labels to generate high-quality synthetic data for minority classes, improving model fairness and reducing mode collapse issues common in traditional GANs.

Tonya Trottman

May 8, 2026 AT 21:05Oh, look at us, playing detective with algorithms now. "Dataset bias" is just a fancy term for "the internet is full of idiots and the AI learned from them." If you want your CEO to look like anyone other than a white guy in a suit, maybe stop scraping LinkedIn profiles from 2015 where everyone was trying to look like they belonged on a Forbes cover. The model isn't biased; it's just reflecting the collective narcissism of the training data. And don't get me started on SMOTE. Interpolating between two minority samples doesn't create diversity; it creates statistical ghosts that haunt your validation set. We need better data, not more math tricks to hide the fact that we fed the beast garbage.

Sheila Alston

May 9, 2026 AT 09:49This is exactly why I refuse to use any generative tools for my work. It’s not just about aesthetics or even accuracy anymore; it’s about complicity. Every time we deploy these models without rigorous ethical oversight, we are actively participating in the erasure of marginalized communities. The article mentions "underrepresentation," but let’s be clear: this is systemic violence encoded into code. We cannot simply "fix" this with synthetic data because the underlying power structures that created the imbalance remain intact. Until we address the root causes of digital inequality, any technical solution is merely a band-aid on a gaping wound. We need to demand accountability from the developers who prioritize profit over people.

sampa Karjee

May 9, 2026 AT 21:13The average layperson here clearly lacks the intellectual capacity to grasp the nuances of high-dimensional vector spaces. You speak of "bias" as if it were a moral failing rather than a mathematical inevitability in stochastic gradient descent. The issue is not that the model is "evil"; it is that your expectations are misaligned with the optimization landscape. CA-GANs are a sophisticated solution for those who actually understand the architecture, yet you reduce it to a simple "fairness" metric. Disappointing. Most of you would struggle to implement a basic GAN, let alone critique its societal impact. Read a paper before you type.

Victoria Kingsbury

May 11, 2026 AT 20:50I find the section on CA-GANs really interesting, especially how it uses Bidirectional LSTMs to handle the mode collapse issue. It seems like a promising approach for medical imaging datasets where representation matters so much for diagnostic accuracy. However, I’m curious about the computational cost involved in generating all that synthetic counterfactual data. Does it significantly slow down the training process? Also, the part about embedding-based similarity detection sounds cool, but I wonder how scalable it is for real-time applications. Overall, a very thorough breakdown of a complex problem!

Kieran Danagher

May 12, 2026 AT 16:32Let's cut the fluff. SMOTE is old news. If you're still using interpolation for image generation, you're doing it wrong. The real issue is that most engineers treat bias as a post-processing step rather than an architectural constraint. CA-GAN is nice, sure, but it's computationally expensive and often overfits to the specific distribution of the minority class. You need adversarial debiasing during the pre-training phase, not just synthetic oversampling. Stop patching the leak and fix the pipe. Also, nobody cares about "extrinsic bias" until someone gets sued.

Natasha Madison

May 12, 2026 AT 23:29They want you to believe it's just "data" but it's a coordinated effort to rewrite our history. The globalists control the servers and the algorithms. They decide what is "represented" and what is "noise." Don't trust the tech companies. They are building a surveillance state disguised as convenience. This bias is intentional. Wake up.

Patrick Sieber

May 13, 2026 AT 13:26I appreciate the detailed explanation of the three tiers of bias. It’s helpful to distinguish between preuse, intrinsic, and extrinsic factors when evaluating model performance. In my experience working with multimodal systems, the biggest challenge is indeed the lack of standardized metrics for qualitative assessment. Quantitative checks are easy to automate, but capturing the nuance of stereotypical imagery requires human input, which is hard to scale. Do you think there will be a shift towards more community-driven auditing platforms in the near future?

poonam upadhyay

May 14, 2026 AT 11:03Ugh! The sheer audacity of these tech bros thinking they can solve centuries of colonialism with a few lines of Python! 🙄 They scrape the web-yes, THE WEB, not some curated utopia-and then act shocked when the output looks like a dystopian nightmare! It’s not just "bias," it’s structural violence wrapped in a sleek UI! And don’t get me started on SMOTE! Interpolating data points doesn’t give voice to the silenced; it just creates digital phantoms! We need radical transparency, not more jargon-heavy papers that no one reads! The system is rigged, folks, and your "fairness metrics" are just another tool of oppression! 😤

OONAGH Ffrench

May 15, 2026 AT 01:50the gap between llm research and lmm fairness is stark indeed while text models have been scrutinized extensively vision models remain largely unexamined in terms of ethical implications this is concerning given that visual stereotypes are often more deeply ingrained in societal norms than textual ones the reliance on attention mechanisms means that frequent patterns are reinforced exponentially making mitigation difficult without significant architectural changes we must consider the philosophical underpinnings of representation itself is it possible to have neutral representation in a non-neutral world perhaps not but we can strive for awareness