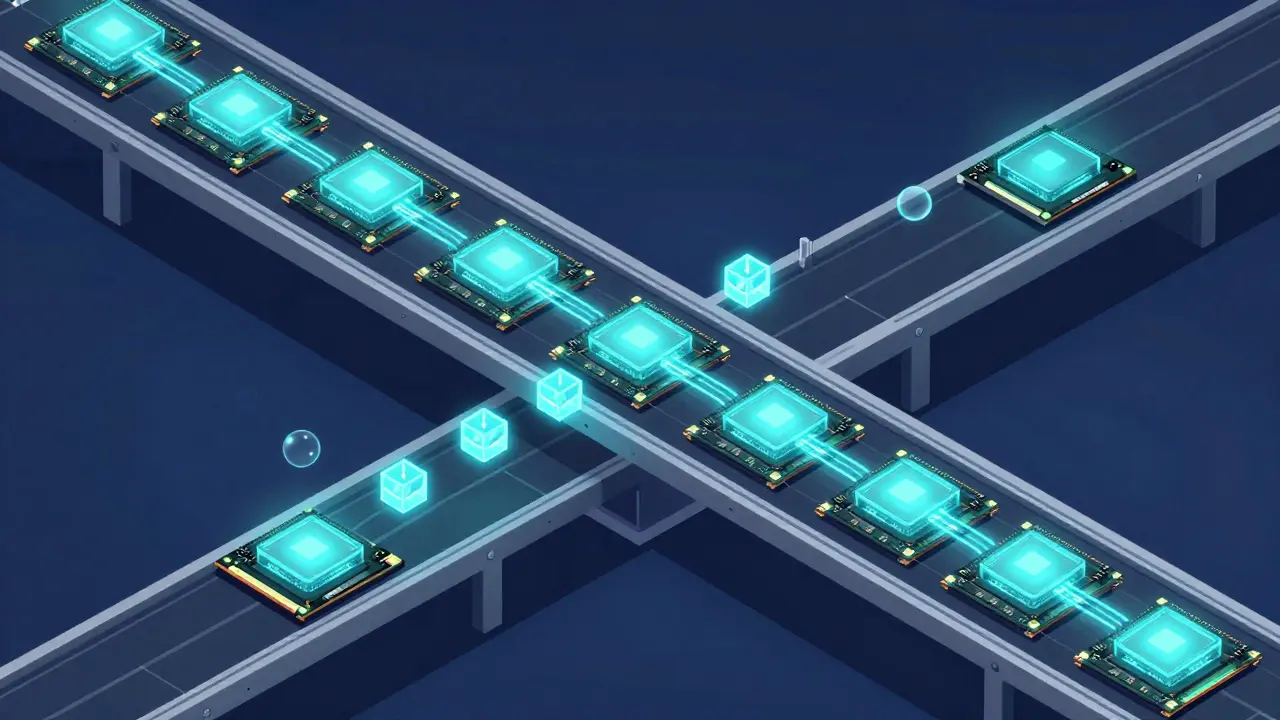

Trying to run a 70B-parameter model on a single GPU is like trying to fit a grand piano into a compact car: it simply doesn't happen. As Large Language Models (LLMs) balloon from a few billion to hundreds of billions of parameters, we've hit a hard wall: hardware memory. This is where Distributed Transformer Inference comes in. It is a set of techniques that allow a single model to execute across multiple computing devices by partitioning its components. If you want to move past the 10B parameter limit and achieve production-grade throughput, you can't just buy more RAM; you need a strategy to split the workload.

| Strategy | How it Works | Best For | Main Trade-off |
|---|---|---|---|
| Tensor Parallelism | Splits layers internally | Low latency, single-node | High communication overhead |
| Pipeline Parallelism | Splits layers sequentially | Scaling across nodes | Pipeline bubbles (idle time) |
| Expert Parallelism | Splits MoE experts | MoE models (e.g., DeepSeek) | Routing complexity |

The Heavy Lifting: Tensor Parallelism

Tensor Parallelism (TP) is a method that distributes the computation of individual transformer layers across multiple GPUs. In effect, we are slicing the math. Instead of one GPU calculating the entire multi-head attention mechanism, TP splits the attention heads. For example, if you have 32 heads and 4 GPUs, each GPU handles 8 heads. After the calculation, the system uses an "All-Reduce" operation to combine the partial results so that every GPU ends up with the full layer output.
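The head split can be sketched in NumPy on a single machine. This is a toy illustration rather than how any particular framework implements it: the function name `attention_for_heads`, the tiny dimensions, and the random weights are all assumptions, and the "GPUs" are just loop iterations. Each simulated GPU computes attention for its 8 heads and multiplies by its own slice of the output projection; summing those partial outputs plays the role of the All-Reduce.

```python
import numpy as np

def attention_for_heads(x, wq, wk, wv, wo, head_ids, head_dim):
    """Attention output contributed by a subset of heads (no causal mask, for brevity)."""
    partial = np.zeros((x.shape[0], wo.shape[1]))
    for h in head_ids:
        cols = slice(h * head_dim, (h + 1) * head_dim)
        q, k, v = x @ wq[:, cols], x @ wk[:, cols], x @ wv[:, cols]
        scores = q @ k.T / np.sqrt(head_dim)
        weights = np.exp(scores - scores.max(axis=-1, keepdims=True))
        weights /= weights.sum(axis=-1, keepdims=True)
        # A head only needs its own rows of the output projection.
        partial += (weights @ v) @ wo[cols, :]
    return partial

# Hypothetical toy sizes: 32 heads and 4 GPUs as in the text, tiny head_dim.
rng = np.random.default_rng(0)
seq_len, num_heads, head_dim, num_gpus = 6, 32, 4, 4
d_model = num_heads * head_dim
x = rng.normal(size=(seq_len, d_model))
wq, wk, wv, wo = (rng.normal(size=(d_model, d_model)) * 0.1 for _ in range(4))

# Reference: one device computes every head.
full = attention_for_heads(x, wq, wk, wv, wo, range(num_heads), head_dim)

# Tensor parallel: each "GPU" computes only its own heads.
heads_per_gpu = num_heads // num_gpus
partials = [
    attention_for_heads(
        x, wq, wk, wv, wo,
        range(g * heads_per_gpu, (g + 1) * heads_per_gpu),
        head_dim,
    )
    for g in range(num_gpus)
]

# The All-Reduce step: sum the partial outputs from all GPUs.
all_reduced = np.sum(partials, axis=0)
assert np.allclose(full, all_reduced)
```

The point of the sketch is that the sharded result matches the single-device result, and that the only cross-GPU step is the final sum, which is exactly the part that has to travel over the interconnect.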

TP is your go-to for keeping latency low. Because the GPUs work on the same layer simultaneously, the time it takes to get a single token out is minimized. However, there is a catch. All-Reduce operations are chatty. According to researchers from NVIDIA's Megatron-LM project, these communication patterns can become the dominant cost as you scale. If your interconnects aren't fast enough (think NVIDIA NVLink), the GPUs spend more time waiting for data than actually calculating, which can tank your performance by 30-50% if configured incorrectly.

The Assembly Line: Pipeline Parallelism

If Tensor Parallelism is about splitting a single task, Pipeline Parallelism (PP) is about splitting the sequence of tasks: it divides the model's sequential layers across different devices. Imagine a factory assembly line: GPU 1 handles layers 1-8, GPU 2 handles layers 9-16, and so on. The output of the first group becomes the input for the second.

This is how frameworks like vLLM, a high-throughput LLM serving engine that optimizes memory and throughput through PagedAttention and distributed parallelism, scale to massive models. PP is much friendlier to multi-node setups because it doesn't require the constant, high-bandwidth chatter that TP does. But it introduces "pipeline bubbles": periods where GPU 2 is sitting idle while waiting for GPU 1 to finish the first batch. To fix this, experts recommend sophisticated scheduling to mask that latency, effectively filling the gaps with other requests.
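To see why filling the gaps helps, here is a minimal simulation of a pipeline schedule. It is a sketch under strong assumptions: every stage takes the same time per unit of work, communication is free, and the function names are made up for this example. The units of work can be read as micro-batches or as independent requests.

```python
def pipeline_schedule(num_stages, num_microbatches):
    """Per time step, the micro-batch each stage works on (None means idle)."""
    total_steps = num_stages + num_microbatches - 1
    schedule = []
    for t in range(total_steps):
        row = []
        for stage in range(num_stages):
            # Stage s can only start micro-batch m once stage s-1 has finished it.
            mb = t - stage
            row.append(mb if 0 <= mb < num_microbatches else None)
        schedule.append(row)
    return schedule

def bubble_fraction(schedule):
    """Share of (time step, stage) slots in which a stage sits idle."""
    slots = [slot for row in schedule for slot in row]
    return slots.count(None) / len(slots)

one_big_batch = bubble_fraction(pipeline_schedule(num_stages=4, num_microbatches=1))
eight_pieces = bubble_fraction(pipeline_schedule(num_stages=4, num_microbatches=8))
print(f"{one_big_batch:.2f} {eight_pieces:.2f}")  # 0.75 0.27
```

With a single batch flowing through four stages, each GPU is idle three quarters of the time. Keeping eight units of work in flight drops the idle share to 3/11, which matches the closed form (stages - 1) / (microbatches + stages - 1) for this idealized schedule.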

Pushing LLMs to the Edge with MDI

Not everyone has a cluster of A100s. For those running on Raspberry Pi clusters or mobile hardware, Model-Distributed Inference (MDI) is the game-changer. Unlike standard PP, MDI accounts for the fact that your devices might be different (heterogeneous). You wouldn't give the same number of layers to a powerful GPU and a weak CPU; you'd assign layers proportional to their computational power.
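Proportional assignment can be sketched in a few lines. This is only an illustration of the idea, not the partitioning algorithm from any MDI paper: the device names and relative compute scores below are hypothetical, and the sketch ignores memory limits and network speed.

```python
def allocate_layers(num_layers, device_scores):
    """Split num_layers across devices in proportion to their compute scores."""
    total = sum(device_scores.values())
    exact = {name: num_layers * score / total for name, score in device_scores.items()}
    # Start from the rounded-down share for each device.
    plan = {name: int(share) for name, share in exact.items()}
    leftover = num_layers - sum(plan.values())
    # Hand the remaining layers to the devices with the largest fractional remainders.
    by_remainder = sorted(exact, key=lambda name: exact[name] - plan[name], reverse=True)
    for name in by_remainder[:leftover]:
        plan[name] += 1
    return plan

# Hypothetical relative throughput scores (e.g. measured tokens/s on a probe run).
devices = {"desktop_gpu": 8.0, "laptop_gpu": 3.0, "raspberry_pi": 1.0}
plan = allocate_layers(32, devices)
print(plan)  # {'desktop_gpu': 21, 'laptop_gpu': 8, 'raspberry_pi': 3}
```

A real planner would also cap each device by its available RAM, since a weak node can end up with zero layers or with more weights than it can hold.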

Recent benchmarks from 2025 show that optimal MDI partitioning, where the starter node handles the initial and final layers plus a few transformer blocks, can reduce latency by 37% on edge clusters. This flexibility is critical for privacy-focused apps where you want the model to stay on local hardware rather than hitting a cloud API.

Dealing with the KV Cache Bottleneck

If you've struggled with inference speed, the culprit is likely the KV (Key-Value) cache. In a distributed setup, managing this cache is a nightmare. When you split a model, you also split the memory that stores the "context" of the conversation. If you don't handle this efficiently, you end up with redundant prefill computations, which slows everything down.
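A quick back-of-the-envelope calculation shows how large the cache gets and how splitting the model splits it. The model shape below (80 layers, 8 key-value heads, head dimension 128, 16-bit values) is an illustrative assumption for a 70B-class model with grouped-query attention, and the helper name is made up for this example.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len, batch_size, bytes_per_value=2):
    """Bytes needed to cache keys and values for every layer, head, and token."""
    # The leading 2 accounts for storing both a key and a value vector.
    return 2 * num_layers * num_kv_heads * head_dim * seq_len * batch_size * bytes_per_value

GIB = 1024 ** 3

# Assumed workload: 16 concurrent sequences of 4096 tokens each.
total = kv_cache_bytes(80, 8, 128, seq_len=4096, batch_size=16)

# Tensor parallelism over 4 GPUs shards the KV heads: 8 heads become 2 per GPU.
per_tp_gpu = kv_cache_bytes(80, 8 // 4, 128, seq_len=4096, batch_size=16)

# Pipeline parallelism over 4 stages shards the layers: 80 layers become 20 per stage.
per_pp_stage = kv_cache_bytes(80 // 4, 8, 128, seq_len=4096, batch_size=16)

print(total / GIB, per_tp_gpu / GIB, per_pp_stage / GIB)  # 20.0 5.0 5.0
```

Under these assumptions the cache alone takes 20 GiB on top of the weights. Either split brings it down to 5 GiB per device, but each device then holds only a fragment of the context, which is why cache placement and reuse need explicit coordination.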

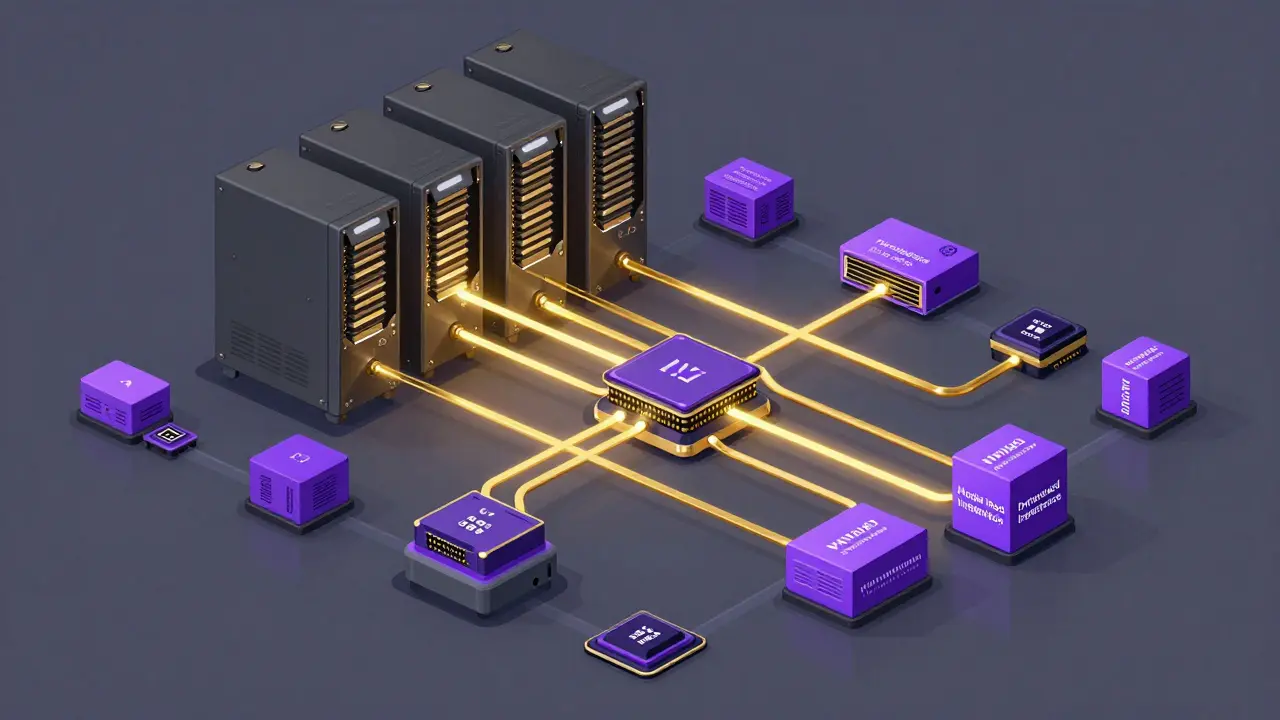

A breakthrough here is disaggregated inference. Frameworks like llm-d, a distributed inference framework by Red Hat that supports CPU/GPU disaggregation for cost-effective deployment, allow the prefill stage (processing the prompt) to run on CPUs while the decode phase (generating tokens) happens on GPUs. This decoupling can improve performance by 22% by allowing the system to reuse caches across different requests rather than recalculating them every time.

Choosing Your Parallelism Strategy

Deciding between TP and PP usually comes down to your hardware and your goals. If you have a single machine with NVLink and need the lowest possible latency for a single user, lean into Tensor Parallelism. If you're scaling across a network of servers and care more about total throughput (serving thousands of users), Pipeline Parallelism is the way to go.

For the best of both worlds, a hybrid approach is the gold standard. Combining TP and PP allows you to hit scaling efficiencies of around 89% for 13B models across 8 GPUs. Just be warned: the setup is a steep climb. While a basic deployment might take a few days, fine-tuning these configurations to avoid a 60% throughput drop often takes weeks of trial and error.
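One way to picture a hybrid layout is as a grid: GPUs within a pipeline stage form a tensor-parallel group, and the stages are chained together. The sketch below maps 8 GPU ranks onto such a grid. The function name, the 40-layer model, and the convention that consecutive ranks share a TP group (so they can sit on the same NVLink-connected node) are assumptions for illustration; check your framework's documentation for its actual rank ordering.

```python
def hybrid_layout(world_size, tp_size, pp_size, num_layers):
    """Map each GPU rank to a pipeline stage, a tensor-parallel rank, and a layer range."""
    if tp_size * pp_size != world_size:
        raise ValueError("tp_size * pp_size must equal the number of GPUs")
    if num_layers % pp_size != 0:
        raise ValueError("this sketch assumes layers divide evenly across stages")
    layers_per_stage = num_layers // pp_size
    layout = []
    for rank in range(world_size):
        # Consecutive ranks share a stage, so TP traffic stays within one group.
        stage, tp_rank = divmod(rank, tp_size)
        first = stage * layers_per_stage
        layout.append({
            "rank": rank,
            "pp_stage": stage,
            "tp_rank": tp_rank,
            "layers": (first, first + layers_per_stage - 1),  # inclusive range
        })
    return layout

# Hypothetical setup: 8 GPUs, TP=4 inside each node, PP=2 across nodes, 40 layers.
layout = hybrid_layout(world_size=8, tp_size=4, pp_size=2, num_layers=40)
for entry in layout:
    print(entry)
```

In this arrangement ranks 0-3 each hold a quarter of every tensor in layers 0-19, and ranks 4-7 do the same for layers 20-39. The chatty All-Reduce traffic stays inside each group of four, while only activations cross the slower link between the two stages.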

What is the main difference between Tensor and Pipeline Parallelism?

Tensor Parallelism splits a single layer across multiple GPUs, meaning they work on the same part of the model at the same time. Pipeline Parallelism splits the model into chunks of layers, where one GPU finishes its set of layers before passing the data to the next GPU in the chain.

Can I run distributed inference on CPUs?

Yes, though it is significantly slower. Frameworks like llm-d allow for a hybrid approach where the CPU handles the initial prefill stage and the GPU handles the token generation, which helps reduce hardware costs.

Why does Tensor Parallelism require NVLink?

Because TP requires constant "All-Reduce" communication between GPUs to sync results, the standard PCIe bus is often too slow. NVLink provides the high-bandwidth, low-latency connection needed to prevent the GPUs from spending all their time waiting for data.

What are "pipeline bubbles"?

Pipeline bubbles are periods of inactivity where a GPU in a pipeline is idle because it is waiting for the previous GPU in the sequence to finish its computation and pass the data forward.

How does Expert Parallelism differ from the others?

Expert Parallelism is specifically for Mixture of Experts (MoE) models. Instead of splitting every layer, it distributes different "experts" (specialized sub-networks) across different GPUs, and a router decides which expert should handle a specific token.
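The routing step can be sketched as follows. This is a simplified picture with made-up router scores and a hypothetical `route_tokens` helper: each token picks its top-k experts, and the assignments are grouped by the GPU that hosts each expert. Real systems add load balancing and capacity limits that are left out here.

```python
def route_tokens(router_scores, top_k, experts_per_gpu):
    """Group (token, expert) assignments by the GPU that hosts each expert."""
    dispatch = {}
    for token_id, scores in enumerate(router_scores):
        # Rank experts for this token from highest to lowest router score.
        ranked = sorted(range(len(scores)), key=lambda e: scores[e], reverse=True)
        for expert in ranked[:top_k]:
            # Experts are placed contiguously: experts 0..n-1 on GPU 0, and so on.
            gpu = expert // experts_per_gpu
            dispatch.setdefault(gpu, []).append((token_id, expert))
    return dispatch

# Hypothetical router outputs for 3 tokens over 4 experts (2 experts per GPU).
scores = [
    [0.10, 0.60, 0.25, 0.05],
    [0.05, 0.15, 0.30, 0.50],
    [0.55, 0.30, 0.10, 0.05],
]
dispatch = route_tokens(scores, top_k=2, experts_per_gpu=2)
print(dispatch)
# {0: [(0, 1), (2, 0), (2, 1)], 1: [(0, 2), (1, 3), (1, 2)]}
```

Token 0 needs experts on both GPUs, so its hidden state has to be sent to each of them and the results combined afterwards. That cross-device shuffle is where the routing complexity in the comparison table comes from.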

Next Steps for Implementation

If you're just starting, don't try to implement a hybrid TP/PP setup on day one. Start with Pipeline Parallelism; it's more intuitive and less likely to crash your system with cryptic communication errors. Once you have a stable pipeline, look into optimizations like chunked prefill in vLLM to boost throughput for long-context prompts.

For those targeting edge deployments, focus on adaptive partitioning. Don't assume all your nodes are equal. Map out the RAM and TFLOPS of each device and allocate your transformer blocks accordingly to avoid a single slow node becoming a bottleneck for the entire cluster.

Sanjay Mittal

April 27, 2026 AT 12:51

For anyone struggling with pipeline bubbles, looking into micro-batching is usually the most effective way to keep those GPUs saturated. Instead of passing one massive chunk, you break it into smaller pieces so the later stages can start working while the first stage is still processing the rest of the batch. It significantly smooths out the throughput.

sonny dirgantara

April 29, 2026 AT 04:24

pp is way easier to set up thn tp.

Mike Zhong

April 30, 2026 AT 02:11

We are treating these models like gods just because we found a way to slice them up across a few A100s, but the fundamental inefficiency of the transformer architecture remains. We're just throwing more hardware at a broken paradigm. It's a brute-force approach to intelligence that ignores the elegance of biological neural networks, all for the sake of slightly faster token generation in a corporate chatbot.

Johnathan Rhyne

April 30, 2026 AT 15:15

The audacity to suggest a hybrid setup is the "gold standard" is quite a scrumptious bit of hyperbole! While the math checks out, the sheer nightmare of configuring the communication primitives makes it more of a masochistic ritual than a standard procedure. Also, let's not pretend that NVLink is the only way to avoid a performance crater, even if it is the most glamorous way.

Jamie Roman

May 1, 2026 AT 10:20

I've been thinking about how this applies to smaller hobbyist setups and it's honestly so cool to see MDI getting some love here because most of us aren't exactly swimming in H100 clusters, right? It's kind of like a community effort where every little bit of RAM on an old workstation counts, and while the latency might not be world-beating, there's something really rewarding about getting a massive model to actually breathe on hardware that wasn't designed for it in the first place.

Andrew Nashaat

May 1, 2026 AT 19:01

The lack of a comma in some of these technical descriptions is practically a crime!!! It's just so disheartening when people prioritize "speed" over basic literacy... but anyway, the point about KV cache is spot on, even if the delivery was a bit rushed!!!

Salomi Cummingham

May 3, 2026 AT 03:09

Oh my goodness, the sheer stress of trying to avoid a 60% throughput drop is practically a horror movie for any DevOps engineer tasked with this deployment! I can only imagine the absolute chaos and the sleepless nights spent staring at telemetry dashboards, praying that the All-Reduce operation doesn't just decide to give up on life and crash the entire node in the middle of a production rollout!

Lauren Saunders

May 4, 2026 AT 12:27

Obviously, anyone with a modicum of experience knows that TP is only viable if you have the budget for a proper InfiniBand setup. Suggesting that it's a "go-to" for low latency without emphasizing the astronomical cost is just quaint. Most of these "solutions" are just glorified ways to burn venture capital on electricity.

Jawaharlal Thota

May 5, 2026 AT 18:41

I really appreciate the breakdown of the different parallelism types because it makes the transition from single-GPU to multi-node feel much less intimidating for those of us who are just starting to scale our models. If you're feeling stuck, I'd highly recommend spending some extra time reading the vLLM documentation on PagedAttention since it's the foundation for so much of this memory optimization and really helps in understanding why the KV cache is such a pain point in the first place.

Jeanie Watson

May 6, 2026 AT 18:49

Too many words for a basic summary of TP vs PP.