Remember when bigger was always better? For a while, that was the only rule in artificial intelligence. If you wanted a smarter model, you just threw more parameters at it. But by 2024, that strategy hit a wall. We ran out of cheap compute, energy bills skyrocketed, and training runs stretched into months. Enter Sparse and Dynamic Routing, a paradigm shift primarily implemented through Mixture of Experts (MoE) frameworks. This isn't just a tweak; it's a fundamental rewrite of how large language models process information. Instead of activating every single neuron for every single word, these architectures act like efficient traffic controllers, sending data only where it needs to go. The result? Trillion-parameter models that run as fast as their much smaller dense counterparts.

The End of the Dense Model Era

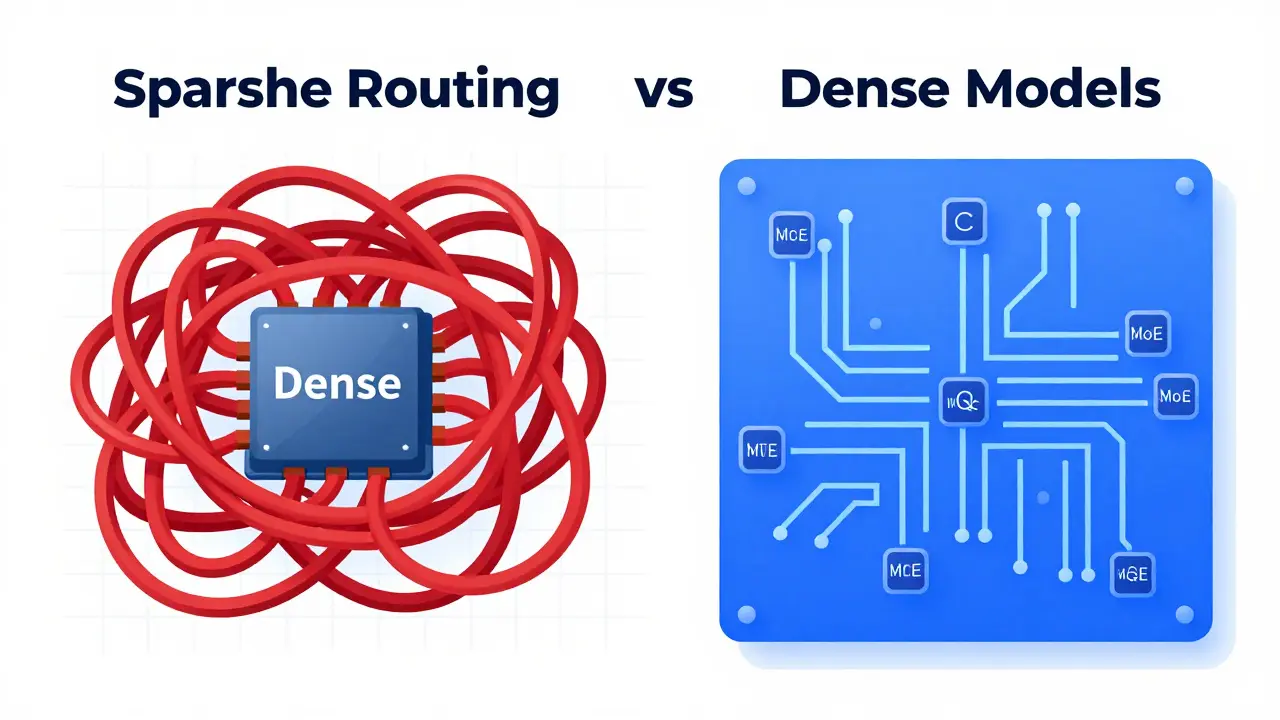

To understand why we need sparse routing, you have to look at the problem with traditional "dense" neural networks. In a dense model, every input token activates every parameter in the network. It’s like reading a book by highlighting every single word on every page, even if you only care about the plot summary. As models grew beyond billions of parameters, the computational cost grew right along with them: every added parameter meant more work for every token, in training and at inference. Training GPT-3 was already expensive, but scaling past that point became economically infeasible for most organizations.

The bottleneck wasn’t just money; it was physics. Memory requirements strained advanced hardware systems, and energy consumption raised serious environmental concerns. Researchers realized that adding more parameters provided diminishing returns: each additional increment of compute bought a smaller performance gain than the last. That’s where the concept of sparsity came in. By keeping the total parameter count massive but activating only a tiny fraction during inference, we can maintain representational capacity without paying the full computational price.

How Mixture of Experts Works

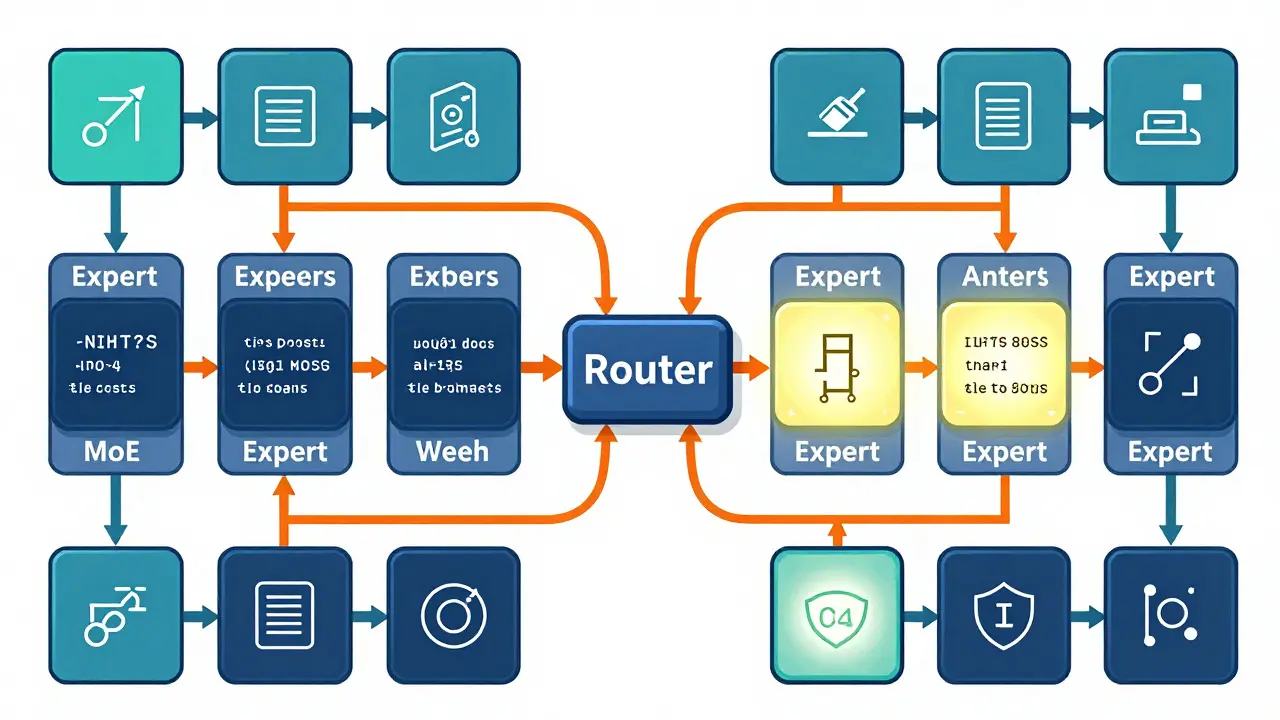

At the heart of this new architecture is the Mixture of Experts (MoE). Think of an MoE model as a team of specialists rather than a generalist army. Instead of one giant brain trying to handle everything from poetry to Python code, you have dozens (or even hundreds) of specialized "expert" networks. Each expert is good at specific tasks or patterns.

Here is the magic trick: a gating network, often called a router, decides which experts should handle each input token. For any given token, the router typically selects only the top 1 or 2 experts out of 8 to 128 available options. This means that while the model might have 1 trillion parameters in total, only a small subset is actually active during any single inference step: 12.5% to 25% of the expert parameters when there are 8 experts, and under 2% when there are 128. The outputs from the selected experts are then combined, weighted by their routing scores, to produce the layer's output.
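To make the routing step concrete, here is a minimal sketch in plain Python. It assumes the router scores for one token are already computed (in a real model they come from a learned layer), and it renormalizes the selected weights so they sum to 1, which is one common convention rather than the only one. The function names and the toy experts are hypothetical.

```python
import math

def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(router_logits, k=2):
    """Return [(expert_index, weight), ...] for the k highest-scoring experts.

    Weights are the router probabilities of the chosen experts,
    renormalized to sum to 1 (an assumption of this sketch).
    """
    probs = softmax(router_logits)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]

def moe_forward(token, router_logits, experts, k=2):
    """Combine the outputs of the selected experts, weighted by routing score."""
    out = [0.0] * len(token)
    for idx, weight in route_top_k(router_logits, k):
        expert_out = experts[idx](token)  # only k experts are ever called
        out = [o + weight * e for o, e in zip(out, expert_out)]
    return out

# Toy setup: 8 hypothetical experts, each scaling the token by a constant.
experts = [lambda t, s=s: [s * x for x in t] for s in range(1, 9)]
logits = [0.1, 2.0, -1.0, 0.3, 1.5, 0.0, -0.5, 0.2]  # router scores for one token

chosen = route_top_k(logits, k=2)
output = moe_forward([1.0, 2.0], logits, experts, k=2)
print(chosen)  # experts 1 and 4 are selected; the other six never run
print(output)
```

The point to notice is in `moe_forward`: six of the eight experts are never called for this token, which is where the compute savings come from.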

This sparse activation pattern changes the scaling game entirely. Per-token computational cost now tracks the number of active parameters rather than the total, so adding experts grows the model's capacity without a matching rise in compute. You get the benefits of a massive knowledge base without the latency or energy drain of running every parameter on every token.
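A quick back-of-the-envelope calculation shows how total and active parameter counts diverge. The sketch below treats the model as a block of always-on shared parameters (attention, embeddings) plus a pool of experts; the specific sizes are made up for illustration and do not describe any real model.

```python
def moe_param_counts(shared_params, params_per_expert, num_experts, top_k):
    """Return (total, active-per-token) parameter counts for a simplified MoE."""
    total = shared_params + num_experts * params_per_expert
    active = shared_params + top_k * params_per_expert
    return total, active

# Hypothetical sizes, chosen only to make the arithmetic easy to follow.
total, active = moe_param_counts(
    shared_params=2_000_000_000,      # always used, regardless of routing
    params_per_expert=5_000_000_000,
    num_experts=8,
    top_k=2,
)
print(f"total:  {total / 1e9:.0f}B parameters")
print(f"active: {active / 1e9:.0f}B parameters per token ({active / total:.1%})")
# total:  42B parameters
# active: 12B parameters per token (28.6%)
```

Note that the active share (28.6%) is higher than the naive 2-out-of-8 ratio (25%) because the shared parameters run for every token. Doubling `num_experts` would raise the total to 82B while leaving the active count at 12B.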

| Feature | Dense Models | Sparse (MoE) Models |
|---|---|---|
| Activation Pattern | All parameters activated per token | Only top-k experts activated (e.g., 1-2 out of 128) |
| Scaling Cost | Per-token compute grows with total parameter count | Per-token compute grows with active parameter count |
| Memory Usage | All parameters stored and used for every token | All parameters stored; only the selected experts are read per token |
| Energy Efficiency | Lower (30-50% more energy) | Higher (significant reduction) |
| Complexity | Simpler implementation | Requires specialized routing infrastructure |

The Role of Dynamic Routing and RouteSAE

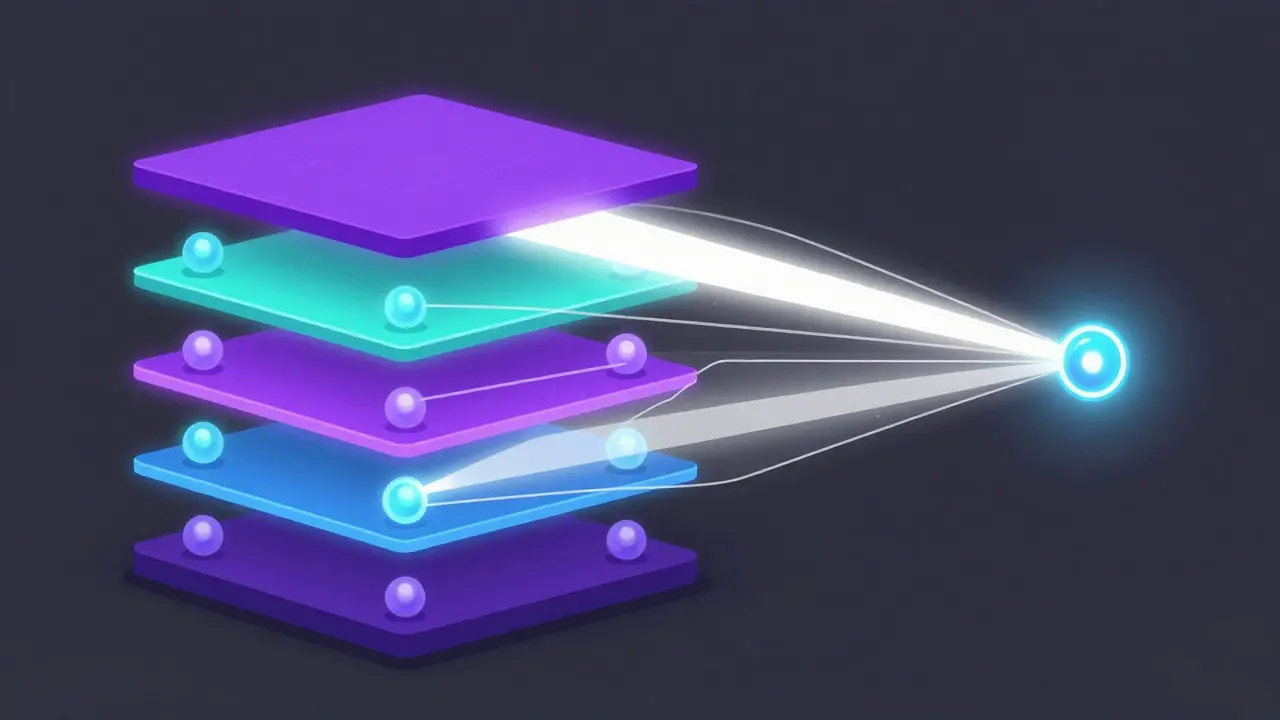

Static sparsity isn’t enough. The real power comes from dynamic routing: the ability to change which components are used based on the context of the input. Recent developments, such as the Route Sparse Autoencoder (RouteSAE), carry the same idea over to interpretability tooling. Introduced around 2025, RouteSAE incorporates a lightweight router that dynamically integrates multi-layer residual streams from language models.

Instead of looking at just one layer of the network, RouteSAE computes normalized weights for activations from multiple layers. It uses sum pooling to form a condensed representation vector, and the router turns that vector into one probability $p_i$ per layer, so the weights depend on the input. Formally, it selects the layer with the highest probability, $i^* = \arg\max_i p_i$. Because the chosen layer changes from input to input, a single autoencoder can capture both low-level features from shallow layers and high-level abstract features from deep layers.
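The layer-selection step can be sketched in a few lines of plain Python. This is an illustration under stated assumptions, not the RouteSAE reference implementation: it assumes the pooling is a sum across layers, that the router is a single linear projection (`router_weights`, a hypothetical name), and that the chosen activation is scaled by its probability before being passed on.

```python
import math

def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def route_layer(layer_activations, router_weights):
    """Pick one layer's activation for a token.

    layer_activations: list of L vectors, one per layer, each of size d
    router_weights:    L x d matrix (assumed linear router)
    Returns (i_star, probs, routed_vector).
    """
    d = len(layer_activations[0])
    # Sum pooling across layers gives one condensed vector of size d.
    pooled = [sum(act[j] for act in layer_activations) for j in range(d)]
    # One logit per layer, then normalize into probabilities p_i.
    logits = [sum(w * v for w, v in zip(row, pooled)) for row in router_weights]
    probs = softmax(logits)
    # Hard routing: i* = argmax_i p_i.
    i_star = max(range(len(probs)), key=lambda i: probs[i])
    # Scaling by p_{i*} is an assumption of this sketch.
    routed = [probs[i_star] * x for x in layer_activations[i_star]]
    return i_star, probs, routed

# Toy input: 3 layers, hidden size 2.
activations = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
router_weights = [[0.5, 0.0], [0.0, 0.1], [0.2, 0.4]]
i_star, probs, routed = route_layer(activations, router_weights)
print(i_star)  # 2: the third layer has the largest router logit
```

The routed vector would then be fed to a shared sparse autoencoder, which is outside the scope of this sketch.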

Why does this matter? Traditional approaches often struggled with interpretability. RouteSAE is reported to achieve a 22.3% improvement in interpretation scores compared to layer-specific approaches under the same sparsity constraints. It maintains robust interpretability regardless of sparsity settings, which suggests that we don’t have to sacrifice transparency for efficiency.

Implementation Challenges and Pitfalls

If sparse routing is so great, why aren’t all models using it yet? The answer lies in complexity. Implementing MoE architectures requires specialized infrastructure. You still have to store all those parameters in memory, even if you don’t use them all at once. This creates significant memory bandwidth requirements. Random memory access patterns inherent in dynamic routing can stall modern hardware architectures that are optimized for sequential access.
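The gap between what must be stored and what is actually used can be put in numbers. The sketch below reuses the 1-trillion-parameter, 12.5%-active figures from earlier and assumes 2 bytes per parameter (16-bit weights). It counts weights only and ignores activations, caches, and any training state.

```python
def weight_memory_gb(num_params, bytes_per_param=2):
    """Memory needed just to hold the weights, in decimal gigabytes."""
    return num_params * bytes_per_param / 1e9

total_params = 1_000_000_000_000   # 1 trillion parameters
active_fraction = 0.125            # 12.5% active per token (illustrative)

resident_gb = weight_memory_gb(total_params)
touched_gb = weight_memory_gb(int(total_params * active_fraction))

print(f"must stay resident: {resident_gb:.0f} GB")
print(f"read per token:     {touched_gb:.0f} GB")
# must stay resident: 2000 GB
# read per token:     250 GB
```

Sparsity cuts the work per token, not the storage bill. And because different tokens in a batch pick different experts, a busy server may touch most of the 2000 GB within a single batch.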

Then there’s the issue of load balancing. If the router keeps sending tokens to the same few experts, you get "expert collapse." Some experts become overworked bottlenecks, while others sit idle. NVIDIA’s documentation highlights that preventing this requires careful attention to routing mechanisms. Researchers use auxiliary loss functions to encourage balanced utilization, ensuring no single expert carries the entire workload.
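One widely used auxiliary loss, the form described for the Switch Transformer, multiplies the fraction of tokens sent to each expert by the average router probability for that expert and sums over experts. The sketch below is a plain-Python version for top-1 routing; the function name and the `alpha` default are choices made for this example.

```python
def load_balancing_loss(router_probs, assignments, num_experts, alpha=0.01):
    """Auxiliary loss: alpha * N * sum_i f_i * P_i.

    router_probs: per-token probability lists, each of length num_experts
    assignments:  chosen expert index for each token (top-1 routing)
    """
    num_tokens = len(assignments)
    # f[i]: fraction of tokens dispatched to expert i
    f = [0.0] * num_experts
    for expert in assignments:
        f[expert] += 1.0 / num_tokens
    # P[i]: mean router probability given to expert i
    P = [sum(p[i] for p in router_probs) / num_tokens for i in range(num_experts)]
    return alpha * num_experts * sum(fi * pi for fi, pi in zip(f, P))

# Balanced routing: four tokens spread evenly over four experts.
balanced = load_balancing_loss(
    router_probs=[[0.25, 0.25, 0.25, 0.25]] * 4,
    assignments=[0, 1, 2, 3],
    num_experts=4,
)

# Collapsed routing: every token goes to expert 0.
collapsed = load_balancing_loss(
    router_probs=[[0.97, 0.01, 0.01, 0.01]] * 4,
    assignments=[0, 0, 0, 0],
    num_experts=4,
)

print(balanced, collapsed)  # the collapsed case is penalized more heavily
```

The loss is smallest when tokens are spread evenly, so adding it to the training objective nudges the router away from overloading a few experts. The per-expert fractions in `f` also double as a simple utilization metric to watch in production.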

Training stability is another hurdle. Cameron R. Wolfe, Ph.D., notes that while the extra parameters provide huge representational capacity, they introduce challenges in training stability that dense models simply don’t face. Suboptimal routing decisions can degrade performance quickly. Practitioners report a learning curve of 3 to 6 months to achieve production-ready implementations, requiring expertise in both traditional neural network training and specialized routing algorithms.

Market Adoption and Future Trajectory

Despite these challenges, the industry is moving fast. Major players like Google (Switch Transformer), Meta (Llama 4), and Mistral (Mixtral) have already shipped models built on MoE techniques. The driver is clear: efficiency. With energy costs rising, the 30-50% reduction in inference energy consumption attributed to MoE models is too valuable to ignore.

Cerebras has gone so far as to declare that "to reach trillion-parameter models that are trainable and deployable, sparsity through MoE is becoming the only viable approach." This sentiment is echoed across the research community. The focus is shifting from whether MoE works to how we can make it work better. Current research at venues such as the ICLR 2025 workshops explores hybrid approaches that combine different sparsity mechanisms.

Future developments will likely focus on hardware-software co-design. We need chips built specifically to handle the random memory access patterns of sparse computation. We also expect to see more sophisticated routing policies, including hierarchical expert structures and adaptive sparsity levels that adjust based on the complexity of the query.

Key Takeaways for Developers and Strategists

If you are building or deploying AI systems today, here is what you need to know:

- Efficiency is King: Dense models are hitting a ceiling. Sparse routing offers a path to scale without breaking the bank or the planet.

- Infrastructure Matters: You cannot just swap out a dense model for an MoE model. Your hardware must support high memory bandwidth and efficient random access.

- Beware Expert Collapse: Monitor your routing metrics closely. Uneven utilization will kill performance faster than raw lack of compute.

- Interpretability Improves: Tools like RouteSAE show that sparsity can actually help us understand model behavior better, not worse.

- Long-Term Viability: This is not a temporary hack. It is the foundational architecture for the next decade of AI scaling.

The shift to sparse and dynamic routing is inevitable. It solves the economic and physical constraints that threatened to halt progress. While the implementation is complex, the rewards in speed, cost, and capability are substantial. As we move through 2026, the question is no longer if you should adopt these architectures, but how quickly you can master them.

What is the main difference between dense and sparse models?

In dense models, every parameter is activated for every input token, so per-token compute grows with the full parameter count. In sparse models, specifically Mixture of Experts (MoE), only a small subset of parameters (experts) is activated per token, so compute tracks the active subset and inference costs are significantly lower.

How does dynamic routing improve model performance?

Dynamic routing allows the model to select the most relevant "experts" for each specific input token. This specialization ensures that complex queries are handled by the best-suited components, improving accuracy and efficiency compared to static activation patterns.

What is RouteSAE and why is it important?

RouteSAE is a sparse autoencoder framework that uses dynamic routing across multiple layers of a language model. It improves interpretability by capturing both low-level and high-level features simultaneously, achieving higher interpretation scores than traditional layer-specific approaches.

What are the biggest challenges in implementing MoE architectures?

Key challenges include keeping massive parameter sets in memory and supplying enough bandwidth to reach them, preventing "expert collapse" where some experts are overused, and ensuring training stability. Specialized hardware and software infrastructure are required to handle the random memory access patterns.

Is sparse routing the future of AI?

Very likely. Industry leaders like Cerebras and many researchers argue that MoE-based sparse routing is becoming the only viable approach for scaling to trillion-parameter models. It addresses critical issues of computational cost and energy consumption while maintaining or improving performance.