When your company relies on a large language model (LLM) to process patient records, approve loans, or respond to customer service tickets, a slow response or an outage isn’t just inconvenient; it’s expensive. Gartner estimates that in regulated industries, every minute of AI downtime costs an average of $5,600. That’s why enterprise teams don’t just pick an LLM based on performance benchmarks. They demand specific, measurable, enforceable contracts that guarantee uptime, speed, security, and support. These are called Service Level Agreements, or SLAs. And if your vendor can’t deliver on these, you’re taking unnecessary risk.

Uptime Isn’t Just ‘99.9%’: It’s About What Happens When It Drops

Most LLM providers advertise a 99.9% uptime SLA. Sounds solid, right? But 99.9% means you can still lose about 43 minutes of service each month. For a customer service bot handling 20,000 queries a minute, that’s over 800,000 unanswered requests. That’s not a glitch; it’s a breakdown in trust. The real differentiator? Tiered guarantees. Microsoft Azure OpenAI and Amazon Bedrock now offer 99.95% uptime for premium contracts (just 21.6 minutes of downtime per month). But in healthcare and finance, where every second counts, leading enterprises are pushing for 99.99%, which allows only 4.32 minutes of downtime per month. Providers like Google Cloud AI and Anthropic have started offering this for mission-critical workloads, but only if you’re willing to pay for it.

And here’s what most vendors don’t tell you: uptime SLAs often exclude model-specific outages. In January 2025, GPT-4 had a major performance dip. Many enterprises couldn’t switch to another model mid-flow because their contracts didn’t allow it. That’s not a technical issue; it’s an SLA flaw. Enterprises need clauses that let them route traffic to backup models without penalty.

Latency SLAs: Speed Isn’t Optional, It’s a Requirement

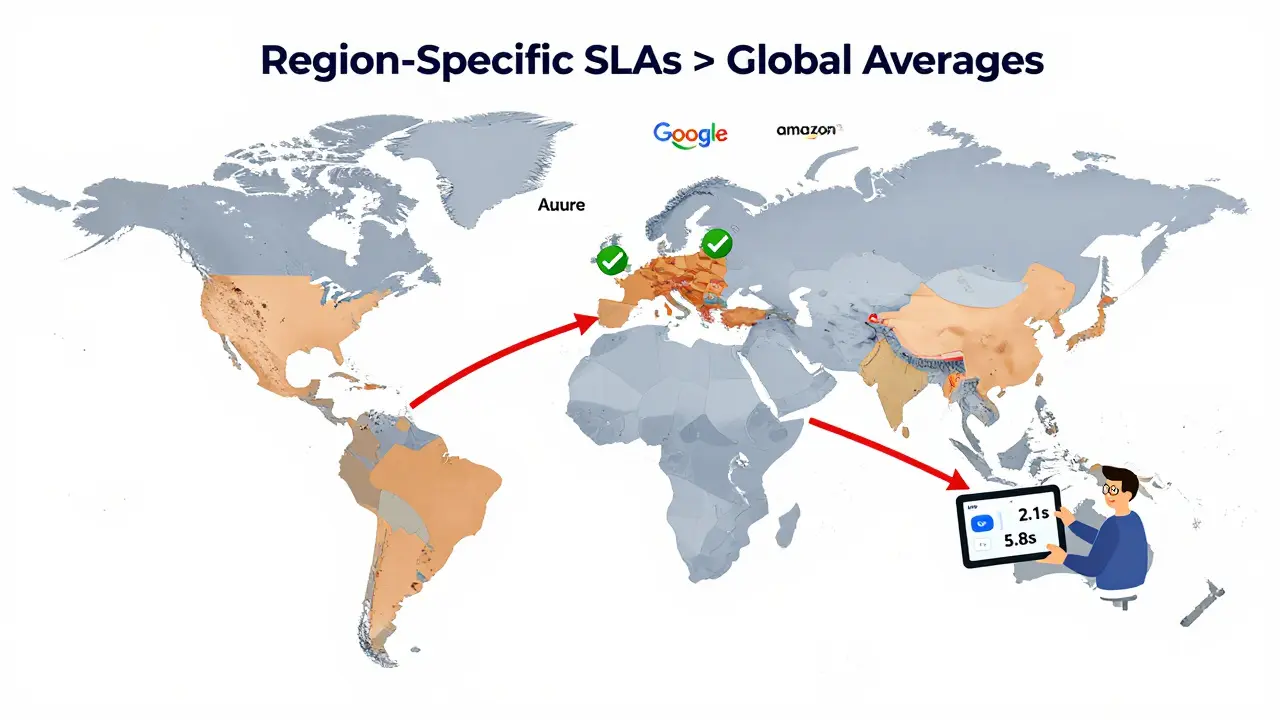

Response time matters more than you think. A 3-second delay in a financial fraud detection system can mean the difference between stopping a $50,000 transaction and letting it slip through. Standard enterprise SLAs now require 95% of requests to respond in under 2-3 seconds under normal load. During peak hours (think Black Friday or tax season), that buffer stretches to 5-7 seconds. But here’s the catch: many providers measure latency from their servers, not from your application. If your users in Europe are hitting a U.S.-based endpoint, your real-world latency could be double. Leading providers like Azure OpenAI and Google Vertex AI now offer regional endpoints with SLAs that guarantee performance within specific geographic zones. If your SLA doesn’t specify location-based response times, you’re not getting the full picture.

Security and Compliance: The Hidden SLA That Saves Your Business

Uptime and speed are visible. Compliance is invisible until you get fined. HIPAA, GDPR, SOC 2, FedRAMP High, DoD IL4/IL5: these aren’t buzzwords. They’re legal requirements. And if your LLM provider doesn’t have these certifications baked into their SLA, you’re on the hook for the violation. Anthropic’s zero data retention policy, independently audited in March 2025, became a selling point for a major hospital system that avoided a $2.3 million HIPAA penalty during an audit. But certifications alone aren’t enough. You need to know:

- Where your data is stored and processed (Google Cloud AI offers 22 regional data centers)

- Whether prompts or outputs are logged (some providers store them for model improvement)

- How encryption works (AES-256 for data at rest, TLS 1.3 for data in transit)

- Who has access to your data (and whether they’re audited)

Support Isn’t a Ticket System: It’s a Lifeline

When your AI system goes down at 2 a.m. on a Sunday, who do you call? Most providers offer email support with a 4-hour response time for standard enterprise plans. That’s fine for internal chatbots. It’s disastrous for revenue-critical systems. Premium contracts now include:

- 1-hour response time for Severity 1 issues

- 15-minute response windows for mission-critical clients

- 24/7 dedicated engineers with direct phone access

- Named account managers who understand your architecture

Model Versioning and Transparency: The Overlooked SLA Clause

You built your application on GPT-4-turbo. Then, without warning, the provider rolls out GPT-4-turbo-v2. Your prompts break. Your outputs change. Your compliance logs no longer match. Most SLAs don’t address this. But Gartner’s Senior Director Analyst David Groom says it’s the most overlooked requirement: “Enterprises need explicit commitments about how long specific model versions will remain available before mandatory upgrades.” Leading providers now offer version lock guarantees:

- Azure OpenAI: 12-month minimum version support

- Amazon Bedrock: 6-month version retention with opt-in upgrades

- Anthropic: Model version freeze for 18 months on enterprise contracts
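To see what these retention periods mean for a release calendar, here is a minimal sketch that turns them into an expiry date. It assumes the lock window starts on the day you pin a version, which your contract may define differently; the provider keys and the function names (`add_months`, `lock_expiry`, `days_until_forced_upgrade`) are hypothetical and not part of any vendor SDK.

```python
import calendar
from datetime import date

# Retention periods in months, as quoted in the list above.
VERSION_LOCK_MONTHS = {
    "azure-openai": 12,
    "amazon-bedrock": 6,
    "anthropic": 18,
}


def add_months(start: date, months: int) -> date:
    """Return the date `months` calendar months after `start`.

    The day is clamped to the length of the target month,
    so Aug 31 + 6 months becomes Feb 28 (or Feb 29 in a leap year).
    """
    month_index = start.month - 1 + months
    year = start.year + month_index // 12
    month = month_index % 12 + 1
    day = min(start.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def lock_expiry(provider: str, pinned_on: date) -> date:
    """Earliest date a mandatory upgrade could happen (assumed start: pin date)."""
    return add_months(pinned_on, VERSION_LOCK_MONTHS[provider])


def days_until_forced_upgrade(provider: str, pinned_on: date, today: date) -> int:
    """Days left in the lock window; negative once the window has passed."""
    return (lock_expiry(provider, pinned_on) - today).days
```

A check like this can run in CI or a scheduled job so that prompt regression testing starts well before the window closes, rather than after an upgrade lands.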

Hidden Costs: The Real Price of LLMs

The monthly fee you see? It’s not the full cost. AIMultiple’s December 2024 analysis found that enterprises pay 20-40% more in hidden operational expenses:

- Dedicated GPU clusters to avoid throttling

- Additional security layers for data residency

- Custom monitoring tools to track SLA compliance

- Legal review fees to negotiate SLA terms

Who Leads in 2026?

Here’s how the top providers stack up on SLA essentials:

| Provider | Uptime SLA | Latency Guarantee | Compliance Certifications | Support Response | Model Version Lock |
|---|---|---|---|---|---|
| Microsoft Azure OpenAI | 99.9% (standard), 99.95% (premium) | 2s avg, 5s peak (regional endpoints) | FedRAMP High, HIPAA, GDPR, SOC 2, DoD IL4/IL5 | 4h (standard), 1h (premium), 15m (mission-critical) | 12-month minimum |
| Amazon Bedrock | 99.9% (standard) | 2.5s avg, 6s peak | HIPAA, GDPR, SOC 2 | 4h (standard), 1h (premium) | 6-month retention |
| Google Vertex AI | 99.9% (standard) | 2s avg, 5s peak | HIPAA, GDPR, SOC 2 | 4h (standard), 1h (premium) | 3-month retention |
| Anthropic (Claude 4) | 99.9% (standard), 99.95% (premium) | 2s avg, 5s peak | HIPAA, GDPR, SOC 2, zero data retention | 4h (standard), 1h (premium), 15m (enterprise) | 18-month freeze |

What Enterprises Must Demand

Before signing any contract, insist on these five SLA elements:

- Region-specific latency guarantees, not global averages

- Explicit model version retention period (minimum 6 months)

- Clear, auditable data residency and retention policies

- Penalty structure tied directly to downtime minutes (not vague service credits)

- 24/7 support with defined escalation paths for Severity 1 incidents
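The first item on that list only helps if you measure it yourself, from where your application runs. The sketch below shows one way to check a "95% of requests under N seconds" clause per region from client-side timings. The region labels, thresholds, and function names are illustrative assumptions, and it uses the nearest-rank percentile method, which may differ from how a given provider computes its own figures.

```python
from collections import defaultdict


def percentile(values, pct):
    """Nearest-rank percentile; `pct` is a whole number such as 95."""
    ordered = sorted(values)
    # Integer ceiling of pct/100 * n, avoiding floating-point rounding.
    rank = (pct * len(ordered) + 99) // 100
    return ordered[max(rank, 1) - 1]


def check_regional_latency(samples, thresholds_s, pct=95):
    """Compare client-measured latency against per-region SLA thresholds.

    samples:      iterable of (region, latency_seconds) pairs timed in YOUR
                  application, not on the provider's servers
    thresholds_s: dict mapping region -> maximum allowed latency in seconds
    Returns a dict mapping region -> (observed_percentile, within_sla).
    """
    by_region = defaultdict(list)
    for region, latency in samples:
        by_region[region].append(latency)

    report = {}
    for region, latencies in by_region.items():
        observed = percentile(latencies, pct)
        report[region] = (observed, observed <= thresholds_s[region])
    return report
```

Grouping by region before taking the percentile is the point: a global p95 can look healthy while one geography consistently misses its target.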

The market for enterprise LLMs is projected to hit $130 billion by 2030. But only providers who treat SLAs as strategic tools, not legal afterthoughts, will survive. The ones that don’t will lose 30-40% of their market share by 2027.

Do all LLM providers offer SLAs?

No. Open-source models like Llama 3 or Mistral don’t come with SLAs. They’re free to use but carry zero guarantees on uptime, security, or support. Enterprises using them must build their own infrastructure, monitoring, and compliance layers, which often costs more than a commercial SLA. If you need reliability, you need a provider with a formal SLA.

Can I negotiate SLA terms?

Yes, especially for contracts over $100,000/year. Leading providers now have dedicated legal teams for enterprise SLA customization. Common negotiable terms include extended version retention, regional data residency, faster response times, and higher service credit percentages. Don’t accept the default terms-ask for a tailored agreement.

What happens if an LLM provider breaks their SLA?

You get service credits, usually a percentage of your monthly fee. For example, if uptime falls below 99.9%, you might get 10% back. If it drops below 99%, you could get 25-50%. But credits rarely cover lost revenue, compliance fines, or reputational damage. That’s why SLAs are about risk mitigation, not compensation.
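The example tiers in this answer can be turned into a quick calculation. This is a minimal sketch, assuming a 30-day billing month and using 10% below 99.9% and 25% (the low end of the quoted range) below 99%. Real contracts define their own tiers, measurement windows, and exclusions, and the function names here are hypothetical.

```python
MINUTES_PER_MONTH = 30 * 24 * 60  # 43,200 minutes, assuming a 30-day month

# (uptime floor in percent, credit as a fraction of the monthly fee).
# Illustrative values only, ordered from the most severe tier down.
CREDIT_TIERS = [
    (99.0, 0.25),
    (99.9, 0.10),
]


def allowed_downtime_minutes(uptime_pct: float) -> float:
    """Monthly downtime budget implied by an uptime percentage."""
    return MINUTES_PER_MONTH * (100 - uptime_pct) / 100


def measured_uptime_pct(downtime_minutes: float) -> float:
    """Uptime percentage for a month with the given minutes of downtime."""
    return 100 * (1 - downtime_minutes / MINUTES_PER_MONTH)


def service_credit(monthly_fee: float, downtime_minutes: float) -> float:
    """Credit owed under the illustrative tiers above; 0.0 if the SLA was met."""
    uptime = measured_uptime_pct(downtime_minutes)
    for floor, fraction in CREDIT_TIERS:
        if uptime < floor:
            return monthly_fee * fraction
    return 0.0
```

Under these assumptions, an hour of downtime in a month on a $50,000 monthly fee lands in the 10% tier. Setting that credit next to your own estimate of what an hour of lost service costs makes the gap between compensation and actual damage concrete.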

Are SLAs enforceable in court?

Yes, if they’re clearly written and signed. Most enterprise SLAs are legally binding contracts. However, vague language like “reasonable efforts” or “best efforts” weakens enforceability. Always insist on measurable metrics: exact percentages, time windows, and penalty calculations. Avoid boilerplate language.

How long does it take to implement an enterprise LLM with SLA?

Typically 3-6 months. This includes vendor selection, SLA negotiation, integration testing, security audits, compliance checks, and staff training. Rushing this process leads to failures. TrueFoundry’s May 2025 report found that companies completing full evaluations saw 68% fewer production incidents in the first year.

Should I use multiple LLM providers?

Yes, for mission-critical systems. Many enterprises now use a primary provider (like Azure) with a backup (like Anthropic) to avoid single-point failures. This requires SLAs that allow model switching without penalty. Amazon Bedrock’s multi-model routing makes this easier. But it adds complexity, so only do this if your use case justifies it.
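At its simplest, a primary-plus-backup setup is an ordered list of providers tried in turn. The sketch below is a provider-agnostic illustration, not any vendor's routing API: each provider is represented by a plain callable that you would wrap around your own client code, and `complete_with_fallback` and `AllProvidersFailed` are hypothetical names. It assumes your client wrappers raise an exception on timeouts and errors.

```python
from typing import Callable, Sequence, Tuple

# A provider is a (name, call) pair, where call(prompt) returns the model's
# text or raises an exception on timeout, rate limit, or outage.
Provider = Tuple[str, Callable[[str], str]]


class AllProvidersFailed(RuntimeError):
    """Raised when every configured provider has failed for a request."""


def complete_with_fallback(prompt: str, providers: Sequence[Provider]) -> Tuple[str, str]:
    """Try providers in order and return (provider_name, answer) from the first success."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # broad on purpose: any failure triggers failover
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))
```

Returning the provider name alongside the answer matters for compliance logging, since outputs from the backup model may need to be flagged or reviewed differently from the primary's.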

Stephanie Serblowski

March 14, 2026 AT 16:00

Let’s be real: 99.9% uptime is the digital equivalent of saying ‘I’ll try not to drop the baby’ while juggling three flaming torches. 🤡

Enterprise SLAs shouldn’t be suggestions; they should be legally binding oaths written in blood, ink, and AWS bill receipts.

Anthropic’s 18-month model freeze? That’s the only reason I’m not screaming into the void while my compliance team panics every time OpenAI ‘improves’ a model.

And don’t get me started on latency guarantees that only apply if your server is in the same zip code as the user. I’m in Chicago. My LLM is in Oregon. My users are in Berlin. Where’s the SLA for ‘please stop pretending global latency is a math problem’?

Also, ‘service credits’? Bro, my CEO lost $2M in Q3 because a bot misread a diabetic patient’s dosage. You think a 10% refund on a $50K contract fixes that? Nah. That’s like giving someone a lollipop after they break their leg.

Bottom line: if your vendor doesn’t have a named incident response team on speed dial with a direct line to their CTO, you’re not paying for reliability; you’re paying for hope.

And yes, I’m still mad about the January GPT-4 dip. I still have nightmares of 800k unanswered customer tickets. 😭

Renea Maxima

March 15, 2026 AT 23:21

What if SLAs are just capitalism’s way of pretending we can quantify trust?

Uptime? Latency? Compliance? These aren’t metrics; they’re illusions wrapped in legal jargon.

We act like AI is a machine that can be ‘guaranteed,’ but it’s not. It’s a statistical ghost haunting our servers, trained on data we don’t fully understand, deployed by teams who barely know how it works.

And yet we sign contracts like it’s a toaster with a 10-year warranty.

Maybe the real SLA we need is one that says: ‘We admit we don’t know what we’re doing, and we’ll be transparent when we fail.’

But no. We want guarantees. We want control. We want magic with a service credit.

And that’s the tragedy.

Jeremy Chick

March 17, 2026 AT 12:30

Bro. You’re all overthinking this. If your LLM is costing you $5,600 per minute, you’re already doing it wrong.

Stop trying to build a nuclear submarine with an IKEA instruction manual.

Use Azure. Use Anthropic. Pay for the premium tier. Get the 99.99%. Demand the 15-minute response. Lock the model version.

Stop negotiating ‘reasonable efforts’ and start demanding ‘here’s the contract, sign it, or I’m walking.’

And if you’re still using open-source models because ‘it’s cheaper’, you’re not saving money. You’re just outsourcing your job to a team of sleep-deprived engineers who are now on 24/7 panic duty.

Real talk: the market isn’t about tech. It’s about who has the guts to say NO to vague SLAs.

I’ve seen 3 startups die because they ‘waited to negotiate.’ Don’t be one of them.

Sagar Malik

March 18, 2026 AT 21:55

SLAs? Pfft. You think corporations care about uptime? They care about liability shielding. The entire SLA ecosystem is a neoliberal farce designed to transfer risk from vendors to clients while charging premium fees for ‘peace of mind’ that doesn’t exist.

Did you know that most ‘audit trails’ for data residency are just log files stored in the same AWS bucket as the model outputs?

And ‘zero data retention’? That’s marketing spin. They scrub it from their primary logs, but the training pipeline still ingests fragments through backchannel telemetry.

Google’s 22 data centers? Cool. But who’s auditing the third-party subcontractors in Romania who handle the anonymization layer?

And let’s not forget: every ‘enterprise SLA’ has a clause that says ‘provider may suspend service without notice if deemed necessary for system integrity.’

Translation: if you’re not paying $500k/month, you’re not enterprise. You’re a data farm.

They’re not selling reliability. They’re selling FOMO with a contract.

And the real conspiracy? The same people who wrote these SLAs also wrote the GDPR compliance docs. Same lawyers. Same loopholes.

Wake up.

There is no SLA. There is only the algorithm.

And it doesn’t care if you’re HIPAA compliant.

It just wants tokens.

lol

Seraphina Nero

March 19, 2026 AT 22:43