Tag: LLM relevance

Document Re-Ranking to Improve RAG Relevance for Large Language Models

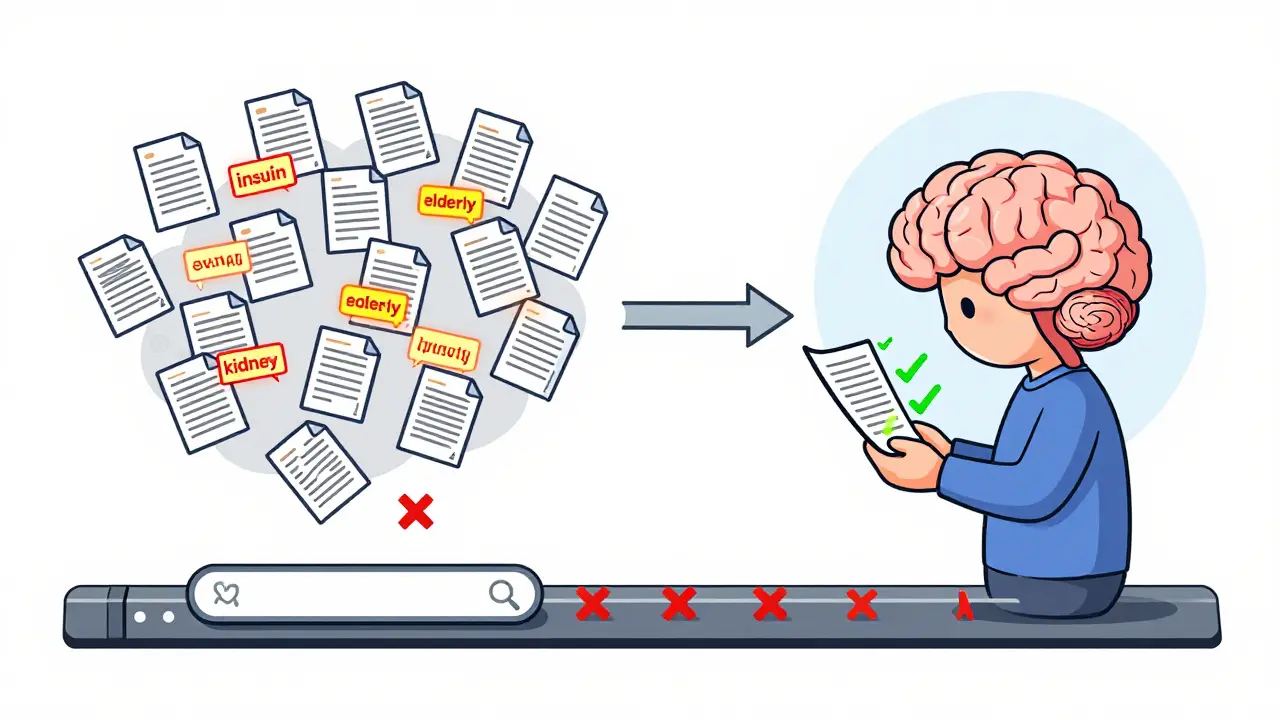

Document re-ranking improves RAG systems by re-scoring retrieved documents with deeper semantic analysis and passing only the most relevant ones to the large language model, which reduces hallucinations and improves response accuracy. It is especially valuable in high-stakes applications such as healthcare and legal AI.
