By 2026, if you're using AI to hire, fire, promote, or monitor employees, you're not just managing tech; you're navigating a minefield of new laws. What used to be a gray area is now a legal battlefield. Jurisdictions like Colorado, California, and New York City have passed laws that treat AI in the workplace like a loaded gun: you can use it, but if you don't handle it right, you'll get sued. And it's not just about bias. It's about transparency, accountability, and the right to know when a machine is deciding your future at work.

AI Isn't Just a Tool: It's a Legal Actor

In 2026, the law doesn't see AI as a neutral assistant. It sees it as an extension of the employer. If a ChatGPT-like tool screens resumes, evaluates performance, or flags "low productivity" in an employee, the employer is legally responsible for what that AI says or does. Utah’s law, effective since May 2024, made this crystal clear: any discriminatory statement made by an AI system in a hiring or evaluation context is treated as if the employer said it themselves. No excuses. No "the AI did it" defense.

This isn't theoretical. In early 2025, a Denver-based logistics company was fined $180,000 after an AI tool used to rank warehouse workers flagged Black and Hispanic employees for "low efficiency" at twice the rate of white workers. The system had been trained on data from a predominantly white workforce in Iowa. The company didn't audit the tool. They didn't test it. They just turned it on. Under Colorado’s new AI law, that’s a violation. And under California’s rules, they’d also be liable for not disclosing the tool’s use to affected employees.

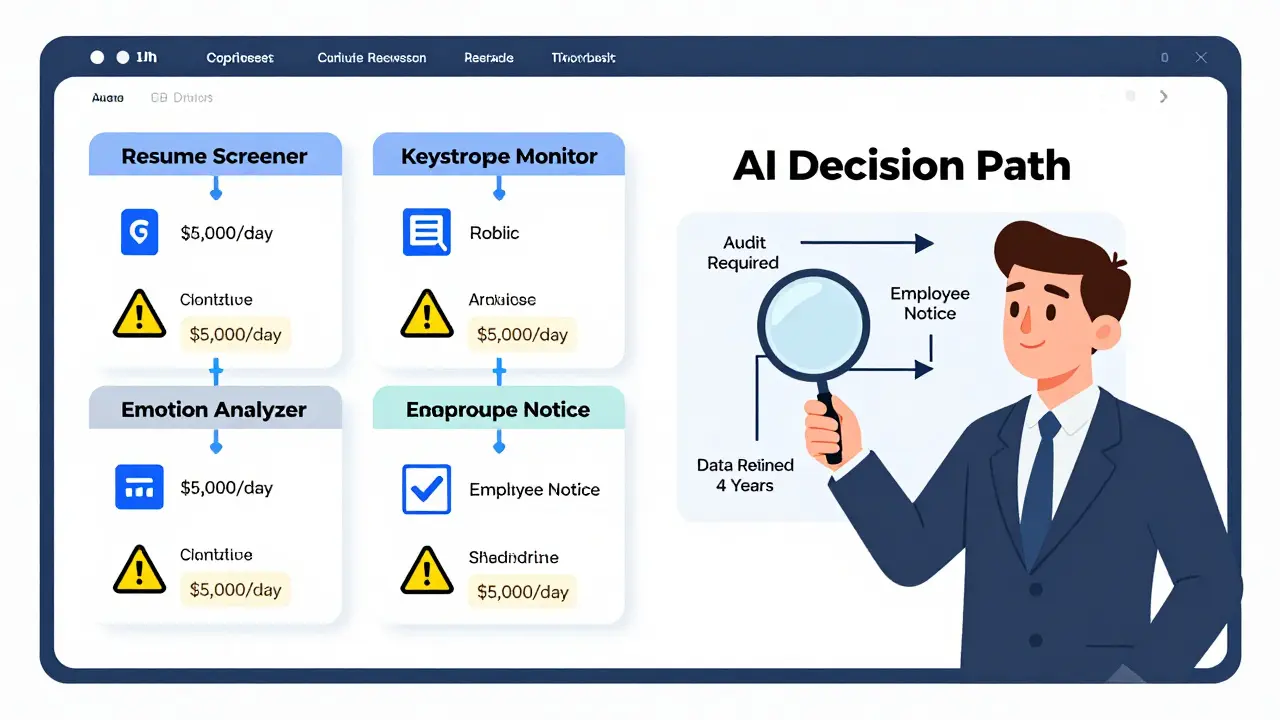

What Employers Must Do in Key States

There’s no single national rule. Instead, employers face a patchwork of laws that vary wildly. Here’s what you actually need to do depending on where you operate:

- Colorado (CAIA, effective June 30, 2026): If you use AI for hiring, promotions, or terminations, you must conduct an annual risk assessment. You must notify employees and candidates when AI is used. You must offer a human review option. And if the system shows bias, you have 90 days to report it to the state attorney general. Data? Keep it for four years: everything from input scores to final decisions.

- California (CPPA ADMT + SB 942 + AB 2013, effective January 1, 2026): You need three layers of compliance. First, under the CPPA, you must prove your AI doesn’t discriminate in employment decisions. Second, under SB 942, if your AI generates video, audio, or images used in hiring (like an AI-generated interview summary), you must clearly label it as AI-generated. Third, under AB 2013, if you use a generative AI tool to create content for job postings or performance reviews, you must disclose the system’s version, developer, and timestamp. Violations? $5,000 per day.

- New York City (Local Law 144-21, effective since 2023): You must hire an independent auditor to test your AI hiring tools every year. You must post the audit summary on your careers page. You must tell applicants in advance that AI will be used, and let them opt out. Fines? $500 to $1,000 per violation.

- Texas (TRAIGA, effective January 1, 2026): You only have to avoid intentional discrimination. No audits. No disclosures. No data retention. Just don’t program your AI to target protected groups on purpose. It’s the lightest regime in the country.

Most companies with operations in multiple states don’t try to comply with each state differently. Too risky. Too messy. Instead, they adopt Colorado’s rules everywhere. Why? Because if you’re compliant with the strictest standard, you’re safe everywhere else. It’s cheaper than lawsuits.
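To see why the strictest standard wins, here is a rough Python sketch that merges several regimes by taking the most demanding value of each obligation. The `Regime` fields and the true/false readings are simplified assumptions drawn from the list above (California is left out for brevity), not legal determinations.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Regime:
    """Obligations for one jurisdiction (hypothetical, simplified fields)."""
    name: str
    notice: bool = False
    human_review: bool = False
    bias_assessment: bool = False
    retention_years: int = 0


# Simplified readings of the list above; treat these as assumptions.
REGIMES = {
    "CO": Regime("Colorado", notice=True, human_review=True,
                 bias_assessment=True, retention_years=4),
    "NYC": Regime("New York City", notice=True, bias_assessment=True),
    "TX": Regime("Texas"),
}


def strictest(codes):
    """Combine regimes by keeping the most demanding value of every field."""
    selected = [REGIMES[code] for code in codes]
    return Regime(
        name=" + ".join(r.name for r in selected),
        notice=any(r.notice for r in selected),
        human_review=any(r.human_review for r in selected),
        bias_assessment=any(r.bias_assessment for r in selected),
        retention_years=max((r.retention_years for r in selected), default=0),
    )


policy = strictest(["TX", "NYC", "CO"])
print(policy)
```

With Colorado in the mix, the merged policy ends up matching Colorado on every field in this sketch, which is the intuition behind adopting it everywhere.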

Monitoring Tools Are Now High-Risk Systems

Think your AI-powered time tracker or keystroke monitor is just a productivity tool? Think again. In Colorado and California, any AI system that evaluates performance, flags "low engagement," or ranks employees by output is classified as a "high-risk system." That means it’s subject to bias audits, transparency rules, and mandatory human review.

One retail chain in San Francisco used an AI tool that analyzed webcam footage to rate employee "focus levels." The system flagged workers in dimly lit break rooms (mostly night-shift employees) as "inattentive." Those workers were denied bonuses. The company didn’t realize the tool was using lighting as a proxy for work ethic. Under California law, that’s algorithmic discrimination. Under Colorado law, it’s a reportable incident. The company had to pay $320,000 in back pay and overhaul its entire monitoring system.

Even tools that seem harmless, like AI that predicts who’s likely to quit based on calendar patterns or email tone, are now under scrutiny. If they correlate with protected traits (age, gender, disability), they’re legally dangerous. You can’t just trust the vendor’s claim that it’s "fair." You have to test it yourself.
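What might "testing it yourself" look like? One simple starting point is comparing how often the tool flags each group. The sketch below is a minimal illustration in Python: the function names, the group labels, and the sample counts are all hypothetical, and a real audit would use your own records and whatever grouping and thresholds your counsel or auditor specifies.

```python
from collections import Counter


def flag_rates(records):
    """Return {group: share of that group's workers the tool flagged}."""
    totals, flagged = Counter(), Counter()
    for group, was_flagged in records:
        totals[group] += 1
        if was_flagged:
            flagged[group] += 1
    return {group: flagged[group] / totals[group] for group in totals}


def disparity_ratio(rates):
    """Highest flag rate divided by the lowest; 1.0 means no disparity."""
    lowest, highest = min(rates.values()), max(rates.values())
    if highest == 0:
        return 1.0  # nobody was flagged in any group
    return float("inf") if lowest == 0 else highest / lowest


# Hypothetical sample: (group label, did the tool flag this worker?)
records = (
    [("day_shift", True)] * 5 + [("day_shift", False)] * 95
    + [("night_shift", True)] * 20 + [("night_shift", False)] * 80
)

rates = flag_rates(records)
ratio = disparity_ratio(rates)
print(rates, ratio)
```

A ratio well above 1.0 doesn't prove discrimination on its own, but it's the kind of signal that should trigger a closer look at what the tool is actually measuring.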

Worker Rights Are No Longer Optional

Employees aren’t just passive subjects anymore. They have rights, and those rights are enforceable.

- The Right to Know: If AI is involved in any decision that affects your job (hiring, promotion, pay, termination), you must be told. Not in fine print. Not in an employee handbook. In clear, upfront notice.

- The Right to Appeal: If an AI system denies you a promotion or flags you for termination, you can request a human review. In Colorado, this isn’t optional. In California, it’s required if the system has a significant impact.

- The Right to Opt Out: In New York City, job applicants can refuse to be evaluated by AI tools and ask for a traditional interview instead. Employers can’t penalize them for it.

- The Right to Data: You can request the data used to evaluate you. That includes scores, input parameters, and audit results. Employers must provide it within 30 days.

These aren’t suggestions. They’re legal obligations. And they’re being enforced. In January 2026, a California worker sued her employer after an AI tool reduced her bonus because she took maternity leave. The tool had flagged "time off" as a predictor of "lower productivity." The court ruled the system violated the state’s anti-discrimination laws. The employer had to pay $450,000 in damages and delete the tool.

What Happens If You Ignore the Laws?

The penalties are stacking up. In California, fines start at $5,000 per day per violation. In Colorado, the state attorney general can shut down your AI tools. In New York City, each employee affected by non-compliant AI counts as a separate violation. At $500 to $1,000 per violation, a single HR system used on 500 employees could mean $250,000 to $500,000 in fines.
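The New York City math is easy to check. This small Python sketch uses the per-violation range quoted earlier in this article and assumes every affected person counts as exactly one violation; the function name is just for illustration.

```python
def nyc_exposure(affected_people, fine_low=500, fine_high=1_000):
    """Return the (low, high) fine range if each person is one violation."""
    return affected_people * fine_low, affected_people * fine_high


low, high = nyc_exposure(500)
print(f"${low:,} to ${high:,}")  # $250,000 to $500,000
```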

But money isn’t the only cost. Reputational damage is real. In 2025, a Fortune 500 company lost 12% of its job applicants after news broke that its AI hiring tool had been flagged for racial bias. The company didn’t get sued. But it did get branded as "unfair." And in 2026, talent won’t work for companies like that.

What Should You Do Now?

Here’s what actually works in 2026:

- Map your AI tools: List every AI system used in hiring, promotion, pay, or monitoring. Don’t skip the "small" ones. Even a chatbot that answers FAQs for applicants can trigger disclosure rules.

- Identify your jurisdiction: Where do you operate? Where do your employees live? Apply the strictest rules across your entire organization.

- Test for bias: Run your tools against demographic data. If your AI consistently rates women lower on leadership potential, or older workers lower on tech skills, it’s not working. Fix it or ditch it.

- Disclose everything: Tell candidates and employees when AI is involved. Use plain language. No jargon.

- Offer human review: If someone asks for a human to look at their evaluation, say yes. Don’t make them jump through hoops.

- Keep records: Save all data, test results, and audit reports for at least four years. You’ll need them if you get audited.

There’s no shortcut. You can’t outsource compliance to your AI vendor. You can’t assume "it’s just a beta tool." The law doesn’t care. If it’s used in employment, it’s regulated.
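If you want a starting point for the mapping and record-keeping steps, a small inventory script can go a long way. The following Python sketch is only an illustration: the `AITool` fields, the tool names, and the annual test cadence are assumptions, while the four-year retention window and the 30-day data-request window come from the figures cited in this article.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

RETENTION_YEARS = 4       # record-keeping window cited in this article
DATA_REQUEST_DAYS = 30    # response window cited in this article


@dataclass
class AITool:
    """One entry in a hypothetical AI tool inventory."""
    name: str
    used_for: str
    last_bias_test: Optional[date] = None  # None means never tested


def retain_until(decision_date):
    """Date until which records for a decision should be kept."""
    target_year = decision_date.year + RETENTION_YEARS
    try:
        return decision_date.replace(year=target_year)
    except ValueError:  # Feb 29 landing in a non-leap year
        return decision_date.replace(year=target_year, day=28)


def data_request_due(received):
    """Deadline for answering an employee's data request."""
    return received + timedelta(days=DATA_REQUEST_DAYS)


def overdue_for_test(tools, today, max_age_days=365):
    """Names of tools never tested, or tested more than max_age_days ago."""
    return [
        t.name for t in tools
        if t.last_bias_test is None
        or (today - t.last_bias_test).days > max_age_days
    ]


inventory = [
    AITool("resume-screener", "hiring", date(2025, 1, 10)),
    AITool("focus-monitor", "monitoring"),
    AITool("faq-chatbot", "hiring", date(2026, 2, 1)),
]
print(overdue_for_test(inventory, today=date(2026, 3, 1)))
print(retain_until(date(2026, 3, 1)), data_request_due(date(2026, 1, 15)))
```

Keeping the deadlines in code rather than in someone's head makes it harder for a "small" tool to slip through untested.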

What’s Next?

By late 2026, most of the EU’s AI Act obligations will apply to any U.S. company with operations in Europe. That includes the ban on emotion recognition at work, which has applied since February 2025, and stricter rules on predictive analytics. Federal legislation is also under review. The AI Accountability Act, introduced in Congress in early 2026, would create a national baseline for AI employment transparency.

For now, the rules are messy. But they’re real. And they’re here to stay. Employers who treat AI like a magic box that just works are setting themselves up for disaster. The smart ones are building legal guardrails into their tech before the law catches up.

Can I use AI to monitor employee productivity without breaking the law?

Yes, but only if you follow strict rules. In states like Colorado and California, any AI that evaluates performance is considered a high-risk system. You must test it for bias, notify employees, offer human review, and keep records for four years. In New York City, you must audit it annually and let workers opt out. In Texas, you just can’t intentionally target protected groups. Ignoring these rules risks fines, lawsuits, and reputational damage.

Do I need to tell job applicants if I’m using AI to screen resumes?

Absolutely. Under Colorado, California, New York City, and Utah laws, you must clearly notify applicants when AI is used in hiring. This includes resume screeners, video interview analyzers, and chatbots that answer pre-screening questions. The notice must be clear, separate from the job posting, and given before the applicant engages with the system. Failure to do so is a violation.

What if my AI tool is made by a third-party vendor?

You’re still responsible. Under Colorado’s law, deployers (employers) are legally liable for discrimination caused by third-party AI tools. Even if the vendor says their system is "bias-free," you must independently audit it. California and New York City also hold employers accountable. You can’t shift blame. If the AI discriminates, you pay the price.

Can I use AI to generate job descriptions or performance reviews?

You can, but you must disclose it. Under California’s AI Transparency Act (SB 942), any AI-generated content used in hiring or evaluation must be clearly labeled. That includes job descriptions, performance summaries, or feedback emails generated by ChatGPT. If you don’t disclose it, you risk $5,000 per day in fines. Also, if the AI includes discriminatory language, you’re legally responsible for it.

Is there a federal law on AI in the workplace yet?

Not yet. As of 2026, there’s no national law. But the patchwork of state laws is so complex and strict that most employers treat them as de facto federal standards. Federal legislation is being drafted, but until then, you must comply with every state where you operate. The safest approach is to follow Colorado’s rules everywhere.

Eka Prabha

March 1, 2026 AT 07:01

Let me get this straight-employers now have to audit AI tools like they’re nuclear reactors? And keep data for FOUR YEARS? This isn’t regulation, it’s bureaucratic overkill dressed up as worker protection. Who’s going to pay for all this? The workers. Through lower wages, fewer hires, or just plain automation fatigue. The law didn’t solve bias-it just made compliance a new form of exploitation. And don’t even get me started on the ‘human review’ loophole. HR managers are already drowning in paperwork. Now they’re supposed to be AI interpreters too? This is performance theater with a $5,000-per-day price tag.

Bharat Patel

March 1, 2026 AT 13:34

It’s funny how we treat AI like it’s some autonomous agent, when really it’s just a mirror of human decisions. The real issue isn’t the algorithm-it’s the data we fed it, the assumptions we coded into it, and the laziness of managers who thought ‘set it and forget it’ was a strategy. Maybe if we stopped outsourcing ethics to code, we’d have fewer lawsuits and more thoughtful workplaces. The law is late, but it’s pointing in the right direction: accountability starts with people, not machines.

Bhagyashri Zokarkar

March 3, 2026 AT 12:18

i cant even believe this is real like i just got promoted and my boss said the ai said i was ‘high potential’ but i swear i just smiled a lot during the zoom call and it thought i was ‘engaged’?? and now im supposed to trust that a machine that thinks my coffee breaks = low productivity knows more than me?? also why do we have to know every single detail like its a court case?? i just want to work without being surveilled like a prisoner in a smart factory lmao

Rakesh Dorwal

March 5, 2026 AT 12:04

California and Colorado are acting like they invented work itself. Meanwhile, real economies are growing in places like India and Vietnam where people actually get things done without lawyers hovering over every keystroke. This isn’t progress-it’s American overregulation masquerading as justice. You want fairness? Stop suing companies for using tools that make them more efficient. Let businesses innovate. Let workers earn. Don’t bury innovation under a pile of compliance paperwork written by consultants who’ve never held a real job.

Vishal Gaur

March 6, 2026 AT 19:35

so i read this whole thing and honestly i think the real problem is no one actually tests these ai tools before using them. like, you just buy some saaS product and plug it into your hr system and hope for the best? that’s not innovation, that’s negligence. i work in tech and even our dumb chatbot had to go through 3 rounds of testing before we rolled it out. why is hr so far behind? also, 4 years of data retention? that’s a hacker’s dream. who’s securing all that sensitive employee info? not the company, probably. they’re too busy filling out forms.

Nikhil Gavhane

March 7, 2026 AT 23:47

This is actually one of the most balanced takes on AI in the workplace I’ve seen. The core idea-that workers deserve transparency and recourse-isn’t radical. It’s basic human dignity. The fact that we need laws to enforce this says more about corporate culture than about technology. If we can build AI that predicts churn or optimizes shifts, we can build systems that are fair, explainable, and humane. It’s not about stopping innovation-it’s about making sure innovation doesn’t leave people behind.

Rajat Patil

March 8, 2026 AT 19:26

It is important to remember that technology reflects the values of those who design and deploy it. When an AI system discriminates, it is not because the code is evil. It is because the data was biased, or the oversight was absent. Employers must act not only as users of technology, but as stewards of fairness. Compliance is not a burden-it is a moral responsibility. The law, however complex, is a step toward a more just system of work.

deepak srinivasa

March 9, 2026 AT 04:21

Interesting how the laws focus on hiring and monitoring, but don’t touch AI-generated content like job descriptions or performance feedback. Isn’t that where bias often hides? Like, if an AI rewrites a job posting to favor ‘aggressive leaders’ and that subtly discourages women or neurodivergent candidates, who audits that? And what about contractors or gig workers-do they get any of these protections? The rules seem to ignore the whole ecosystem, not just the corporate HR department.

pk Pk

March 10, 2026 AT 22:28

Look, I’ve been in HR for 18 years. We used to fire people for being ‘not a culture fit’ with zero documentation. Now we have rules that force us to be fair, transparent, and accountable. That’s not a threat-it’s progress. Yes, it’s a pain. Yes, it costs money. But imagine being the employee who got passed over because an AI thought you were ‘low energy’ after your mom passed away. Now imagine that same employee gets a human review, gets an apology, and gets a chance. That’s not bureaucracy. That’s humanity. We’re not just complying with laws-we’re building workplaces where people actually belong.