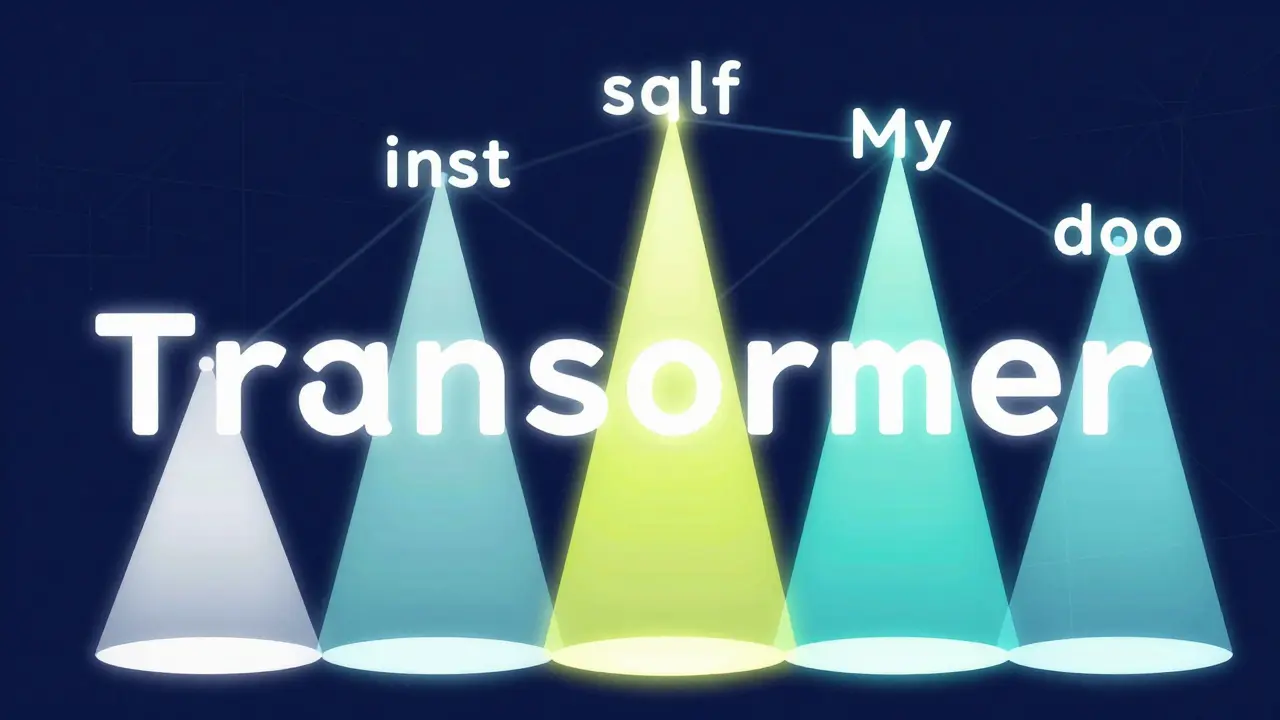

Tag: self-attention mechanism

How Large Language Models Work: Core Mechanisms and Capabilities

Explore the inner workings of Large Language Models, from Transformer architecture and self-attention to tokenization and the battle against hallucinations.
