Tag: AI Architecture

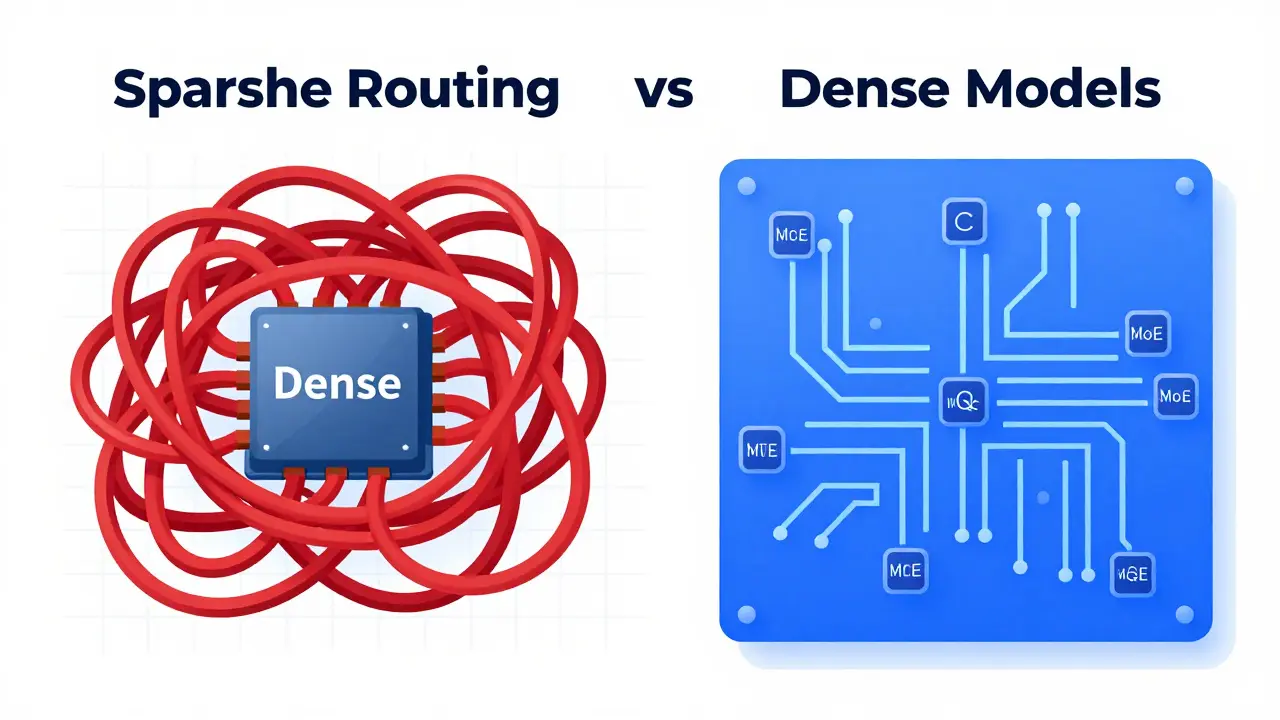

Sparse and Dynamic Routing in LLMs: The MoE Revolution Explained

Explore how sparse and dynamic routing via Mixture of Experts (MoE) transforms LLMs. Learn about efficiency gains, RouteSAE, and implementation challenges in 2026.