Tag: compressed draft models

Speculative Decoding with Compressed Draft Models for LLMs: Faster Inference Without Losing Quality

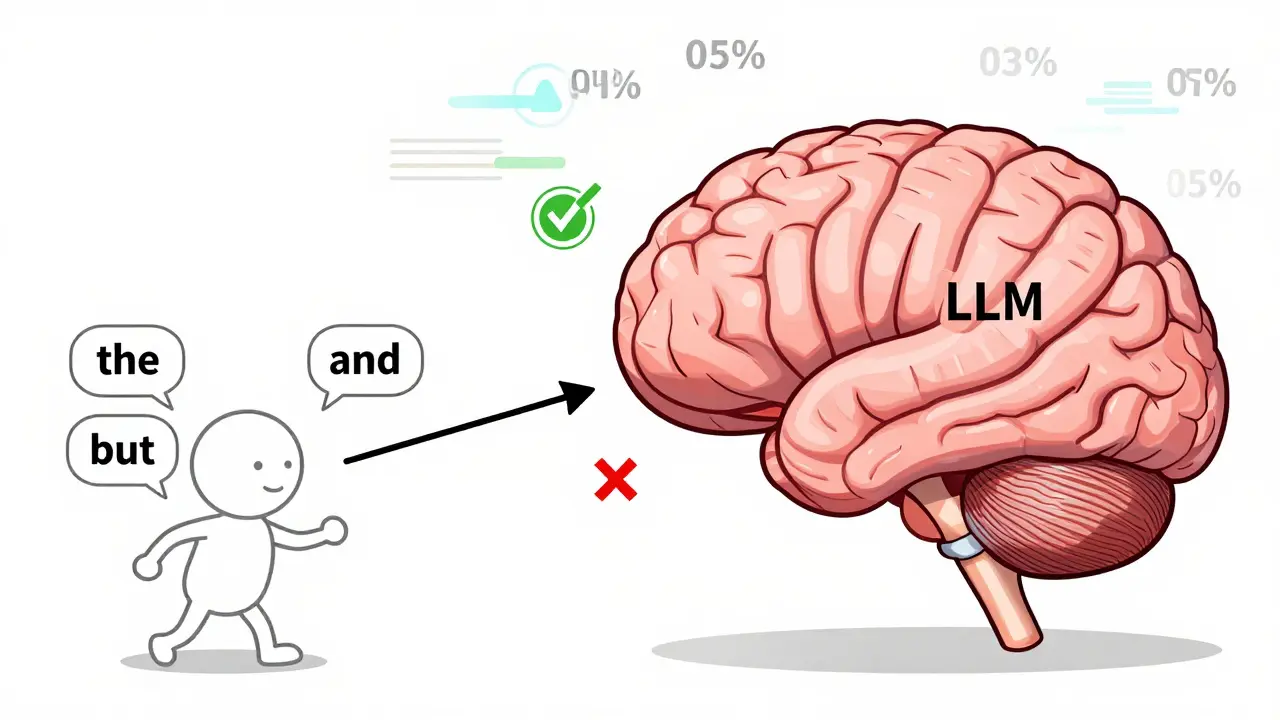

Speculative decoding with compressed draft models cuts LLM inference time by up to 3x: a small model predicts several tokens ahead, and the large model verifies them in parallel. There is no quality loss, just faster responses.
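To make the draft-then-verify loop concrete, here is a minimal sketch of the greedy variant of speculative decoding. Everything in it is an assumption made for illustration: `target_model` and `draft_model` are hypothetical toy functions standing in for the large model and the compressed draft model, tokens are plain integers, and the verification loop stands in for what a real system would do in one batched forward pass. It does not model compression itself or the sampling-based acceptance rule used with non-greedy decoding.

```python
from typing import Callable, List, Tuple

# Hypothetical toy interface: a "model" maps a token context to its greedy next token.
Model = Callable[[List[int]], int]


def target_model(ctx: List[int]) -> int:
    # Toy stand-in for the large model: a deterministic function of the context.
    return (sum(ctx) * 7 + len(ctx)) % 10


def draft_model(ctx: List[int]) -> int:
    # Toy stand-in for the small draft model: it agrees with the target
    # except when the context length is a multiple of 4.
    guess = target_model(ctx)
    return (guess + 1) % 10 if len(ctx) % 4 == 0 else guess


def greedy_decode(model: Model, prompt: List[int], n_new: int) -> List[int]:
    # Baseline: one model call per generated token.
    out = list(prompt)
    for _ in range(n_new):
        out.append(model(out))
    return out


def speculative_decode(
    target: Model, draft: Model, prompt: List[int], n_new: int, k: int = 4
) -> Tuple[List[int], int]:
    out = list(prompt)
    goal = len(prompt) + n_new
    target_passes = 0  # number of verification rounds
    while len(out) < goal:
        # 1. The draft model proposes k tokens autoregressively.
        proposal: List[int] = []
        for _ in range(k):
            proposal.append(draft(out + proposal))

        # 2. The target checks every proposed position. A real system would
        #    score all k positions in a single parallel forward pass.
        target_passes += 1
        accepted: List[int] = []
        for tok in proposal:
            expected = target(out + accepted)
            if tok != expected:
                # First mismatch: keep the target's token, drop the rest.
                accepted.append(expected)
                break
            accepted.append(tok)
        else:
            # All k drafts accepted: the target contributes one extra token.
            accepted.append(target(out + accepted))
        out.extend(accepted)
    return out[:goal], target_passes


if __name__ == "__main__":
    prompt = [1, 2, 3]
    baseline = greedy_decode(target_model, prompt, 12)
    spec, passes = speculative_decode(target_model, draft_model, prompt, 12)
    print(spec == baseline, passes)
```

In this sketch every emitted token is one the target model itself would have chosen, which is why the output matches plain greedy decoding. Any speedup comes from needing fewer verification rounds than generated tokens, so it depends on how often the draft agrees with the target.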
