Tag: fine-tuning LLMs

Multi-Turn Conversations with Large Language Models: Managing Conversation State

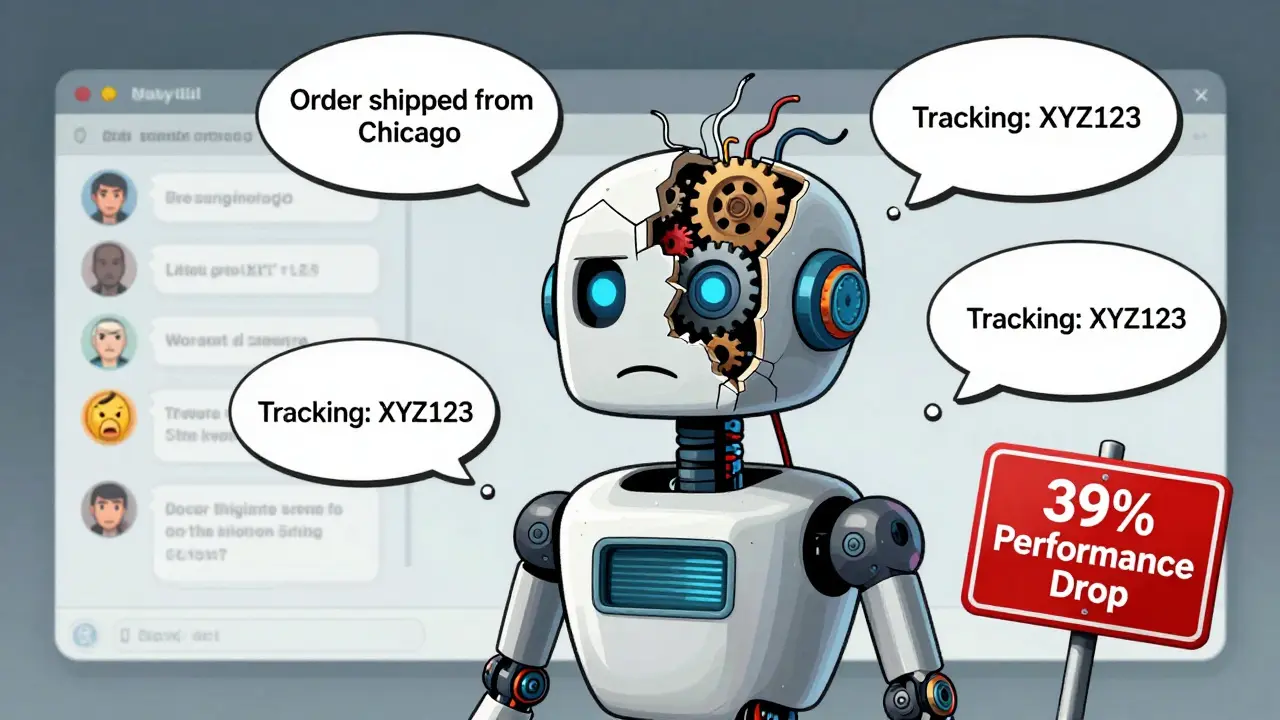

LLMs lose track of context in multi-turn conversations, causing a 39% performance drop. Learn how loss masking, context summarization, and frameworks like Review-Instruct address this, and why state management is now critical for real-world AI.
