Tag: LLM fine-tuning

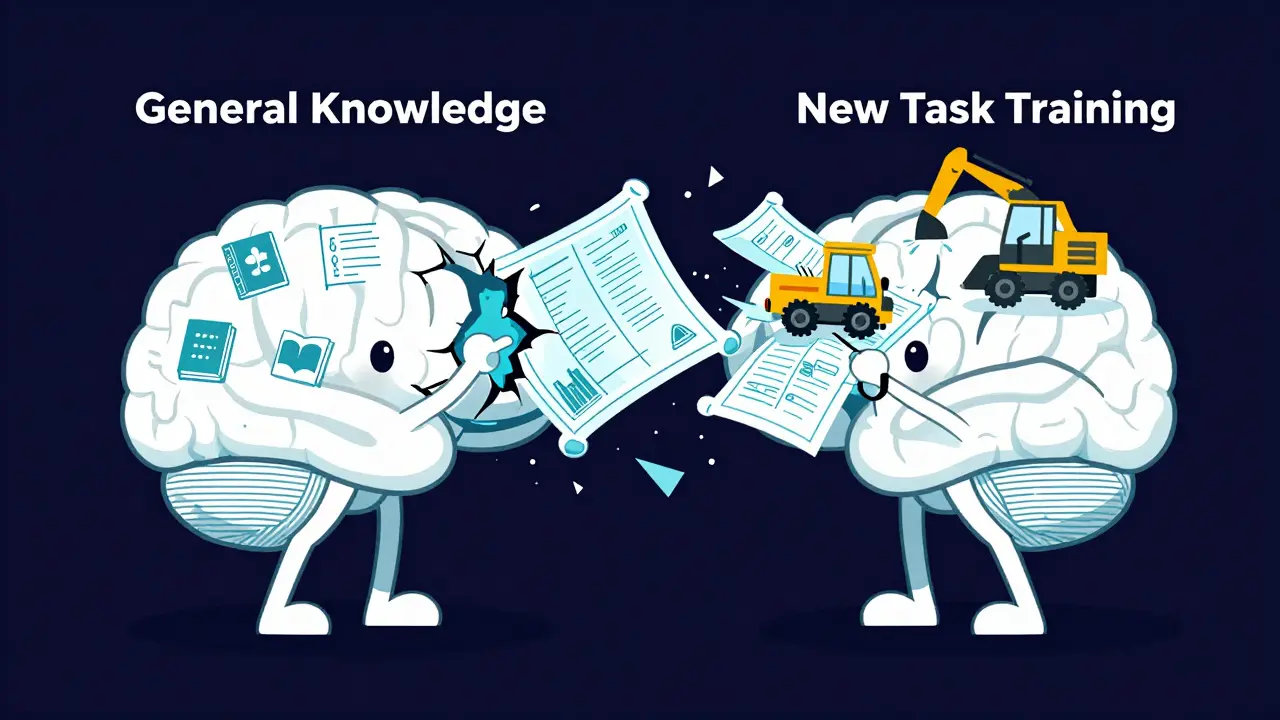

Preventing Catastrophic Forgetting During LLM Fine-Tuning: Techniques That Work

Discover why LLMs forget general knowledge after fine-tuning and how techniques like FIP, STM, and EWC can stop catastrophic forgetting in 2026.

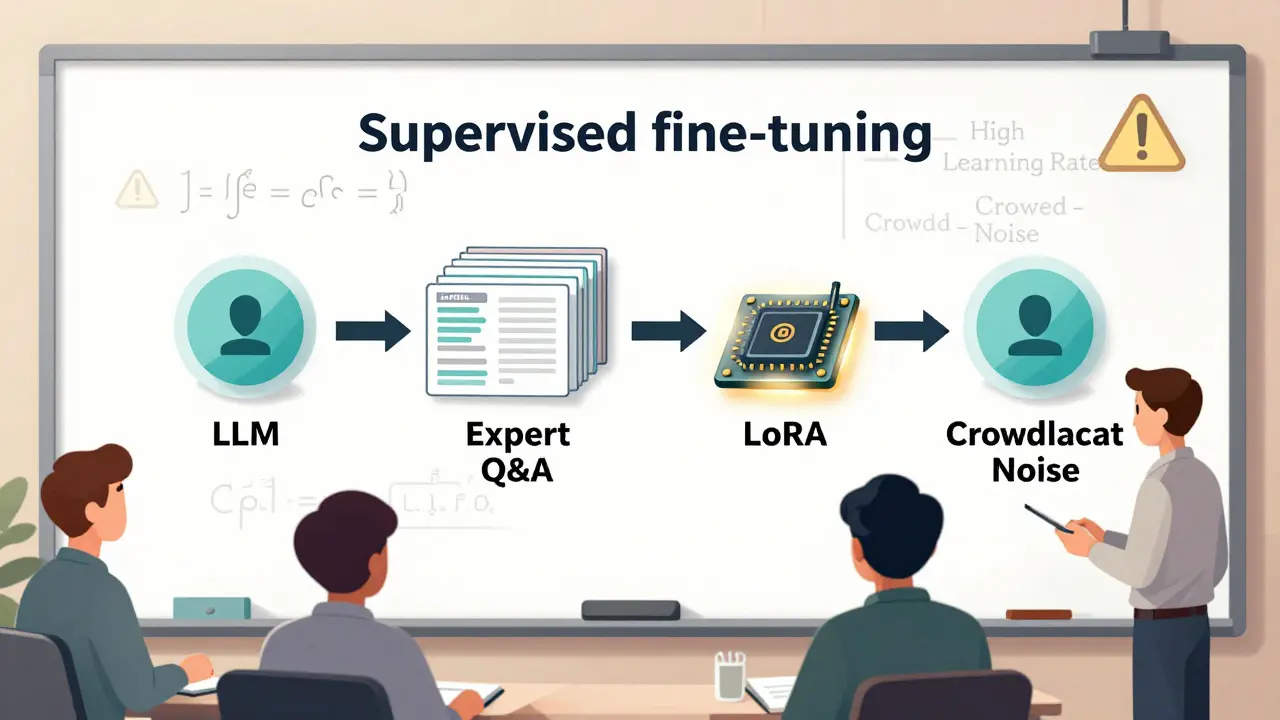

Read more

Supervised Fine-Tuning for Large Language Models: A Practical Guide for Teams

Supervised fine-tuning turns general LLMs into reliable, domain-specific assistants. Learn the practical steps, common pitfalls, and real-world results from teams that got it right - and those that didn’t.

Read more