Tag: semantic caching

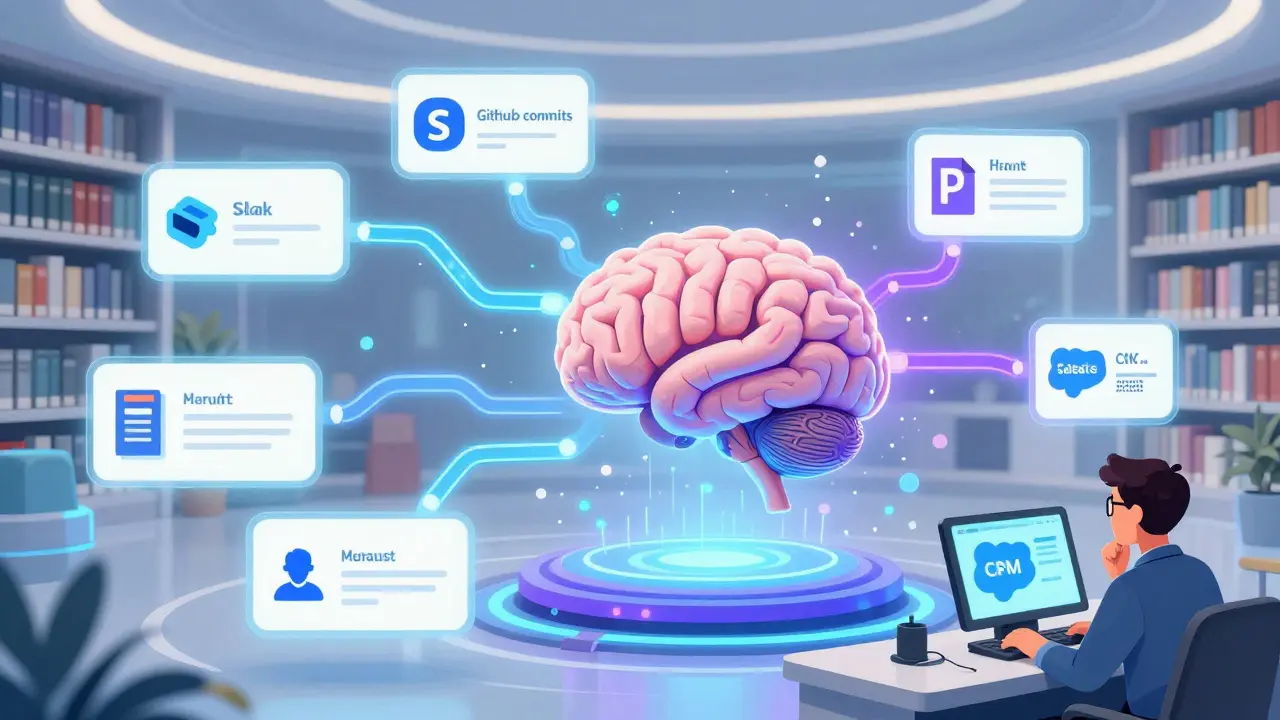

Enterprise RAG Architecture for Generative AI: Connectors, Indices, and Caching

Enterprise RAG architecture combines data connectors, hybrid indices, and semantic caching to deliver fast, accurate, and scalable generative AI for corporate use. Learn how to connect live data, build efficient search indices, and cut latency by 80% with semantic caching.
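
To make the caching idea concrete, here is a minimal sketch of a semantic cache sitting in front of a RAG pipeline. It is an illustration only, not the article's implementation: `embed` is a toy bag-of-words stand-in for a real embedding model, and `SemanticCache`, `answer_with_cache`, `run_rag`, and the 0.8 similarity threshold are all hypothetical names and values chosen for this example.

```python
import math
from collections import Counter


def embed(text):
    # Assumption: toy stand-in for a real embedding model (bag-of-words counts).
    return Counter(text.lower().split())


def cosine(a, b):
    # Cosine similarity between two sparse term-count vectors.
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


class SemanticCache:
    """Hypothetical cache that matches queries by similarity, not exact text."""

    def __init__(self, threshold=0.8):
        self.threshold = threshold  # illustrative value, tune per workload
        self.entries = []  # list of (embedding, answer) pairs

    def get(self, query):
        # Linear scan for the most similar cached query.
        q = embed(query)
        best_score, best_answer = 0.0, None
        for emb, answer in self.entries:
            score = cosine(q, emb)
            if score > best_score:
                best_score, best_answer = score, answer
        return best_answer if best_score >= self.threshold else None

    def put(self, query, answer):
        self.entries.append((embed(query), answer))


def answer_with_cache(cache, query, run_rag):
    # Serve a sufficiently similar cached answer; otherwise run the
    # (slow) retrieval + generation pipeline and store the result.
    cached = cache.get(query)
    if cached is not None:
        return cached
    result = run_rag(query)
    cache.put(query, result)
    return result
```

A production system would likely swap the linear scan for a vector index and add expiry so cached answers do not outlive the live data they were generated from.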
