Archive: 2026/03 - Page 2

Evaluation Protocols for Compressed Large Language Models: What Works, What Doesn’t

Traditional metrics like perplexity fail to catch hidden failures in compressed LLMs. Learn why modern evaluation protocols using LLM-KICK, EleutherAI LM Harness, and LLMCBench are now essential for reliable deployment.

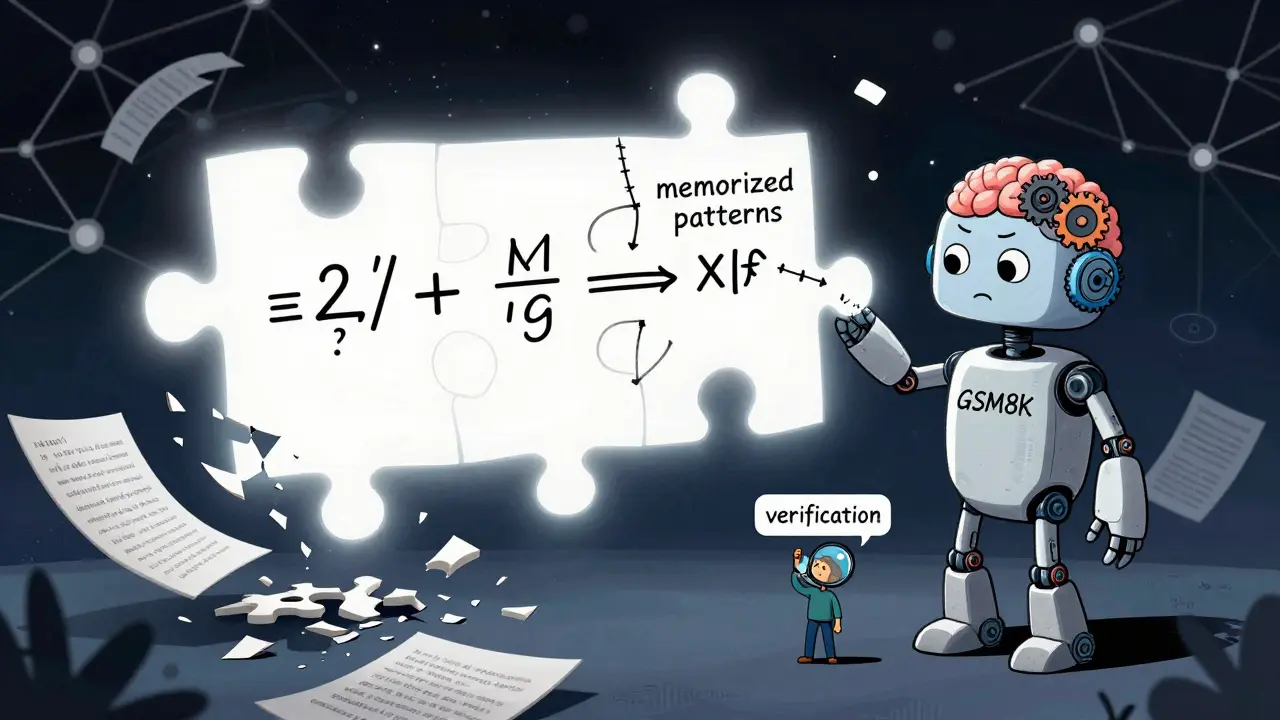

Read moreMathematical Reasoning Benchmarks for Next-Gen Large Language Models

Mathematical reasoning benchmarks reveal that even the most advanced LLMs struggle with true mathematical understanding. Even when models solve Olympiad problems, they fail under perturbation tests, exposing a reliance on memorization over reasoning.

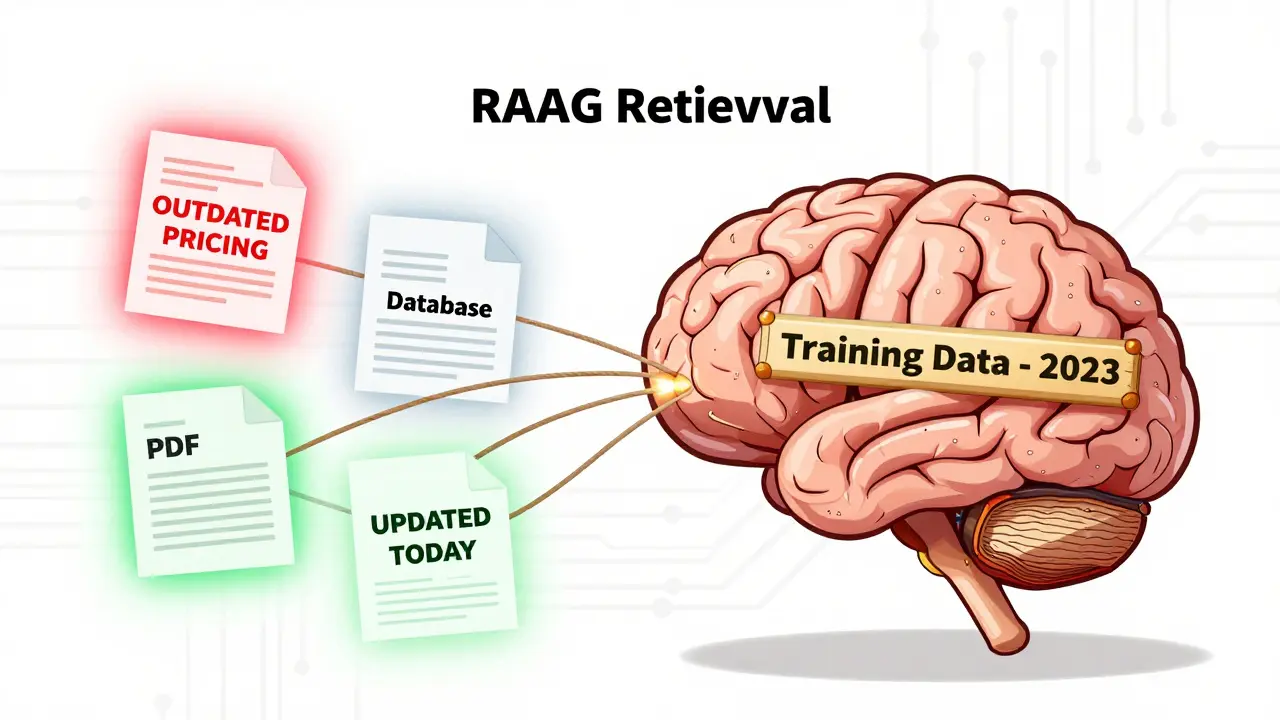

Read moreDocument Freshness and Sync in RAG Systems: Keeping LLMs Up to Date

Keeping RAG systems accurate requires more than just an LLM; it demands real-time document sync. Learn how to prevent stale data from undermining your AI apps with practical strategies for freshness and synchronization.

Data Privacy in Prompts: How to Redact Secrets and Regulated Information Before Using AI

Learn how to use AI safely by redacting personal and regulated data from prompts before sending them to large language models. Avoid compliance risks with practical steps and real tools.

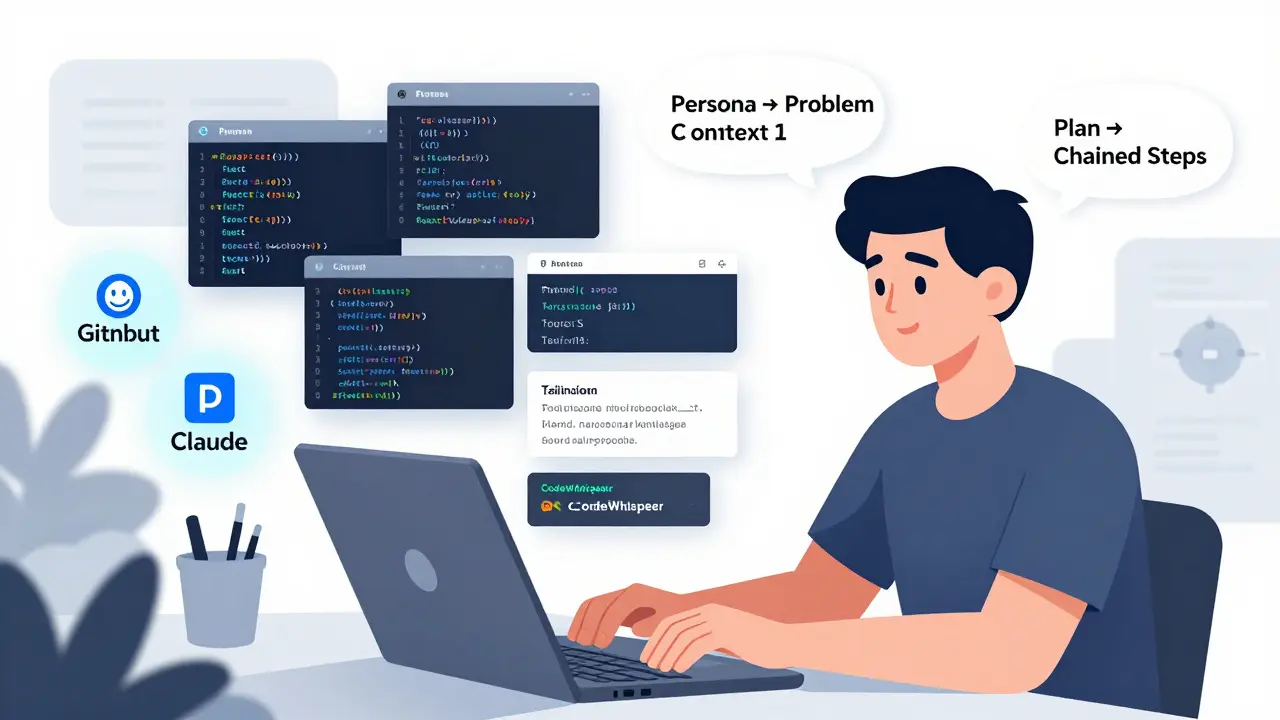

Read morePrompting Strategies and Best Practices for Effective Vibe Coding

Vibe coding uses AI prompts to turn ideas into code fast, but only if you know how to prompt well. Learn the six-step method, common pitfalls, and how top teams turn prototypes into production-ready apps.
