Tag: AI compliance

Enterprise Data Governance for Large Language Model Deployments

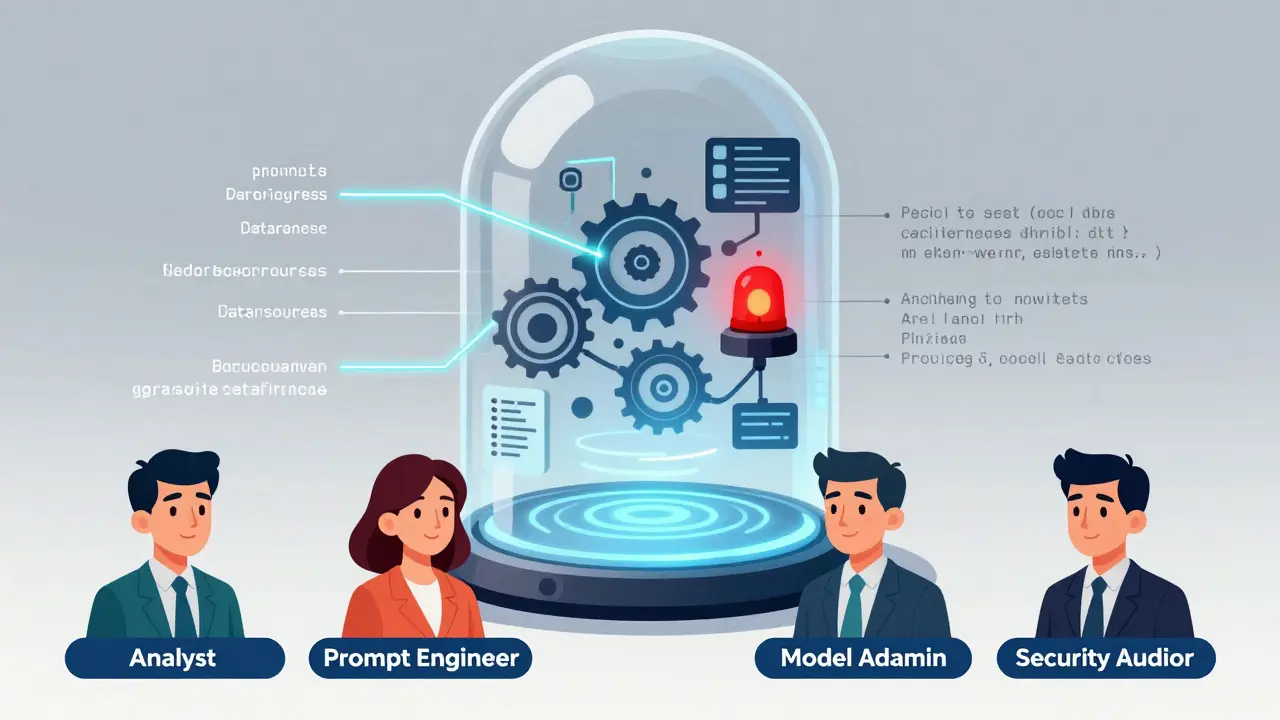

Enterprise data governance for LLMs ensures responsible AI use by controlling training data, enforcing compliance, and monitoring outputs. Without it, companies risk legal penalties, biased outcomes, and lost trust.

Access Controls and Audit Trails for Sensitive LLM Interactions

Access controls and audit trails are critical for securing sensitive LLM interactions. Without them, organizations risk data leaks, regulatory fines, and loss of trust. Learn how to implement them effectively in 2026.
