Tag: API LLMs

API LLMs vs On-Prem Deployment: Latency, Control, and Cost Tradeoffs

Explore the critical tradeoffs between API LLMs and on-prem deployment. We analyze latency, data control, hidden costs, and scalability to help you choose the right AI infrastructure strategy for 2026.

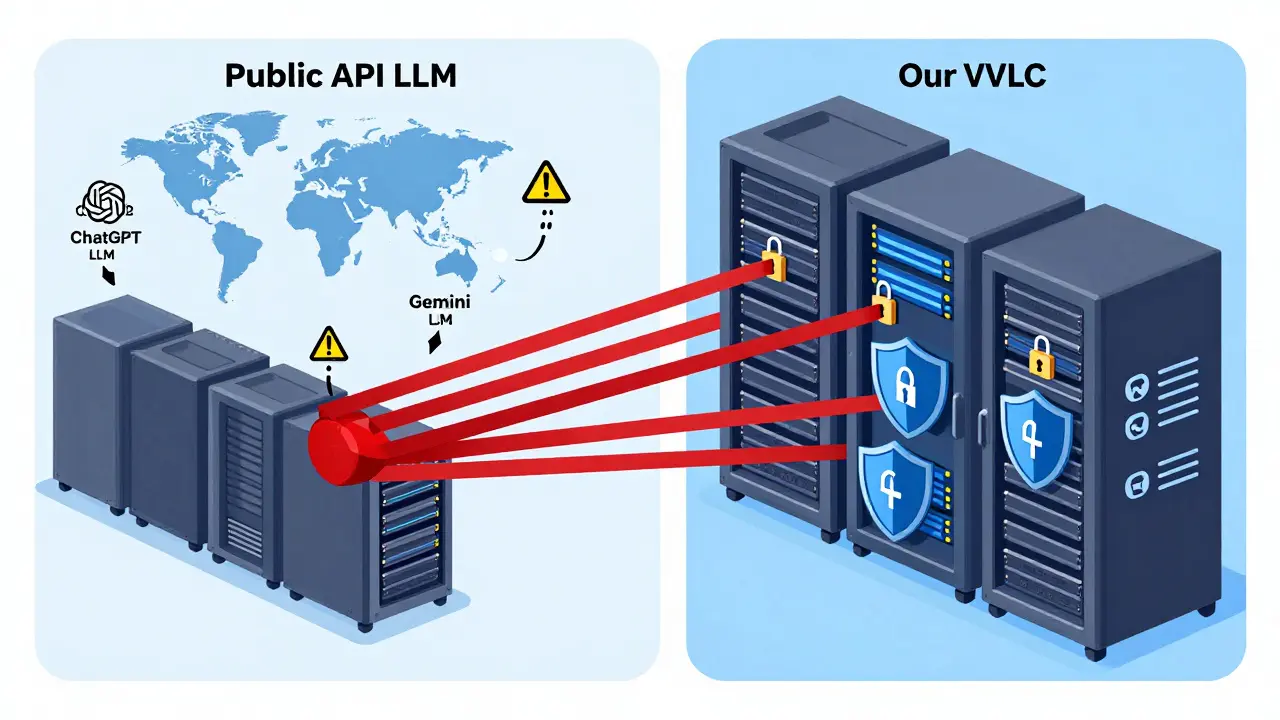

Read more

Security Posture Differences: API LLMs vs Private Large Language Models

Public API LLMs like ChatGPT send your data to third parties, risking compliance violations and IP theft. Private LLMs keep data inside your cloud, giving you control, audit trails, and regulatory compliance. For regulated industries, the choice isn't optional.

Read more